By Xu Jianwei (Zhuying)

With mobile development reaching saturation, the IT industry is looking ahead to the Internet of Everything era. In Internet of Things (IoT) scenarios, many different terminals are deployed in different locations to collect data. Suppose there are 100,000 IoT devices in an area and each sends one data point every five seconds: that is about 630.7 billion data points per year. The data arrives in time order, the format produced by each device is consistent, and there is no need to delete or modify it. Time series databases emerged to meet these needs.
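The 630.7 billion figure follows directly from the device count and reporting interval. A minimal sketch of the arithmetic, assuming a 365-day year (the function name is illustrative):

```go
package main

import "fmt"

// pointsPerYear returns how many samples a fleet produces in a 365-day year
// when every device reports once per interval.
func pointsPerYear(devices, intervalSeconds int64) int64 {
	const secondsPerYear = 365 * 24 * 60 * 60 // 31,536,000
	return devices * (secondsPerYear / intervalSeconds)
}

func main() {
	// 100,000 devices, one data point every 5 seconds.
	fmt.Println(pointsPerYear(100_000, 5)) // 630720000000, about 630.7 billion
}
```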

A time series database aims for fast writes, high compression, and fast retrieval, on the assumption that data is never inserted out of order or updated and that its structure is stable. The labels (tags) of time series data are indexed to improve query performance, so that values matching all specified tags can be found quickly. If a label (tag) has too many distinct values (the high cardinality problem), the index itself becomes a source of trouble. This article discusses some feasible solutions for when InfluxDB runs into high cardinality in the data it is asked to write.

Time series databases mainly store metric data. Each piece of data is called a sample, and a sample consists of three parts: the time series it belongs to (a metric name plus a set of labels), a timestamp, and a value.

```
<-------------- time-series --------> <-timestamp -----> <-value->
node_cpu{cpu="cpu0",mode="idle"}      @1627339366586     70
node_cpu{cpu="cpu0",mode="sys"}       @1627339366586     5
node_cpu{cpu="cpu0",mode="user"}      @1627339366586     25
```

Ordinarily, the label sets in a time series are limited and enumerable. For example, the only values of mode in the example above are idle, sys, and user.

Suggestions on labels in the official Prometheus documentation:

CAUTION: Please remember every unique combination of key-value label pairs represents a new time series, which can dramatically increase the amount of data stored. Do not use labels to store dimensions with high cardinality (many different label values), such as user IDs, email addresses, or other unbounded sets of values.

Time series databases are likewise designed on the assumption that timeline (series) cardinality is low. However, with the widespread use of metrics, timeline expansion cannot be avoided in many scenarios.

In cloud-native scenarios, for example, some tags carry pod or container IDs, and others carry user IDs or even URLs. When such tags are combined, the number of timelines expands significantly.
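The effect of combining tags is multiplicative: the upper bound on the number of series for one metric is the product of the number of distinct values of each label. A small sketch, with made-up label counts chosen only for illustration:

```go
package main

import "fmt"

// maxSeries returns the upper bound on the number of time series for one
// metric: the product of the number of distinct values of each label.
func maxSeries(labelCardinality map[string]uint64) uint64 {
	total := uint64(1)
	for _, n := range labelCardinality {
		total *= n
	}
	return total
}

func main() {
	// Bounded labels: 64 CPUs and 3 modes.
	bounded := map[string]uint64{"cpu": 64, "mode": 3}
	fmt.Println(maxSeries(bounded)) // 192

	// Hypothetical: the same metric with a pod ID label of 10,000 values.
	withPodID := map[string]uint64{"cpu": 64, "mode": 3, "pod_id": 10_000}
	fmt.Println(maxSeries(withPodID)) // 1920000
}
```

A single unbounded label is enough to turn a few hundred series into millions.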

This contradiction is inevitable. How do we solve it? Should the writer reduce the number of time series it emits? Should the time series database change its design to fit this scenario? There is no perfect solution yet.

A realistic goal is that the time series database does not become unavailable after the timeline expands and that its performance does not drop exponentially. In other words, performance is excellent while the timeline stays small and still good, or at least acceptable, after it expands.

How can the performance of the time series database be good when the timeline is expanded? Next, let's discuss this issue through the InfluxDB source code.

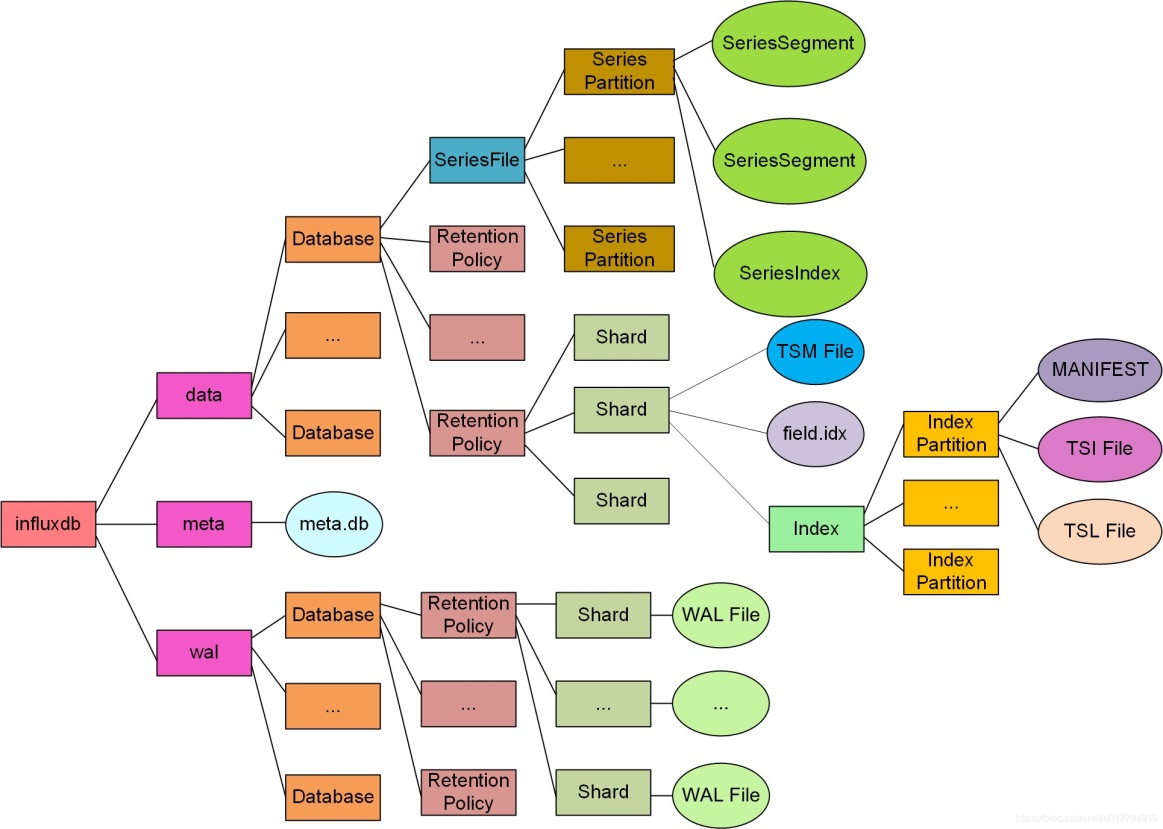

The main processing logic of InfluxDB's TSM structure is similar to that of an LSM tree. When data is reported, it is added to the cache and to the log file (WAL). It is later compacted (data files are merged and indexes are rebuilt) to speed up retrieval or improve the compression ratio.

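Indexing involves three aspects: the TSI (Time Series Index), an inverted index from measurements and tags to series; the index inside each TSM file, which locates the stored values of a series; and the Series Segment Index, the forward index between series keys and series IDs.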

Please refer to the official article for the specific index implementation of InfluxDB.

When the timeline expands, the retrieval performance of TSI and TSM does not degrade much. The problem occurs mainly in the Series Segment Index.

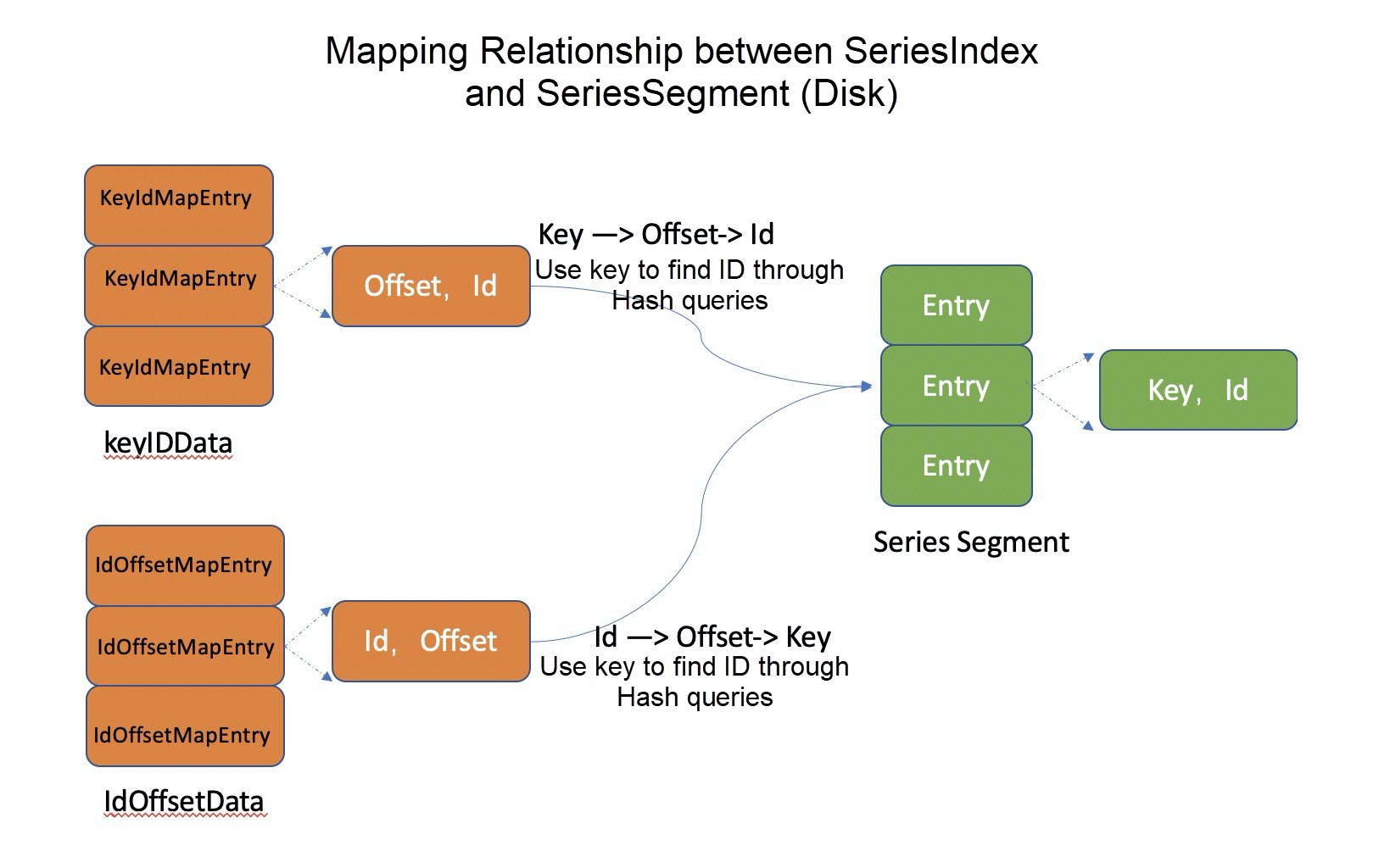

In this section, we will discuss the forward index of timeline files in InfluxDB (time-series key -> id, id -> time-series key):

The specific code (InfluxDB 2.0.7) is listed below:

tsdb/series_partition.go:30

```go
// SeriesPartition represents a subset of series file data.
type SeriesPartition struct {
	...
	segments []*SeriesSegment
	index    *SeriesIndex
	seq      uint64 // series id sequence
	...
}
```

tsdb/series_index.go:36

```go
// SeriesIndex represents an index of key-to-id & id-to-offset mappings.
type SeriesIndex struct {
	path string
	...
	data         []byte // mmap data
	keyIDData    []byte // key/id mmap data
	idOffsetData []byte // id/offset mmap data

	// In-memory data since rebuild.
	keyIDMap    *rhh.HashMap
	idOffsetMap map[uint64]int64
	tombstones  map[uint64]struct{}
}
```

When a series key is looked up, the in-memory map is searched first and the on-disk map second. The implementation is listed below:

tsdb/series_index.go:185

```go
func (idx *SeriesIndex) FindIDBySeriesKey(segments []*SeriesSegment, key []byte) uint64 {
	// Search the in-memory map first.
	if v := idx.keyIDMap.Get(key); v != nil {
		if id, _ := v.(uint64); id != 0 && !idx.IsDeleted(id) {
			return id
		}
	}
	if len(idx.data) == 0 {
		return 0
	}

	hash := rhh.HashKey(key)
	for d, pos := int64(0), hash&idx.mask; ; d, pos = d+1, (pos+1)&idx.mask {
		// Probe the on-disk (mmap) map: each element holds an offset and an ID.
		elem := idx.keyIDData[(pos * SeriesIndexElemSize):]
		elemOffset := int64(binary.BigEndian.Uint64(elem[:8]))
		if elemOffset == 0 {
			return 0
		}

		// Read the series key stored at that offset and compare it.
		elemKey := ReadSeriesKeyFromSegments(segments, elemOffset+SeriesEntryHeaderSize)
		elemHash := rhh.HashKey(elemKey)
		if d > rhh.Dist(elemHash, pos, idx.capacity) {
			return 0
		} else if elemHash == hash && bytes.Equal(elemKey, key) {
			id := binary.BigEndian.Uint64(elem[8:])
			if idx.IsDeleted(id) {
				return 0
			}
			return id
		}
	}
}
```

Some background on how the in-memory HashMap becomes an on-disk HashMap: a hash map is ultimately backed by an array. InfluxDB maps the index file into memory with mmap (see keyIDData in SeriesIndex) and then addresses array slots by hash. It uses Robin Hood hashing, which probes adjacent slots and therefore respects memory locality (the lookup logic is shown above). Porting the Robin Hood hash table to an on-disk hash table by hand clearly took the developers considerable thought.
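To make the idea concrete, here is a minimal sketch of a Robin Hood hash table laid out in a single byte slice, the same shape an mmap-backed file would have. It is not InfluxDB's implementation: the slot layout (8-byte key plus 8-byte value), the hash function, and all names are assumptions chosen for illustration, and key 0 is reserved to mark an empty slot.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

const elemSize = 16 // assumed slot layout: 8-byte key + 8-byte value

// flatMap is a fixed-capacity Robin Hood hash table stored in one byte slice.
// Key 0 marks an empty slot. The caller must leave at least one slot free.
type flatMap struct {
	data     []byte
	capacity uint64 // must be a power of two
	mask     uint64
}

func newFlatMap(capacity uint64) *flatMap {
	return &flatMap{data: make([]byte, capacity*elemSize), capacity: capacity, mask: capacity - 1}
}

// hash is a simple 64-bit mixer, used here only for illustration.
func hash(k uint64) uint64 {
	k ^= k >> 33
	k *= 0xff51afd7ed558ccd
	k ^= k >> 33
	return k
}

// dist is how far pos lies from the slot that hash h originally pointed at.
func (m *flatMap) dist(h, pos uint64) uint64 {
	return (pos + m.capacity - (h & m.mask)) & m.mask
}

func (m *flatMap) slot(pos uint64) []byte { return m.data[pos*elemSize : (pos+1)*elemSize] }

// put inserts or updates key -> val.
func (m *flatMap) put(key, val uint64) {
	pos := hash(key) & m.mask
	for d := uint64(0); ; d, pos = d+1, (pos+1)&m.mask {
		s := m.slot(pos)
		k := binary.BigEndian.Uint64(s[:8])
		if k == 0 || k == key {
			binary.BigEndian.PutUint64(s[:8], key)
			binary.BigEndian.PutUint64(s[8:], val)
			return
		}
		// Robin Hood rule: if the resident sits closer to its home slot than
		// we are to ours, take its slot and continue inserting the resident.
		if rd := m.dist(hash(k), pos); rd < d {
			v := binary.BigEndian.Uint64(s[8:])
			binary.BigEndian.PutUint64(s[:8], key)
			binary.BigEndian.PutUint64(s[8:], val)
			key, val, d = k, v, rd
		}
	}
}

// get probes linearly and stops early once the resident's distance is
// smaller than ours, because the key cannot be stored any further along.
func (m *flatMap) get(key uint64) (uint64, bool) {
	pos := hash(key) & m.mask
	for d := uint64(0); d < m.capacity; d, pos = d+1, (pos+1)&m.mask {
		s := m.slot(pos)
		k := binary.BigEndian.Uint64(s[:8])
		if k == 0 {
			return 0, false
		}
		if k == key {
			return binary.BigEndian.Uint64(s[8:]), true
		}
		if d > m.dist(hash(k), pos) {
			return 0, false
		}
	}
	return 0, false
}

func main() {
	m := newFlatMap(8)
	for k := uint64(1); k <= 6; k++ {
		m.put(k, k*10)
	}
	fmt.Println(m.get(4))
	fmt.Println(m.get(99))
}
```

Because the whole table is a flat array of fixed-size slots, it can be written to a file as is and read back through mmap without any decoding step.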

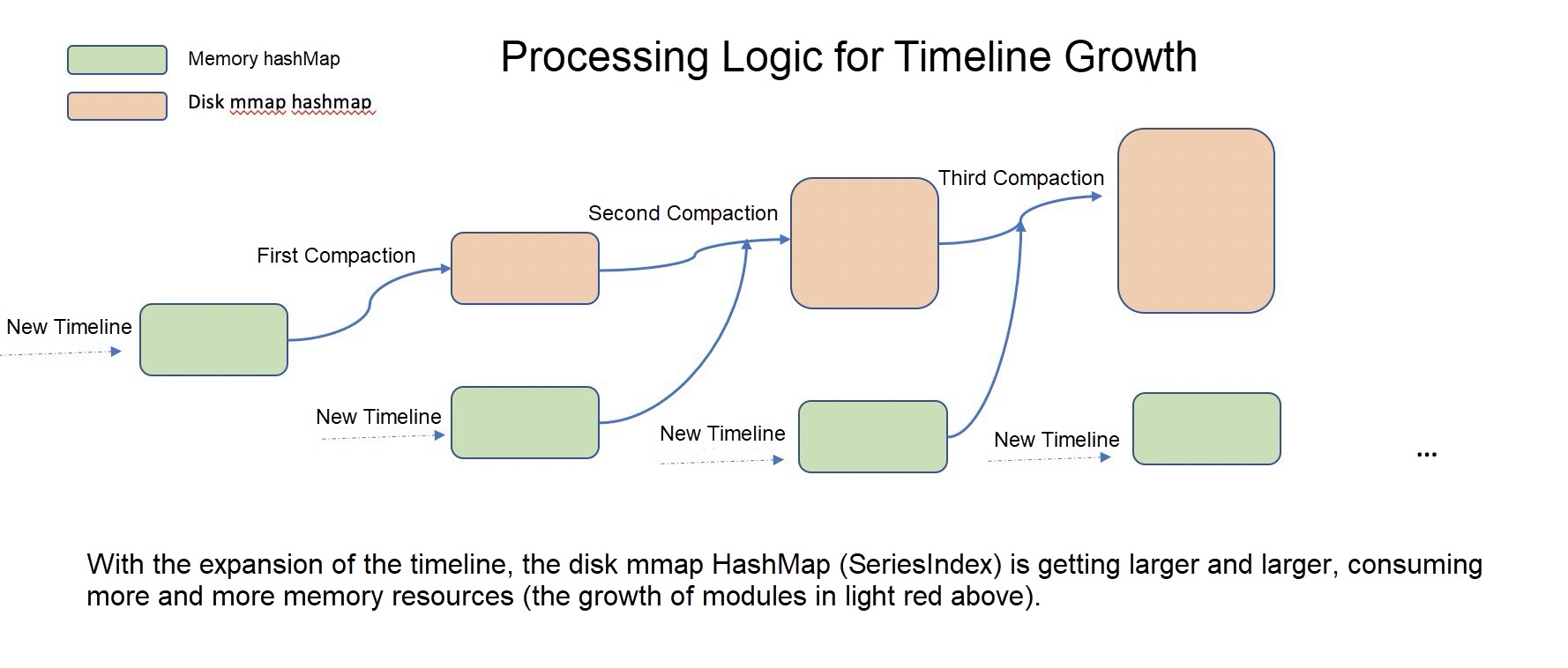

How are the memory map and the disk map generated? Why do we need two maps?

InfluxDB first puts newly added series keys into the in-memory HashMap. When the in-memory HashMap exceeds a threshold, it is merged with the on-disk HashMap (by traversing all SeriesSegments and filtering out deleted series keys) to generate a new on-disk HashMap. This process is called compaction. After compaction completes, the in-memory HashMap is cleared and goes on to store new series keys.
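Stripped of InfluxDB specifics, the flow just described can be modeled roughly as follows. This is a simplified sketch, not the real code: an ordinary Go map stands in for the on-disk map, compaction runs synchronously, and all type and method names are hypothetical.

```go
package main

import "fmt"

// twoTierIndex keeps recent keys in a mutable in-memory map and older keys in
// a snapshot that stands in for the mmap-backed on-disk map.
type twoTierIndex struct {
	mem         map[string]uint64
	disk        map[string]uint64 // replaced wholesale by compact, never mutated
	tombstones  map[uint64]struct{}
	threshold   int
	compactions int
}

func newTwoTierIndex(threshold int) *twoTierIndex {
	return &twoTierIndex{
		mem:        map[string]uint64{},
		disk:       map[string]uint64{},
		tombstones: map[uint64]struct{}{},
		threshold:  threshold,
	}
}

// insert adds a new series key and compacts once the threshold is reached.
func (x *twoTierIndex) insert(key string, id uint64) {
	x.mem[key] = id
	if len(x.mem) >= x.threshold {
		x.compact()
	}
}

// remove only records a tombstone; the entry is dropped at the next compaction.
func (x *twoTierIndex) remove(id uint64) { x.tombstones[id] = struct{}{} }

// compact builds a brand-new snapshot from both tiers, skipping deleted IDs,
// and then clears the in-memory tier.
func (x *twoTierIndex) compact() {
	next := make(map[string]uint64, len(x.disk)+len(x.mem))
	for _, tier := range []map[string]uint64{x.disk, x.mem} {
		for key, id := range tier {
			if _, dead := x.tombstones[id]; !dead {
				next[key] = id
			}
		}
	}
	x.disk = next
	x.mem = map[string]uint64{}
	x.compactions++
}

// find checks the in-memory tier first, then the snapshot. 0 means not found.
func (x *twoTierIndex) find(key string) uint64 {
	for _, tier := range []map[string]uint64{x.mem, x.disk} {
		if id, ok := tier[key]; ok {
			if _, dead := x.tombstones[id]; dead {
				return 0
			}
			return id
		}
	}
	return 0
}

func main() {
	idx := newTwoTierIndex(2)
	idx.insert("cpu,host=a", 1)
	idx.insert("cpu,host=b", 2) // reaches the threshold and triggers compaction
	idx.insert("cpu,host=c", 3) // stays in memory
	fmt.Println(idx.find("cpu,host=a"), idx.find("cpu,host=c"), idx.compactions)
}
```

Note that every compaction in this sketch copies all live keys, old and new, which is also the cost pattern of a full index rebuild. Unlike the sketch, InfluxDB runs compaction in a background goroutine.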

tsdb/series_partition.go:200

```go
// Check if we've crossed the compaction threshold.
if p.compactionsEnabled() && !p.compacting &&
	p.CompactThreshold != 0 && p.index.InMemCount() >= uint64(p.CompactThreshold) &&
	p.compactionLimiter.TryTake() {
	p.compacting = true
	log, logEnd := logger.NewOperation(context.TODO(), p.Logger, "Series partition compaction", "series_partition_compaction", zap.String("path", p.path))

	p.wg.Add(1)
	go func() {
		defer p.wg.Done()
		defer p.compactionLimiter.Release()

		compactor := NewSeriesPartitionCompactor()
		compactor.cancel = p.closing
		if err := compactor.Compact(p); err != nil {
			log.Error("series partition compaction failed", zap.Error(err))
		}

		logEnd()

		// Clear compaction flag.
		p.mu.Lock()
		p.compacting = false
		p.mu.Unlock()
	}()
}
```

tsdb/series_partition.go:569

```go
func (c *SeriesPartitionCompactor) compactIndexTo(index *SeriesIndex, seriesN uint64, segments []*SeriesSegment, path string) error {
	hdr := NewSeriesIndexHeader()
	hdr.Count = seriesN
	hdr.Capacity = pow2((int64(hdr.Count) * 100) / SeriesIndexLoadFactor)

	// Allocate space for maps.
	keyIDMap := make([]byte, (hdr.Capacity * SeriesIndexElemSize))
	idOffsetMap := make([]byte, (hdr.Capacity * SeriesIndexElemSize))

	// Reindex all partitions.
	var entryN int
	for _, segment := range segments {
		errDone := errors.New("done")

		if err := segment.ForEachEntry(func(flag uint8, id uint64, offset int64, key []byte) error {
			...
			// Save max series identifier processed.
			hdr.MaxSeriesID, hdr.MaxOffset = id, offset

			// Ignore entry if tombstoned.
			if index.IsDeleted(id) {
				return nil
			}

			// Insert into maps.
			c.insertIDOffsetMap(idOffsetMap, hdr.Capacity, id, offset)
			return c.insertKeyIDMap(keyIDMap, hdr.Capacity, segments, key, offset, id)
		}); err == errDone {
			break
		} else if err != nil {
			return err
		}
	}
	...
}
```

This design has two drawbacks:

Judging from the code above, the first is memory: each compaction allocates a brand-new key/ID map and ID/offset map in memory, both sized for every series key in the partition, so memory use climbs with cardinality. The second is I/O: each compaction walks every entry of every SeriesSegment, including tombstoned entries, which the code shown skips rather than removes, so the cost of a compaction keeps growing with the number of series keys ever written.

The first option works within the existing design. InfluxDB's forward index is maintained at the database level, so there are two ways to reduce memory use during compaction: increase the number of partitions, or spread the measurements across several databases. The drawback is that neither is easy to apply to an InfluxDB instance that already holds data.
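A rough estimate shows why more partitions help. The sketch below mirrors the sizing logic in compactIndexTo, which allocates two maps of hdr.Capacity * SeriesIndexElemSize bytes each. The constant values (16 bytes per element, load factor of 90) and the round-up behavior of pow2 are assumptions about the InfluxDB source rather than something stated in this article, and the series count is hypothetical.

```go
package main

import "fmt"

const (
	elemSize   = 16 // assumed value of SeriesIndexElemSize, in bytes
	loadFactor = 90 // assumed value of SeriesIndexLoadFactor, in percent
)

// pow2 returns the smallest power of two (at least 2) that is >= v.
func pow2(v int64) int64 {
	p := int64(2)
	for p < v {
		p *= 2
	}
	return p
}

// compactionBytes estimates the bytes allocated for keyIDMap plus idOffsetMap
// when one partition holding seriesN series keys is compacted.
func compactionBytes(seriesN int64) int64 {
	capacity := pow2(seriesN * 100 / loadFactor)
	return 2 * capacity * elemSize
}

func main() {
	const totalSeries = 100_000_000 // hypothetical series count in one database
	for _, partitions := range []int64{1, 8, 64} {
		perPartition := compactionBytes(totalSeries / partitions)
		fmt.Printf("%2d partition(s): %4d MiB per compaction\n", partitions, perPartition>>20)
	}
}
```

Under these assumptions, the buffers for a single compaction shrink from 4096 MiB with one partition to 512 MiB with eight and 64 MiB with sixty-four. The total across all partitions stays the same, so the gain depends on how many compactions run at once.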

The second option is to change the index structure. A hash index gives O(1) lookups, which is very efficient, but it scales poorly as data grows. As a compromise, once a partition exceeds a certain threshold, its hash index can be converted to a B+ tree index. A B+ tree degrades only mildly as data grows, which suits high cardinality better, and it no longer requires global compaction.

The third option is to move the forward index from the database level down to the shard level. Each shard in InfluxDB covers one time interval, and the number of series keys within a single interval is comparatively small. For example, a database may retain 180 days of series keys, while a shard typically spans only one day or one hour, so the two differ enormously in how many series keys they hold. A shard-level forward index is also friendlier to deletion: when a shard expires and is deleted, a diff compares its series keys with those of the other shards, and the keys that appear in no other shard are deleted.
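The diff on shard expiry amounts to a set difference. A minimal sketch, assuming each shard can enumerate its series keys as a set (the keySet type, the function name, and the sample keys are hypothetical):

```go
package main

import (
	"fmt"
	"sort"
)

// keySet is the set of series keys held by one shard.
type keySet map[string]struct{}

// orphanedKeys returns, in sorted order, the series keys of the expiring
// shard that appear in none of the remaining shards and can be deleted.
func orphanedKeys(expiring keySet, remaining ...keySet) []string {
	var orphans []string
	for key := range expiring {
		found := false
		for _, shard := range remaining {
			if _, ok := shard[key]; ok {
				found = true
				break
			}
		}
		if !found {
			orphans = append(orphans, key)
		}
	}
	sort.Strings(orphans)
	return orphans
}

func main() {
	day1 := keySet{"cpu,host=a": {}, "cpu,host=b": {}, "req,pod=p-17": {}}
	day2 := keySet{"cpu,host=a": {}, "cpu,host=b": {}, "req,pod=p-42": {}}

	// day1 expires: only the key of the short-lived pod is unique to it.
	fmt.Println(orphanedKeys(day1, day2)) // [req,pod=p-17]
}
```

Long-lived series survive because later shards still reference them, while short-lived ones, such as those keyed by pod ID, disappear together with their shard.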

The fourth option is a hybrid that chooses the index per measurement. In production, timeline expansion is closely tied to individual measurements: usually only a few measurements expand, and most do not.

Per-measurement timeline statistics can be collected while the forward index of series keys is compacted. If a measurement's timeline has expanded, all of its series keys switch to a B+ tree index, while measurements that have not expanded keep the hash index. This performs better than the second option, but the development cost is higher.
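One way to picture per-measurement index selection is a router that keeps one index per measurement and migrates a measurement once its series count passes a threshold. The sketch below is illustrative only: a sorted slice with binary search stands in for the B+ tree, the switch happens on insert rather than during compaction, and the series key format (measurement name before the first comma) is an assumption.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// seriesIndex is the interface shared by both index types.
type seriesIndex interface {
	put(key string, id uint64)
	get(key string) (uint64, bool)
}

// hashIndex keeps O(1) lookups for measurements with few series keys.
type hashIndex map[string]uint64

func (h hashIndex) put(key string, id uint64) { h[key] = id }
func (h hashIndex) get(key string) (uint64, bool) {
	id, ok := h[key]
	return id, ok
}

type entry struct {
	key string
	id  uint64
}

// orderedIndex is a sorted slice searched by binary search. It only stands in
// for a B+ tree, which would also keep inserts logarithmic.
type orderedIndex struct{ entries []entry }

func (o *orderedIndex) search(key string) int {
	return sort.Search(len(o.entries), func(i int) bool { return o.entries[i].key >= key })
}

func (o *orderedIndex) put(key string, id uint64) {
	i := o.search(key)
	if i < len(o.entries) && o.entries[i].key == key {
		o.entries[i].id = id
		return
	}
	o.entries = append(o.entries, entry{})
	copy(o.entries[i+1:], o.entries[i:])
	o.entries[i] = entry{key, id}
}

func (o *orderedIndex) get(key string) (uint64, bool) {
	if i := o.search(key); i < len(o.entries) && o.entries[i].key == key {
		return o.entries[i].id, true
	}
	return 0, false
}

// router holds one index per measurement and switches a measurement to the
// ordered index once it holds more than threshold series keys.
type router struct {
	threshold int
	indexes   map[string]seriesIndex
}

// measurementOf assumes keys look like "measurement,tag=value,...".
func measurementOf(seriesKey string) string {
	if i := strings.IndexByte(seriesKey, ','); i >= 0 {
		return seriesKey[:i]
	}
	return seriesKey
}

func (r *router) put(key string, id uint64) {
	m := measurementOf(key)
	idx, ok := r.indexes[m]
	if !ok {
		idx = hashIndex{}
		r.indexes[m] = idx
	}
	idx.put(key, id)
	if h, isHash := idx.(hashIndex); isHash && len(h) > r.threshold {
		o := &orderedIndex{}
		for k, v := range h {
			o.put(k, v)
		}
		r.indexes[m] = o // only this measurement pays the migration cost
	}
}

func (r *router) get(key string) (uint64, bool) {
	idx, ok := r.indexes[measurementOf(key)]
	if !ok {
		return 0, false
	}
	return idx.get(key)
}

func main() {
	r := &router{threshold: 2, indexes: map[string]seriesIndex{}}
	r.put("cpu,host=a", 1)
	for i := uint64(0); i < 5; i++ {
		r.put(fmt.Sprintf("req,user=%d", i), 100+i)
	}
	_, cpuIsHash := r.indexes["cpu"].(hashIndex)
	_, reqIsOrdered := r.indexes["req"].(*orderedIndex)
	fmt.Println(cpuIsHash, reqIsOrdered)
}
```

Measurements that stay small never leave the hash index, so their lookup cost is unaffected by the measurements that expand.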

Currently, the high cardinality problem shows up mainly in the forward index of series keys. In my view, the second option can be adopted in the short term and then gradually evolved into the fourth, which handles timeline growth with little performance loss and at low cost. The third option involves relatively large changes, but its design is more sound, so it can serve as the long-term fix.

This article uses InfluxDB to explain the high cardinality problem of time series databases and some feasible solutions. Dimension explosion in metrics causes timeline expansion, and many regard this as misuse or abuse of the time series database. However, given today's explosion of information and data, the cost of keeping data dimensions from diverging is very high, much higher than the cost of storing the data.

Solving this problem calls for a divide-and-conquer strategy that improves the tolerance of time series databases to dimension explosion. In other words, when timeline expansion occurs, the time series database does not crash, the metrics without timeline expansion continue to run efficiently, and the metrics with timeline expansion see only slight performance degradation. Tolerating timeline expansion and limiting its blast radius will be core capabilities of time series databases.

The sample code in this article was written in Golang and based on the InfluxDB source code. Special thanks to Boshu, Lizi, and Renjie for helping to explain InfluxDB and discussing the timeline expansion problem.
