By Yang Che

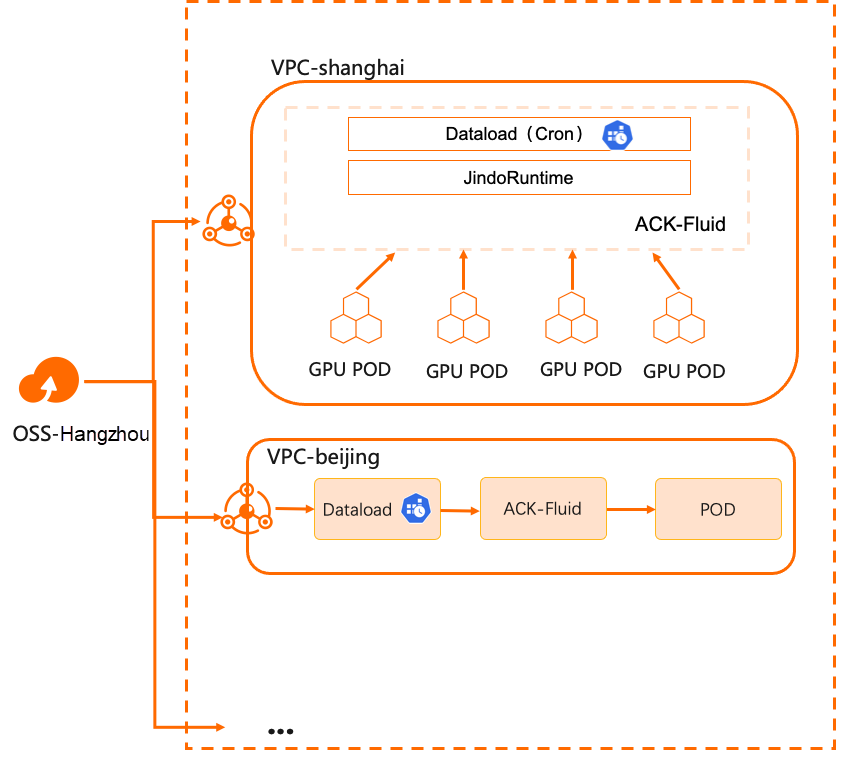

In the previous articles, we discussed Day 1 of combining Kubernetes and data in hybrid cloud scenarios, focusing on solving data access problems and connecting cloud computing with offline storage. Building upon this, ACK Fluid further addresses the cost and performance issues related to data access. Moving on to Day 2, when users actually implement this solution in a production environment, the main challenge lies in how the operations and maintenance team handles data synchronization for multi-region clusters.

Many enterprises establish multiple computing clusters in different regions for various reasons such as performance, security, stability, and resource isolation. These clusters require remote access to a centralized data storage center. For example, with the growing popularity of large language models, supporting multi-region inference services based on these models has become a necessity for many businesses. However, this specific scenario presents several challenges:

• Manual synchronization of data across data centers on multiple computing clusters, which is time-consuming.

• Complexity in managing large language models due to the large number of parameters and files. Different businesses choose different basic models and business data, resulting in different final models.

• Continuous and frequent updates of model data based on business inputs.

• Slow startup and file retrieval time for model inference services. Large language models have extensive parameter sizes, often in the hundreds of GBs, which leads to significant time consumption for pulling parameters into GPU memory and extremely long startup times.

• Synchronous updating of models across all regions, which negatively impacts the performance of an overloaded storage cluster.

In addition to the acceleration capabilities of common storage clients, ACK Fluid provides scheduled and triggered data migration and preheating capabilities to simplify data distribution.

• Reduce network and computing costs: Cross-region traffic costs are significantly reduced and computing time is shortened, at the price of a slight increase in the cost of computing clusters. Elasticity can reduce costs further.

• Accelerate application data updates: By performing data access within the same data center or zone, latency is reduced, and cache throughput concurrency can be linearly scaled.

• Streamline complex data synchronization operations: Customizable policies can control data synchronization operations, reducing contention for data access and automating O&M complexity.
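To make the traffic saving in the first point concrete, here is a back-of-the-envelope sketch in Python. Every number in it (model size, pod count, number of regions) is hypothetical and chosen only for illustration, and the function names are not part of ACK Fluid. It assumes that without a cache every inference pod pulls the full model from the central storage, and that with a cache each region pulls the model once per update.

```python
def direct_pull_gb(model_gb: float, pods_per_region: int, regions: int) -> float:
    """Cross-region traffic when every pod reads the model from central storage."""
    return model_gb * pods_per_region * regions


def cached_pull_gb(model_gb: float, regions: int) -> float:
    """Cross-region traffic when each region's cache is preheated once per update."""
    return model_gb * regions


# Hypothetical workload: a 200 GB model, 3 regions, 10 inference pods per region.
direct = direct_pull_gb(200, pods_per_region=10, regions=3)
cached = cached_pull_gb(200, regions=3)
print(f"direct: {direct:.0f} GB, cached: {cached:.0f} GB, saved: {1 - cached / direct:.0%}")
```

Under these assumptions the saving grows with the number of pods per region, because the cross-region pull happens once per region instead of once per pod.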

This article describes how to use the scheduled warm-up mechanism of ACK Fluid to update the data accessible to compute clusters in different regions.

• Create an ACK Pro cluster with a version of 1.18 or later. For more information, see Create an ACK Pro cluster [1].

• Cloud-native AI suite is installed, and ack-fluid components are deployed. Important: If you have open source Fluid installed, uninstall it and then deploy ack-fluid components.

• If the cloud-native AI suite is not installed, enable Fluid Data Acceleration during installation. For more information, see Deploy the cloud-native AI suite [2].

• If the cloud-native AI suite is already installed, deploy ack-fluid on the Cloud-native AI Suite page of the Container Service console [3].

• A Kubernetes cluster is connected by using kubectl. For more information, see Use kubectl to connect to a cluster [4].

Prepare the Kubernetes and OSS environments. It only takes about 10 minutes to deploy the JindoRuntime environment.

1. Run the following command to download a copy of test data:

    $ wget https://archive.apache.org/dist/hbase/2.5.2/RELEASENOTES.md

2. Upload the downloaded test data to the corresponding bucket of Alibaba Cloud OSS. You can upload it with ossutil, the client tool provided by OSS. For more information, see Install ossutil [5].

    $ ossutil cp RELEASENOTES.md oss://<bucket>/<path>/RELEASENOTES.md

1. Before creating a Dataset, you can create a mySecret.yaml file to store the accessKeyId and accessKeySecret of OSS.

Create the mySecret.yaml file by using the following YAML template:

    apiVersion: v1
    kind: Secret
    metadata:
      name: mysecret
    stringData:
      fs.oss.accessKeyId: xxx
      fs.oss.accessKeySecret: xxx

2. Run the following command to generate a Secret:

    $ kubectl create -f mySecret.yaml

3. Use the following sample YAML file to create a dataset.yaml file that contains two parts:

• Create a Dataset that describes information about the remote storage dataset and UFS.

• Create a JindoRuntime to enable JindoFS for data caching in the cluster.

    apiVersion: data.fluid.io/v1alpha1
    kind: Dataset
    metadata:
      name: demo
    spec:
      mounts:
        - mountPoint: oss://<bucket-name>/<path>
          options:
            fs.oss.endpoint: <oss-endpoint>
          name: hbase
          path: "/"
          encryptOptions:
            - name: fs.oss.accessKeyId
              valueFrom:
                secretKeyRef:
                  name: mysecret
                  key: fs.oss.accessKeyId
            - name: fs.oss.accessKeySecret
              valueFrom:
                secretKeyRef:
                  name: mysecret
                  key: fs.oss.accessKeySecret
      accessModes:
        - ReadOnlyMany
    ---
    apiVersion: data.fluid.io/v1alpha1
    kind: JindoRuntime
    metadata:
      name: demo
    spec:
      replicas: 1
      tieredstore:
        levels:
          - mediumtype: MEM
            path: /dev/shm
            quota: 2Gi
            high: "0.99"
            low: "0.8"
      fuse:
        args:
          - -okernel_cache
          - -oro
          - -oattr_timeout=60
          - -oentry_timeout=60
          - -onegative_timeout=60

The following table describes the parameters in the YAML template.

| Parameter | Description |
| --- | --- |
| mountPoint | oss://<oss_bucket>/<path> indicates the path where the UFS is mounted. The path does not need to contain endpoint information. |
| fs.oss.endpoint | The public or internal endpoint of the OSS bucket. |
| accessModes | The access mode of the Dataset. |
| replicas | The number of workers in the JindoFS cluster. |
| mediumtype | The cache type. When you create a JindoRuntime template, JindoFS currently supports only one of the following cache types: HDD, SSD, or MEM. |
| path | The storage path. You can specify only one path. If you set mediumtype to MEM, you must specify a local path to store data, such as logs. |
| quota | The maximum size of cached data. Unit: GB. The cache capacity can be configured based on the UFS data size. |
| high | The upper limit of the storage capacity. |
| low | The lower limit of the storage capacity. |
| fuse.args | Optional mount parameters for the FUSE client, usually chosen to match the Dataset access mode. When the access mode is ReadOnlyMany, kernel_cache is enabled so that the kernel cache optimizes read performance. In this case, you can set attr_timeout (the cache retention period of file attributes), entry_timeout (the cache retention period of file name lookups), and negative_timeout (the cache retention period of failed file name lookups). The default value is 7200s. When the access mode is ReadWriteMany, we recommend the default configuration: -oauto_cache, -oattr_timeout=0, -oentry_timeout=0, -onegative_timeout=0. auto_cache ensures that the cache is invalidated if the file size or modification time changes, and the timeouts are set to 0. |
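The fuse.args rule in the last row can be written down as a small lookup. The following Python sketch is only an illustration of that rule: the function name fuse_args and its timeout_s parameter are hypothetical, and the 60-second value is simply the one used in the sample dataset.yaml above.

```python
def fuse_args(access_mode: str, timeout_s: int = 60) -> list[str]:
    """Return FUSE mount arguments for a Dataset access mode, per the table above."""
    if access_mode == "ReadOnlyMany":
        # Read-only data: enable the kernel cache and keep metadata for timeout_s seconds.
        return [
            "-okernel_cache",
            "-oro",
            f"-oattr_timeout={timeout_s}",
            f"-oentry_timeout={timeout_s}",
            f"-onegative_timeout={timeout_s}",
        ]
    if access_mode == "ReadWriteMany":
        # Writable data: auto_cache invalidates on size or mtime change; no metadata caching.
        return [
            "-oauto_cache",
            "-oattr_timeout=0",
            "-oentry_timeout=0",
            "-onegative_timeout=0",
        ]
    raise ValueError(f"unhandled access mode: {access_mode!r}")


# Print the arguments in the list form used under spec.fuse.args.
for arg in fuse_args("ReadOnlyMany"):
    print(f"- {arg}")
```

Other access modes are deliberately rejected here because the table describes only these two.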

4. Run the following command to create a JindoRuntime and a Dataset:

    $ kubectl create -f dataset.yaml

5. Run the following command to check the deployment of the Dataset:

    $ kubectl get dataset

Expected output:

    NAME   UFS TOTAL SIZE   CACHED   CACHE CAPACITY   CACHED PERCENTAGE   PHASE   AGE
    demo   588.90KiB        0.00B    10.00GiB         0.0%                Bound   2m7s

1. Use the following sample YAML file to create a file named dataload.yaml.

    apiVersion: data.fluid.io/v1alpha1
    kind: DataLoad
    metadata:
      name: cron-dataload
    spec:
      dataset:
        name: demo
        namespace: default
      policy: Cron
      schedule: "*/2 * * * *" # Run every 2 minutes

The following table describes the parameters in the YAML template.

| Parameter | Description |
| --- | --- |
| dataset | The name and namespace of the dataset where the dataload is executed. |
| policy | The execution policy. Currently, Once and Cron are supported. This example creates a scheduled dataload task. |
| schedule | The policy that triggers the dataload. |

schedule uses the following cron format:

    # ┌───────────── Minute (0 to 59)
    # │ ┌───────────── Hour (0 to 23)
    # │ │ ┌───────────── Day of the month (1 to 31)
    # │ │ │ ┌───────────── Month (1 to 12)
    # │ │ │ │ ┌───────────── Day of the week (0 to 6) (Sunday to Saturday; in some systems, 7 also represents Sunday)
    # │ │ │ │ │               or sun, mon, tue, wed, thu, fri, sat
    # │ │ │ │ │
    # │ │ │ │ │
    # * * * * *

Cron also supports the following operators:

• A comma (,) indicates an enumeration. For example, 1,3,4,7 * * * * indicates that the Dataload is executed at the 1st, 3rd, 4th, and 7th minute of every hour.

• A hyphen (-) indicates a range. For example, 1-6 * * * * indicates that the Dataload is executed every minute from the 1st to the 6th minute of every hour.

• The asterisk (*) represents any possible value. For example, an asterisk in the hour field is equivalent to "every hour".

• A percent sign (%) indicates "every". For example, %10 indicates that the Dataload is run every 10 minutes.

• A slash (/) describes the increment of a range. For example, */2 * * * * indicates that the Dataload is run every 2 minutes.

You can also find more information here.
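To see how the comma, hyphen, asterisk, and slash operators above combine, the following Python sketch expands a single cron field into the concrete values it matches. It is an illustrative helper, not the parser that Fluid or Kubernetes uses; the name expand_field is hypothetical, and the percent sign form is intentionally not handled.

```python
def expand_field(field: str, low: int, high: int) -> list[int]:
    """Expand one cron field such as "*/2", "1-6" or "1,3,4,7" into sorted values."""
    values = set()
    for part in field.split(","):
        # An optional "/step" suffix describes the increment within the range.
        rng, _, step_text = part.partition("/")
        step = int(step_text) if step_text else 1
        if step < 1:
            raise ValueError(f"step must be positive: {part!r}")
        if rng == "*":
            start, end = low, high
        elif "-" in rng:
            start_text, end_text = rng.split("-", 1)
            start, end = int(start_text), int(end_text)
        else:
            start = end = int(rng)
        if not (low <= start <= end <= high):
            raise ValueError(f"value out of range {low}-{high}: {part!r}")
        values.update(range(start, end + 1, step))
    return sorted(values)


# Minute field (0 to 59) of the schedule used in dataload.yaml.
print(expand_field("*/2", 0, 59)[:5])   # first five matching minutes
print(expand_field("1,3,4,7", 0, 59))
print(expand_field("1-6", 0, 59))
```

Passing the bounds explicitly lets the same helper serve every field, for example expand_field("*", 0, 23) for the hour field.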

For more information about advanced configurations of Dataload, see the following configuration file:

    apiVersion: data.fluid.io/v1alpha1
    kind: DataLoad
    metadata:
      name: cron-dataload
    spec:
      dataset:
        name: demo
        namespace: default
      policy: Cron # Once or Cron
      schedule: "* * * * *" # only set when policy is Cron; quoted so that YAML reads it as a string
      loadMetadata: true
      target:
        - path: <path1>
          replicas: 1
        - path: <path2>
          replicas: 2

The following table describes the parameters in the YAML template.

| Parameter | Description |
| --- | --- |
| policy | The dataload execution policy, including [Once, Cron]. |
| schedule | The schedule used by Cron. This parameter is valid only when the policy is set to Cron. |
| loadMetadata | Indicates whether to synchronize metadata before dataload. |
| target | The target of the dataload. You can specify multiple targets. |
| path | The path where the dataload is executed. |
| replicas | The number of cached replicas. |
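If you generate DataLoad manifests for several clusters, the fields in the table above can be assembled programmatically. The following Python sketch builds the manifest as a dictionary and prints it as JSON, which kubectl accepts in place of YAML. The helper name build_dataload and the example target path are hypothetical, and the check that Cron always requires a schedule is an assumption of this sketch rather than a documented Fluid rule.

```python
import json


def build_dataload(name, dataset, policy="Once", schedule=None, targets=None,
                   namespace="default", load_metadata=True):
    """Build a DataLoad manifest (as a dict) from the parameters described above."""
    if policy not in ("Once", "Cron"):
        raise ValueError(f"unsupported policy: {policy!r}")
    if policy == "Cron" and schedule is None:
        # Assumption of this sketch: a Cron policy without a schedule is an error.
        raise ValueError("policy Cron requires a schedule")
    if policy != "Cron" and schedule is not None:
        raise ValueError("schedule is valid only when policy is Cron")

    spec = {
        "dataset": {"name": dataset, "namespace": namespace},
        "policy": policy,
        "loadMetadata": load_metadata,
    }
    if schedule is not None:
        spec["schedule"] = schedule
    if targets:
        # Each target is a (path, replicas) pair.
        spec["target"] = [{"path": path, "replicas": replicas} for path, replicas in targets]
    return {
        "apiVersion": "data.fluid.io/v1alpha1",
        "kind": "DataLoad",
        "metadata": {"name": name},
        "spec": spec,
    }


# Example: the scheduled dataload from this article, with one hypothetical target path.
manifest = build_dataload("cron-dataload", "demo", policy="Cron",
                          schedule="*/2 * * * *", targets=[("/", 1)])
print(json.dumps(manifest, indent=2))
```

Because json.dumps always quotes strings, the schedule cannot be misread as YAML syntax when the manifest is generated this way.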

6. Run the following command to create a Dataload:

    $ kubectl apply -f dataload.yaml

7. Run the following command to check the Dataload status:

    $ kubectl get dataload

Expected output:

    NAME            DATASET   PHASE      AGE     DURATION
    cron-dataload   demo      Complete   3m51s   2m12s

8. After the Dataload status is Complete, run the following command to check the current dataset status:

    $ kubectl get dataset

Expected output:

    NAME   UFS TOTAL SIZE   CACHED      CACHE CAPACITY   CACHED PERCENTAGE   PHASE   AGE
    demo   588.90KiB        588.90KiB   10.00GiB         100.0%              Bound   5m50s

It can be seen that all files in OSS have been loaded into the cache.
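When this check is repeated across several clusters, reading the CACHED PERCENTAGE column by eye becomes tedious. The following Python sketch parses one data row of the output shown above and reports whether the dataset is fully cached. The function names are hypothetical, and the sketch assumes that every column in the row is populated, as in the sample outputs in this article.

```python
def parse_dataset_row(row: str) -> dict:
    """Split one data row of the `kubectl get dataset` output into named fields."""
    fields = ("name", "ufs_total_size", "cached", "cache_capacity",
              "cached_percentage", "phase", "age")
    values = row.split()
    if len(values) != len(fields):
        raise ValueError(f"expected {len(fields)} columns, got {len(values)}")
    return dict(zip(fields, values))


def is_fully_cached(row: str) -> bool:
    """Return True when the dataset is Bound and CACHED PERCENTAGE has reached 100%."""
    info = parse_dataset_row(row)
    percentage = float(info["cached_percentage"].rstrip("%"))
    return info["phase"] == "Bound" and percentage >= 100.0


# Rows copied from the expected outputs after and before the dataload.
print(is_fully_cached("demo 588.90KiB 588.90KiB 10.00GiB 100.0% Bound 5m50s"))
print(is_fully_cached("demo 588.90KiB 0.00B 10.00GiB 0.0% Bound 2m7s"))
```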

Next, create an application container that accesses the file above to observe the effect of the scheduled Dataload.

1. Create a file named app.yaml by using the following YAML template:

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
    spec:
      containers:
        - name: nginx
          image: nginx
          volumeMounts:
            - mountPath: /data
              name: demo-vol
      volumes:
        - name: demo-vol
          persistentVolumeClaim:
            claimName: demo

2. Run the following command to create an application container.

    $ kubectl create -f app.yaml

3. Wait for the application container to be ready. Run the following command to view the data in OSS:

    $ kubectl exec -it nginx -- ls -lh /data

Expected output:

    total 589K
    -rwxrwxr-x 1 root root 589K Jul 31 04:20 RELEASENOTES.md

4. To verify the effect of dataload updating the underlying file regularly, we modify the RELEASENOTES.md content and upload it again before the scheduled dataload is triggered.

    $ echo "hello, crondataload." >> RELEASENOTES.md

Upload the file to OSS again.

    $ ossutil cp RELEASENOTES.md oss://<bucket-name>/<path>/RELEASENOTES.md

5. Wait for the dataload task to be triggered. When the Dataload task is complete, run the following command to check the running status of the Dataload job:

    $ kubectl describe dataload cron-dataload

Expected output:

    ...
    Status:
      Conditions:
        Last Probe Time:       2023-07-31T04:30:07Z
        Last Transition Time:  2023-07-31T04:30:07Z
        Status:                True
        Type:                  Complete
      Duration:                5m54s
      Last Schedule Time:      2023-07-31T04:30:00Z
      Last Successful Time:    2023-07-31T04:30:07Z
      Phase:                   Complete
    ...

The Last Schedule Time in Status is the scheduling time of the last dataload job, and the Last Successful Time is the completion time of the last dataload job.

In this case, you can run the following command to check the current Dataset status:

    $ kubectl get dataset

Expected output:

    NAME   UFS TOTAL SIZE   CACHED    CACHE CAPACITY   CACHED PERCENTAGE   PHASE   AGE
    demo   588.90KiB        1.15MiB   10.00GiB         100.0%              Bound   10m

You can see that the updated file has also been loaded into the cache.
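The Last Schedule Time and Last Successful Time values from the describe output give a quick way to measure how long the most recent scheduled run took. The following Python sketch computes that interval from the two timestamps shown above. The function names are hypothetical, and the sketch assumes the timestamps always use the UTC format seen in this output.

```python
from datetime import datetime, timezone

# Timestamp layout used in the describe output, for example 2023-07-31T04:30:07Z.
TIME_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def parse_utc(timestamp: str) -> datetime:
    """Parse a timestamp such as 2023-07-31T04:30:07Z into an aware UTC datetime."""
    return datetime.strptime(timestamp, TIME_FORMAT).replace(tzinfo=timezone.utc)


def run_seconds(last_schedule: str, last_successful: str) -> float:
    """Seconds between the scheduling and the completion of the last dataload job."""
    return (parse_utc(last_successful) - parse_utc(last_schedule)).total_seconds()


# Values copied from the expected output above.
print(run_seconds("2023-07-31T04:30:00Z", "2023-07-31T04:30:07Z"))
```

If this interval approaches the schedule period (2 minutes in this example), a longer period may be a safer choice.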

6. Run the following command to view the updated file in the application container:

    $ kubectl exec -it nginx -- tail /data/RELEASENOTES.md

Expected output:

    <name>hbase.config.read.zookeeper.config</name>
    <value>true</value>
    <description>
    Set to true to allow HBaseConfiguration to read the
    zoo.cfg file for ZooKeeper properties. Switching this to true
    is not recommended, since the functionality of reading ZK
    properties from a zoo.cfg file has been deprecated.
    </description>
    </property>
    hello, crondataload.

As you can see from the last line, the application container can already access the updated file.

If you no longer use the data acceleration function, clear the environment.

Run the following commands to delete the JindoRuntime and application container:

    $ kubectl delete -f app.yaml
    $ kubectl delete -f dataset.yaml

The discussion on optimizing data access in hybrid cloud scenarios using ACK Fluid concludes here. The Alibaba Cloud Container Service team will continue to iterate and optimize it in this scenario for users. As the practice deepens, this series will be continuously updated.

[1] Create an ACK Pro cluster

https://www.alibabacloud.com/help/en/doc-detail/176833.html#task-skz-qwk-qfb

[2] Deploy the cloud-native AI suite

https://www.alibabacloud.com/help/zh/ack/cloud-native-ai-suite/user-guide/deploy-the-cloud-native-ai-suite#task-2038811

[3] Container Service console

https://account.aliyun.com/login/login.htm?oauth_callback=https%3A%2F%2Fcs.console.aliyun.com%2F

[4] Use kubectl to connect to a cluster

https://www.alibabacloud.com/help/zh/ack/ack-managed-and-ack-dedicated/user-guide/obtain-the-kubeconfig-file-of-a-cluster-and-use-kubectl-to-connect-to-the-cluster#task-ubf-lhg-vdb

[5] Install ossutil

https://www.alibabacloud.com/help/zh/oss/developer-reference/install-ossutil#concept-303829
