
X-Engine is a new-generation storage engine developed by the Alibaba Database Department, and it is the basis of the distributed database X-DB. To reach its goals of 10 times the performance of MySQL at 1/10 of the storage cost, X-DB combines software and hardware design to exploit the most advanced techniques in both fields.

FPGA acceleration is our first attempt in the custom computing field. At present, the FPGA-accelerated X-DB has gone through a small-scale grayscale release in production. FPGA will assist X-DB in the 6.18 and Double 11 shopping carnivals this year and help meet the high database performance requirements of Alibaba's business departments.

Alibaba operates the world's largest online transaction website, so its OLTP (online transaction processing) database system must sustain very high throughput. According to our statistics, several billion records are written into our OLTP database system every day. During the 2017 Double 11 (Singles' Day) shopping carnival, the system's peak throughput reached 10 million TPS (transactions per second). Alibaba's business database systems mainly have the following characteristics:

To meet Alibaba's stringent requirements on performance and cost, we have designed a new storage engine called X-Engine. X-Engine uses many cutting-edge database technologies, including highly efficient in-memory index structures, an asynchronous write pipeline, and optimistic concurrency control for in-memory databases.

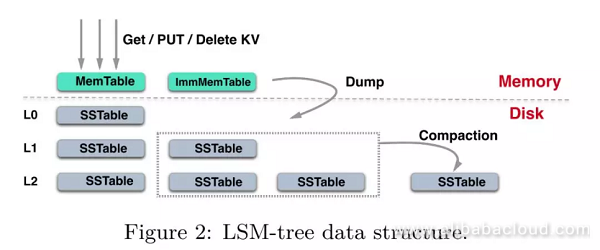

To achieve the best write performance and facilitate the separation of cold and hot data for tiered storage, X-Engine borrows the design of the LSM-tree. X-Engine maintains multiple memtables in memory. It appends all newly written data to these memtables rather than replacing existing records in place. Because the total data volume is large, it is impossible to keep all data in memory.

When data in memory reaches a specified volume, we flush it to the persistent storage to form an SSTable. To reduce latency in read operations, X-Engine regularly schedules compaction tasks to compact SSTables in the persistent storage. X-Engine merges key-value pairs in multiple SSTables by keeping only the latest version of key-value pairs if multiple versions exist (all key-value pair versions currently referenced by transactions will also be kept).
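The merge rule described above can be sketched as a k-way merge over sorted runs. This is a minimal illustration, not X-Engine's code: the `(key, sequence number, value)` entry layout and the `pinned_seqs` set, which stands in for "versions still referenced by transactions", are assumptions made for the example.

```python
import heapq
from typing import AbstractSet, List, Tuple

# Hypothetical entry layout: (key, sequence number, value).
Entry = Tuple[str, int, str]


def compact(sstables: List[List[Entry]],
            pinned_seqs: AbstractSet[int] = frozenset()) -> List[Entry]:
    """Merge sorted SSTables, keeping the newest version of each key.

    Each input must be sorted by key, then by descending sequence number.
    An older version survives only if its sequence number is in
    pinned_seqs (assumed to mean "still referenced by a transaction").
    """
    # Order the merged stream by key, newest version first within a key.
    merged = heapq.merge(*sstables, key=lambda e: (e[0], -e[1]))
    out: List[Entry] = []
    last_key = None
    for key, seq, value in merged:
        if key != last_key:
            out.append((key, seq, value))  # newest version of this key
            last_key = key
        elif seq in pinned_seqs:
            out.append((key, seq, value))  # older version still in use
    return out


older = [("a", 1, "a1"), ("b", 2, "b2")]
newer = [("a", 5, "a5"), ("c", 4, "c4")]
print(compact([older, newer]))
print(compact([older, newer], pinned_seqs={1}))
```

In the second call, the old version of `a` is kept because sequence number 1 is treated as pinned.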

Based on the characteristics of data access, X-Engine applies tiered storage to persistent data: it stores active data in the relatively high layers, and merges less active (seldom accessed) data with base-layer data and stores it in the base layer. It compresses base-layer data at a high compression ratio and migrates it to storage media that offer large capacity at a relatively low price (such as SATA HDDs), so that a large quantity of data can be stored at a relatively low cost.

Tiered storage, however, creates a new problem: the system must compact data frequently, and the more data is written, the more often compaction must run. Compaction is a compare-and-merge process that consumes a lot of CPU and storage I/O. Under high-throughput write workloads, a large number of compaction operations occupy a large share of system resources. This can cause the performance of the entire system to drop sharply, with a serious impact on the application.

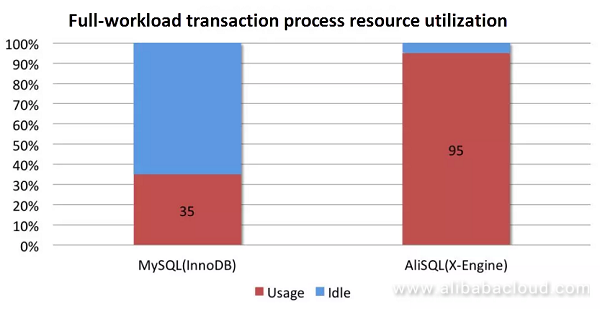

The completely new X-Engine scales extremely well across multiple cores and achieves very high performance. Its front-end transaction processing alone can consume almost all CPU resources, and it uses resources much more efficiently than InnoDB. We have shown the comparison between the two in the following figure:

At such a performance level, the system does not have any other resources for compaction operations; otherwise, performance levels will drop.

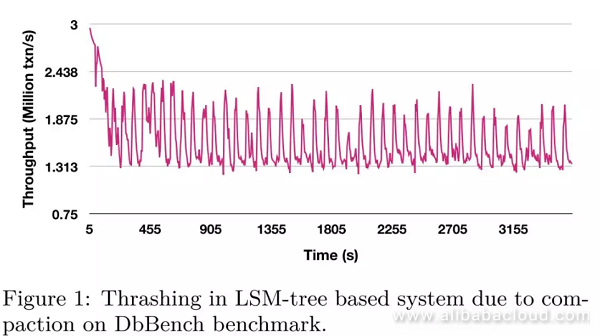

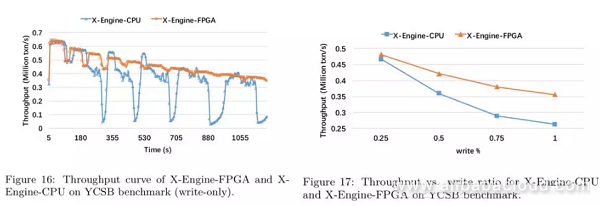

Based on our testing results, in DbBench benchmark's write-only scenario, the system periodically suffers from performance jitter. When a compaction task occurs, the system performance drops by more than 40%, and when the compaction task ends, the system performance returns to normal. We have shown this behavior in the following figure:

However, if we do not conduct compaction promptly, the accumulation of multi-version data can seriously affect the read operations.

To solve the performance jitter caused by compaction, academic researchers have proposed many structures and algorithms, such as VT-tree, bLSM, PE, PCP, and dCompaction. Although these approaches optimize compaction performance in several respects, they cannot reduce the CPU resources that compaction consumes. Based on relevant research statistics, when SSD storage devices are used, the computing operations of compaction consume approximately 60% of the system's computing resources. Therefore, no matter what optimizations we implement for compaction in the software layer, performance jitter caused by compaction remains an Achilles' heel for all LSM-tree-based storage engines.

Fortunately, special hardware opens a new door for solving performance jitter caused by compaction. In fact, it has become a trend to use special hardware in solving traditional databases' performance bottlenecks. We have already offloaded database operations such as Select and Where to FPGA, and more complex operations such as Group By are under research. However, the current FPGA acceleration solutions have a couple of drawbacks:

To ease the impact of compaction on X-Engine's system performance, we use an asynchronous hardware device, the FPGA, rather than the CPU to perform compaction. This approach is crucial for a storage engine that must satisfy stringent service requirements: it keeps overall system performance at a high level and avoids performance jitter. Here are the major design features:

X-Engine's storage structure contains one or multiple memory buffer areas (memtable), and multilayer persistent storage L0, L1... Each layer contains multiple SSTables.

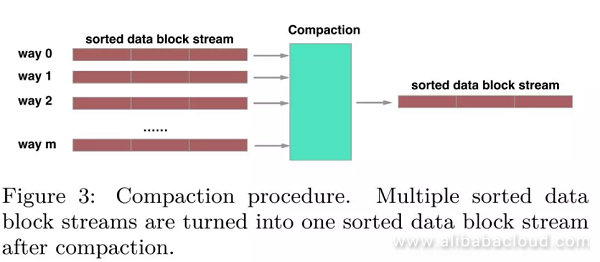

When a memtable is full, it turns into an immutable memtable and is then flushed to L0 as an SSTable. Each SSTable contains multiple data blocks and one index block that indexes the data blocks. When the number of files in L0 reaches the maximum, X-Engine triggers a merge of the SSTables whose key ranges overlap; this process is called compaction. Likewise, when the number of SSTables in a layer reaches the maximum, they are merged with data in the layer below. In this way, cold data constantly flows downward while hot data remains in the relatively higher layers.
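The flush-and-trigger flow can be sketched with a toy in-memory model. The class name `TinyLSM` and the thresholds `MEMTABLE_LIMIT` and `L0_MAX_FILES` are hypothetical values chosen for illustration; a real engine works with on-disk blocks and key ranges rather than Python dictionaries.

```python
from typing import Dict, List, Tuple

MEMTABLE_LIMIT = 2  # hypothetical: entries a memtable holds before flushing
L0_MAX_FILES = 2    # hypothetical: L0 SSTable count that triggers compaction

SSTable = List[Tuple[str, str]]  # sorted (key, value) pairs


class TinyLSM:
    """Toy two-layer LSM model: one memtable, then L0 and L1."""

    def __init__(self) -> None:
        self.memtable: Dict[str, str] = {}
        self.levels: List[List[SSTable]] = [[], []]  # levels[0] is L0
        self.compactions = 0

    def put(self, key: str, value: str) -> None:
        self.memtable[key] = value
        if len(self.memtable) >= MEMTABLE_LIMIT:
            self._flush()

    def _flush(self) -> None:
        # The full memtable becomes an immutable, sorted run in L0.
        self.levels[0].append(sorted(self.memtable.items()))
        self.memtable = {}
        if len(self.levels[0]) >= L0_MAX_FILES:
            self._compact_l0()

    def _compact_l0(self) -> None:
        merged: Dict[str, str] = {}
        # Apply the oldest data first so newer versions overwrite older ones.
        for sstable in self.levels[1] + self.levels[0]:
            merged.update(sstable)
        self.levels[1] = [sorted(merged.items())]
        self.levels[0] = []
        self.compactions += 1


db = TinyLSM()
for i in range(6):
    db.put(f"k{i % 4}", f"v{i}")
print(db.compactions, db.levels)
```

After six writes, the first two flushes have been compacted into L1 and the third flush sits alone in L0, holding the newer versions of `k0` and `k1`.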

We can specify the range of key-value pairs to merge in a compaction process, and this range may contain multiple data blocks. Generally, a compaction process merges data blocks between two adjacent layers. However, compaction tasks between L0 and L1 need special attention: because the SSTables in L0 are flushed directly from memory, their key ranges may overlap with one another. Therefore, a compaction task between L0 and L1 may involve merging multiple data blocks.
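To make the L0 overlap concrete, here is a small sketch that selects the inputs of a compaction task for a given key range. The function names and the representation of an SSTable by its inclusive `(smallest key, largest key)` range are assumptions for the example.

```python
from typing import List, Tuple

KeyRange = Tuple[str, str]  # (smallest key, largest key), both inclusive


def overlaps(a: KeyRange, b: KeyRange) -> bool:
    """Two inclusive ranges overlap if each starts before the other ends."""
    return a[0] <= b[1] and b[0] <= a[1]


def pick_inputs(l0: List[KeyRange], l1: List[KeyRange],
                target: KeyRange) -> Tuple[List[KeyRange], List[KeyRange]]:
    """Return the L0 and L1 SSTable ranges a compaction over target must read."""
    return ([r for r in l0 if overlaps(r, target)],
            [r for r in l1 if overlaps(r, target)])


# L0 ranges may overlap each other; L1 ranges are disjoint in this example.
l0 = [("a", "m"), ("f", "z"), ("x", "z")]
l1 = [("a", "e"), ("f", "k"), ("l", "p"), ("q", "z")]
print(pick_inputs(l0, l1, ("g", "j")))
```

For the target range `g` to `j`, two L0 tables must be read but only one L1 table, which is why L0-to-L1 tasks tend to have more inputs.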

For read operations, X-Engine first searches all memtables for the required data. If it fails to find the data there, it searches the persistent storage, from higher to lower layers. As a result, timely compaction not only shortens the read path but also saves storage space. However, compaction uses a lot of computing resources and causes performance jitter. This is an urgent problem that X-Engine must solve.
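The read path can be sketched as a lookup that probes memtables first and then each layer from top to bottom. The `lookup` function and the use of dictionaries as tables are assumptions for illustration; the returned probe count shows how fewer, merged tables shorten the path.

```python
from typing import Dict, List, Optional, Tuple

Table = Dict[str, str]


def lookup(key: str, memtables: List[Table],
           levels: List[List[Table]]) -> Tuple[Optional[str], int]:
    """Return (value, number of tables probed); newest data is searched first."""
    probes = 0
    for table in memtables:        # in-memory data, newest first
        probes += 1
        if key in table:
            return table[key], probes
    for level in levels:           # persistent data: L0, then L1, ...
        for sstable in level:      # newest first within a layer
            probes += 1
            if key in sstable:
                return sstable[key], probes
    return None, probes


memtables = [{"a": "1"}]
levels = [[{"b": "2"}, {"b": "old"}], [{"c": "3"}]]
print(lookup("a", memtables, levels))
print(lookup("c", memtables, levels))
```

A key that lives only in the lowest layer costs four probes here; merging the two L0 tables would reduce that to three.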

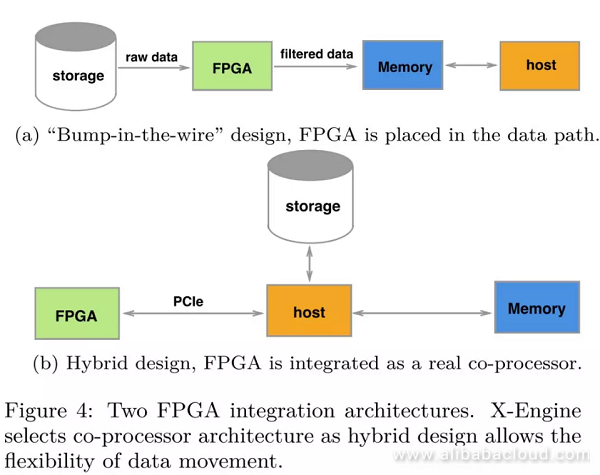

Looking at existing FPGA-accelerated databases, we can divide their architectures into two types: the bump-in-the-wire design and the hybrid design. In the early days, because FPGA cards had insufficient memory resources, the former type was relatively popular. In this architecture, the FPGA is placed on the data path between storage and the host, where it acts as a filter. The advantage is that it requires zero data copying; the drawback is that the accelerated operation must be part of the streaming process, which makes the design less flexible.

The latter architecture uses the FPGA as a coprocessor: the FPGA is connected to the host via PCIe, and data is transferred by DMA. As long as the offloaded computation is intensive enough, the data transmission cost is acceptable. The hybrid architecture allows more flexible offloading methods. For complex operations such as compaction, data transmission between the FPGA and the host is necessary. Therefore, we have used the hybrid architecture for hardware acceleration in X-Engine.

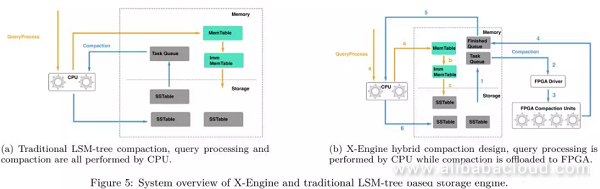

In traditional LSM-tree-based storage engines, the CPU is responsible for handling normal user requests, as well as for scheduling and executing compaction tasks. In other words, the CPU is both the producer and the consumer of compaction tasks. In a CPU-FPGA hybrid storage engine, however, the CPU is only responsible for producing and scheduling compaction tasks; their execution is offloaded to the special hardware (FPGA).
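The producer/consumer split can be sketched with a task queue. In this minimal illustration a worker thread named `fpga_worker` stands in for the device, and each task is a hypothetical `(task id, list of runs)` pair; the real system moves data over PCIe rather than through a Python queue.

```python
import queue
import threading
from typing import Dict, List, Tuple

Run = Dict[str, str]
Task = Tuple[int, List[Run]]  # hypothetical: (task id, runs ordered old to new)


def run_compactions(tasks: List[Task]) -> Dict[int, List[Tuple[str, str]]]:
    task_q: "queue.Queue" = queue.Queue()
    results: Dict[int, List[Tuple[str, str]]] = {}

    def fpga_worker() -> None:
        # Consumer: stand-in for the FPGA, which only executes tasks.
        while True:
            task = task_q.get()
            if task is None:       # shutdown signal
                return
            task_id, runs = task
            merged: Run = {}
            for run in runs:       # newer runs overwrite older ones
                merged.update(run)
            results[task_id] = sorted(merged.items())

    worker = threading.Thread(target=fpga_worker)
    worker.start()
    for task in tasks:             # producer: the CPU only builds and
        task_q.put(task)           # schedules tasks, it never merges
    task_q.put(None)
    worker.join()
    return results


print(run_compactions([(1, [{"a": "old"}, {"a": "new", "b": "x"}])]))
```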

For X-Engine, handling of normal user requests is similar to that of LSM-tree-based storage engines:

When L0 reaches the maximum number of SSTables, compaction gets triggered. We can divide offloading of a compaction task into the following steps:

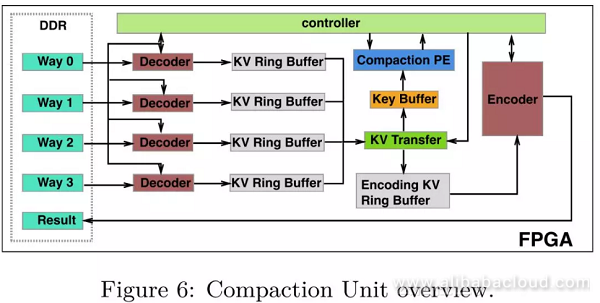

The Compaction Unit (CU) is the basic unit with which the FPGA executes compaction tasks. An FPGA card can host multiple CUs, and each CU is composed of the following modules:

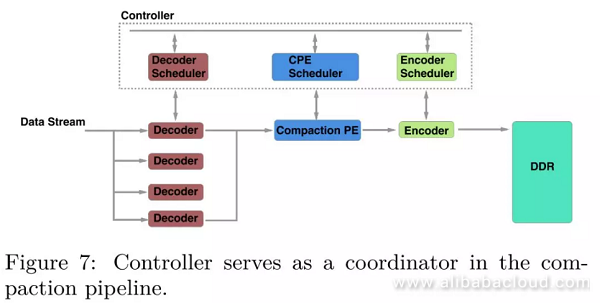

A compaction process contains three key steps: decoding, merging, and encoding. The most significant challenge for designing a proper compaction pipeline is that the execution time for each step varies significantly. For example, because of parallel processing, the throughput of the decoder module is much higher than the encoder module. Therefore, we must suspend some fast modules to wait for downstream modules still in the pipeline. To match the throughput differences in each of the pipeline's modules, we have designed a Controller module to coordinate different steps in the pipeline. An additional benefit of this design is that it decouples each module in the pipeline and enables more flexible development and maintenance during engineering implementation.
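The idea of stalling fast stages until slower ones catch up can be sketched in software with bounded queues between stages. This is only an analogy: the bounded queues play the role that the text assigns to the Controller module, and the `decode`, `merge`, and `encode` functions operate on a made-up `k=v;k=v` block format.

```python
import queue
import threading
from typing import Callable, List

STOP = object()  # sentinel passed down the pipeline to shut stages down


def stage(fn: Callable, inbox: queue.Queue, outbox: queue.Queue) -> None:
    while True:
        item = inbox.get()
        if item is STOP:
            outbox.put(STOP)
            return
        outbox.put(fn(item))  # blocks while the downstream queue is full


def decode(block: str):
    return [tuple(kv.split("=")) for kv in block.split(";")]


def merge(pairs):
    return sorted(dict(pairs).items())  # later pairs overwrite earlier ones


def encode(pairs) -> str:
    return ";".join(f"{k}={v}" for k, v in pairs)


def run_pipeline(blocks: List[str], depth: int = 1) -> List[str]:
    q_in = queue.Queue(maxsize=depth)
    q_dec = queue.Queue(maxsize=depth)
    q_mrg = queue.Queue(maxsize=depth)
    q_out = queue.Queue()  # unbounded so the final stage never stalls
    threads = [
        threading.Thread(target=stage, args=(decode, q_in, q_dec)),
        threading.Thread(target=stage, args=(merge, q_dec, q_mrg)),
        threading.Thread(target=stage, args=(encode, q_mrg, q_out)),
    ]
    for t in threads:
        t.start()
    for block in blocks:
        q_in.put(block)
    q_in.put(STOP)
    out: List[str] = []
    while True:
        item = q_out.get()
        if item is STOP:
            break
        out.append(item)
    for t in threads:
        t.join()
    return out


print(run_pipeline(["b=1;a=2;b=3", "c=4"]))
```

Because every stage talks only to its queues, a stage can be replaced or tuned without touching the others, which mirrors the decoupling benefit mentioned above.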

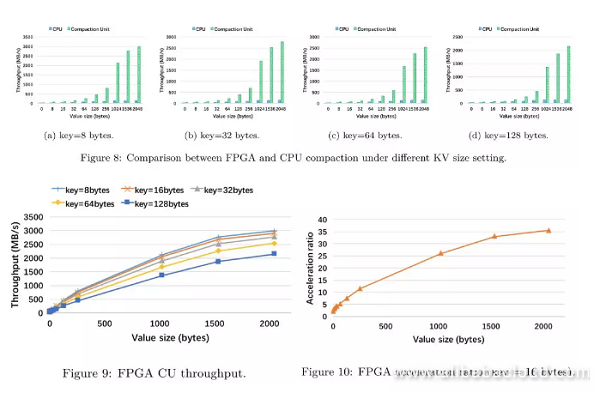

Before integrating FPGA compaction into X-Engine, we wanted to measure the throughput of a single CU on its own. The baseline of the experiment is the CPU:

Single-core compaction thread (Intel(R) Xeon(R) E5-2682 v4 CPU at 2.5 GHz)

We can draw the following three conclusions from the experiment:

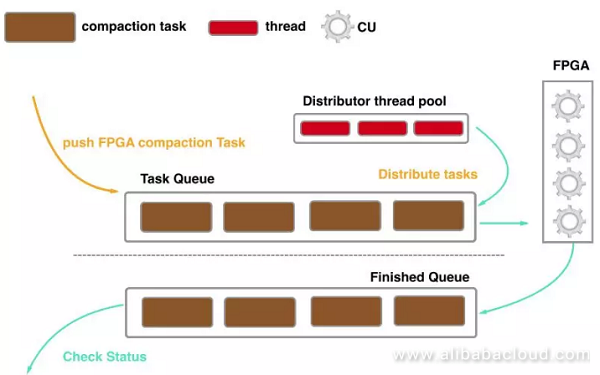

Because a request to the FPGA completes within milliseconds, the traditional synchronous scheduling method incurs high thread-switching costs. Based on the FPGA's characteristics, we have designed an asynchronous compaction scheduling method, where:

Asynchronous scheduling significantly reduces the thread-switching cost of CPU.
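As a generic illustration of asynchronous submission (not X-Engine's actual scheduler), the sketch below submits every task without waiting and collects results in completion callbacks. The thread pool named `device` and the function `fake_fpga_compact` are stand-ins invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Tuple

Run = Dict[str, str]


def fake_fpga_compact(runs: List[Run]) -> List[Tuple[str, str]]:
    """Stand-in for a device-side compaction: newer runs overwrite older ones."""
    merged: Run = {}
    for run in runs:
        merged.update(run)
    return sorted(merged.items())


def schedule_async(tasks: Dict[int, List[Run]]) -> Dict[int, List[Tuple[str, str]]]:
    done: Dict[int, List[Tuple[str, str]]] = {}
    with ThreadPoolExecutor(max_workers=2) as device:
        for task_id, runs in tasks.items():
            future = device.submit(fake_fpga_compact, runs)
            # The scheduling thread does not block on the result; the
            # callback records it whenever the task completes.
            future.add_done_callback(
                lambda f, tid=task_id: done.__setitem__(tid, f.result()))
        # The scheduling thread is free to do other work here.
    # Leaving the with-block waits for all outstanding tasks.
    return done


print(schedule_async({1: [{"a": "1"}, {"a": "2"}], 2: [{"b": "3"}]}))
```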

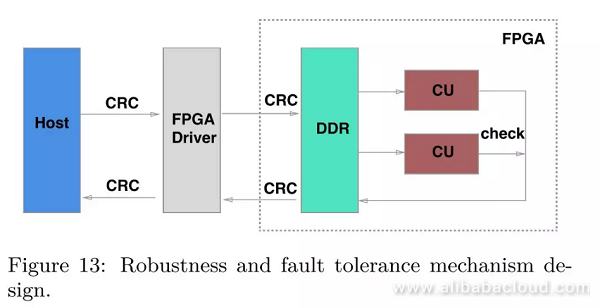

For FPGA compaction, the following three reasons can cause a compaction task to fail:

To ensure that the data is correct, the CPU recomputes every failed task. With this fault tolerance mechanism, we handle the small share of compaction tasks that exceed the FPGA's limits and avoid the risk posed by FPGA internal errors.
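The retry-on-CPU rule can be sketched as a simple fallback wrapper. The `FpgaError` exception, the `MAX_KV_BYTES` limit, and the simulated `fpga_compact` function are all hypothetical; they only model a task that exceeds some device limit.

```python
from typing import Dict, List, Tuple

Run = Dict[str, str]
MAX_KV_BYTES = 16  # hypothetical device limit on key length plus value length


class FpgaError(Exception):
    """Raised by the simulated device when it cannot finish a task."""


def cpu_compact(runs: List[Run]) -> List[Tuple[str, str]]:
    merged: Run = {}
    for run in runs:  # newer runs overwrite older ones
        merged.update(run)
    return sorted(merged.items())


def fpga_compact(runs: List[Run]) -> List[Tuple[str, str]]:
    # Simulated device: rejects any pair larger than its limit.
    for run in runs:
        for key, value in run.items():
            if len(key) + len(value) > MAX_KV_BYTES:
                raise FpgaError("key-value pair exceeds the device limit")
    return cpu_compact(runs)  # stand-in for the hardware result


def compact_with_fallback(runs: List[Run]) -> Tuple[List[Tuple[str, str]], str]:
    """Try the device first; on failure, recompute the whole task on the CPU."""
    try:
        return fpga_compact(runs), "fpga"
    except FpgaError:
        return cpu_compact(runs), "cpu"


print(compact_with_fallback([{"a": "1"}, {"a": "2"}]))
print(compact_with_fallback([{"a": "x" * 32}]))
```

The caller gets a correct result in both cases; only the execution path differs.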

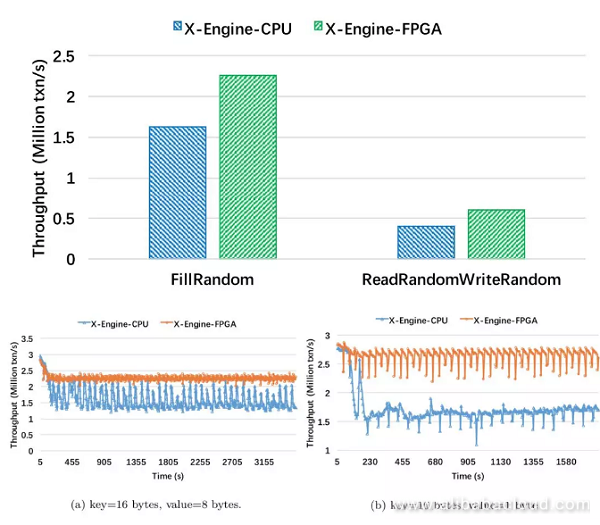

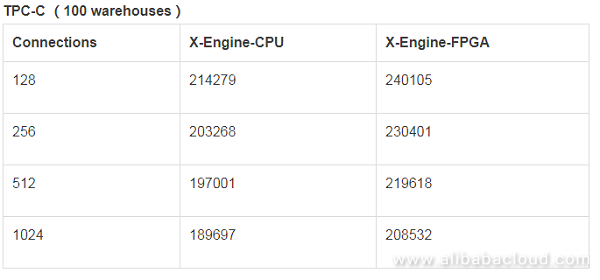

We compared the performance of two storage engines:

Result analysis:

Result analysis:

Result analysis:

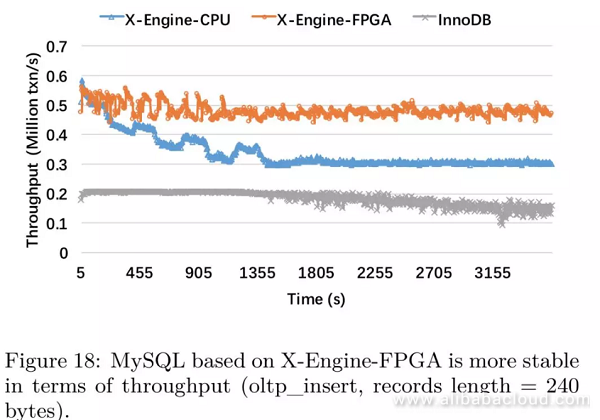

We have included testing for InnoDB in this experiment (buffer size = 80 GB)

Result analysis:

As this article shows, FPGA acceleration improves X-Engine's performance by 50% on the KV interface and by 40% on the SQL interface. The lower the read/write ratio, the more pronounced the acceleration, which means that FPGA compaction acceleration suits write-intensive workloads. This is consistent with the intention of the LSM-tree design. We have also worked around the FPGA's internal defects with a fault tolerance mechanism, and the result is a high-availability CPU-FPGA hybrid storage engine that meets Alibaba's real service requirements.

This is the first real project in which X-DB uses a heterogeneous computing device to accelerate core database functions. In our experience, FPGA can fully meet the computing demands of X-Engine's compaction tasks. At the same time, we are investigating which other computing tasks are suitable for offloading to FPGA, such as compression, BloomFilter generation, and SQL JOIN operators. At present, the R&D for the compression function is complete, and it will be built into a set of IP together with compaction so that data compaction and compression can be performed simultaneously.

X-DB FPGA-Compaction hardware acceleration is an R&D project completed jointly by three parties: the database kernel team of the Alibaba Database Department, the custom computing team of the Alibaba Server R&D Department, and Zhejiang University. Xilinx's technical team has also contributed greatly to the success of this project, and we extend our gratitude to them. We will release X-DB for public beta this year, and you will then be able to experience the significant performance improvement that FPGA acceleration brings to X-DB.


Raja_KT November 23, 2018 at 7:53 am

I do not have much background about this but a few questions. How do you overcome FPGA's SEU and FIT issue? How much space can FPGAs be accommodating? Can you give an example of data, when it passes from L0 to L1, L1 to L2 caches ..... and the typical L0, L1, L2 sizes of specific servers? Do you do CRC beyond FPGAs? Will other FPGA solutions like Arria 10, Cyclone 10, Stratix 10, Achronix's eFPGAs etc. be tested?