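Data Transmission Service (DTS) can migrate a MySQL database deployed on premises, on ECS, or on another cloud to an RDS MySQL instance without disrupting normal business operation. DTS supports schema migration, full data migration, and incremental data migration. Using all three migration types together lets you move a self-managed MySQL database to the cloud smoothly, without taking the self-managed application offline.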

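Prerequisites

The self-managed MySQL database (source) must run MySQL 5.1, 5.5, 5.6, 5.7, or 8.0.

The available storage space of the destination RDS instance must be larger than the storage space already used by the self-managed MySQL database.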
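The two prerequisites above can be checked before you create a task. The following is a minimal sketch, not part of DTS: the function name `check_prerequisites` and its parameters are hypothetical, and the version string and storage figures are assumed to be collected by you (for example, from `SELECT VERSION()` and your monitoring data).

```python
from __future__ import annotations

# Source versions listed in the prerequisites above.
SUPPORTED_SOURCE_VERSIONS = ("5.1", "5.5", "5.6", "5.7", "8.0")


def check_prerequisites(source_version: str,
                        source_used_gb: float,
                        target_free_gb: float) -> list[str]:
    """Return a list of problems; an empty list means both checks pass."""
    problems = []
    # Compare only the major.minor part, e.g. "8.0.36" -> "8.0".
    major_minor = ".".join(source_version.split(".")[:2])
    if major_minor not in SUPPORTED_SOURCE_VERSIONS:
        problems.append(f"unsupported source version: {source_version}")
    # The destination must have strictly more free space than the source uses.
    if target_free_gb <= source_used_gb:
        problems.append("destination free space must exceed source used space")
    return problems


if __name__ == "__main__":
    print(check_prerequisites("8.0.36", source_used_gb=120, target_free_gb=200))
```

An empty list means the source version and destination capacity both satisfy the stated requirements; it does not replace the DTS precheck.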

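Migration overview

DTS provides the following three migration types. When you migrate a self-managed database to RDS MySQL, we recommend configuring schema, full, and incremental migration together so that the self-managed application moves to the cloud smoothly without downtime.

Schema migration: migrates database and table schemas, views, triggers, stored procedures, and functions.

Full data migration: migrates the existing data in the source database.

Incremental data migration: building on full data migration, migrates incremental data from the source database to the destination database.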

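Common migration plans

Migration plan | Business downtime | Data consistency | Cost | Scenario |

Schema + full + incremental migration (recommended) | None (business is not interrupted) | Data is consistent once migration completes (incremental migration shows no latency), whether or not data is written during migration. | Charged | Production migrations that require no downtime or the shortest possible downtime. |

Schema + full migration | Long (must wait for full data migration to finish) | | Free | Businesses that can tolerate long downtime, or test environments. |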

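Billing

When you use DTS to migrate a self-managed MySQL database to RDS MySQL, schema migration, full data migration, and Internet traffic are free. The following items are charged:

Incremental data migration: If you select incremental data migration when configuring the task, you are charged while incremental data migration runs normally. No fees apply while schema migration or full data migration runs, or while incremental data migration is paused or has failed.

Data verification: If you select data verification when configuring the task, data verification fees may apply.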

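Usage notes

Primary key: Every table to be migrated must have a primary key or a unique constraint, and the constrained fields must hold unique values. Otherwise, duplicate data may appear in the destination database.

DDL: Do not run DDL operations (such as schema changes) during schema migration and full data migration. Otherwise, the data migration task fails.

DML: If you run only full data migration (without incremental data migration), do not write new data to the source instance. Otherwise, the source and destination data become inconsistent.

Number of tables: If you migrate at the table level and need to edit column name mappings, a single task supports up to 1,000 tables. Submitting a task that exceeds this limit returns an error; split the tables across multiple subtasks instead.

Character set: If the data to be migrated contains content stored in four bytes (such as rare characters or emoji), the destination databases and tables that receive the data must use the utf8mb4 character set. If you use DTS to migrate schemas, you must also set the instance-level parameter character_set_server to utf8mb4 in the destination database.

Account migration: To migrate source database accounts to the destination database, you must also meet additional account migration conditions and usage notes.

Primary/secondary switchover: If the source database has a primary/secondary architecture and a switchover occurs during migration, the migration fails.

MySQL 8.0 invisible columns: If the source database runs MySQL 8.0.23 or later and the data includes invisible columns (including the hidden primary key generated automatically for tables without a primary key), you must first make those columns visible (ALTER TABLE ... ALTER COLUMN ... SET VISIBLE;). Otherwise, data is lost.

The following content cannot be migrated:

Parsers defined with comment syntax.

Data produced by operations that are not recorded in the Binlog (such as physical backup restoration and foreign key cascades).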
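The table-count note above asks you to split an oversized table list across several subtasks. The following is a small sketch of that splitting step only; the helper name `split_into_subtasks` is hypothetical, and creating the actual DTS tasks from each batch is left to you.

```python
from typing import List, Sequence

# Limit stated above for table-level migration with column name mapping.
MAX_TABLES_PER_TASK = 1000


def split_into_subtasks(tables: Sequence[str],
                        limit: int = MAX_TABLES_PER_TASK) -> List[List[str]]:
    """Split a table list into consecutive batches of at most `limit` tables."""
    if limit < 1:
        raise ValueError("limit must be at least 1")
    return [list(tables[i:i + limit]) for i in range(0, len(tables), limit)]


if __name__ == "__main__":
    tables = [f"orders_{i:04d}" for i in range(2500)]  # made-up table names
    for index, batch in enumerate(split_into_subtasks(tables), start=1):
        print(f"subtask {index}: {len(batch)} tables")
```

Batches keep the original order, so related tables that are adjacent in your list tend to stay in the same subtask.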

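Phase 1: Preparations

Authorize DTS to access cloud resources

Use your Alibaba Cloud account (main account) to open the quick authorization page, and then click Confirm Authorization.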

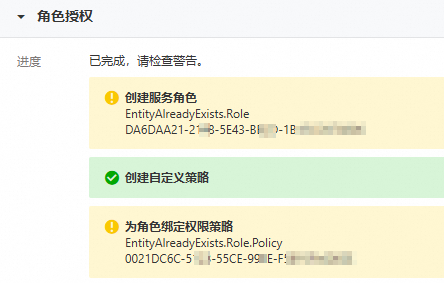

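After you complete the authorization, if the page shows EntityAlreadyExists.Role and EntityAlreadyExists.Role.Policy, the current Alibaba Cloud account has already granted DTS permission to access cloud resources, and you can continue with the remaining preparations.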

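Create database accounts and grant permissions

When you use DTS for data migration, the source and destination database accounts must have the following permissions:

Database account | Schema migration | Full data migration | Incremental data migration |

Self-managed MySQL account | SELECT | SELECT | SELECT, REPLICATION CLIENT, REPLICATION SLAVE, and SHOW VIEW |

RDS MySQL account | Read and write | Read and write | Read and write |

You can use an existing database account that has the required permissions, or create and authorize dedicated migration accounts in the source and destination databases as follows: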

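Self-managed MySQL account

-- Create a dedicated migration account (replace dts_user and Your_Password123 with your own account name and password)
CREATE USER 'dts_user'@'%' IDENTIFIED BY 'Your_Password123';
-- Grant SELECT (used for schema migration and full data migration)
GRANT SELECT ON *.* TO 'dts_user'@'%';
-- Grant the additional permissions required for incremental data migration
GRANT REPLICATION CLIENT, REPLICATION SLAVE, SHOW VIEW ON *.* TO 'dts_user'@'%';
-- Allow DTS to create a heartbeat table, which advances the Binlog position and monitors incremental synchronization latency
GRANT CREATE ON *.* TO 'dts_user'@'%';
FLUSH PRIVILEGES;

RDS MySQL account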

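We recommend using a privileged RDS MySQL account, which you can create as follows:

Open the RDS instance list, select a region at the top, and then click the ID of the destination instance.

In the left-side navigation pane, click Account Management, and then click Create Account.

Account Type: select Privileged Account. Fill in the other parameters (such as the account name and password) as the console requires.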

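Confirm the source database access method and add whitelist entries

Choose an access method that suits the type of your self-managed MySQL database. In addition, if your self-managed database accepts connections only from specific IP addresses, add the DTS server IP address ranges to the database's security settings (firewall, whitelist, security group, and so on) so that DTS servers can connect.

Self-managed database type | Recommended access method | Whitelist and configuration |

On-premises self-managed database (with a public address) | Public IP | You must add the DTS server IP addresses to the whitelist manually. |

On-premises self-managed database (without a public address) | | |

Self-managed database on ECS | Self-managed database on ECS | The system adds the DTS service IP address ranges automatically. |

The RDS MySQL instance (destination) uses the cloud instance access method. The system automatically adds the DTS service IP address ranges to the RDS instance whitelist, so no manual setup is required.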

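(If incremental data migration is required) Configure the source database Binlog

To use incremental data migration, complete the following Binlog configuration on the source database and restart MySQL for it to take effect:

The source database must have local Binlog enabled, and Binlog files must be retained for 7 days or longer.

On the source database, binlog_format must be set to row and binlog_row_image must be set to full.

If the self-managed MySQL database is a dual-master cluster (each node is both master and replica of the other), enable log_slave_updates so that DTS can obtain all Binlog entries.

You can configure the source database Binlog as follows:

Linux

Use vim to modify the following parameters in the my.cnf configuration file.

log_bin=mysql_bin
binlog_format=row
# Versions earlier than MySQL 8.0 set the binlog retention period with expire_logs_days; the default is 0 (never expires)
# MySQL 8.0 and later set the binlog retention period with binlog_expire_logs_seconds; the default is 2592000 seconds (30 days)
# If you changed the Binlog retention period to less than 7 days, use the following parameters to set it back to 7 days or longer
# expire_logs_days=7
# binlog_expire_logs_seconds=604800
# An integer greater than 1 is recommended
server_id=2
# Required when the self-managed MySQL version is later than 5.6
binlog_row_image=full
# Enable this parameter when the self-managed database is a dual-master cluster
# log_slave_updates=ON

After the modification, restart the MySQL process.

/etc/init.d/mysqld restart

Windows

Modify the following parameters in the my.ini configuration file.

log_bin=mysql_bin
binlog_format=row
# Versions earlier than MySQL 8.0 set the binlog retention period with expire_logs_days; the default is 0 (never expires)
# MySQL 8.0 and later set the binlog retention period with binlog_expire_logs_seconds; the default is 2592000 seconds (30 days)
# If you changed the Binlog retention period to less than 7 days, use the following parameters to set it back to 7 days or longer
# expire_logs_days=7
# binlog_expire_logs_seconds=604800
# An integer greater than 1 is recommended
server_id=2
# Required when the self-managed MySQL version is later than 5.6
binlog_row_image=full
# Enable this parameter when the self-managed database is a dual-master cluster
# log_slave_updates=ON

After the modification, restart the MySQL service. You can restart it from the Windows service manager, or with the following commands:

net stop mysql
net start mysql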
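After the restart, you may want to confirm that the Binlog requirements listed in this section actually took effect. The following is a minimal sketch under stated assumptions: `check_binlog_settings` is a hypothetical helper, and its input is a plain dictionary of variable names and values that you collected yourself (for example, from `SHOW VARIABLES`). As a simplification, it reads `binlog_expire_logs_seconds` when that variable is present and falls back to `expire_logs_days` otherwise.

```python
from __future__ import annotations

SEVEN_DAYS_SECONDS = 7 * 24 * 60 * 60  # 604800


def check_binlog_settings(variables: dict[str, str],
                          dual_master: bool = False) -> list[str]:
    """Return a list of Binlog settings that do not meet the requirements above."""
    v = {k.lower(): str(val).strip().upper() for k, val in variables.items()}
    problems = []

    if v.get("log_bin") not in ("ON", "1"):
        problems.append("log_bin must be enabled")
    if v.get("binlog_format") != "ROW":
        problems.append("binlog_format must be ROW")
    # binlog_row_image may be absent on old versions; only check it when reported.
    if "binlog_row_image" in v and v["binlog_row_image"] != "FULL":
        problems.append("binlog_row_image must be FULL")

    # Retention: 0 means "never expires", which satisfies the 7-day requirement.
    if "binlog_expire_logs_seconds" in v:
        retention = int(v["binlog_expire_logs_seconds"])
    else:
        retention = int(v.get("expire_logs_days", "0")) * 24 * 60 * 60
    if 0 < retention < SEVEN_DAYS_SECONDS:
        problems.append("Binlog retention must be 7 days or longer")

    if dual_master and v.get("log_slave_updates") not in ("ON", "1"):
        problems.append("log_slave_updates must be enabled for dual-master clusters")
    return problems


if __name__ == "__main__":
    sample = {"log_bin": "ON", "binlog_format": "ROW",
              "binlog_row_image": "FULL", "binlog_expire_logs_seconds": "2592000"}
    print(check_binlog_settings(sample))
```

The helper only inspects values you pass in; it does not connect to MySQL, so it can be reused with whichever client library you already use to read the variables.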

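Phase 2: Configure the migration task

Log on to the Data Transmission Service console, choose Data Migration in the left-side navigation pane, and then click Create Task.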

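Configure source and destination database information

Source database configuration

Parameter | Description |

Database Type | Select MySQL. |

Access Method | Select the source access method confirmed during preparation. This article uses an on-premises self-managed database (with a public address) as the example, so the access method is Public IP. |

Instance Region | Select the region where the source MySQL database resides. If that region is not among the available options, select the nearest one. |

Domain Name or IP Address | Enter the public address or IP address of the source database. If the source address has a domain name that public DNS can resolve, prefer the domain name. |

Port | Enter the service port of the source database (it must be open to the Internet). The default is 3306. |

Database Account | Enter the account created during preparation, or an existing account that meets the permission requirements. |

Database Password | Enter the password for that database account. |

Connection Method | Select Non-encrypted or SSL-encrypted according to your setup. If the self-managed MySQL database does not have SSL encryption enabled, select Non-encrypted. If it does, select SSL-encrypted; you must also upload the CA certificate and enter the CA key. |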

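Destination database configuration

Parameter | Description |

Database Type | Select MySQL. |

Access Method | Select Cloud Instance. |

Instance Region | Select the region where the RDS MySQL instance resides. |

Cross Alibaba Cloud Account | Select No (same account). |

RDS Instance ID | Select the ID of the destination RDS MySQL instance. |

Database Account | Enter the database account of the RDS MySQL instance. A privileged account is recommended. |

Database Password | Enter the password for that database account. |

Connection Method | Select Non-encrypted or SSL-encrypted according to the database setup. If you select SSL-encrypted, you must enable SSL encryption on the RDS MySQL instance in advance. For details, see "Use a cloud certificate to quickly enable SSL link encryption". |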

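Test the source and destination connections

Click Test Connectivity and Proceed at the bottom of the page, and then click Test Connectivity in the DTS Server Access Authorization dialog box that appears. If the test fails, check your network, account, or permission configuration according to the error message.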

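Configure task objects

Complete the object configuration, advanced configuration, and data verification configuration in that order.

Object configuration

On the object configuration page, use the descriptions below together with your business needs to configure the migration types, the databases and tables to migrate, trigger and event migration, the destination case policy, the handling mode for existing tables, and related settings. When you finish, click Next: Advanced Settings.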

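Migration Types: To perform only full data migration, we recommend selecting both schema migration and full data migration. To migrate without downtime, we recommend selecting schema migration, full data migration, and incremental data migration.

Note: If you do not select schema migration, make sure the destination database already contains the databases and tables that will receive the data, and use the database, table, and column name mapping feature in the Selected Objects box as needed. If you do not select incremental data migration, do not write new data to the source instance during migration, so that data stays consistent.

Migration method for source triggers: Choose how triggers are migrated according to your situation. If the objects to be migrated involve no triggers, you do not need to configure this. For more information, see "Configure how triggers are synchronized or migrated".

Note: This can be configured only when both schema migration and incremental data migration are selected.

Enable migration assessment: Assesses whether the schemas of the source and destination databases (such as index length, stored procedures, and dependent tables) meet the requirements. Select Yes or No as needed.

Note: This can be configured only when schema migration is selected. If you select Yes, the precheck may take longer. You can view the assessment result during the precheck; the assessment result does not affect the precheck result.

Processing mode for existing destination tables:

Precheck and report errors: checks whether the destination database contains tables with the same names. If it does not, this check item passes. If it does, an error is reported during the precheck and the data migration task is not started.

Note: If a same-named table in the destination database cannot easily be deleted or renamed, you can change the name the table takes in the destination database. See "Database, table, and column name mapping".

Ignore errors and proceed: skips the check for same-named tables in the destination database.

Warning: Selecting Ignore errors and proceed may cause data inconsistency and put your business at risk. For example:

When the table schemas are the same and the destination contains a record whose primary key value matches a source record: during full data migration, DTS keeps the record in the destination, so the source record is not migrated; during incremental data migration, DTS does not keep the record in the destination, so the source record overwrites it.

When the table schemas differ, only some columns may be migrated, or the migration may fail. Proceed with caution.

Migrate Event: Choose whether to migrate events (Event) from the source database. If you select Yes, you must also follow the related requirements and perform the follow-up operations. For more information, see "Synchronize or migrate events".

Case policy for destination object names: You can configure the case policy for the database, table, and column names of migrated objects in the destination instance. The DTS default policy is selected by default; you can also choose to match the default policy of the source or destination database. For more information, see "Case policy for destination object names".

Source Objects: In the Source Objects box, select the objects to migrate, and then click the icon that moves them to the Selected Objects box.

Note: Objects can be selected at the database, table, or column level. If you select tables or columns, other objects (such as views, triggers, and stored procedures) are not migrated to the destination database.

Selected Objects:

To rename a single migration object in the destination instance, right-click the object in Selected Objects. For the procedure, see "Map a single database, table, or column name".

To rename migration objects in bulk, click Batch Edit in the upper-right corner of the Selected Objects box. For the procedure, see "Map database, table, and column names in bulk".

Note: Using object name mapping may cause other objects that depend on the renamed object to fail to migrate.

To filter data with a WHERE condition, right-click the table to migrate in Selected Objects and set the filter condition in the dialog box that appears. For the procedure, see "Set filter conditions".

To select the SQL operations to migrate at the database or table level, right-click the object in Selected Objects and select the required SQL operations in the dialog box that appears.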
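The warning about Ignore errors and proceed describes two different outcomes for a primary key conflict. The following toy model only illustrates those described outcomes with in-memory dictionaries keyed by primary key; it is not how DTS is implemented, and the function names `apply_full` and `apply_incremental` are hypothetical.

```python
from typing import Dict

Rows = Dict[int, str]  # primary key -> row payload (simplified to a string)


def apply_full(target: Rows, source_rows: Rows) -> Rows:
    """Full migration outcome as described: an existing destination record is kept."""
    result = dict(target)
    for pk, row in source_rows.items():
        result.setdefault(pk, row)  # insert only when the key is absent
    return result


def apply_incremental(target: Rows, changed_rows: Rows) -> Rows:
    """Incremental migration outcome as described: the source record overwrites."""
    result = dict(target)
    result.update(changed_rows)
    return result


if __name__ == "__main__":
    destination = {1: "destination version"}
    source = {1: "source version", 2: "new row"}
    print(apply_full(destination, source))
    print(apply_incremental(destination, {1: "source version"}))
```

The contrast shows why the same conflicting key can end up with different values depending on the phase in which the row arrives, which is the inconsistency risk the warning refers to.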

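Advanced configuration (optional)

Advanced configuration fine-tunes a normal DTS migration task (for example, reconnection retry time, migration rate limits, and monitoring alerts) and handles special cases (for example, DTS dedicated clusters and the temporary tables produced while Online DDL tools run on source tables). If you have none of the following needs, leave this page at its defaults and click Next: Data Verification.

Dedicated cluster for task scheduling: By default, DTS schedules the task on a shared cluster, and you do not need to select anything. For a more stable task, you can purchase a dedicated cluster to run DTS migration tasks.

Copy the temporary tables generated by Online DDL tools on source tables to the destination database: If the source database uses Data Management (DMS) or gh-ost to perform Online DDL changes, you can choose whether to migrate the temporary table data that those changes generate.

Important: DTS tasks do not currently support Online DDL changes performed with pt-online-schema-change or similar tools. Using them causes the DTS task to fail.

Yes: migrates the temporary table data generated by Online DDL changes. Note: If that temporary table data is very large, the migration task may be delayed.

No, adapt to DMS Online DDL: does not migrate the temporary table data generated by Online DDL changes, and migrates only the original DDL statements that the source database runs through Data Management (DMS). Note: This option locks tables in the destination database.

No, adapt to gh-ost: does not migrate the temporary table data generated by Online DDL changes, and migrates only the original DDL statements that the source database runs through gh-ost. You can use the default regular expressions for gh-ost shadow tables and unused tables, or configure your own. Note: This option locks tables in the destination database.

Migrate accounts: Choose whether to migrate the account information of the source database. If you select Yes, you must also select the accounts to migrate and confirm their permissions.

Retry time after the source or destination database cannot be connected: After the migration task starts, if the connection to the source or destination database fails, DTS reports an error and immediately begins retrying the connection continuously. The default retry time is 720 minutes. You can set a custom value from 10 to 1440 minutes; 30 minutes or more is recommended. If DTS reconnects to the source and destination databases within the configured time, the migration task resumes automatically. Otherwise, the migration task fails.

Note: For multiple DTS instances that share the same source or destination, the network retry time of the most recently created task takes effect. Because DTS charges task running fees during connection retries, set the retry time according to your business needs, or release the DTS instance promptly after the source and destination instances are released.

Retry time after other issues occur in the source or destination database: After the migration task starts, if a non-connectivity issue occurs in the source or destination database (such as a DDL or DML execution error), DTS reports an error and immediately begins retrying the operation continuously. The default retry time is 10 minutes. You can set a custom value from 1 to 1440 minutes; 10 minutes or more is recommended. If the operation succeeds within the configured retry time, the migration task resumes automatically. Otherwise, the migration task fails.

Important: The retry time after other issues occur must be less than the retry time after the source or destination database cannot be connected.

Limit the full data migration rate: During full data migration, DTS consumes some read and write resources on the source and destination databases, which may increase database load. You can choose whether to throttle the full data migration task (by setting QPS, the number of queries against the source database per second; RPS, the number of rows migrated per second; and BPS, the data volume in MB migrated per second) to relieve pressure on the destination database.

Note: This setting is available only when full data migration is selected. You can also adjust the full data migration rate after the migration instance is running.

Limit the incremental data migration rate: You can also choose whether to throttle the incremental data migration task (by setting RPS, the number of rows migrated per second, and BPS, the data volume in MB migrated per second) to relieve pressure on the destination database.

Note: This setting is available only when incremental data migration is selected. You can also adjust the incremental data migration rate after the migration instance is running.

Environment tag: Select an environment tag to identify the instance as needed. None is required for this example.

Remove the heartbeat table SQL of forward and reverse tasks: Choose, according to your business needs, whether DTS writes heartbeat SQL information to the source database while the DTS instance is running.

Yes: does not write heartbeat SQL information to the source database. The DTS instance may show latency.

No: writes heartbeat SQL information to the source database. This may affect features such as physical backup and cloning of the source database.

Configure ETL

Monitoring and alerting: Choose whether to set up alerts and receive alert notifications according to your business needs.

Not set: no alerts are configured.

Set: alerts are configured. You must also set the alert threshold and alert notifications. The system sends an alert notification when the migration fails or when latency exceeds the threshold.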
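The two retry-time settings in the advanced configuration have documented ranges and an ordering constraint between them. The following is a minimal sketch that checks a pair of values against those stated rules before you enter them in the console; `validate_retry_minutes` is a hypothetical helper, not a DTS API.

```python
from __future__ import annotations


def validate_retry_minutes(connection_retry: int = 720,
                           other_retry: int = 10) -> list[str]:
    """Check retry times (in minutes) against the ranges and ordering stated above."""
    problems = []
    # Connection retry time: 10 to 1440 minutes, default 720.
    if not 10 <= connection_retry <= 1440:
        problems.append("connection retry time must be between 10 and 1440 minutes")
    # Retry time for other issues: 1 to 1440 minutes, default 10.
    if not 1 <= other_retry <= 1440:
        problems.append("other-issue retry time must be between 1 and 1440 minutes")
    # The other-issue retry time must be less than the connection retry time.
    if other_retry >= connection_retry:
        problems.append("other-issue retry time must be less than connection retry time")
    return problems


if __name__ == "__main__":
    print(validate_retry_minutes())          # documented defaults
    print(validate_retry_minutes(30, 30))    # violates the ordering constraint
```

The defaults used in the function signature are the default values quoted in the descriptions, so calling it without arguments checks the out-of-the-box configuration.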

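Data verification (optional)

Data verification monitors differences between source and destination data. It can verify the source and destination databases without stopping service, helping you discover data and schema inconsistencies promptly. Data verification has the following three types:

Verification method | Cost | Description |

Full data verification | Charged | Verifies the data that needs verification in the full data migration task. |

Incremental data verification | Charged | Verifies the data of the incremental data migration task. |

Schema verification | Free | Verifies the schemas of the objects that need verification. |

To use data verification, you can configure it when you configure the migration task, or configure a separate data verification task after the migration task completes. If you do not need data verification, proceed directly to the next steps.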

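Phase 3: Precheck and start

After you complete the Phase 2 configuration, click Next: Save Task Settings and Precheck. DTS checks whether the environment, permissions, and configuration of the source and destination databases meet the migration requirements.

Wait for the precheck to complete.

The precheck pass rate shows 100%: the environment is ready, and you can go on to purchase and start the task.

The precheck fails or shows a warning that cannot be ignored: click View Details next to the failed check item, fix the issue according to the prompt, and run the precheck again.

A warning that can be ignored appears: read the warning carefully, and ignore it only after confirming that it carries no relevant risk (such as data inconsistency).

After the precheck passes completely, click Next: Purchase Instance.

Select the resource group (default resource group by default) and a suitable link specification (the higher the specification, the faster the migration).

Read and select the Data Transmission Service (Pay-as-you-go) Service Terms, click Buy and Start, and then click OK in the confirmation dialog box. The task starts running automatically.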

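Phase 4: Data validation and business cutover

Wait for the DTS migration task to complete

Tasks without incremental data migration: the task ends automatically after full data migration completes, and its status changes to Completed.

Tasks with incremental data migration: the task does not end automatically. Incremental data migration keeps running (the task status is Running). When incremental data migration shows no latency, the source and destination data are consistent and you can validate the data.

Data validation

When the migration task has ended, or when incremental data migration shows no latency (or low latency), you can validate the data in the source and destination databases:

Automatic verification: configure a data verification task in DTS to compare source and destination data automatically.

Manual sampling: verify the data manually along several dimensions (such as comparing table row counts and core business data between the source and destination) to spot-check consistency. Sample code follows:

-- Example 1: run on both the source and destination databases and compare table row counts
SELECT COUNT(*) FROM your_table;
-- Example 2: run on both the source and destination databases and compare a key business metric, such as the total order amount within a time range
SELECT SUM(amount) FROM orders WHERE create_time >= '2024-01-01';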
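Once you have run the row-count query on both sides for several tables, comparing the results by hand becomes error-prone. The following is a small sketch of that comparison step only: `diff_row_counts` is a hypothetical helper, and the two dictionaries are assumed to hold the `COUNT(*)` results you collected from the source and destination databases.

```python
from __future__ import annotations

from typing import Optional


def diff_row_counts(source_counts: dict[str, int],
                    target_counts: dict[str, int]
                    ) -> dict[str, tuple[Optional[int], Optional[int]]]:
    """Return {table: (source_count, target_count)} for every table that differs.

    A table missing on one side is reported with None for that side.
    """
    mismatches = {}
    for table in sorted(set(source_counts) | set(target_counts)):
        source = source_counts.get(table)
        target = target_counts.get(table)
        if source != target:
            mismatches[table] = (source, target)
    return mismatches


if __name__ == "__main__":
    source = {"orders": 10, "users": 5}             # made-up sample counts
    target = {"orders": 10, "users": 4, "logs": 1}  # made-up sample counts
    for table, (s, t) in diff_row_counts(source, target).items():
        print(f"{table}: source={s} destination={t}")
```

Matching row counts are a necessary but not sufficient sign of consistency, which is why the text above also suggests comparing business metrics or using DTS data verification.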

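Business cutover

After data validation is complete, we recommend choosing an off-peak period to change the database connection address in your application services from the self-managed database to the RDS MySQL instance, which completes the cutover. If the DTS migration task includes incremental data migration, release the task promptly after the cutover so that it does not continue to incur charges.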

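Appendix 1: Migration types

Migration type | Description |

Schema migration | Migrates the structure definitions of the objects to be migrated from the source database to the destination database. |

Full data migration | Migrates all existing data of the objects to be migrated from the source database to the destination database. |

Incremental data migration | Building on full data migration, migrates incremental updates from the source database to the destination database. Incremental data migration lets you complete the migration smoothly without taking the self-managed application offline. |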

Appendix 2: SQL operations supported during incremental data migration

Operation type | SQL statements |

DML | INSERT, UPDATE, DELETE |

DDL | |

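FAQ

Q1: The connection test reports the error "JDBC: [conn_error, cause: null, message from server: "Host 'XXX' is not allowed to connect to this MySQL server"]; PING: []; TELNET: []; requestId=[XXX]"

This is a JDBC exception. The server message means that the account is not permitted to connect from the DTS server's host. Check the account, password, and permissions used for the JDBC connection, or test the connection with a privileged account.

Q2: When I create a migration task, why can't I select an RDS instance in the Fuzhou region?

DTS does not currently support instances in the Fuzhou region. Instead, you can move a self-managed MySQL 5.7 or 8.0 database to the cloud from a database backup.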