By Mengshi

Paged queries are common operations in databases. This article introduces how to efficiently perform paging operations in a database (standalone or distributed).

The requirements are as follows:

Taking MySQL as an example, LIMIT M, N is the simplest way to query by page.

SELECT * FROM t1 ORDER BY ID LIMIT 10000,1000;The cost is low when you query the first few pages. However, the cost will increase when you query more subsequent pages. The reason is easy to understand. Common databases are unable to directly locate the position of the data in the 10,000th row. Therefore, for queries with similar syntax, the execution method of the database is to scan from the first row and row by row. After skipping irrelevant rows and completing the scanning of all the 10,000 rows, it returns the result of the next 1,000 rows.

For the preceding query, the database scans 10000 + 1000 = 11000 records. It is far more than the last 10 records returned. The more pages you turn forward, the more data you need to scan, causing higher costs and worse performance.

Note that the preceding description assumes that you use indexes. If you do not use indexes, the cost will be higher.

For distributed databases, the cost of LIMIT M, N is also O (M + N). However, in most cases, the cost is greater than that of standalone databases. For distributed databases, the preceding LIMIT query is equivalent to the following query that each node needs to execute:

SELECT * FROM t1 ORDER BY ID LIMIT 0, 10000 + 1000;These 10000 + 1000 pieces of data need to be passed to a certain node. The final 1,000 pieces of data can be returned after sorting.

Therefore, though the total cost is also O (M + N), compared with the standalone database, the distributed database needs to multiply the cost of network transmission. In some cases, the shards are ordered according to the sort key. It executes only on one node. Therefore, in this scenario, the cost is similar to that of a standalone database.

In a situation with small amounts of data, low concurrency, and low requirements of performance, LIMIT M, N is enough. If the requirements are higher, we need other methods.

In a standalone database, we can use the following method:

SELECT * FROM t1 ORDER BY id LIMIT 1000;

SELECT * FROM t1 WHERE id > ?ORDER BY id LIMIT 1000;Record the last ID of each batch. Take the ID as the starting value of the next batch and write it in the WHERE condition. In most cases, we use an auto-increment column for ID, whose size represents the time when data is inserted.

Because ID is an ordered index, the database directly locates the starting position of scanning according to the value of ID without scanning the previous data first. Therefore, the final cost is only 1,000 pieces of data required by the result. This method is efficient enough to meet the performance requirements.

However, if you apply the method to distributed databases or other business scenarios, the following problems appear:

PolarDB-X generates auto-increment primary keys in two ways:

In addition, we view the mode of the database by using SHOW CREATE DATABASE and view the auto-increment strategy of the table by using SHOW SEQUENCES.

Because PolarDB-X is highly compatible with MySQL AUTO_INCREMENT, for tables using New Sequence, the paging traversal method is the same as that of standalone MySQL to use the following query:

SELECT * FROM t1 ORDER BY id LIMIT 1000;

SELECT * FROM t1 WHERE id > ?ORDER BY id LIMIT 1000;However, the database does not ensure the order of each return without specifying ORDER BY:

Therefore, for such paged queries, whether using standalone or distributed databases, it is recommended to explicitly specify ORDER BY to ensure that the order returned is semantically restricted from the SQL.

For such tables, the order of ID does not represent the time sequence when records are written. In most cases, tables have a time column to mark the writing time of the row, for example:

CREATE TABLE t1(

id bigint PRIMARY KEY AUTO_INCREMENT BY GROUP,

gmt_create timestamp DEFAULT current_timestamp,

INDEX idx_gmt_create_id(gmt_create, id)

) PARTITION BY HASH(id);If we simply apply the preceding method to record the maximum value of gmt_create in each batch and use the maximum value at the start of the next batch, the details are as follows:

## Wrong!! Do not use it!!

SELECT * FROM t1 ORDER BY gmt_create LIMIT 1000;

SELECT * FROM t1 WHERE gmt_create > ?ORDER BY gmt_create LIMIT 1000;

## Wrong!! Do not use it!! Because gmt_create may repeat, data are missed by using “gmt_create > ?” in the second batch, and repeated data appear by using “gmt_create >= ?” in the second batch.

Correct statements:

SELECT * FROM t1 ORDER BY gmt_create, id LIMIT 1000;

SELECT * FROM t1 WHERE (gmt_create = ?AND > ?) OR gmt_create > ?ORDER BY gmt_create, id LIMIT 1000;Or:

SELECT * FROM t1 ORDER BY gmt_create, id LIMIT 1000;

SELECT * FROM t1 WHERE (gmt_create, id) > (?, ?) ORDER BY gmt_create, id LIMIT 1000;The preceding two sample codes can achieve the same effect. In PolarDB-X, the second code is recommended. The first code is suitable for other databases that do not support tuple conditions.

The same applies to the requirements for sorting and paging by other columns.

When the data to be queried do not have a partition key, the preceding paged query is a cross-partition one. This type of query can be used directly without too many performance problems under the condition of low concurrency. In some extreme scenarios, for example:

In this case, we traverse the data by shard.

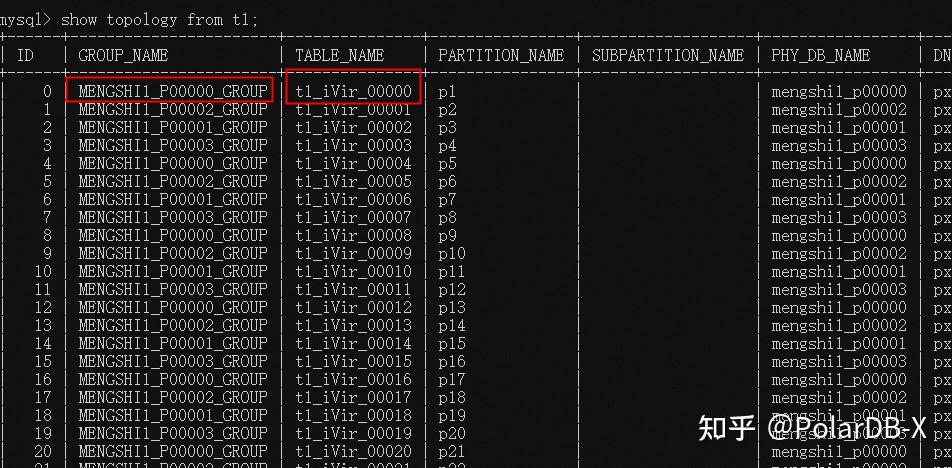

1. Use SHOW TOPOLOGY FROM tbl to obtain the topology information of the table:

2. Use HINT to specify shard information. For example, query the p1 shard:

/*TDDL:node='MENGSHI1_P00000_GROUP'*/SELECT * FROM t1_iVir_00000 ORDER BY id LIMIT 10003. Use the paged query mentioned above to traverse the data of a shard.

4. Set a loop on the outer layer to traverse the data of all shards.

In some cases, paged queries are performed to export data. In this scenario, we use the open source PolarDB-X BatchTool. The tool internally optimizes the export operation of PolarDB-X.

For more details, see Use BatchTool to export and import data

Notes:

1. Sorted columns need a suitable index. For example, if you sort by (gmt_create, id), you need a combined index on (gmt_create, id). The index information should be considered together with other WHERE conditions. For example, for a query:

SELECT * FROM t1 WHERE c1 = xxx ORDER BY gmt_create, id LIMIT 1000;Typically, a combined index on (c1, gmt_create, id) is required.

2. For JAVA applications, we need to set appropriate JDBC values to avoid timeout, including:

3. Set netTimeoutForStreamingResults=0.

4. Set socketTimeout, Unit: ms.

5. Set Statement object setFetchSize(Integer.MIN_VALUE) to avoid memory exhaustion.

6. Keep autocommit=true to avoid long transactions caused by paged queries.

package com.taobao.tddl.sample;

import java.sql.Connection;

import java.sql.PreparedStatement;

import java.sql.ResultSet;

public class PageSample {

public static void main(String[] args) throws Exception {

int index = 0;

boolean first = true;

Object maxGmtCreate = null;

long maxId = -1;

while (true) {

Connection conn = null;

try {

conn = ds.DriverManager.getConnection("jdbc:mysql://xxxxxxx:3306/dbname","user","password")

PreparedStatement ps = null;

if (first) {

ps = conn.prepareStatement("SELECT * FROM t1 order by gmt_create,id limit 99");

first = false;

} else {

ps = conn.prepareStatement(

"SELECT * FROM t1 where gmt_create >= ? and (gmt_create > ? or id > ?) order by gmt_create,id limit 99");

ps.setObject(1, maxGmtCreate);

ps.setObject(2, maxGmtCreate);

ps.setLong(3, maxId);

}

ResultSet rs = ps.executeQuery();

maxGmtCreate = null;

maxId = -1;

while (rs.next()) {

System.out.println((++index) + " " + rs.getInt("id") + " " + rs.getString("gmt_Create"));

maxGmtCreate = rs.getObject("gmt_create");

maxId = rs.getLong("id");

}

if (maxId == -1) {

break;

}

} finally {

conn.close();

}

}

}

}PolarDB-X Best Practice Series (1): How to Design a User Table

Discover the Latest in ApsaraDB for MongoDB 7.0 and See What Difference It Can Make for You

ApsaraDB - December 12, 2025

ApsaraDB - April 16, 2025

ApsaraDB - April 10, 2024

ApsaraDB - April 20, 2023

ApsaraDB - April 16, 2025

ApsaraDB - April 20, 2023

Best Practices

Best Practices

Follow our step-by-step best practices guides to build your own business case.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More ApsaraDB RDS for SQL Server

ApsaraDB RDS for SQL Server

An on-demand database hosting service for SQL Server with automated monitoring, backup and disaster recovery capabilities

Learn More Database for FinTech Solution

Database for FinTech Solution

Leverage cloud-native database solutions dedicated for FinTech.

Learn MoreMore Posts by ApsaraDB