When scheduling large-scale jobs in Flink 1.12, a lot of time is required to initialize jobs and deploy tasks. The scheduler also requires a large amount of heap memory to store the execution topology and host temporary deployment descriptors. For example, Flink's JobManager would require 30 GiB of heap memory and more than four minutes to deploy all of the tasks for a job with a topology that contains two vertices connected with an all-to-all edge and parallelism of 10k. This means there are 10k source tasks and 10k sink tasks, and every source task is connected to all sink tasks.

Furthermore, task deployment may block the JobManager's main thread for a long time, during which the JobManager cannot respond to any other requests from TaskManagers. This could lead to heartbeat timeouts that trigger a failover. In the worst case, this renders the Flink cluster unusable because it cannot deploy the job.

We've implemented several optimizations in Flink 1.13 and 1.14 to improve the performance of the scheduler for large-scale jobs:

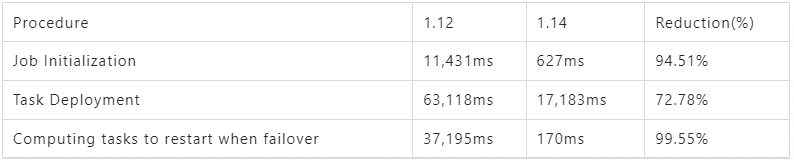

We conducted several experiments to estimate the effect of our optimizations, comparing the performance of Flink 1.12 (before the optimizations) with Flink 1.14 (after the optimizations). The job in our experiments contains two vertices connected with an all-to-all edge, and the parallelism of both vertices is 10K. We set the configuration blob.offload.minsize to 100 KiB (down from the default value of 1 MiB) so that the temporary deployment descriptors are distributed via the blob server. With this setting, blobs larger than the configured value are distributed via the blob server, and the size of deployment descriptors in our test job is about 270 KiB. The results of our experiments are illustrated below:

Table 1 - The comparison of time cost between Flink 1.12 and 1.14

In addition to quicker speeds, memory usage is reduced significantly. It requires 30 GiB of heap memory for a JobManager to deploy the test job and keep it running stably with Flink 1.12, while the minimum heap memory required by the JobManager with Flink 1.14 is only 2 GiB.

There are also fewer occurrences of long-term garbage collection. When running the test job with Flink 1.12, a garbage collection that lasts more than ten seconds occurs during job initialization and task deployment. With Flink 1.14, since there is no long-term garbage collection, there is also a decreased risk of heartbeat timeouts, which creates better cluster stability.

In our experiment, it took more than four minutes for the large-scale job with Flink 1.12 to transition to running (excluding the time spent on allocating resources). With Flink 1.14, it took no more than 30 seconds (again excluding the time spent on allocating resources). The time cost is reduced by 87%. Thus, users who run large-scale jobs in production and want better scheduling performance should consider upgrading Flink to 1.14.

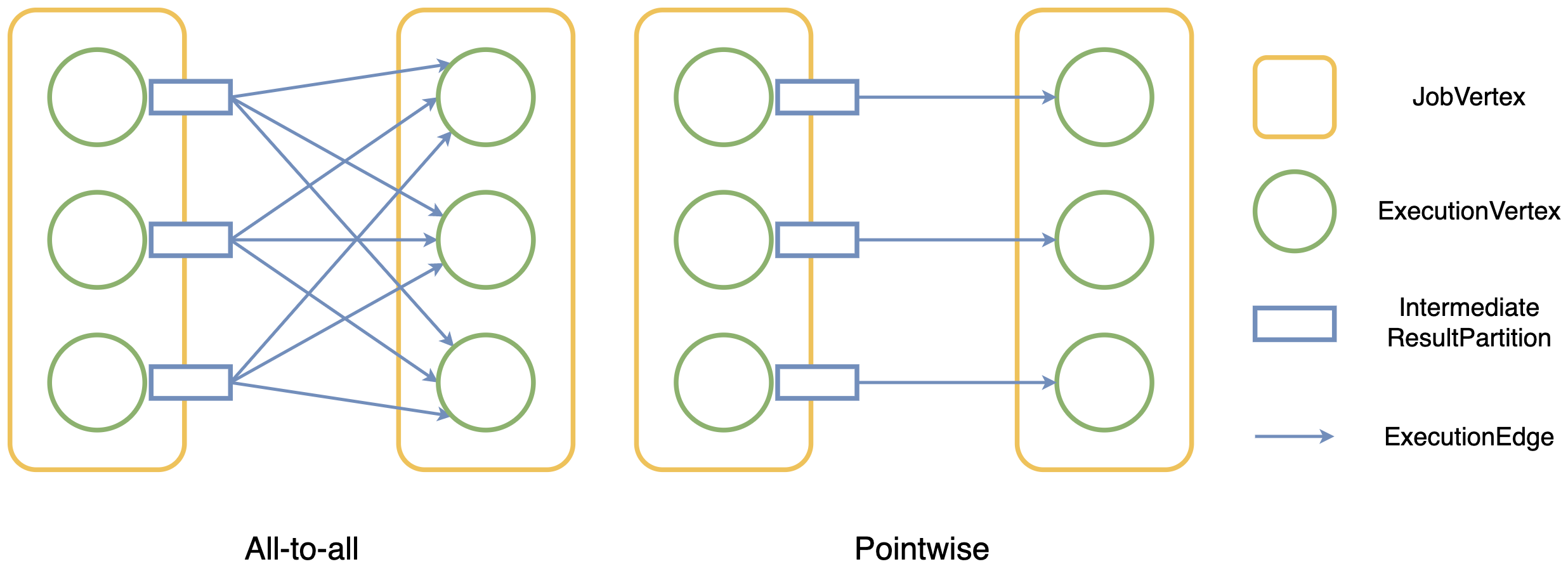

A distribution pattern describes how consumer tasks are connected to producer tasks. Currently, there are two distribution patterns in Flink: pointwise and all-to-all. When the distribution pattern is pointwise between two vertices, the computational complexity of traversing all edges is O(n). When the distribution pattern is all-to-all, the complexity of traversing all edges is O(n²), which means complexity increases rapidly when the scale goes up.

Fig. 1 - Two distribution patterns in Flink
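To make the growth rates concrete, here is a minimal sketch that counts connections for the two patterns. The function name `count_edges` is hypothetical, and the pointwise case assumes the simplest one-to-one wiring between vertices of equal parallelism.

```python
def count_edges(parallelism: int, pattern: str) -> int:
    """Count producer-to-consumer connections between two vertices
    that both run with the given parallelism."""
    if pattern == "pointwise":
        # Simplest pointwise case: producer i feeds only consumer i.
        return parallelism
    if pattern == "all-to-all":
        # Every producer feeds every consumer.
        return parallelism * parallelism
    raise ValueError(f"unknown pattern: {pattern}")


for n in (10, 1_000, 10_000):
    print(n, count_edges(n, "pointwise"), count_edges(n, "all-to-all"))
```

At a parallelism of 10K, the all-to-all case yields the 100M connections referred to in the text.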

In Flink 1.12, the ExecutionEdge class is used to store the information of connections between tasks. This means that for the all-to-all distribution pattern, there would be O(n²) ExecutionEdges, which would take up a lot of memory for large-scale jobs. It would take more than 4 GiB of memory to store 100M ExecutionEdges for two JobVertices connected with an all-to-all edge and parallelism of 10K. Since there can be multiple all-to-all connections between vertices in production jobs, the amount of memory required would increase rapidly.

As we can see in Fig. 1, all IntermediateResultPartitions produced by upstream ExecutionVertices are isomorphic for two JobVertices connected with the all-to-all distribution pattern, which means the downstream ExecutionVertices they connect to are exactly the same. The downstream ExecutionVertices belonging to the same JobVertex are also isomorphic, as the upstream IntermediateResultPartitions they connect to are also the same. Since every JobEdge has exactly one distribution type, we can divide vertices and result partitions into groups according to the distribution type of the JobEdge.

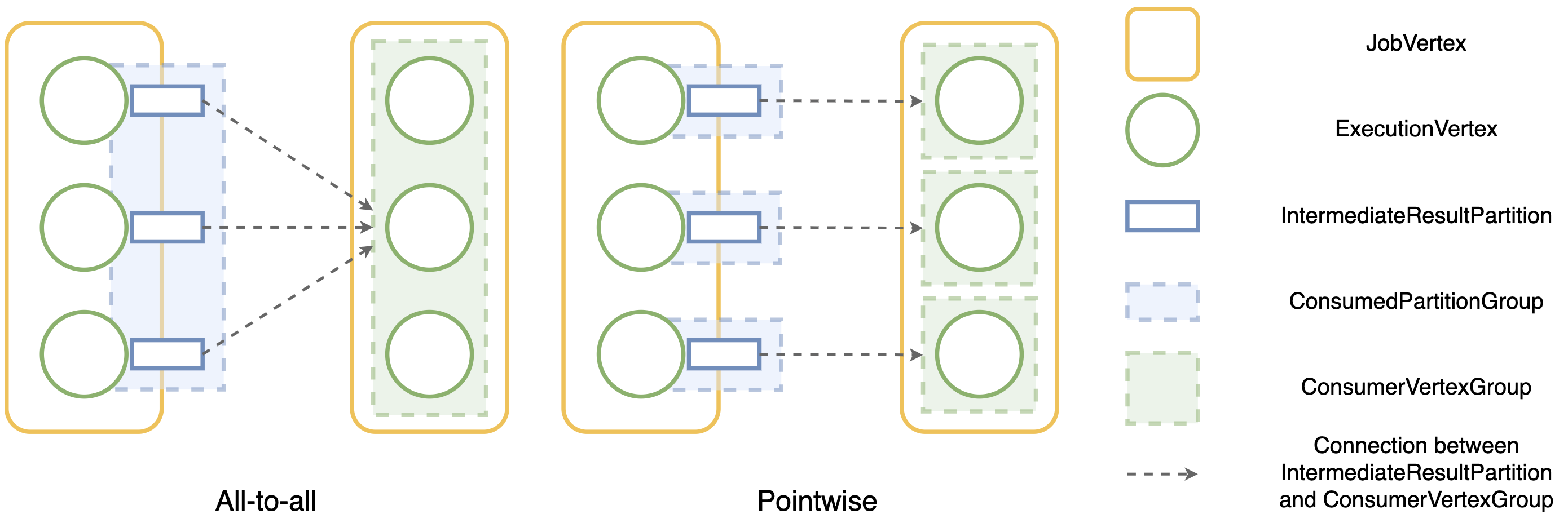

For the all-to-all distribution pattern, since all downstream ExecutionVertices belonging to the same JobVertex are isomorphic and belong to a single group, all the result partitions they consume are connected to this group. This group is called ConsumerVertexGroup. Inversely, all the upstream result partitions are grouped into a single group, and all the consumer vertices are connected to this group. This group is called ConsumedPartitionGroup.

The basic idea of our optimizations is to put all the vertices that consume the same result partitions into one ConsumerVertexGroup and put all the result partitions with the same consumer vertices into one ConsumedPartitionGroup.

Fig. 2 - How partitions and vertices are grouped w.r.t. distribution patterns
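The grouping idea can be sketched as follows. This is an illustration only, not Flink's implementation: the class names come from the text, but their fields, the `connect_all_to_all` helper, and the string IDs are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ConsumedPartitionGroup:
    # Result partitions that share the same set of consumer vertices.
    partition_ids: Tuple[str, ...]


@dataclass(frozen=True)
class ConsumerVertexGroup:
    # Vertices that consume the same set of result partitions.
    vertex_ids: Tuple[str, ...]


def connect_all_to_all(n_producers: int, n_consumers: int):
    """Wire an all-to-all edge using one shared group per side."""
    partitions = ConsumedPartitionGroup(tuple(f"p{i}" for i in range(n_producers)))
    consumers = ConsumerVertexGroup(tuple(f"v{j}" for j in range(n_consumers)))
    # Each partition points at the single shared consumer group ...
    partition_to_consumers: Dict[str, ConsumerVertexGroup] = {
        p: consumers for p in partitions.partition_ids
    }
    # ... and each vertex points at the single shared partition group.
    vertex_to_partitions: Dict[str, ConsumedPartitionGroup] = {
        v: partitions for v in consumers.vertex_ids
    }
    return partition_to_consumers, vertex_to_partitions


p2c, v2p = connect_all_to_all(1_000, 1_000)
stored_references = len(p2c) + len(v2p)
distinct_groups = len({id(g) for g in p2c.values()}) + len({id(g) for g in v2p.values()})
print(stored_references, distinct_groups)
```

With 1,000 producers and 1,000 consumers, the sketch stores 2,000 references to 2 group objects, where a per-edge representation would need 1,000,000 edge objects.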

When scheduling tasks, Flink needs to iterate over all the connections between result partitions and consumer vertices. In the past, since there were O(n²) edges in total, the overall complexity of the iteration was O(n²). Now ExecutionEdge is replaced with ConsumerVertexGroup and ConsumedPartitionGroup. As all the isomorphic result partitions are connected to the same downstream ConsumerVertexGroup, when the scheduler iterates over all the connections, it just needs to iterate over the group once. The computational complexity decreases from O(n²) to O(n).

For the pointwise distribution pattern, one ConsumedPartitionGroup is connected to one ConsumerVertexGroup point-to-point. The number of groups is the same as the number of ExecutionEdges. Thus, the computational complexity of iterating over the groups is still O(n).

For the example job we mentioned above, replacing ExecutionEdges with the groups can reduce the memory usage of ExecutionGraph from more than 4 GiB to about 12 MiB. Based on the concept of groups, we further optimized several procedures, including job initialization, scheduling tasks, failover, and partition releasing. These procedures are all involved with traversing all consumer vertices for all the partitions. After the optimization, their overall computational complexity decreases from O(n²) to O(n).
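As a rough back-of-the-envelope check of these figures, the arithmetic below treats "more than 4 GiB" as a lower bound and "about 12 MiB" as an approximation, so the results are only indicative.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2

parallelism = 10_000
edge_count = parallelism * parallelism   # all-to-all: 100M ExecutionEdges

edges_memory = 4 * GIB     # lower bound taken from the text
groups_memory = 12 * MIB   # approximate figure taken from the text

bytes_per_edge = edges_memory / edge_count
reduction_factor = edges_memory / groups_memory

print(f"{edge_count:,} edges, at least {bytes_per_edge:.1f} bytes each")
print(f"memory reduced by a factor of roughly {reduction_factor:.0f}")
```

In other words, each ExecutionEdge accounts for at least about 43 bytes, and the group-based representation is smaller by a factor of more than 300 for this job.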

In Flink 1.12, it takes a long time to deploy tasks for large-scale jobs if they contain all-to-all edges. Furthermore, a heartbeat timeout may happen during or after task deployment, which makes the cluster unstable.

Currently, task deployment includes the following steps:

A TaskDeploymentDescriptor (TDD) contains all the information required by TaskManagers to create a task. At the beginning of task deployment, a JobManager creates the TDDs for all tasks. Since this happens in the main thread, the JobManager cannot respond to any other requests. For large-scale jobs, the main thread may get blocked for a long time, heartbeat timeouts may happen, and a failover would be triggered.

A JobManager can become a bottleneck during task deployment since all descriptors are transmitted from JobManager to all TaskManagers. For large-scale jobs, these temporary descriptors would require a lot of heap memory and cause frequent long-term garbage collection pauses.

Thus, we need to speed up the creation of the TDDs. Furthermore, if the size of descriptors can be reduced, they will be transmitted faster, which leads to faster task deployments.

ShuffleDescriptors are used to describe the information of result partitions that a task consumes and can be the largest part of a TaskDeploymentDescriptor. For an all-to-all edge, when the parallelisms of both upstream and downstream vertices are n, the number of ShuffleDescriptors for each downstream vertex is n since they are connected to n upstream vertices. Thus, the total count of the ShuffleDescriptors for the vertices is n².

However, the ShuffleDescriptors for the downstream vertices are all the same since they all consume the same upstream result partitions. Therefore, Flink doesn't need to create ShuffleDescriptors for each downstream vertex individually. Instead, it can create them once and cache them to be reused. This will decrease the overall complexity of creating TaskDeploymentDescriptors for tasks from O(n²) to O(n).
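The caching idea can be illustrated with a small sketch. The `ShuffleDescriptorCache` class, its `builds` counter, and the string placeholders standing in for real descriptors are all hypothetical; Flink's actual data structures are not shown in this article.

```python
from typing import Dict, Tuple


class ShuffleDescriptorCache:
    """Builds the descriptors for a group of partitions once and reuses them."""

    def __init__(self) -> None:
        self._cache: Dict[Tuple[str, ...], Tuple[str, ...]] = {}
        self.builds = 0  # how many times descriptors were actually created

    def get(self, partition_group: Tuple[str, ...]) -> Tuple[str, ...]:
        if partition_group not in self._cache:
            self.builds += 1
            # Placeholder for the real, more expensive descriptor creation.
            self._cache[partition_group] = tuple(
                f"descriptor-for-{p}" for p in partition_group
            )
        return self._cache[partition_group]


n = 1_000
group = tuple(f"p{i}" for i in range(n))
cache = ShuffleDescriptorCache()

# Every downstream task consumes the same group, so all of them share one result.
tdds = [{"task": f"v{j}", "inputs": cache.get(group)} for j in range(n)]

descriptors_created = cache.builds * n
print(descriptors_created, "created instead of", n * n)
```

All 1,000 descriptors are created a single time and shared by reference, instead of being rebuilt for each of the 1,000 downstream tasks.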

The cached ShuffleDescriptors can be compressed to decrease the size of RPC messages and reduce the transmission of replicated data over the network. For the example job we mentioned above, if the parallelisms of vertices are both 10k, each downstream vertex has 10k ShuffleDescriptors. After compression, the size of the serialized value would be reduced by 72%.
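The effect of compressing a serialized list of similar descriptors can be seen in the sketch below. It uses Python's `pickle` and `zlib` as stand-ins, since the article does not say which serializer or codec Flink uses, and the descriptor fields (`partition`, `host`, `port`) are invented for the example. The ratio it prints is therefore not comparable to the 72% reported above.

```python
import pickle
import zlib

# Hypothetical descriptors: 10k entries with highly repetitive content.
descriptors = [
    {
        "partition": f"result-partition-{i:05d}",
        "host": f"tm-{i % 100}.example.internal",
        "port": 6121,
    }
    for i in range(10_000)
]

serialized = pickle.dumps(descriptors)
compressed = zlib.compress(serialized)

# The receiver reverses both steps to get the descriptors back.
restored = pickle.loads(zlib.decompress(compressed))

saving = 1 - len(compressed) / len(serialized)
print(f"{len(serialized)} bytes -> {len(compressed)} bytes ({saving:.0%} smaller)")
```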

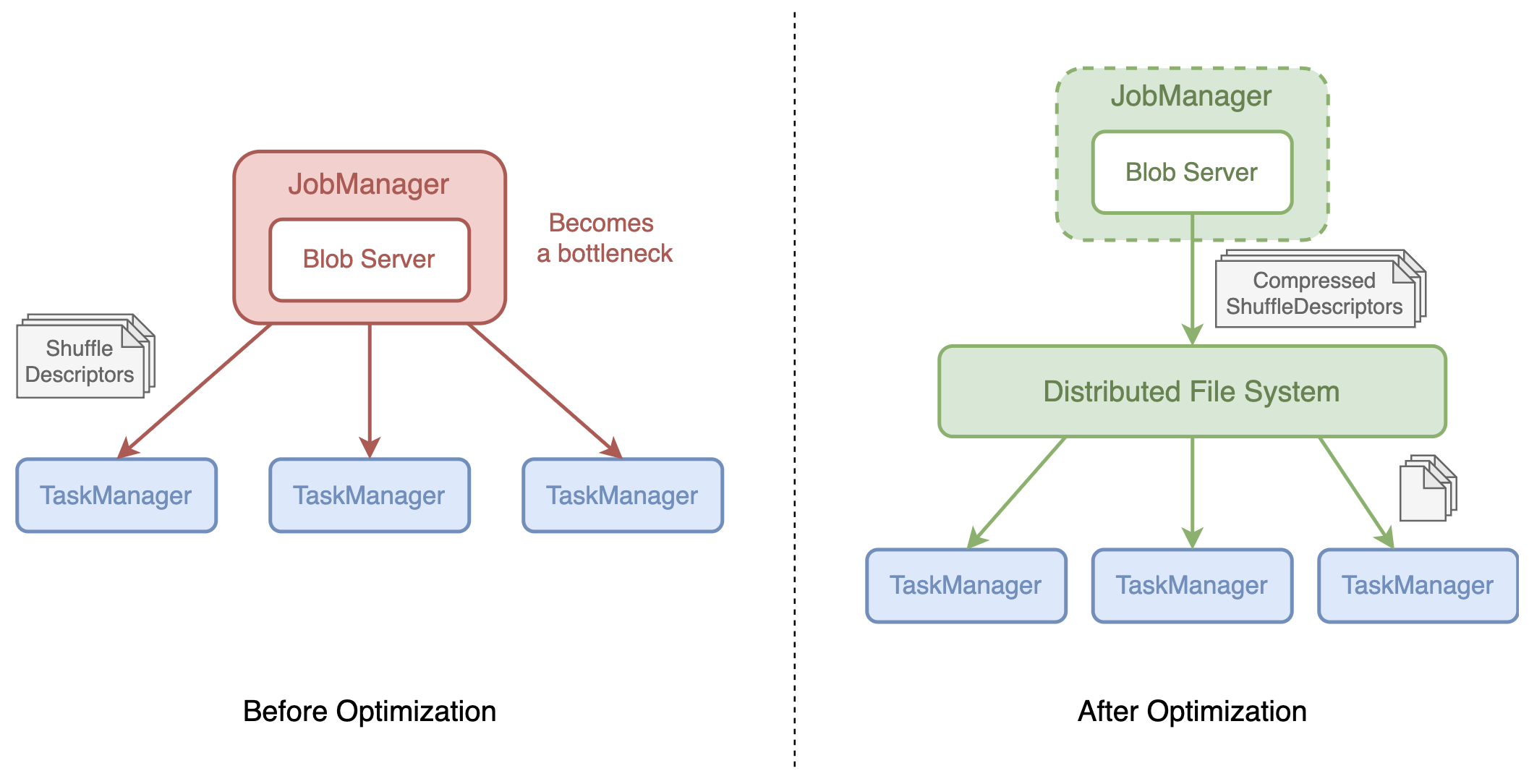

A binary large object (blob) is a collection of binary data used to store large files. Flink hosts a blob server to transport large-sized data between the JobManager and TaskManagers. When a JobManager decides to transmit a large file to TaskManagers, it stores the file in the blob server (which also uploads the file to the distributed file system, or DFS) and gets a token representing the blob, called the blob key. It then transmits the blob key instead of the blob file to TaskManagers. When TaskManagers get the blob key, they retrieve the file from the DFS. The blobs are stored in the blob cache on TaskManagers, so they only need to retrieve the file once.

During task deployment, the JobManager is responsible for distributing the ShuffleDescriptors to TaskManagers via RPC messages. The messages will be garbage collected once they are sent. However, if the JobManager cannot send the messages as fast as they are created, these messages will take up a lot of space in heap memory and become a heavy burden for the garbage collector. There will be more long-term garbage collections that stop the world and slow down the task deployment.

The blob server can be used to distribute large ShuffleDescriptors to solve this problem. The JobManager sends ShuffleDescriptors to the blob server, which stores ShuffleDescriptors in the DFS. TaskManagers request ShuffleDescriptors from the DFS once they begin to process TaskDeploymentDescriptors. With this change, the JobManager doesn't need to keep all the copies of ShuffleDescriptors in heap memory until they are sent. Moreover, the frequency of garbage collections for large-scale jobs is reduced significantly. Task deployment is also faster because the JobManager is no longer a bottleneck: the DFS provides multiple distributed nodes from which TaskManagers can download the ShuffleDescriptors.

Fig. 3 - How ShuffleDescriptors are distributed
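The offloading decision described above can be sketched as follows. The `BlobStore` class is an in-memory stand-in for the blob server plus DFS, the SHA-256 digest used as a blob key is an assumption, and `prepare_for_rpc` and `resolve_on_task_manager` are hypothetical helper names. Only the threshold semantics (payloads larger than blob.offload.minsize are offloaded) come from the text.

```python
import hashlib
from typing import Dict, Tuple

OFFLOAD_MIN_SIZE = 100 * 1024  # 100 KiB, the value used in the experiments


class BlobStore:
    """In-memory stand-in for the blob server and the DFS behind it."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        key = hashlib.sha256(data).hexdigest()  # hypothetical blob key
        self._blobs[key] = data
        return key

    def get(self, key: str) -> bytes:
        return self._blobs[key]


def prepare_for_rpc(serialized: bytes, store: BlobStore,
                    min_size: int = OFFLOAD_MIN_SIZE) -> Tuple[str, object]:
    """JobManager side: ship large payloads as a blob key, small ones inline."""
    if len(serialized) > min_size:
        return ("blob_key", store.put(serialized))
    return ("inline", serialized)


def resolve_on_task_manager(message: Tuple[str, object], store: BlobStore) -> bytes:
    """TaskManager side: fetch the payload if only a key was sent."""
    kind, payload = message
    return store.get(payload) if kind == "blob_key" else payload


store = BlobStore()
descriptors = b"x" * (270 * 1024)  # about 270 KiB, as in the test job

offloaded = prepare_for_rpc(descriptors, store)
inlined = prepare_for_rpc(descriptors, store, min_size=1024 * 1024)  # 1 MiB default
print(offloaded[0], inlined[0])
```

With the 100 KiB threshold the 270 KiB payload travels as a short key, while under the 1 MiB default it would still be sent inline, which is why the experiments lowered the setting.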

The cache will be cleared when the related partitions are no longer valid, and a size limit is added for ShuffleDescriptors in the blob cache on TaskManagers to avoid running out of space on the local disk. If the overall size exceeds the limit, the least recently used cached value will be removed. This ensures that the local disks on the JobManager and TaskManagers won't be filled up with ShuffleDescriptors, especially in session mode.
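A size-limited cache with least-recently-used eviction can be sketched with an `OrderedDict`. The class name `SizeLimitedLruCache` and its byte-based accounting are assumptions for illustration, not the blob cache's actual implementation.

```python
from collections import OrderedDict
from typing import Optional


class SizeLimitedLruCache:
    """Evicts least recently used entries once the total size exceeds a limit."""

    def __init__(self, max_bytes: int) -> None:
        self.max_bytes = max_bytes
        self.total_bytes = 0
        self._entries: "OrderedDict[str, bytes]" = OrderedDict()

    def __contains__(self, key: str) -> bool:
        return key in self._entries

    def get(self, key: str) -> Optional[bytes]:
        value = self._entries.get(key)
        if value is not None:
            self._entries.move_to_end(key)  # mark as most recently used
        return value

    def put(self, key: str, value: bytes) -> None:
        if key in self._entries:
            self.total_bytes -= len(self._entries.pop(key))
        self._entries[key] = value
        self.total_bytes += len(value)
        # Drop the oldest entries until the cache fits within the limit again.
        while self.total_bytes > self.max_bytes and self._entries:
            _, evicted = self._entries.popitem(last=False)
            self.total_bytes -= len(evicted)


cache = SizeLimitedLruCache(max_bytes=300)
for key in ("a", "b", "c"):
    cache.put(key, b"x" * 100)

cache.get("a")              # "a" becomes the most recently used entry
cache.put("d", b"x" * 100)  # exceeds the limit, so "b" is evicted
print("b" in cache, "a" in cache, cache.total_bytes)
```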

In Flink, there are two types of data exchanges: pipelined and blocking. When using blocking data exchanges, result partitions are fully produced before they are consumed by the downstream vertices. The produced results are persisted and can be consumed multiple times. When using pipelined data exchanges, result partitions are produced and consumed concurrently. The produced results are not persisted and can only be consumed once.

Since the pipelined data stream is produced and consumed simultaneously, Flink needs to make sure that the vertices connected via pipelined data exchanges execute at the same time. These vertices form a pipelined region. The pipelined region is the basic unit of scheduling and failover by default. During scheduling, all vertices in a pipelined region will be scheduled together, and all pipelined regions in the graph will be scheduled one by one topologically.
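A simplified way to picture this is to merge vertices joined by pipelined edges with a union-find structure and then order the resulting regions topologically along the blocking edges. The sketch below assumes an acyclic region graph, and the `build_regions` helper and its edge tuples are hypothetical. It requires Python 3.9+ for `graphlib`.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set, Tuple

Edge = Tuple[str, str, str]  # (producer, consumer, "pipelined" or "blocking")


def build_regions(vertices: List[str], edges: List[Edge]) -> List[List[str]]:
    """Return pipelined regions in an order in which they can be scheduled."""
    parent = {v: v for v in vertices}

    def find(v: str) -> str:
        while parent[v] != v:
            parent[v] = parent[parent[v]]  # path halving
            v = parent[v]
        return v

    # Vertices connected by a pipelined exchange must run together.
    for producer, consumer, exchange in edges:
        if exchange == "pipelined":
            parent[find(producer)] = find(consumer)

    regions: Dict[str, List[str]] = {}
    for v in vertices:
        regions.setdefault(find(v), []).append(v)

    # A region depends on every region that feeds it through a blocking edge.
    dependencies: Dict[str, Set[str]] = {root: set() for root in regions}
    for producer, consumer, exchange in edges:
        if exchange == "blocking" and find(producer) != find(consumer):
            dependencies[find(consumer)].add(find(producer))

    order = TopologicalSorter(dependencies).static_order()
    return [regions[root] for root in order]


vertices = ["A", "B", "C", "D"]
edges = [("A", "B", "pipelined"), ("B", "C", "blocking"), ("C", "D", "pipelined")]
schedule = build_regions(vertices, edges)
print(schedule)
```

Here A and B form one region, C and D form another, and the first region is scheduled before the second because of the blocking edge between B and C.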

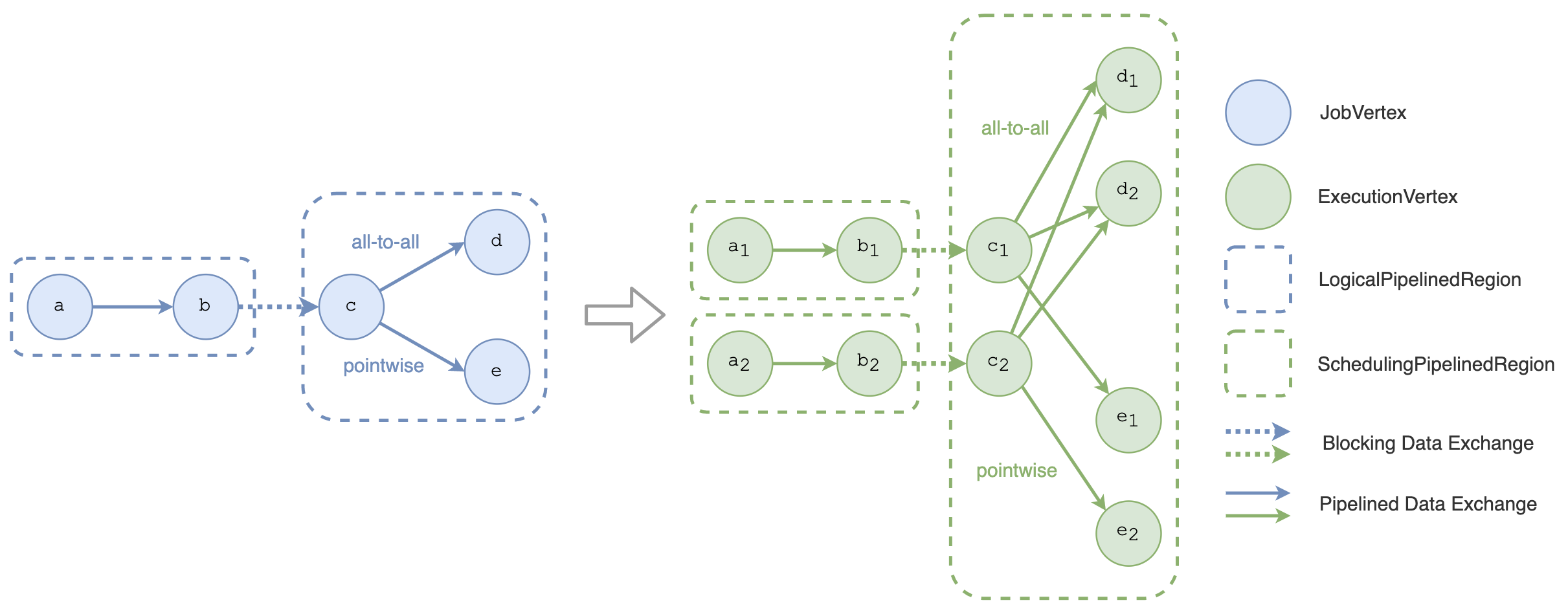

Fig. 4 - The LogicalPipelinedRegion and the SchedulingPipelinedRegion

Currently, there are two types of pipelined regions in the scheduler: LogicalPipelinedRegion and SchedulingPipelinedRegion. The LogicalPipelinedRegion denotes the pipelined regions on the logical level. It consists of JobVertices and forms the JobGraph. The SchedulingPipelinedRegion denotes the pipelined regions on the execution level. It consists of ExecutionVertices and forms the ExecutionGraph. As Fig. 4 shows, ExecutionVertices are derived from a JobVertex, and SchedulingPipelinedRegions are derived from a LogicalPipelinedRegion.

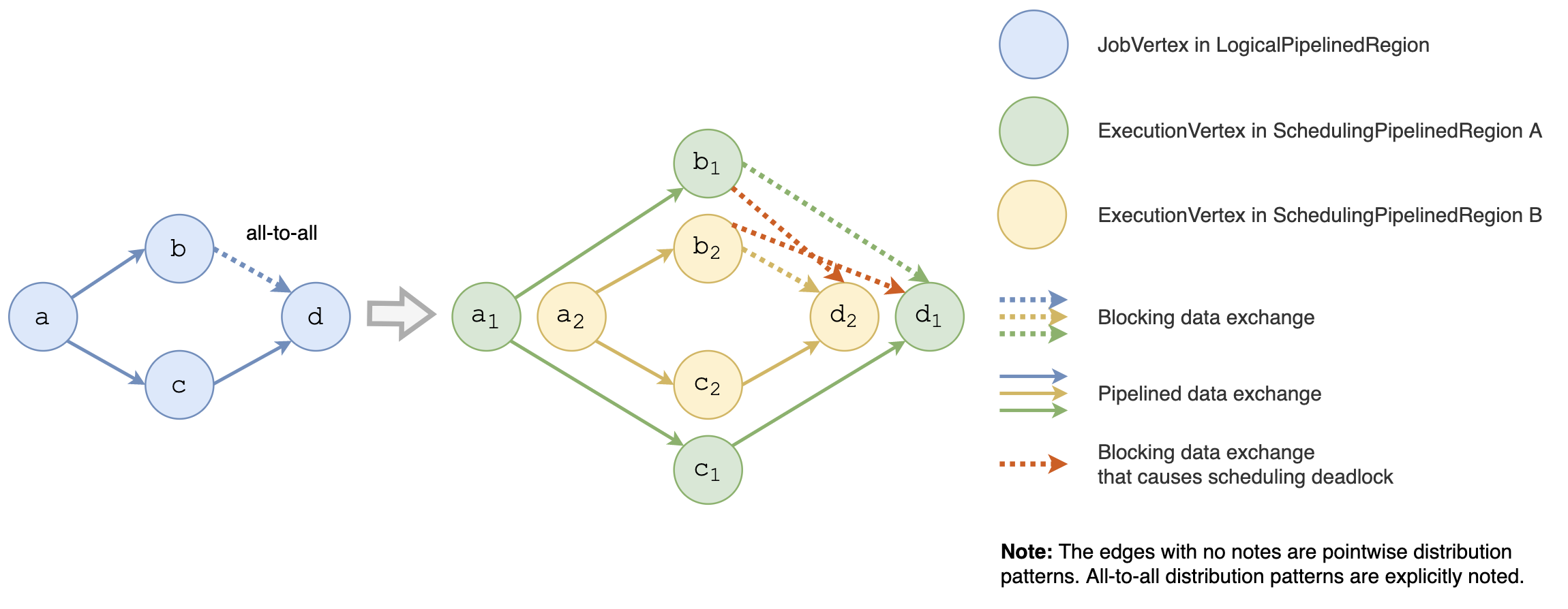

A problem arises during the construction of pipelined regions. There may be cyclic dependencies between pipelined regions. A pipelined region can only be scheduled if all its dependencies have finished. However, if there are two pipelined regions with cyclic dependencies between each other, there will be a scheduling deadlock. They are both waiting for the other one to be scheduled first, and neither of them can be scheduled. Therefore, Tarjan's strongly connected components algorithm is adopted to discover the cyclic dependencies between regions and merge them into one pipelined region. It will traverse all the edges in the topology. For the all-to-all distribution pattern, the number of edges is O(n²). Thus, the computational complexity of this algorithm is O(n²), and it slows down the initialization of the scheduler significantly.

Fig. 5 - The topology with scheduling deadlock
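For reference, a compact recursive version of Tarjan's strongly connected components algorithm is sketched below, applied to a small hypothetical graph of regions. This is a textbook formulation rather than Flink's code, and the recursion makes it suitable only for small graphs. Each component with more than one member corresponds to a set of regions that would be merged.

```python
from typing import Dict, List


def strongly_connected_components(graph: Dict[str, List[str]]) -> List[List[str]]:
    """Tarjan's algorithm; graph maps each node to its successors."""
    index: Dict[str, int] = {}
    lowlink: Dict[str, int] = {}
    stack: List[str] = []
    on_stack = set()
    components: List[List[str]] = []
    counter = 0

    def visit(node: str) -> None:
        nonlocal counter
        index[node] = lowlink[node] = counter
        counter += 1
        stack.append(node)
        on_stack.add(node)

        for successor in graph.get(node, ()):
            if successor not in index:
                visit(successor)
                lowlink[node] = min(lowlink[node], lowlink[successor])
            elif successor in on_stack:
                lowlink[node] = min(lowlink[node], index[successor])

        # The node is the root of a component: pop its members off the stack.
        if lowlink[node] == index[node]:
            component = []
            while True:
                member = stack.pop()
                on_stack.discard(member)
                component.append(member)
                if member == node:
                    break
            components.append(sorted(component))

    for node in graph:
        if node not in index:
            visit(node)
    return components


# r1 and r2 depend on each other; r3 only depends on r2.
region_graph = {"r1": ["r2"], "r2": ["r1", "r3"], "r3": []}
components = strongly_connected_components(region_graph)
merged = [c for c in components if len(c) > 1]
print(merged)
```

The algorithm visits every edge once, which is why its cost follows the number of edges in the topology.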

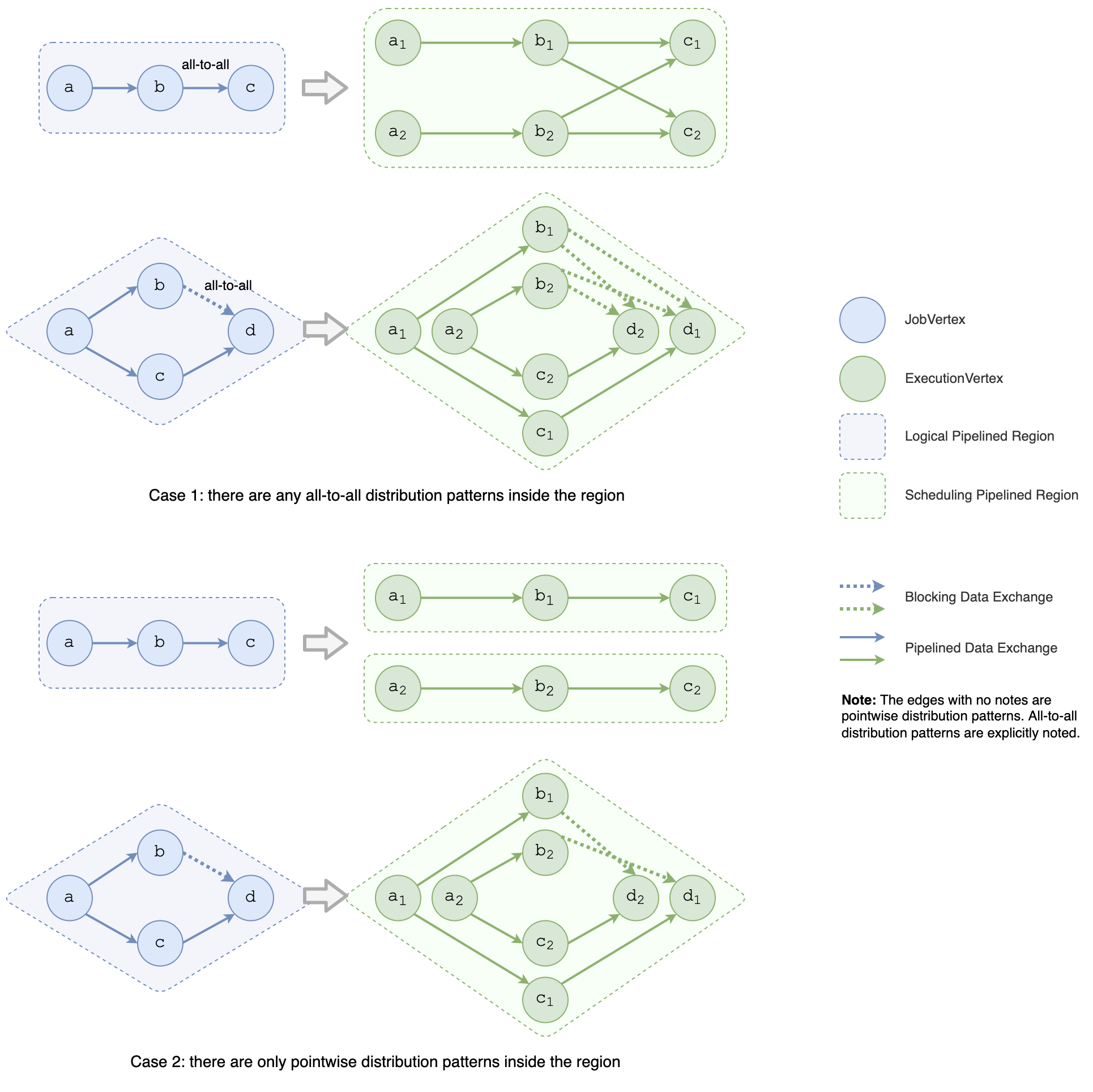

The relevance between the logical topology and the scheduling topology can be leveraged to speed up the construction of pipelined regions. Since a SchedulingPipelinedRegion is derived from one LogicalPipelinedRegion, Flink traverses all LogicalPipelinedRegions and converts them into SchedulingPipelinedRegions one by one. The conversion varies based on the distribution patterns of edges that connect vertices in the LogicalPipelinedRegion.

If there are any all-to-all distribution patterns inside the region, the entire region can be converted into one SchedulingPipelinedRegion directly. For an all-to-all edge with a pipelined data exchange, all the regions connected to this edge must execute simultaneously, which means they are merged into one region. For an all-to-all edge with a blocking data exchange, cyclic dependencies are introduced, as Fig. 5 shows, so all the regions it connects must be merged into one region to avoid a scheduling deadlock, as Fig. 6 shows. Since there's no need to use Tarjan's algorithm in this case, the computational complexity is O(n).

If there are only pointwise distribution patterns inside a region, Tarjan's strongly connected components algorithm is still used to ensure no cyclic dependencies. Since there are only pointwise distribution patterns, the number of edges in the topology is O(n), and the computational complexity of the algorithm will be O(n).

Fig. 6 - How to convert a LogicalPipelinedRegion to SchedulingPipelinedRegions

After the optimization, the overall computational complexity of building pipelined regions decreases from O(n²) to O(n). In our experiments, for the job that contains two vertices connected with a blocking all-to-all edge, when their parallelisms are both 10K, the time of building pipelined regions decreases by 99%, from 8,257 ms to 120 ms.

All in all, we've made several optimizations to improve the scheduler's performance for large-scale jobs in Flink 1.13 and 1.14. The optimizations cover procedures such as job initialization, scheduling, task deployment, and failover.
