An SLS Catalog automatically parses logs in a Simple Log Service (SLS) Logstore to infer the table schema. After you configure an SLS Catalog, you can directly access the SLS Logstore in your Flink jobs without declaring the SLS table schema in Flink SQL. This enables you to retrieve field information seamlessly. This topic describes how to create, view, use, and delete an SLS Catalog.

Context

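This topic covers the following aspects of managing SLS Catalogs: limits, usage notes, how to create, view, use, and delete a catalog, and details of the tables that a catalog provides.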

Limits

All fields are parsed as the String type. If you require other data types, use Flink SQL to convert them.

Only Flink jobs that use Ververica Runtime (VVR) 6.0.7 or later support SLS Catalogs.

You cannot modify an existing SLS Catalog using DDL statements.

You can only query data tables. Creating, modifying, or deleting databases and tables is not supported.

Tables provided by an SLS Catalog can be used as source tables and sink tables in Flink SQL jobs. They cannot be used as lookup dimension tables.
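Because every field is exposed as String, as noted in the limits above, type conversion is usually done with explicit casts in the query. The following is a minimal sketch rather than a prescribed pattern. The catalog name `my_sls_catalog`, the project `my_project`, the Logstore `access_log`, and the fields `request_uri`, `status`, and `latency_ms` are all hypothetical.

```sql
-- Hypothetical catalog, project, Logstore, and field names.
SELECT
  `request_uri`,
  -- Strict cast: may fail at runtime on values that cannot be parsed,
  -- depending on the cast behavior configured for the job.
  CAST(`status` AS INT) AS status_code,
  -- Lenient cast: yields NULL when the value cannot be parsed.
  TRY_CAST(`latency_ms` AS BIGINT) AS latency_ms
FROM `my_sls_catalog`.`my_project`.`access_log`;
```

`TRY_CAST` is often the safer choice for log data, because a single malformed value then produces a NULL instead of stopping the job.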

Notes

An SLS Catalog generates a table schema by parsing sample data. For a Logstore with inconsistent data formats, the catalog retains all columns by default to return the widest possible schema. If the data format of the Logstore changes, the table schema obtained by the SLS Catalog at different times can be inconsistent. If the inferred schema differs before and after a job restart, job execution issues can occur.

For example, a Flink SQL job references a table in an SLS Catalog. If the job restarts from a savepoint after running for a period, it might retrieve a schema that differs from the one used in the previous run. The execution plan of the SQL job uses the version generated with the previous schema. This can cause mismatches in downstream operations, such as filter conditions or field value retrieval. Therefore, create an SLS table using `CREATE TEMPORARY TABLE` in your Flink SQL job to use a fixed schema.
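A fixed-schema declaration could look like the following sketch. It reuses the connector parameters listed under the default table parameters in this topic (`connector`, `endpoint`, `project`, `logstore`, `accessId`, `accessKey`). The table name `sls_source_fixed` and the three columns are hypothetical and must be replaced with the fields that actually exist in your Logstore.

```sql
-- Hypothetical table and column names; placeholders in angle brackets must be filled in.
CREATE TEMPORARY TABLE sls_source_fixed (
  `request_uri` STRING,
  `status`      STRING,
  `latency_ms`  STRING
) WITH (
  'connector' = 'sls',
  'endpoint'  = '<endpoint>',
  'project'   = '<project>',
  'logstore'  = '<logstore>',
  'accessId'  = '${secret_values.ak_id}',
  'accessKey' = '${secret_values.ak_secret}'
);
```

Because the schema is declared in the job itself, a restart from a savepoint sees the same columns regardless of what the catalog would infer at that moment.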

Create an SLS Catalog

You can configure an SLS Catalog in the UI or by running an SQL command. We recommend using the UI.

Use the UI

Go to the Data Management page.

Log on to the Realtime Compute for Apache Flink console, and click Console in the Actions column of the target workspace.

Click Data Management.

Click Create Catalog, select SLS, and then click Next.

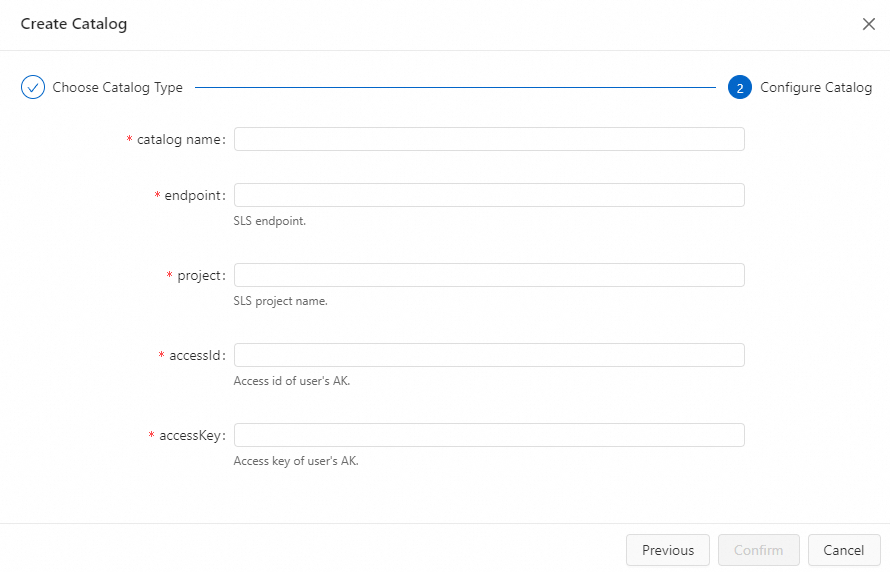

Configure the parameters.

Important: After you create a catalog, these settings cannot be changed. To change the settings, delete the catalog and create a new one.

| Parameter | Type | Description | Required | Remarks |
| --- | --- | --- | --- | --- |
| catalog name | String | The name of the SLS Catalog. | Yes | Enter a custom English name. |
| endpoint | String | The endpoint address. | Yes | For more information, see Endpoints. |
| project | String | The name of the SLS project. | Yes | None. |
| accessId | String | The AccessKey ID of your Alibaba Cloud account. | Yes | For more information, see How do I view the AccessKey ID and AccessKey secret? Important: To protect your AccessKey information, use variables to specify the AccessKey value. For more information, see Project variables. |
| accessKey | String | The AccessKey secret of your Alibaba Cloud account. | Yes | |

Click Confirm.

You can view the created catalog in the left Metadata area.

SQL commands

In the Data Query editor, enter the command to configure the SLS Catalog.

```sql
CREATE CATALOG <catalogName> WITH (
  'type' = 'sls',
  'endpoint' = '<endpoint>',
  'project' = '<project>',
  'accessId' = '${secret_values.ak_id}',
  'accessKey' = '${secret_values.ak_secret}'
);
```

| Parameter | Type | Description | Required | Remarks |
| --- | --- | --- | --- | --- |
| catalogName | String | The name of the SLS Catalog. | Yes | Enter a custom English name. |
| type | String | The type of the catalog. | Yes | The value is fixed to sls. |
| endpoint | String | The endpoint address. | Yes | For more information, see Endpoints. |
| project | String | The name of the SLS project. | Yes | None. |
| accessId | String | The AccessKey ID of your Alibaba Cloud account. | Yes | For more information, see How do I view the AccessKey ID and AccessKey secret? Important: To protect your AccessKey information, use secrets management to specify the AccessKey ID value. For more information, see Manage variables. |
| accessKey | String | The AccessKey secret of your Alibaba Cloud account. | Yes | For more information, see How do I view the AccessKey ID and AccessKey secret? Important: To protect your AccessKey information, use secrets management to specify the AccessKey secret value. For more information, see Manage variables. |

Select the code that creates the catalog and click Run to the left of the line numbers. Alternatively, place your cursor on the code, right-click, and select Run.

In the left Metadata area, view the created catalog.

View an SLS Catalog

After you configure an SLS Catalog, follow these steps to view the SLS metadata.

Go to the Data Management page.

Log on to the Realtime Compute for Apache Flink console.

In the Actions column for the target workspace, click Console.

Click Data Management.

On the Catalog List page, view the Name and Type columns.

Note: To view information about a Logstore in a catalog, click View.

View an SLS Logstore

In the Data Query editor, enter the following command.

```sql
DESCRIBE `${catalog_name}`.`${project_name}`.`${logstore_name}`;
```

| Parameter | Description |
| --- | --- |
| ${catalog_name} | The name of the SLS Catalog. |
| ${project_name} | The name of the SLS project. |
| ${logstore_name} | The name of the SLS Logstore. |

Select the code that describes the Logstore and click Run to the left of the line numbers.

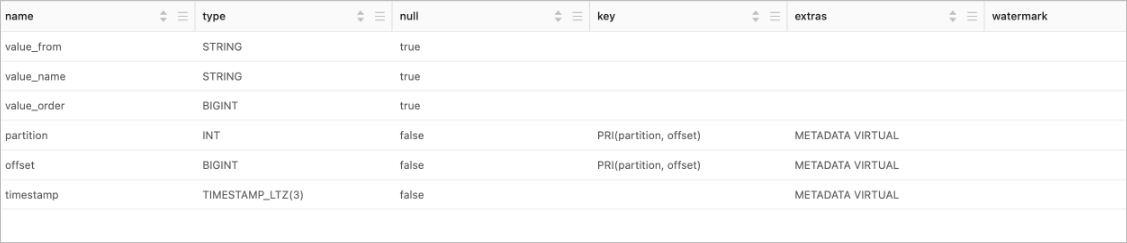

Upon successful execution, you can view the table details in the results.
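The catalog can also be browsed with standard Flink SQL `SHOW` and `USE` statements before a specific Logstore is described. The sketch below assumes that the Data Query editor accepts these statements and that, as the three-part name `catalog.project.logstore` suggests, a project is exposed as a database and a Logstore as a table. The names `my_sls_catalog` and `my_project` are hypothetical.

```sql
-- Hypothetical catalog and project names.
SHOW CATALOGS;                 -- the SLS Catalog should appear in this list

USE CATALOG `my_sls_catalog`;
SHOW DATABASES;                -- assumed to list the SLS project

USE `my_project`;
SHOW TABLES;                   -- assumed to list the Logstores in the project
```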

Use an SLS Catalog

As a source table to read data from an SLS Logstore.

```sql
INSERT INTO ${other_sink_table}
SELECT ...
FROM `${catalog_name}`.`${project_name}`.`${logstore_name}` /*+ OPTIONS('startTime'='2023-06-01 00:00:00') */;
```

Note: To specify additional `WITH` parameters when you use a table from the SLS Catalog, add them with SQL Hints. For example, the preceding SQL statement uses SQL Hints to specify that data consumption starts from '2023-06-01 00:00:00'. For more information about other parameters, see Simple Log Service connector.

As a sink table to write data to an SLS Logstore.

```sql
INSERT INTO `${catalog_name}`.`${project_name}`.`${logstore_name}`
SELECT ...
FROM ${other_source_table};
```

Before reading from a source Logstore or writing to a destination Logstore, you must enable indexing for the Logstore. This is because the catalog reads data from the sink table to verify that the schema of the data to be written matches the schema of the destination SLS Logstore. For more information about how to enable indexing, see Create an index.
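To make the source-table pattern above concrete, the following sketch reads from a catalog table with a `startTime` hint and writes to a temporary table backed by the open source Flink `print` connector, which is convenient for a first test. The catalog, project, Logstore, field, and sink names are hypothetical, and the sketch assumes that indexing is already enabled on the Logstore.

```sql
-- Hypothetical names throughout; the print sink only writes rows to the task logs.
CREATE TEMPORARY TABLE print_sink (
  request_uri STRING,
  status_code INT
) WITH (
  'connector' = 'print'
);

INSERT INTO print_sink
SELECT
  `request_uri`,
  TRY_CAST(`status` AS INT) AS status_code   -- catalog fields arrive as STRING
FROM `my_sls_catalog`.`my_project`.`access_log`
  /*+ OPTIONS('startTime' = '2023-06-01 00:00:00') */
WHERE `status` <> '200';
```

The filter compares `status` as a string because the catalog exposes every field as String. The cast is applied only to the projected column.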

Delete an SLS Catalog

You can delete an SLS Catalog in the UI or by running an SQL command. We recommend using the UI.

Deleting an SLS Catalog does not affect running jobs. However, jobs that use a table from the deleted catalog will fail to find the table when they are published or restarted. Proceed with caution.

UI method

Go to the Data Management page.

Log on to the Realtime Compute for Apache Flink console.

Click Console in the Actions column of the target workspace.

Click Data Management.

On the Catalog List page, click Delete in the Actions column next to the target catalog name.

In the message that appears, click Delete.

In the Metadata area on the left, verify that the target catalog has been deleted.

SQL command

In the Data Query editor, enter the following command.

```sql
DROP CATALOG ${catalog_name};
```

`${catalog_name}` is the name of the SLS Catalog that you want to delete.

Right-click the command that drops the catalog and select Run.

In the Metadata area on the left, check if the target catalog has been deleted.

Details of table information from an SLS Catalog

To make tables easier to use, an SLS Catalog adds default configuration parameters and metadata to the tables it derives. The following list describes the details of the tables obtained from an SLS Catalog:

Schema inference for SLS tables

When an SLS Catalog parses logs to get the schema of a Logstore, it consumes one log entry and parses the data schema from it. The SLS Catalog parses field names and types from the SLS logs. Because SLS stores all log data as strings, all fields are of the String type.

Default table parameters

| Parameter | Description | Remarks |
| --- | --- | --- |
| connector | The type of the connector. | The value is fixed to sls. |
| endpoint | The endpoint address. | For more information, see Endpoints. |
| project | The name of the SLS project. | None. |
| logstore | The name of the SLS Logstore or metricstore. | None. |
| accessId | The AccessKey ID of your Alibaba Cloud account. | For more information, see How do I view the AccessKey ID and AccessKey secret? Important: To protect your AccessKey information, use secrets management to specify the AccessKey ID value. For more information, see Manage variables. |
| accessKey | The AccessKey secret of your Alibaba Cloud account. | For more information, see How do I view the AccessKey ID and AccessKey secret? Important: To protect your AccessKey information, use secrets management to specify the AccessKey secret value. For more information, see Manage variables. |