This topic describes the usage notes of, limits on, and procedure for data migration from a local file system to an Alibaba Cloud Object Storage Service (OSS) bucket.

Usage notes

When you migrate data by using Data Online Migration, take note of the following items:

When you create a source data address, you must set the value of the Directory To Be Migrated parameter to an absolute path. The path must start and end with a forward slash (/) and cannot contain environment variables or special characters.

When you create a source data address, make sure that the directory to be migrated exists and is valid.

When Data Online Migration is used for migration, it consumes resources at the source and destination data addresses. This may interrupt your business. To ensure business continuity, we recommend that you enable throttling for your migration tasks or run the migration tasks during off-peak hours after careful assessment.

Before a migration task starts, Data Online Migration checks the files at the source and destination data addresses. If a file at the source data address and a file at the destination data address have the same name, and the File Overwrite Method parameter of the migration task is set to Yes, the file at the destination data address is overwritten during migration. If the two files contain different information and the file at the destination data address needs to be retained, we recommend that you change the name of one file or back up the file at the destination data address.

The LastModifyTime attribute of the source file is retained after the file is migrated to the destination bucket. If a lifecycle rule is configured for the destination bucket and takes effect, the migrated file whose last modification time is within the specified time period of the lifecycle rule may be deleted or archived in specific storage types.
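The path rules for the Directory To Be Migrated parameter described above can be checked locally before you create the source data address. The following is a minimal sketch, not part of Data Online Migration. The function name `check_migration_directory` is hypothetical, and because this topic does not define "special characters", the allowed character set is an assumption.

```python
import re

# Assumption: "special characters" is not defined in this topic, so this sketch
# only accepts letters, digits, '.', '_', '-' and '/' in the path.
_ALLOWED = re.compile(r"^/(?:[A-Za-z0-9._-]+/)*$")


def check_migration_directory(path: str) -> list[str]:
    """Return the problems found in a Directory To Be Migrated value."""
    problems = []
    if not path.startswith("/"):
        problems.append("path must be absolute and start with '/'")
    if not path.endswith("/"):
        problems.append("path must end with '/'")
    if "$" in path:
        # Assumption: a '$' marks a shell-style environment variable.
        problems.append("path must not contain environment variables")
    elif not _ALLOWED.match(path):
        problems.append("path contains characters outside the assumed safe set")
    return problems


print(check_migration_directory("/example/src/"))
print(check_migration_directory("/data/$USER/"))
```

An empty list means that the value passed every check in this sketch. The console may apply stricter or different rules.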

Limits

Empty directories, files and directories to which symbolic links point, character device files, block device files, socket files, and pipe files at the source data address cannot be migrated.

After a hard link at the source data address is migrated to the destination data address, the hard link becomes a regular file.

The attributes of parent directories cannot be migrated.

Special file permissions such as SUID, SGID, and SBIT (the sticky bit) cannot be migrated.

Only specific attributes of data can be migrated from local file systems to an OSS bucket.

Attributes that can be migrated include ModifyTime, Permissions, and Uid:Gid, which correspond to X-Oss-Meta-Mtime, X-Oss-Meta-Perms, and X-Oss-Meta-Owner in OSS.

Note: The permissions consist of nine permission bits: read, write, and execute for the owner, the group, and other users.

Uid indicates the user ID. Gid indicates the ID of the group to which the user belongs. The two parts are separated by a colon (:).

Attributes that cannot be migrated include but are not limited to AccessTime, ChangeTime, Attr, and Acl.

Note: Whether other attributes can be migrated is unknown. The actual migration results prevail.
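To see in advance which entries at the source are affected by the limits above, you can scan the directory before you start a migration. The following is a rough sketch that uses only the Python standard library. The function names and the wording of the report are hypothetical, and how the service treats each entry is taken from the list above rather than from any service API.

```python
import os
import stat


def classify_entry(path: str) -> str:
    """Classify one source entry according to the limits listed above."""
    st = os.lstat(path)  # lstat so that symbolic links are not followed
    mode = st.st_mode
    if stat.S_ISLNK(mode):
        return "symbolic link"
    if stat.S_ISCHR(mode):
        return "character device"
    if stat.S_ISBLK(mode):
        return "block device"
    if stat.S_ISSOCK(mode):
        return "socket"
    if stat.S_ISFIFO(mode):
        return "pipe"
    if stat.S_ISDIR(mode):
        return "directory" if os.listdir(path) else "empty directory"
    if st.st_nlink > 1:
        return "hard link (becomes a regular file)"
    if mode & (stat.S_ISUID | stat.S_ISGID | stat.S_ISVTX):
        return "regular file with special permission bits"
    return "regular file"


def scan(root: str) -> dict[str, str]:
    """Report every entry under root that is not a plain file or a non-empty directory."""
    report = {}
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            full = os.path.join(dirpath, name)
            kind = classify_entry(full)
            if kind not in ("regular file", "directory"):
                report[full] = kind
    return report
```

Calling `scan("/example/src/")` returns a mapping from path to category that you can review before you configure the task.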

Step 1: Select a region

Log on to the Data Online Migration console as the Resource Access Management (RAM) user that you created for data migration.

In the upper-left corner of the top navigation bar, select the region in which the agent that you want to use resides.

Important: The tunnels, agents, data addresses, and migration tasks that you create in a region cannot be used in another region. Select a region with caution.

We recommend that you select the region in which the agent resides. If the region in which the agent resides is not supported by Data Online Migration, select the region that is closest to the region in which the agent resides.

If you want to migrate data across borders, we recommend that you enable transfer acceleration to increase the migration speed. If you enable transfer acceleration for one or more OSS buckets, you are charged transfer acceleration fees. For more information, see Access OSS using transfer acceleration.

Step 2: Create a tunnel

In the left-side navigation pane, choose Data Online Migration > Tunnel Management. On the Tunnel Management page, click Create Tunnel.

In the Create Tunnel dialog box, configure the parameters and click OK. The following table describes the parameters.

Parameter

Required

Description

Name

Yes

The name of the tunnel.

The name cannot be empty and can be up to 100 characters in length.

The name can contain letters, digits, hyphens (-), and underscores (_).

Maximum Bandwidth

Yes

The maximum bandwidth that the tunnel can use.

If you do not configure this parameter, the default value 0 is used, which indicates that the bandwidth for the tunnel is not limited.

If you configure this parameter, enter a value based on the note in the console.

Important: The bandwidth that is available for the tunnel depends on the actual bandwidth of the network connection.

Requests/s

Yes

The maximum number of requests per second over the tunnel.

If you do not configure this parameter, the default value 0 is used, which indicates that the number of requests per second over the tunnel is not limited.

If you configure this parameter, enter a value based on the note in the console.

Warning: Evaluate the capabilities of the source storage system before you configure this parameter. An excessively large value may affect your business. We recommend that you enter a value based on the note in the console.

For more information about tunnels, see Manage tunnels.

Step 3: Create an agent

If the local file system is a file system that is deployed on a local computer, you can deploy only one agent.

If the local file system is a file system that is mounted to a local computer, such as an Apsara File Storage NAS (NAS) file system, you can deploy multiple agents. Make sure that the NAS file system is mounted to directories with the same name.

In the left-side navigation pane, choose Data Online Migration > Agent Management. On the Agent Management page, click New Agent.

In the New Agent dialog box, configure the parameters and click OK. The following table describes the parameters.

Parameter

Required

Description

Name

Yes

The name of the agent.

The name cannot be empty and must be 3 to 63 characters in length.

It can contain lowercase English letters, digits, hyphens (-), and underscores (_). The name is case-sensitive.

The name must be in UTF-8 encoding and cannot start with a hyphen (-) or an underscore (_).

Network Type

Yes

The network connection method for the agent. It includes the following two types:

VPC (recommended): The agent connects to the Data Online Migration service through a VPC. This method requires the machine where the agent is deployed to be able to access the internal same-region endpoint of the Data Online Migration service in the corresponding region. For example, if you use the migration service in the China (Beijing) region, the agent machine must be able to access the internal same-region endpoint {TunnelId}.cn-beijing.mgw-tc-internal.aliyuncs.com. Use an ECS instance in the same region as the Data Online Migration console to deploy the agent.

Internet: The agent connects to the Data Online Migration service over the Internet. This method requires the machine where the agent is deployed to be able to access the public endpoint of the Data Online Migration service in the corresponding region. For example, if you use the migration service in the China (Beijing) region, the agent machine must be able to access the public endpoint {TunnelId}.cn-beijing.mgw-tc.aliyuncs.com.

Note: TunnelId indicates the tunnel ID.

You can use the ping command to test the network connectivity between the agent and the migration service.

Deployment Method

Yes

The deployment method of the agent. Currently, only the Independent process mode is supported.

Tunnel

Yes

The tunnel to which the agent belongs. An agent can be associated with only one tunnel. The bandwidth of the agent is affected by the total bandwidth of the tunnel.

For example, a tunnel named tunnel-1 has a maximum bandwidth of 10 Gbit/s. tunnel-1 is associated with three agents: agent-1, agent-2, and agent-3. The total bandwidth of the three agents cannot exceed 10 Gbit/s. If you set the bandwidth of agent-1 to 3 Gbit/s, only 7 Gbit/s of bandwidth is available for agent-2 and agent-3. Plan and allocate bandwidth carefully.

Generate the command used to deploy the agent. For more information, see the "Generate the command to deploy an agent" section of the Manage agents topic.

For more information about agents, see Agent management.
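The endpoint examples given for the Network Type parameter follow a simple pattern. The sketch below builds the hostname that the agent machine must be able to reach. It assumes that other regions follow the same pattern as the China (Beijing) examples in this topic. The function name and the tunnel ID `t-example` are hypothetical.

```python
def agent_endpoint(tunnel_id: str, region_id: str, network_type: str) -> str:
    """Build the service hostname an agent must reach for a given tunnel."""
    suffixes = {
        "vpc": "mgw-tc-internal.aliyuncs.com",  # internal same-region endpoint
        "internet": "mgw-tc.aliyuncs.com",      # public endpoint
    }
    if network_type not in suffixes:
        raise ValueError(f"unknown network type: {network_type!r}")
    return f"{tunnel_id}.{region_id}.{suffixes[network_type]}"


# Hypothetical tunnel ID, for illustration only.
print(agent_endpoint("t-example", "cn-beijing", "vpc"))
print(agent_endpoint("t-example", "cn-beijing", "internet"))
```

You can pass the resulting hostname to the ping command on the agent machine to test connectivity, as described above.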

Step 4: Create a source data address

If the local file system is a file system that is deployed on a local computer, only one agent can be deployed.

If the local file system is a NAS file system that is mounted to multiple computers, make sure that the NAS file system is mounted to directories with the same name. When you create a source data address, set the Directory To Be Migrated parameter to the name of the directories to which the NAS file system is mounted.

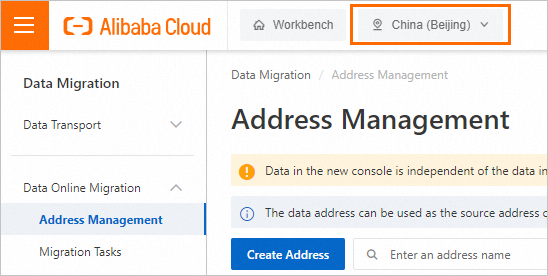

In the left-side navigation pane, choose Data Online Migration > Address Management. On the Address Management page, click Create Address.

In the Create Address panel, configure the parameters and click OK. The following table describes the parameters.

Parameter

Required

Description

Name

Yes

The name of the source data address. The name must meet the following requirements:

The name is 3 to 63 characters in length.

The name is case-sensitive and can contain lowercase letters, digits, hyphens (-), and underscores (_).

The name is encoded in the UTF-8 format and cannot start with a hyphen (-) or an underscore (_).

Type

Yes

The type of the source data address. Select LocalFS.

Directory To Be Migrated

Yes

The directory to be migrated. Enter the path of the directory in the field. The path must be an absolute path. The path must start and end with a forward slash (/) and cannot contain environment variables or special characters.

For example, you set the prefix of the source data address to /example/src/, store a file named example.jpg in /example/src/, and set the prefix of the destination data address to /example/dest/. After the example.jpg file is migrated to the destination data address, the full path of the file is /example/dest/example.jpg.

Important: If a data address is associated with multiple agents, you must make sure that each agent can access the directory. Otherwise, specific data may not be migrated.

If the local file system is a NAS file system that is mounted to multiple computers, make sure that the NAS file system is mounted to directories with the same name. When you create a source data address, set the Directory To Be Migrated parameter to the name of the directories to which the NAS file system is mounted.

Tunnel

Yes

The name of the tunnel that you want to use.

Important: This parameter is required only when you migrate data to the cloud by using Express Connect circuits or VPN gateways or migrate data from self-managed databases to the cloud.

If data at the destination data address is stored in a local file system or you need to migrate data over an Express Connect circuit in an environment such as Alibaba Finance Cloud or Apsara Stack, you must create and deploy an agent.

Agent

Yes

The name of the agent that you want to use.

Important: This parameter is required only when you migrate data to the cloud by using Express Connect circuits or VPN gateways or migrate data from self-managed databases to the cloud.

You can select up to 200 agents at a time for a specific tunnel.
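The prefix example in the Directory To Be Migrated description amounts to replacing the source prefix with the destination prefix. The following sketch illustrates that mapping. The function name `destination_path` is hypothetical and is not part of the service.

```python
def destination_path(source_file: str, source_prefix: str, destination_prefix: str) -> str:
    """Map a file under the source prefix to its path under the destination prefix."""
    if not source_file.startswith(source_prefix):
        raise ValueError(f"{source_file!r} is not under {source_prefix!r}")
    relative = source_file[len(source_prefix):]  # part of the path below the prefix
    return destination_prefix + relative


print(destination_path("/example/src/example.jpg", "/example/src/", "/example/dest/"))
```

Because both prefixes end with a forward slash (/), plain concatenation is enough and no separator needs to be added.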

Step 5: Create a destination data address

In the left-side navigation pane, choose Data Online Migration > Address Management. On the Address Management page, click Create Address.

In the Create Address panel, configure the parameters and click OK. The following table describes the parameters.

Parameter

Required

Description

Name

Yes

The name of the destination data address. The name must meet the following requirements:

The name is 3 to 63 characters in length.

The name is case-sensitive and can contain lowercase letters, digits, hyphens (-), and underscores (_).

The name is encoded in the UTF-8 format and cannot start with a hyphen (-) or an underscore (_).

Type

Yes

The type of the destination data address. Select Alibaba OSS.

Region

No

The region in which the destination data address resides. Example: China (Hangzhou).

Authorize Role

Yes

The destination bucket belongs to the Alibaba Cloud account that is used to log on to the Data Online Migration console

We recommend that you create and authorize a RAM role in the Data Online Migration console. For more information, see Authorize a RAM role in the Data Online Migration console.

You can also manually attach policies to a RAM role in the RAM console. For more information, see the "Step 4: Grant permissions on the destination bucket to a RAM role" section of the Preparations topic.

The destination bucket does not belong to the Alibaba Cloud account that is used to log on to the Data Online Migration console

You can attach policies to a RAM role in the OSS console. For more information, see the "Step 4: Grant permissions on the destination bucket to a RAM role" section of the Preparations topic.

Bucket

Yes

The name of the OSS bucket to which the data is migrated.

Agent

No

The name of the agent that you want to use.

Important: This parameter is required only when you migrate data to the cloud by using Express Connect circuits or VPN gateways or migrate data from self-managed databases to the cloud.

You can select up to 200 agents at a time for a specific tunnel.
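The naming rules for data addresses (3 to 63 characters; lowercase letters, digits, hyphens, and underscores; no leading hyphen or underscore) can be expressed as one regular expression. This is a sketch of those stated rules only. The helper name `is_valid_name` is hypothetical, and the console may enforce additional checks.

```python
import re

# First character: lowercase letter or digit.
# Remaining 2 to 62 characters: lowercase letters, digits, '-' or '_'.
_NAME_RE = re.compile(r"[a-z0-9][a-z0-9_-]{2,62}")


def is_valid_name(name: str) -> bool:
    """Check a data address name against the rules stated in this topic."""
    return _NAME_RE.fullmatch(name) is not None


for candidate in ("oss-dest_01", "ab", "-dest", "Dest"):
    print(candidate, is_valid_name(candidate))
```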

Step 6: Create a migration task

In the left-side navigation pane, choose Data Online Migration > Migration Tasks. On the Migration Tasks page, click Create Task.

In the Select Address step, configure the parameters. The following table describes the parameters.

Parameter

Required

Description

Name

Yes

The name of the migration task. The name must meet the following requirements:

The name is 3 to 63 characters in length.

The name is case-sensitive and can contain lowercase letters, digits, hyphens (-), and underscores (_).

The name is encoded in the UTF-8 format and cannot start with a hyphen (-) or an underscore (_).

Source Address

Yes

The source data address that you created.

Destination Address

Yes

The destination data address that you created.

In the Task Configurations step, configure the parameters that are described in the following table.

Parameter

Required

Description

Migration Bandwidth

No

The maximum bandwidth that is available to the migration task. Valid values:

Default: Use the default upper limit for the migration bandwidth. The actual migration bandwidth depends on the file size and the number of files.

Specify an upper limit: Specify a custom upper limit for the migration bandwidth as prompted.

Important: The actual migration speed depends on multiple factors, such as the source data address, network, throttling at the destination data address, and file size. Therefore, the actual migration speed may not reach the specified upper limit.

Specify a reasonable value for the upper limit of the migration bandwidth based on the evaluation of the source data address, migration purpose, business situation, and network bandwidth. Inappropriate throttling may affect business performance.

Files Migrated Per Second

No

The maximum number of files that can be migrated per second. Valid values:

Default: Use the default upper limit for the number of files that can be migrated per second.

Specify an upper limit: Specify a custom upper limit as prompted for the number of files that can be migrated per second.

Important: The actual migration speed depends on multiple factors, such as the source data address, network, throttling at the destination data address, and file size. Therefore, the actual migration speed may not reach the specified upper limit.

Specify a reasonable value for the upper limit of the migration bandwidth based on the evaluation of the source data address, migration purpose, business situation, and network bandwidth. Inappropriate throttling may affect business performance.

Overwrite Mode

No

Specifies whether to overwrite a file at the destination data address if the file has the same name as a file at the source data address. Valid values:

Do not overwrite: does not migrate the file at the source data address.

Overwrite All: overwrites the file at the destination data address.

Overwrite based on the last modification time:

If the last modification time of the file at the source data address is later than that of the file at the destination data address, the file at the destination data address is overwritten.

If the last modification time of the file at the source data address is the same as that of the file at the destination data address, the file at the destination data address is overwritten if the two files differ in size or in the Content-Type header.

Warning: If you select Overwrite based on the last modification time, there is no guarantee that newer files won't be overwritten by older ones, which creates a risk of losing recent updates.

If you select Overwrite based on the last modification time, make sure that the file at the source data address contains information such as the last modification time, size, and Content-Type header. Otherwise, the overwrite policy may become invalid and unexpected migration results may occur.

If you select Do not overwrite or Overwrite based on the last modification time, the system sends a request to the source and destination data addresses to obtain the meta information and determines whether to overwrite a file. Therefore, request fees are generated for the source and destination data addresses.
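The Overwrite Mode rules above can be read as a small decision function. The sketch below restates them under two assumptions that this topic does not spell out: a file with no same-name counterpart at the destination is always migrated, and under Overwrite based on the last modification time an older source file is skipped. The names `FileMeta` and `should_migrate` and the mode strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FileMeta:
    mtime: float        # last modification time, in seconds since the epoch
    size: int           # size in bytes
    content_type: str   # value of the Content-Type header


def should_migrate(mode: str, src: FileMeta, dst: Optional[FileMeta]) -> bool:
    """Decide whether the source file is written to the destination."""
    if dst is None:
        return True  # assumption: no same-name file exists at the destination
    if mode == "do_not_overwrite":
        return False
    if mode == "overwrite_all":
        return True
    if mode == "overwrite_by_mtime":
        if src.mtime > dst.mtime:
            return True
        if src.mtime == dst.mtime:
            # Same timestamp: overwrite only if size or Content-Type differs.
            return src.size != dst.size or src.content_type != dst.content_type
        return False  # assumption: an older source file is skipped
    raise ValueError(f"unknown overwrite mode: {mode!r}")
```

For example, `should_migrate("overwrite_by_mtime", FileMeta(100.0, 10, "image/jpeg"), FileMeta(100.0, 12, "image/jpeg"))` returns `True` because the sizes differ.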

Migration Report

Yes

Specifies whether to push a migration report. Valid values:

Do not push (default): does not push the migration report to the destination bucket.

Push: pushes the migration report to the destination bucket. For more information, see What to do next.

Important: The migration report occupies storage space at the destination data address.

The migration report may be pushed with a delay. Wait until the migration report is generated.

A unique ID is generated for each execution of a task. A migration report is pushed only once. We recommend that you do not delete the migration report unless necessary.

Migration Logs

Yes

Specifies whether to push migration logs to Simple Log Service (SLS). Valid values:

Do not push (default): Does not push migration logs.

Push: Pushes migration logs to SLS. View the migration logs in the SLS console.

Push only file error logs: Pushes only error migration logs to SLS. View the error migration logs in the SLS console.

If you select Push or Push only file error logs, Data Online Migration creates a project in SLS. The name of the project is in the following format: aliyun-oss-import-log-<Alibaba Cloud account ID>-<region of the Data Online Migration console>. Example: aliyun-oss-import-log-137918634953****-cn-hangzhou.

Important: To prevent errors in the migration task, make sure that the following requirements are met before you select Push or Push only file error logs:

SLS is activated.

You have confirmed the authorization on the Authorize page.

Authorize

No

This parameter is displayed if you set the Migration Logs parameter to Push or Push only file error logs.

Click Authorize to go to the Cloud Resource Access Authorization page. On this page, click Confirm Authorization Policy. The RAM role AliyunOSSImportSlsAuditRole is created and permissions are granted to the RAM role.

File Name

No

The filter based on the file name.

Both inclusion and exclusion rules are supported. However, only the syntax of specific regular expressions is supported. For more information about the syntax of regular expressions, visit re2. Example:

.*\.jpg$ indicates all files whose names end with .jpg.

By default, ^file.* indicates all files whose names start with file in the root directory.

If a prefix is configured for the source data address and the prefix is data/to/oss/, you need to use the ^data/to/oss/file.* filter to match all files whose names start with file in the specified directory.

.*/picture/.* indicates files whose paths contain a subdirectory called picture.

Important: If an inclusion rule is configured, all files that meet the inclusion rule are migrated. If multiple inclusion rules are configured, files are migrated as long as one of the inclusion rules is met.

For example, the picture.jpg and picture.png files exist and the inclusion rule .*\.jpg$ is configured. Only the picture.jpg file is migrated. If the inclusion rule .*\.png$ is configured at the same time, both files are migrated.

If an exclusion rule is configured, all files that meet the exclusion rule are not migrated. If multiple exclusion rules are configured, files are not migrated as long as one of the exclusion rules is met.

For example, the picture.jpg and picture.png files exist and the exclusion rule .*\.jpg$ is configured. Only the picture.png file is migrated. If the exclusion rule .*\.png$ is configured at the same time, neither file is migrated.

Exclusion rules take precedence over inclusion rules. If a file meets both an exclusion rule and an inclusion rule, the file is not migrated.

For example, the file.txt file exists, and the exclusion rule .*\.txt$ and the inclusion rule file.* are configured. In this case, the file is not migrated.
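The interaction between inclusion and exclusion rules can be sketched as follows. Python's `re` module stands in for RE2 here, which is an assumption: the two engines differ in the syntax they support, although the simple patterns used in this topic behave the same in both. The sketch also assumes that every file is selected when no inclusion rule is configured. The function name `is_selected` is hypothetical.

```python
import re


def is_selected(path: str, include: list[str], exclude: list[str]) -> bool:
    """Return True if the file would be migrated under the given rules."""
    # Exclusion rules take precedence over inclusion rules.
    if any(re.search(pattern, path) for pattern in exclude):
        return False
    # With inclusion rules configured, at least one must match.
    if include:
        return any(re.search(pattern, path) for pattern in include)
    return True  # assumption: no inclusion rule means every file is selected


files = ["picture.jpg", "picture.png"]
print([f for f in files if is_selected(f, [r".*\.jpg$"], [])])
print([f for f in files if is_selected(f, [], [r".*\.jpg$"])])
print(is_selected("file.txt", ["file.*"], [r".*\.txt$"]))
```

The three calls mirror the examples above: only picture.jpg is selected by the inclusion rule, only picture.png survives the exclusion rule, and file.txt is rejected because the exclusion rule wins.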

File Modification Time

No

The filter based on the last modification time of files.

You can specify the last modification time as a filter rule. If you specify a time period, only the files whose last modification time is within the specified time period are migrated. Examples:

If you specify January 1, 2019 as the start time and do not specify the end time, only the files whose last modification time is not earlier than January 1, 2019 are migrated.

If you specify January 1, 2022 as the end time and do not specify the start time, only the files whose last modification time is not later than January 1, 2022 are migrated.

If you specify January 1, 2019 as the start time and January 1, 2022 as the end time, only the files whose last modification time is not earlier than January 1, 2019 and not later than January 1, 2022 are migrated.
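The three cases above describe a time window with optional, inclusive bounds. The following minimal sketch expresses that check. The function name `in_time_window` is hypothetical, and how the service handles time zones is not covered in this topic, so naive `datetime` values are used.

```python
from datetime import datetime
from typing import Optional


def in_time_window(mtime: datetime,
                   start: Optional[datetime] = None,
                   end: Optional[datetime] = None) -> bool:
    """Return True if mtime is not earlier than start and not later than end."""
    if start is not None and mtime < start:
        return False
    if end is not None and mtime > end:
        return False
    return True


start = datetime(2019, 1, 1)
end = datetime(2022, 1, 1)
print(in_time_window(datetime(2020, 6, 1), start, end))
print(in_time_window(datetime(2018, 12, 31), start))
```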

Whether to Migrate

No

Specifies whether to migrate special entities. If you select the check box, special entities are migrated. If you clear the check box, special entities are not migrated.

Directory

If you select the check box, all the directories scanned at the source data address are to be migrated. In addition, the statistics about the directories are included in the values of the Files and Volume of Stored Data parameters for the migration task. Corresponding directories that end with a forward slash (/) are created at the destination data address and configured with the attributes of the source directories as the user metadata. Only attributes that support migration are configured for the directories.

If you clear the check box, all the directories at the source data address are ignored. In addition, the statistics about directories are excluded from the values of the Files and Volume of Stored Data parameters for the migration task. No corresponding directory that ends with a forward slash (/) is created at the destination data address.

symlink

If you select the check box, all the symbolic links at the source data address are to be migrated. In addition, the statistics about symbolic links are included in the values of the Files and Volume of Stored Data parameters for the migration task. Corresponding symbolic links are created at the destination data address and configured with the attributes of the source symbolic links as the user metadata. Only attributes that support migration are configured for the symbolic links. The value of the Target attribute of a destination symbolic link depends on the value of the Convert Destination Path Or Not parameter.

If you clear the check box, all the symbolic links at the source data address are ignored. In addition, the statistics about symbolic links are excluded from the values of the Files and Volume of Stored Data parameters for the migration task.

Important: Whether you migrate or ignore the symbolic links at the source data address, the files and directories to which the symbolic links point are not migrated unless the files and directories are already included in the data to be migrated.

Convert Destination Path Or Not

No

Specifies whether to convert the value of the Target attribute of a symbolic link. You can convert the value of the Target attribute for specific symbolic links so that the migrated symbolic links still point to the same files. If you select the check box, the Target attribute value is converted. If you clear the check box, the Target attribute value is not converted.

Important: This parameter is available only if you select symlink for the Whether to Migrate parameter.

Whether you enable or disable this feature, the system does not check whether the files to which the symbolic links point exist, are of valid types, or can be accessed.

If you enable this feature, the value is first parsed into the shortest equivalent absolute path (AbsTarget) based on the source directory that stores the symbolic link. If AbsTarget contains the prefix of the source data address, the prefix is replaced with the prefix of the destination data address. The value after the replacement is set as the Target attribute value of the corresponding destination symbolic link.

Note: For example, the prefix of the source data address of a migration task is /mnt/nas1/, the prefix of the destination data address is cloud_base/, and the symbolic link /mnt/nas1/links/a.lnk exists at the source data address. The following list describes the Target value of the corresponding destination symbolic link in different cases.

The Target attribute value of the source symbolic link is ../data/./a.txt. The value is parsed into the shortest absolute path /mnt/nas1/data/a.txt. Then, /mnt/nas1/ in the shortest absolute path is replaced with cloud_base/. Therefore, the Target attribute value of the corresponding destination symbolic link is cloud_base/data/a.txt.

The Target attribute value of the source symbolic link is /mnt/nas1/verbose/../data/./a.txt. The value is parsed into the shortest absolute path /mnt/nas1/data/a.txt. Then, /mnt/nas1/ in the shortest absolute path is replaced with cloud_base/. Therefore, the Target attribute value of the corresponding destination symbolic link is cloud_base/data/a.txt.

The Target attribute value of the source symbolic link is /root/outer/../data/./a.txt. The value is parsed into the shortest absolute path /root/data/a.txt. The shortest absolute path does not contain the prefix of the source data address. In this case, the Target attribute value of the corresponding destination symbolic link is /root/data/a.txt.

If you disable this feature, Target attribute values are not converted. The Target attribute values of the source symbolic links are set as the Target attribute values of the corresponding destination symbolic links.
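The conversion described above can be reproduced with lexical path normalization. The sketch below uses `posixpath.normpath`, which collapses `.` and `..` segments without touching the file system. This matches the statement that the system does not check whether the target exists. The function name `convert_target` is hypothetical, and the sketch illustrates the documented behavior rather than the service's actual implementation.

```python
import posixpath


def convert_target(link_path: str, target: str,
                   source_prefix: str, destination_prefix: str) -> str:
    """Compute the Target value of the destination symbolic link."""
    # Resolve the target against the directory that stores the link, then
    # reduce it to the shortest equivalent absolute path (AbsTarget).
    link_dir = posixpath.dirname(link_path)
    abs_target = posixpath.normpath(posixpath.join(link_dir, target))
    # Swap the source prefix for the destination prefix when it applies.
    if abs_target.startswith(source_prefix):
        return destination_prefix + abs_target[len(source_prefix):]
    return abs_target


link = "/mnt/nas1/links/a.lnk"
for target in ("../data/./a.txt",
               "/mnt/nas1/verbose/../data/./a.txt",
               "/root/outer/../data/./a.txt"):
    print(convert_target(link, target, "/mnt/nas1/", "cloud_base/"))
```

The loop runs the three cases from the note above. The first two yield cloud_base/data/a.txt, and the third yields /root/data/a.txt because it lies outside the source prefix.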

Execution Time

No

Important: If the current execution of a migration task is not complete by the next scheduled start time, the task starts its next execution at the subsequent scheduled start time after the current migration is complete. This process continues until the task is run the specified number of times.

If Data Online Migration is deployed in the China (Hong Kong) region or the regions in the Chinese mainland, up to 10 concurrent migration tasks are supported. If Data Online Migration is deployed in regions outside China, up to five concurrent migration tasks are supported. If the number of concurrent tasks exceeds the limit, executions of tasks may not be complete as scheduled.

The time when the migration task is run. Valid values:

Immediately: The task is immediately run.

Scheduled Task: The task is run within the specified time period every day. By default, the task is started at the specified start time and stopped at the specified stop time.

Periodic Scheduling: The task is run based on the execution frequency and number of execution times that you specify.

Execution Frequency: Specify the execution frequency of the task. Valid values: Every Hour, Every Day, Every Week, Certain Days of the Week, and Custom. For more information, see the Supported execution frequencies section of this topic.

Executions: Specify the maximum number of execution times of the task as prompted. By default, if you do not specify this parameter, the task is run once.

Important: You can manually start and stop tasks at any point in time. This is not affected by the custom execution time of tasks.

Read and confirm the Data Online Migration Agreement. Then click Next.

Verify that the configurations are correct and click OK. The migration task is created.

Supported execution frequencies

Execution frequency | Description | Example |

Hourly | Runs the task every hour. You can combine this with the maximum number of executions. | The current time is 8:05. You set the frequency to hourly and the number of executions to 3. The first task starts at the next hour, 9:00. |

Daily | Runs the task daily at a specified hour (0–23). You can combine this with the maximum number of executions. | The current time is 8:05. You set the task to run daily at 10:00 for 5 executions. The first task starts at 10:00 today. |

Weekly | Runs the task on a specific day of the week at a specified hour (0–23). You can combine this with the maximum number of executions. | The current time is Monday, 8:05. You set the task to run every Monday at 10:00 for 10 executions. The first task starts at 10:00 today. |

Specific days of the week | Runs the task on specific days of the week at a specified hour (0–23). | The current time is Wednesday, 8:05. You set the task to run at 10:00 on Mondays, Wednesdays, and Fridays. The first task starts at 10:00 today. |

Custom | Uses a cron expression to set a custom schedule for the task. | Note: A cron expression consists of 6 fields separated by spaces. The fields represent the execution schedule in the following order: second, minute, hour, day of the month, month, and day of the week. The following are example cron expressions. For more information, see a cron expression generator. |
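To make the six-field layout of a custom cron expression concrete, the following sketch splits an expression into named fields in the order given above. It only checks the number of fields and does not validate or interpret their values. The function name `split_cron` and the sample expression are illustrative and are not taken from the console.

```python
CRON_FIELDS = ("second", "minute", "hour", "day_of_month", "month", "day_of_week")


def split_cron(expression: str) -> dict[str, str]:
    """Split a 6-field cron expression into named fields."""
    parts = expression.split()
    if len(parts) != len(CRON_FIELDS):
        raise ValueError(
            f"expected {len(CRON_FIELDS)} fields, got {len(parts)}: {expression!r}"
        )
    return dict(zip(CRON_FIELDS, parts))


# Illustrative expression only; check the console for the syntax it accepts.
print(split_cron("0 30 2 * * *"))
```

A five-field expression in the traditional Unix crontab style is rejected by this check because the leading seconds field is missing.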

Step 7: Verify data

Data Online Migration solely handles the migration of data and does not ensure data consistency or integrity. After a migration task is complete, you must review all the migrated data and verify the data consistency between the source and destination data addresses.

Make sure that you verify the migrated data at the destination data address after a migration task is complete. If you delete the data at the source data address before you verify the migrated data at the destination data address, you are liable for the losses and consequences caused by any data loss.
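One possible starting point for verification is to compare a manifest of the source directory with a listing of the destination. The following sketch builds the source manifest with the standard library and compares it with a destination mapping of object key to size. How you obtain that mapping (for example, from an OSS object listing or the migration report) is outside this sketch, and the function names are hypothetical. Matching sizes do not prove that the contents are identical, so a checksum comparison would be a stronger check.

```python
import os


def local_manifest(root: str) -> dict[str, int]:
    """Map each regular file under root (relative path with '/') to its size in bytes."""
    manifest = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if os.path.islink(full) or not os.path.isfile(full):
                continue  # symbolic links and special files are skipped here
            rel = os.path.relpath(full, root).replace(os.sep, "/")
            manifest[rel] = os.path.getsize(full)
    return manifest


def compare(source: dict[str, int], destination: dict[str, int]) -> dict[str, list[str]]:
    """List source files that are missing or differ in size at the destination."""
    return {
        "missing": sorted(k for k in source if k not in destination),
        "size_mismatch": sorted(
            k for k in source if k in destination and source[k] != destination[k]
        ),
    }
```

If both lists in the result are empty, every source file has a same-size counterpart at the destination. Delete source data only after you are satisfied with the verification.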