When you run a self-managed Apache RocketMQ cluster, you are responsible for patching, scaling, monitoring, and securing every broker node. ApsaraMQ for RocketMQ eliminates this overhead by providing a fully managed messaging service. The built-in migration tool moves your cluster to an ApsaraMQ for RocketMQ instance with minimal disruption: it synchronizes metadata, routes traffic through a phased per-topic switchover, and supports batch operations and rollback at every stage.

Prerequisites

Before you begin, make sure that you have:

An ApsaraMQ for RocketMQ 5.0 instance. See Create an instance.

The service-linked role for migration, as described in the following table. See Service-linked roles.

| Item | Value |
|---|---|
| Role name | AliyunServiceRoleForRMQMigration |
| Policy | AliyunServiceRolePolicyForRMQMigration |
| Purpose | Allows ApsaraMQ for RocketMQ to access Virtual Private Clouds (VPCs) during migration |

Assess your current cluster

Before you start, verify that your source cluster is compatible and plan the migration scope.

Source cluster requirements

The source Apache RocketMQ cluster must meet the following requirements. If it does not, submit a ticket.

| Requirement | Details |

|---|---|

| Broker version | Apache RocketMQ 4.x or 5.x |

| Network | Deployed in a VPC. If deployed on-premises, the cluster must be accessible from a VPC. |

| Region | China (Hangzhou), China (Shanghai), China (Beijing), China (Shenzhen), China (Zhangjiakou), US (Silicon Valley), or Singapore |

Destination instance limits

| Parameter | Limit |

|---|---|

| Message size | 4 MB maximum |

| Message retention period | 24 hours (minimum) to 720 hours (maximum) |

| Scheduled message delay | Subscription and pay-as-you-go Standard Edition, serverless Standard and Professional Edition: 7 days. Subscription and pay-as-you-go Professional Edition and Enterprise Platinum Edition: 40 days. |

For the full list, see Quotas and limits.
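The limits above can be checked against your own workload before migration. The following is a minimal sketch, not part of the migration tool: the class and method names are hypothetical, 4 MB is assumed to mean 4 × 1024 × 1024 bytes, and the two delay tiers simply group the editions listed in the table by their 7-day or 40-day ceiling.

```java
import java.time.Duration;

/** Hypothetical pre-migration checks against the destination limits table. */
class DestinationLimits {
    // Assumption: "4 MB" is interpreted as 4 * 1024 * 1024 bytes.
    static final int MAX_MESSAGE_BYTES = 4 * 1024 * 1024;
    static final Duration MIN_RETENTION = Duration.ofHours(24);
    static final Duration MAX_RETENTION = Duration.ofHours(720);

    /** Groups instance editions by their maximum scheduled-message delay. */
    enum DelayTier {
        SEVEN_DAYS(Duration.ofDays(7)),
        FORTY_DAYS(Duration.ofDays(40));

        final Duration maxDelay;

        DelayTier(Duration maxDelay) {
            this.maxDelay = maxDelay;
        }
    }

    /** True if a message of the given size fits within the size limit. */
    static boolean messageSizeAllowed(int messageBytes) {
        return messageBytes >= 0 && messageBytes <= MAX_MESSAGE_BYTES;
    }

    /** True if the retention period lies within 24 to 720 hours, inclusive. */
    static boolean retentionAllowed(Duration retention) {
        return retention.compareTo(MIN_RETENTION) >= 0
            && retention.compareTo(MAX_RETENTION) <= 0;
    }

    /** True if the scheduled delay does not exceed the tier's ceiling. */
    static boolean delayAllowed(Duration delay, DelayTier tier) {
        return !delay.isNegative() && delay.compareTo(tier.maxDelay) <= 0;
    }
}
```

For example, a producer that schedules messages 10 days ahead passes `delayAllowed` only for `DelayTier.FORTY_DAYS`, which is a signal to pick one of the editions with the 40-day limit.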

SDK compatibility

| SDK | Language | Version | Upgrade needed? |

|---|---|---|---|

| Apache RocketMQ Remoting SDK | Java | 5.x | No. Compatible with ApsaraMQ for RocketMQ. |

| Apache RocketMQ Remoting SDK | Java, C++ | 4.x | Yes, if PullConsumer, DefaultLitePullConsumer, or DefaultPullConsumer is used. Upgrade to 5.x. See SDK overview. |

The Remoting SDK uses the following Maven dependency and endpoint format:

<dependency>
    <groupId>org.apache.rocketmq</groupId>
    <artifactId>rocketmq-client</artifactId>
    <version>{version}</version>
</dependency>

producer.setNamesrvAddr("xxx:9876");
consumer.setNamesrvAddr("xxx:9876");

If you send and receive messages through the RocketMQ Flink connector, use the latest SDK version (compilation required). See rocketmq-flink.

Scheduled and retry messages

Check whether your source cluster contains scheduled messages or messages pending retry. After migration, all producer and consumer connections switch to the ApsaraMQ for RocketMQ instance. Scheduled messages and retry messages that remain in the source cluster may not be consumed. Keep some consumer processes running in the source cluster to drain these messages before you decommission it.

Plan your migration batches

The migration tool supports per-topic migration with batch operations, canary release, and rollback, reducing the blast radius of each change.

Select topics and plan batches. Identify the topics to migrate and group them into batches. Start with non-critical business topics.

Coordinate with upstream and downstream teams. Notify the owners of all producer and consumer applications that use the selected topics.

Make sure every upstream and downstream application updates its endpoint. Applications that do not switch may cause message consumption delays.
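One way to turn the planning guidance above into a concrete batch list is sketched below. It is illustrative only: the `criticality` score is a hypothetical value you assign yourself (lower means less critical), and the migration tool does not consume this output.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Hypothetical helper that orders topics from least to most critical and chunks them. */
class BatchPlanner {
    /** A topic name plus a caller-assigned criticality (lower = less critical). */
    static final class TopicPlan {
        final String topic;
        final int criticality;

        TopicPlan(String topic, int criticality) {
            this.topic = topic;
            this.criticality = criticality;
        }
    }

    /** Returns batches of at most batchSize topics, least critical topics first. */
    static List<List<String>> plan(List<TopicPlan> topics, int batchSize) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        List<TopicPlan> sorted = new ArrayList<>(topics);
        // List.sort is stable, so topics with equal criticality keep their input order.
        sorted.sort(Comparator.comparingInt((TopicPlan t) -> t.criticality));

        List<List<String>> batches = new ArrayList<>();
        for (int i = 0; i < sorted.size(); i += batchSize) {
            List<String> batch = new ArrayList<>();
            for (TopicPlan t : sorted.subList(i, Math.min(i + batchSize, sorted.size()))) {
                batch.add(t.topic);
            }
            batches.add(batch);
        }
        return batches;
    }
}
```

Keeping the first batch small and low-risk lets you exercise the full switchover and rollback path before any business-critical topic is touched.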

How it works

The migration follows five steps, each with built-in validation checks and rollback support.

| Step | What happens |

|---|---|

| 1. Assess the migration | Verify version and feature compatibility between the source cluster and destination instance. Define which topics to migrate in each batch. |

| 2. Configure the network | Provide the network and node details of the source cluster. The migration tool uses these to read cluster metadata through a least-privilege connection. |

| 3. Migrate metadata | Select topics and consumer groups from the source cluster and import their metadata into the destination instance. |

| 4. Change the endpoint | Update the endpoint in all producer and consumer applications from the source cluster address to the destination instance address. |

| 5. Switch traffic | Perform phased traffic switching per topic, moving read and write traffic from the source cluster to the destination instance. |

Configure the network

Create a migration task and provide the network details of your source Apache RocketMQ cluster. The migration tool uses these details to read cluster metadata and manage traffic switching.

What the migration tool accesses

The migration tool accesses only the following information from the source cluster (least-privilege):

Topic metadata

Consumer group metadata

Dynamic routing registration for topics

Consumer connection and message accumulation status

The tool does not access other cluster data or write any configuration to the source cluster.

Verify network configurations before proceeding. Once you move to the next step, you cannot return to modify network settings. To change the network configuration, create a new migration task.

Procedure

Log on to the ApsaraMQ for RocketMQ console.

In the top navigation bar, select the region where both the source cluster and destination instance are located. In the left-side navigation pane, choose RocketMQ Copilot > Migration to Cloud.

On the Migration to Cloud page, click Create Task.

In the Create Migration Task panel, configure the parameters described in the following table and click OK.

In the Network Settings step of the migration wizard, enter the network details for the source Apache RocketMQ cluster and click Configure Network.

Wait for the network configuration to complete, then click Next.

Parameter reference

| Parameter | Description | Example |

|---|---|---|

| Cluster Type | The network environment of the source cluster. Internet-connected Cluster: deployed over the Internet. VPC-connected Cluster: deployed in an Alibaba Cloud VPC. | VPC-connected Cluster |

| Cluster Name | A custom name to identify the source cluster. Used for display purposes only. | first |

| VPC | The VPC ID where the source cluster is deployed. Required only for VPC-connected Cluster. | vpc-bp1mhd\*\*\*\*\*\*24chrxn |

| vSwitch | The vSwitch that enables the migration tool to access the source cluster network. Select a vSwitch in a zone supported by the migration task region. See Regions and zones. If no vSwitch is available, create one in a supported zone. See Create and manage vSwitches. Required only for VPC-connected Cluster. | vsw-bp1hejs\*\*\*\*\*\*0los38rn |

| Security Group | The security group for network access. Use the same security group as the Elastic Compute Service (ECS) instance running the source cluster. If you use a different security group, make sure its rules allow access to the destination instance. Required only for VPC-connected Cluster. | sg-bp160q\*\*\*\*\*\*vtcxvwl |

| Name Server Address | The IP addresses of all name servers in the source cluster. Separate multiple addresses with commas (,) or semicolons (;). | 192.168.XX.XX:9876 |

| Access Credential | N/A: Access Control List (ACL) is not enabled. ACL: ACL is enabled. Provide admin credentials. | ACL |

| Username | The admin account name. Required only when ACL is enabled. | admin |

| Password | The admin account password. Required only when ACL is enabled. | \*\*\*\*\*\* |

Specify all name server IP addresses. Missing addresses may prevent topics from appearing during migration.
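Before pasting the name server list into the console, it can help to normalize it locally. The sketch below assumes each entry has the `host:port` form shown in the example column; the class name is hypothetical, and the console may accept or reject forms that this helper treats differently.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical validator for a comma- or semicolon-separated name server list. */
class NameServerAddresses {
    /** Splits on ',' or ';', trims entries, drops duplicates, and checks host:port shape. */
    static List<String> parse(String raw) {
        List<String> result = new ArrayList<>();
        for (String part : raw.split("[,;]")) {
            String addr = part.trim();
            if (addr.isEmpty()) {
                continue; // tolerate trailing separators
            }
            int colon = addr.lastIndexOf(':');
            if (colon <= 0 || colon == addr.length() - 1) {
                throw new IllegalArgumentException("Expected host:port but got: " + addr);
            }
            int port;
            try {
                port = Integer.parseInt(addr.substring(colon + 1));
            } catch (NumberFormatException e) {
                throw new IllegalArgumentException("Invalid port in: " + addr, e);
            }
            if (port < 1 || port > 65535) {
                throw new IllegalArgumentException("Port out of range in: " + addr);
            }
            if (!result.contains(addr)) {
                result.add(addr);
            }
        }
        if (result.isEmpty()) {
            throw new IllegalArgumentException("No name server address given");
        }
        return result;
    }
}
```

Comparing the size of the parsed list with the number of name servers you actually run is a quick way to catch a missing address.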

Migrate metadata

Select the topics and consumer groups to migrate based on your migration plan. The migration tool reads all topics and groups from the source cluster and displays them for selection.

You cannot return to this step after proceeding. Make sure all topics and groups in the current migration batch are imported before you click Next. Any missing topics must be created manually later.

Procedure

In the Metadata Migration step, click the Topic Metadata tab.

Select a topic from the list, choose the correct Message Type from the drop-down list, and click Confirm and Import. To import multiple topics at once, select them and click Batch Import.

Important: Apache RocketMQ 4.x does not define message types at the topic level. ApsaraMQ for RocketMQ validates that the message type you assign matches the actual messages in the topic. Select the type based on your application logic. If you select an incorrect type, messages fail to send or receive after migration. If you are unsure about the message type, submit a ticket.

Click the Group Metadata tab. Select a consumer group, choose the correct Consumption Order from the drop-down list, and click Confirm and Import. To import multiple groups at once, select them and click Batch Import.

Important: In the Apache RocketMQ 4.x SDK, message consumption order is specified on the client side. In ApsaraMQ for RocketMQ 5.x, the broker controls consumption order at the group level. Select the order based on your application requirements. An incorrect order causes consumption exceptions. If you are unsure, submit a ticket.

Verify that all topics and groups for this migration batch are imported, then click Next.
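Because the message type and consumption order have to be inferred from application code, a small decision helper can make the review repeatable. The sketch below is an assumption-laden illustration: the enum names follow Apache RocketMQ 5.x message type naming and may not match the console labels exactly, the class is hypothetical, and the boolean inputs are facts you establish by reading your 4.x code (for example, whether producers call `sendMessageInTransaction`, send with a `MessageQueueSelector` to preserve order, or call `setDelayTimeLevel`, and whether consumers register a `MessageListenerOrderly`). It also assumes one message type per topic.

```java
/** Hypothetical helper that maps observed 4.x client usage to migration choices. */
class MigrationHints {
    enum MessageType { NORMAL, FIFO, DELAY, TRANSACTION }

    enum ConsumptionOrder { CONCURRENT, ORDERLY }

    /** Suggests a topic message type; refuses to guess when usage is mixed. */
    static MessageType suggestMessageType(boolean transactional, boolean ordered, boolean delayed) {
        int kinds = (transactional ? 1 : 0) + (ordered ? 1 : 0) + (delayed ? 1 : 0);
        if (kinds > 1) {
            // Mixed usage needs a human decision (split the topic or submit a ticket).
            throw new IllegalStateException("Topic carries more than one kind of message");
        }
        if (transactional) {
            return MessageType.TRANSACTION;
        }
        if (ordered) {
            return MessageType.FIFO;
        }
        if (delayed) {
            return MessageType.DELAY;
        }
        return MessageType.NORMAL;
    }

    /** Suggests the group consumption order from the listener type used in 4.x clients. */
    static ConsumptionOrder suggestConsumptionOrder(boolean usesOrderlyListener) {
        return usesOrderlyListener ? ConsumptionOrder.ORDERLY : ConsumptionOrder.CONCURRENT;
    }
}
```

Treat the result as a starting point for review, not a verdict. When the helper throws, or when different applications disagree about a topic, submit a ticket as described above.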

Change the endpoint

Update the endpoint in all producer and consumer applications from the source cluster address to the destination ApsaraMQ for RocketMQ 5.x instance address. During this step, the migration tool continues routing traffic to the source cluster, so messaging works normally regardless of the order in which applications update their endpoints.

Before you proceed

After changing the endpoint, restart each application to connect it to the destination instance.

Make sure every producer and consumer application updates its endpoint:

Producers that do not switch: some messages fail to send.

Consumers that do not switch: messages accumulate and cannot be consumed.

Endpoint change examples

Apache RocketMQ Remoting SDK

Before (source cluster):

producer.setNamesrvAddr("192.168.XX.XX:9876");

consumer.setNamesrvAddr("192.168.XX.XX:9876");

After (destination instance) -- version 4.5.1 and later:

producer.setNamesrvAddr("rmq-cn-pe334******-vpc.cn-hangzhou.rmq.aliyuncs.com:8080");

// vipChannelEnabled defaults to false in 4.5.1 and later. If your code sets it to true, remove that call or set it back to false:

// producer.setVipChannelEnabled(false);

consumer.setNamesrvAddr("rmq-cn-pe334******-vpc.cn-hangzhou.rmq.aliyuncs.com:8080");

// vipChannelEnabled defaults to false in 4.5.1 and later. If your code sets it to true, remove that call or set it back to false:

// consumer.setVipChannelEnabled(false);

After (destination instance) -- versions earlier than 4.5.1:

producer.setNamesrvAddr("rmq-cn-pe334******-vpc.cn-hangzhou.rmq.aliyuncs.com:8080");

// vipChannelEnabled defaults to true. Set it to false.

producer.setVipChannelEnabled(false);

consumer.setNamesrvAddr("rmq-cn-pe334******-vpc.cn-hangzhou.rmq.aliyuncs.com:8080");

// vipChannelEnabled defaults to true. Set it to false.

consumer.setVipChannelEnabled(false);

Apache RocketMQ gRPC SDK

Before:

ClientConfiguration clientConfiguration = ClientConfiguration.newBuilder()

.setEndpoints("192.168.XX.XX:9876")

.setCredentialProvider(sessionCredentialsProvider)

.build();

After:

ClientConfiguration clientConfiguration = ClientConfiguration.newBuilder()

.setEndpoints("rmq-cn-pe334******-vpc.cn-hangzhou.rmq.aliyuncs.com:8080")

.setCredentialProvider(sessionCredentialsProvider)

.build();

Procedure

After all applications have updated their endpoint and restarted, click Next in the Change Endpoint step.
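The Remoting SDK examples in this section differ only in how `vipChannelEnabled` is handled on either side of version 4.5.1. If you maintain many applications, a check like the following sketch can flag the ones that need an explicit `setVipChannelEnabled(false)` call. The class is hypothetical, and it assumes plain numeric version strings such as `4.3.2`; suffixes such as `-SNAPSHOT` are not handled.

```java
/** Hypothetical check for the 4.5.1 threshold described in the endpoint examples. */
class VipChannelCheck {
    /** True when the SDK is older than 4.5.1, where vipChannelEnabled defaults to true. */
    static boolean mustDisableExplicitly(String sdkVersion) {
        return compare(sdkVersion, "4.5.1") < 0;
    }

    /** Compares dotted numeric versions; missing segments count as 0. */
    static int compare(String a, String b) {
        String[] x = a.split("\\.");
        String[] y = b.split("\\.");
        int n = Math.max(x.length, y.length);
        for (int i = 0; i < n; i++) {
            int xi = i < x.length ? Integer.parseInt(x[i]) : 0;
            int yi = i < y.length ? Integer.parseInt(y[i]) : 0;
            if (xi != yi) {
                return Integer.compare(xi, yi);
            }
        }
        return 0;
    }
}
```

Comparing segment by segment avoids the classic string-comparison mistake where `4.10.0` sorts before `4.5.1`.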

Switch traffic

Switch read and write traffic per topic from the source cluster to the destination instance. Traffic switching progresses through four sequential stages for each topic.

Before you proceed

At each stage, verify that messages are sent and received correctly before proceeding. If an issue occurs, roll back to the previous stage and troubleshoot.

Switch traffic for all migrated topics and confirm normal operation before completing the migration task. Traffic switching settings cannot be modified after the task is marked complete.

Traffic switching stages

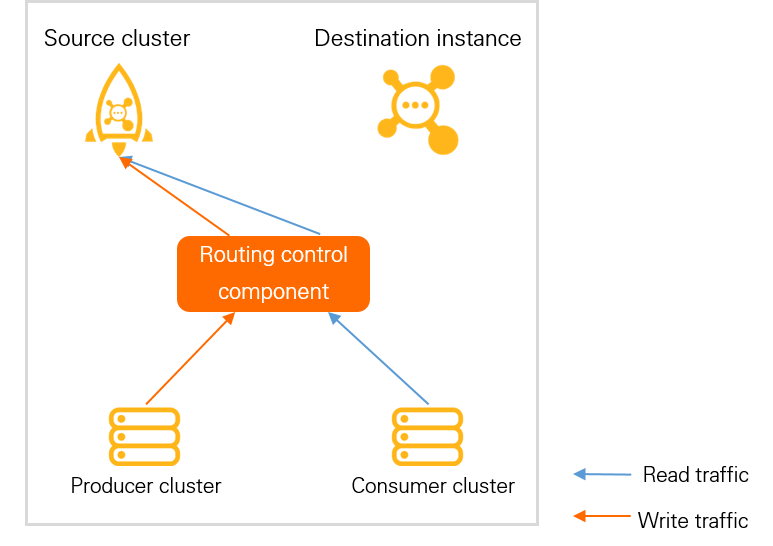

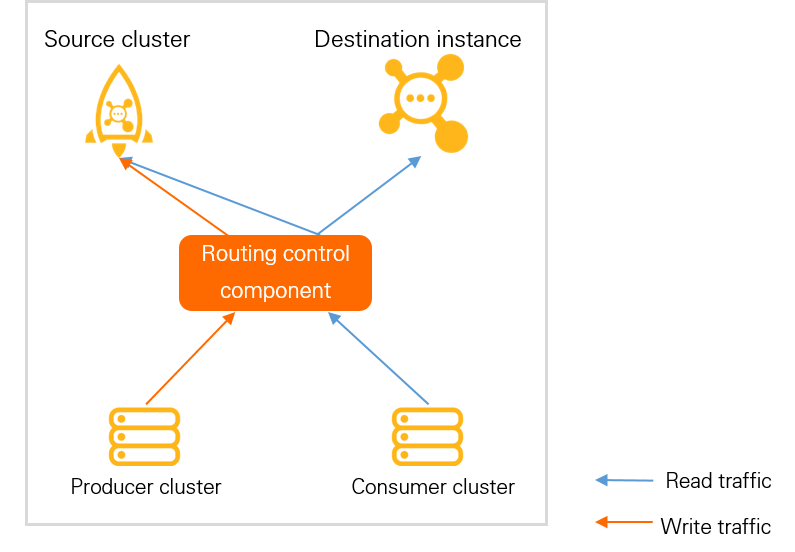

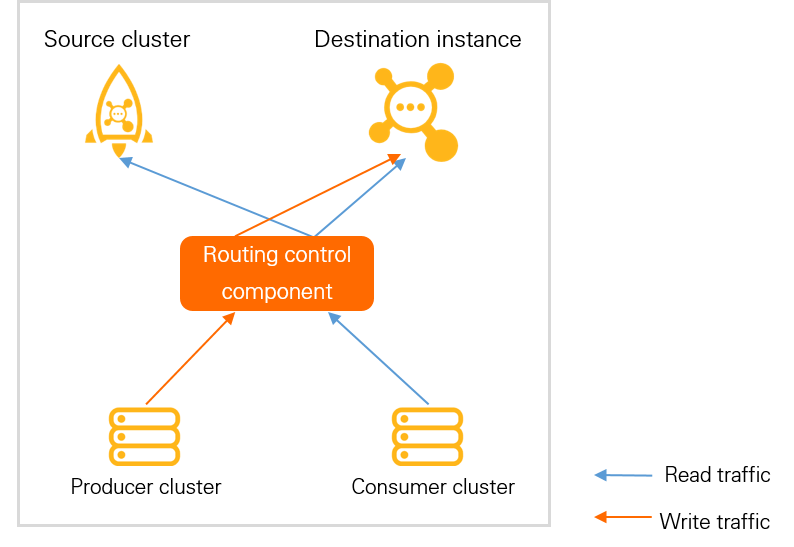

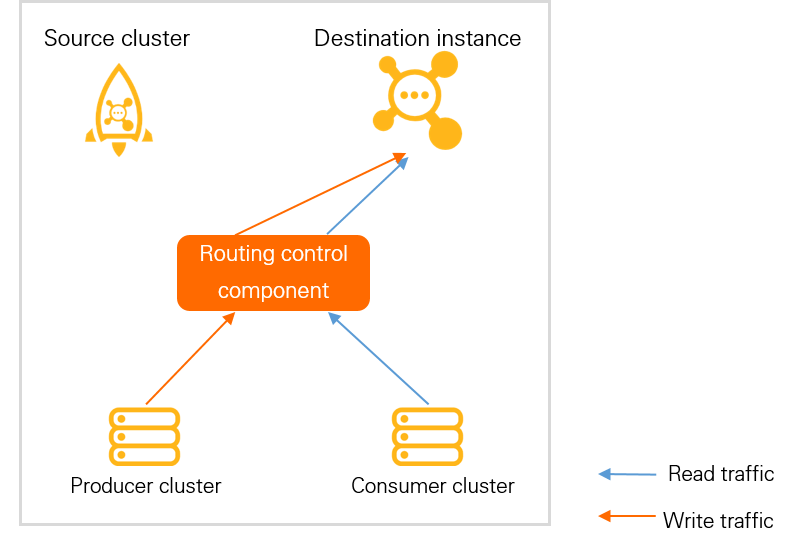

Each topic progresses through the following four stages:

| Stage | Read traffic | Write traffic | What to verify |

|---|---|---|---|

| Read and write in source cluster (initial) | Source cluster | Source cluster | Starting state. No action required. |

| Write in source cluster, read in both clusters | Source and destination clusters | Source cluster | Consumers receive messages from both clusters. Verify that consumption works correctly in the destination. |

| Write in destination cluster, read in both clusters | Source and destination clusters | Destination cluster | Producers send to the destination. Verify that messages are sent and received correctly. Wait for accumulated messages in the source cluster to be fully consumed. |

| Read and write in destination cluster (final) | Destination cluster | Destination cluster | Migration is complete for this topic. All traffic flows through the destination instance. |

Traffic topology for each stage:

| Stage | Topology |

|---|---|

| Read and write in source cluster |  |

| Write in source cluster, read in both clusters |  |

| Write in destination cluster, read in both clusters |  |

| Read and write in destination cluster |  |
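The four stages form a linear sequence that a topic can move forward through or roll back along. The following sketch models that sequence for use in your own runbooks or dashboards. The enum and its method names are hypothetical and do not correspond to any API of the migration tool.

```java
/** Hypothetical model of the per-topic traffic switching stages. */
enum TrafficStage {
    //                          readsFromSource, readsFromDestination, writesToDestination
    SOURCE_ONLY(true, false, false),
    WRITE_SOURCE_READ_BOTH(true, true, false),
    WRITE_DESTINATION_READ_BOTH(true, true, true),
    DESTINATION_ONLY(false, true, true);

    final boolean readsFromSource;
    final boolean readsFromDestination;
    final boolean writesToDestination;

    TrafficStage(boolean readsFromSource, boolean readsFromDestination, boolean writesToDestination) {
        this.readsFromSource = readsFromSource;
        this.readsFromDestination = readsFromDestination;
        this.writesToDestination = writesToDestination;
    }

    /** The stage reached by one successful traffic switch. */
    TrafficStage next() {
        if (this == DESTINATION_ONLY) {
            throw new IllegalStateException("Already at the final stage");
        }
        return values()[ordinal() + 1];
    }

    /** The stage reached by rolling back one step; the initial stage stays put. */
    TrafficStage previous() {
        return this == SOURCE_ONLY ? this : values()[ordinal() - 1];
    }

    /** The stage reached by rolling back to the original routing state. */
    static TrafficStage initial() {
        return SOURCE_ONLY;
    }
}
```

Note that consumers start reading from both clusters one stage before producers move, which is why writes can switch without stranding messages on either side.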

Verification checks at each stage

| Stage | Checks performed |

|---|---|

| Switch to write in source, read in both | Topic exists in both clusters. Permissions are granted correctly. All clients are connected to the destination cluster. |

| Switch to write in destination, read in both | Same checks as the previous stage. |

| Switch to read and write in destination | All previous checks, plus: no messages are being produced in the source cluster, and no message accumulation exists in the source cluster. |
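The extra conditions for the final switch can also be watched from your own monitoring while you wait for the check to pass. The sketch below is illustrative only: the migration tool performs the real checks, and the two inputs are hypothetical metrics (messages recently produced to the source cluster, and the remaining source backlog) that you would obtain from your own tooling.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical mirror of the two additional checks before the final stage. */
class FinalStageGate {
    /** Returns the reasons that still block the final switch; empty means ready. */
    static List<String> blockers(long sourceMessagesProducedRecently, long sourceBacklog) {
        List<String> reasons = new ArrayList<>();
        if (sourceMessagesProducedRecently > 0) {
            reasons.add("producers still write to the source cluster");
        }
        if (sourceBacklog > 0) {
            reasons.add("source cluster still has accumulated messages");
        }
        return reasons;
    }
}
```

Returning reasons rather than a single boolean makes it obvious whether to chase a producer that has not switched or simply wait for consumers to drain the backlog.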

Procedure

In the Message Migration step, select the topic to migrate and check its verification status.

Check Passed: Proceed to the next step.

Not passed: Troubleshoot based on the check result, then click Re-verify until the check passes.

Not passed, but confirmed non-blocking: Click Ignore Check and proceed.

In the Actions column, click Switch Traffic.

In the confirmation dialog, click OK.

Repeat steps 1 through 3 for each stage until Traffic Switching Stage shows Read and Write in Destination Cluster.

After all topics are fully switched, click Migrated at the bottom of the page.

Additional operations

The following operations are available on the Message Migration page:

Roll Back

Roll back to the previous stage: Reverts the topic to the last stage where migration was working correctly. Troubleshoot before proceeding again.

Roll back to the initial stage: Reverts to the original routing state. Use this for emergency troubleshooting only.

Note: Rolling back to the initial stage may cause unconsumed messages produced during earlier stages to be delayed or left unprocessed.

Create Topic: If a topic was not selected during metadata migration, create it directly on the destination ApsaraMQ for RocketMQ 5.x instance.

Batch Traffic Switching / Batch Rollback: Switch traffic or roll back for multiple topics at once. Both operations apply only to topics at the same traffic switching stage.
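Because batch operations apply only to topics at the same stage, it is useful to group your topics by current stage before selecting them. A minimal sketch, assuming you keep your own record of each topic's stage as a string label (the class name and the labels are hypothetical):

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical helper that groups topics by their current traffic switching stage. */
class BatchSwitchGroups {
    /** Maps each stage label to the topics currently at that stage. */
    static Map<String, List<String>> groupByStage(Map<String, String> stageByTopic) {
        // TreeMap keeps the stage labels in a stable, sorted order for display.
        Map<String, List<String>> groups = new TreeMap<>();
        for (Map.Entry<String, String> entry : stageByTopic.entrySet()) {
            groups.computeIfAbsent(entry.getValue(), stage -> new ArrayList<>())
                  .add(entry.getKey());
        }
        return groups;
    }
}
```

Each resulting group is a candidate selection for one Batch Traffic Switching or Batch Rollback operation.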

References

For background on the differences between open-source Apache RocketMQ and ApsaraMQ for RocketMQ, including the migration mechanism, see Migration overview.

After the migration task is complete, you can use metrics on the ApsaraMQ for RocketMQ dashboard to check whether the instance is running as expected. If exceptions occur, you can roll back the task. For more information, see Dashboard and Roll Back.