By zwzhang

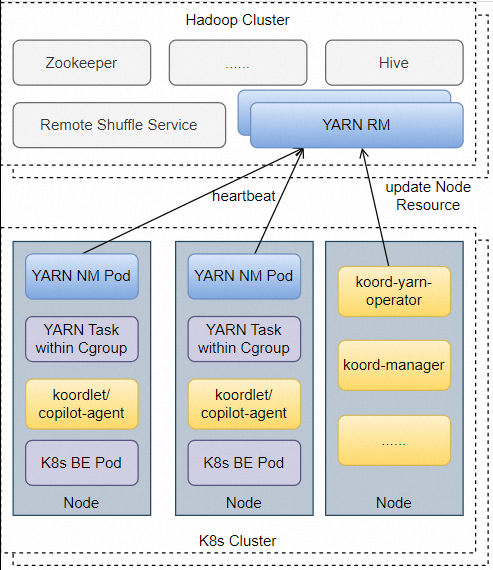

Koordinator supports hybrid orchestration of workloads on Kubernetes, so that batch jobs can use resources that are requested but unused, running with the koord-batch priority and the BE QoS class, to improve cluster utilization. However, many applications still run outside K8s, for example on Apache Hadoop YARN. As a resource management platform in the big data ecosystem, YARN supports a number of computing engines, including MapReduce, Spark, Flink, and Presto.

To extend the co-location scenarios of Koordinator, the community now provides Koordinator YARN Copilot, an extension suite for Hadoop YARN in the big data ecosystem, which runs Hadoop YARN jobs on koord-batch resources alongside other K8s pods. Koordinator YARN Copilot has the following characteristics:

- Hadoop YARN jobs use resources of the koord-batch priority of Koordinator, and are also managed by the QoS strategies of koordlet.
- The koord-batch priority resources can be requested by both YARN tasks and batch pods.

All charts can be installed with Helm v3.5+, a simple command-line tool, which you can get from here.

Please make sure Koordinator components are correctly installed in your cluster. For more information about installation and upgrade, please refer to Installation.

```bash
# Firstly add the koordinator charts repository if you haven't done this.
$ helm repo add koordinator-sh https://koordinator-sh.github.io/charts/

# [Optional]
$ helm repo update

# Install the latest version.
$ helm install koordinator koordinator-sh/koordinator
```

Hadoop YARN consists of the ResourceManager and the NodeManager. Currently, we recommend that users deploy the ResourceManager independently on hosts, and the NodeManager as a pod.

The Koordinator community provides a demo chart, hadoop-yarn, with the Hadoop YARN ResourceManager and NodeManager, and optionally HDFS components so that example jobs can be run easily. You can use the demo chart for a quick start of YARN co-location, or refer to Installation for the official guide if you want to build your own YARN cluster.

```bash
# Firstly add the koordinator charts repository if you haven't done this.
$ helm repo add koordinator-sh https://koordinator-sh.github.io/charts/

# [Optional]
$ helm repo update

# Install the latest version.
$ helm install hadoop-yarn koordinator-sh/hadoop-yarn

# Check the running status of the Hadoop YARN pods.
$ kubectl get pod -n hadoop-yarn
```

Some key information you should know before installing YARN:

- The NodeManager must be deployed as a pod that carries yarn.hadoop.apache.org/node-id=${nm-hostname}:8041 to identify its node ID in YARN.
- NodeManager pods request resources with the koord-batch priority, so Koordinator must be pre-installed with co-location enabled.

These settings are already configured in the hadoop-yarn chart in the Koordinator repo. If you are using a self-maintained YARN cluster, please refer to the Koordinator repo during installation.
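The node ID above follows YARN's host:port form. As a minimal sketch, assuming the value is stored as an annotation on the NodeManager pod (the text only names the key), the hypothetical helpers below build the value from a NodeManager hostname and split it back into host and port. Port 8041 is taken from the text; the function names are illustrative and not part of Koordinator YARN Copilot.

```python
"""Hypothetical helpers for the YARN node ID of a NodeManager pod."""
from typing import Optional, Tuple

NODE_ID_KEY = "yarn.hadoop.apache.org/node-id"  # key named in the text
NM_PORT = 8041  # port used in the text; adjust if your NodeManager differs


def build_node_id(nm_hostname: str, port: int = NM_PORT) -> str:
    """Return the YARN node ID for a NodeManager, e.g. 'nm-0:8041'."""
    if not nm_hostname:
        raise ValueError("NodeManager hostname must not be empty")
    return f"{nm_hostname}:{port}"


def parse_node_id(node_id: str) -> Tuple[str, int]:
    """Split a 'host:port' node ID into (hostname, port)."""
    host, sep, port = node_id.rpartition(":")
    if not sep or not host or not port.isdigit():
        raise ValueError(f"not a host:port node ID: {node_id!r}")
    return host, int(port)


def node_id_from_pod(metadata: dict) -> Optional[Tuple[str, int]]:
    """Read the node ID from pod metadata, ASSUMING it is kept in annotations."""
    value = (metadata.get("annotations") or {}).get(NODE_ID_KEY)
    return parse_node_id(value) if value else None
```

Splitting on the last colon keeps the helper simple; it does not try to handle bracketed IPv6 literals.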

Koordinator YARN Copilot consists of yarn-operator and copilot-agent (WIP).

```bash
# Firstly add the koordinator charts repository if you haven't done this.
$ helm repo add koordinator-sh https://koordinator-sh.github.io/charts/

# [Optional]
$ helm repo update

# Install the latest version.
$ helm install koordinator-yarn-copilot koordinator-sh/koordinator-yarn-copilot
```

1. Configuration of koord-manager

After installing through the helm chart, the ConfigMap slo-controller-config will be created in the koordinator-system namespace. YARN tasks are managed under the best-effort cgroup and should be configured as a host-level application. Here is the related issue about managing YARN tasks under Koordinator.
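To see where YARN tasks end up, the sketch below resolves the cgroupPath of each `host-application-config` entry (the same JSON that goes into slo-controller-config) to a directory relative to a cgroup subsystem root. It assumes the kubelet's usual QoS parent cgroups: `kubepods/besteffort` with the cgroupfs driver and `kubepods.slice/kubepods-besteffort.slice` with the systemd driver. The mapping table and the function `resolve_cgroup_dirs` are illustrative, not koordlet's implementation.

```python
"""Illustrative resolution of a host application's cgroup directory."""
import json
import posixpath
from typing import Dict

# ASSUMED kubelet layout of the QoS parent cgroups, keyed by cgroup driver.
QOS_PARENTS = {
    "cgroupfs": {
        "KubepodsRoot": "kubepods",
        "KubepodsBurstable": "kubepods/burstable",
        "KubepodsBesteffort": "kubepods/besteffort",
    },
    "systemd": {
        "KubepodsRoot": "kubepods.slice",
        "KubepodsBurstable": "kubepods.slice/kubepods-burstable.slice",
        "KubepodsBesteffort": "kubepods.slice/kubepods-besteffort.slice",
    },
}

# Same content as the host-application-config entry of the ConfigMap.
HOST_APPLICATION_CONFIG = """
{
  "applications": [
    {
      "name": "yarn-task",
      "priority": "koord-batch",
      "qos": "BE",
      "cgroupPath": {"base": "KubepodsBesteffort", "relativePath": "hadoop-yarn/"}
    }
  ]
}
"""


def resolve_cgroup_dirs(config_json: str, driver: str = "cgroupfs") -> Dict[str, str]:
    """Map each host application name to its cgroup directory under a subsystem root."""
    parents = QOS_PARENTS[driver]
    result = {}
    for app in json.loads(config_json)["applications"]:
        path = app["cgroupPath"]
        parent = parents[path["base"]]
        # normpath drops the trailing slash of "hadoop-yarn/".
        result[app["name"]] = posixpath.normpath(posixpath.join(parent, path["relativePath"]))
    return result
```

Under these assumptions, a cgroup v1 node using the cgroupfs driver would place YARN tasks under /sys/fs/cgroup/cpu/kubepods/besteffort/hadoop-yarn for the CPU subsystem.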

Create a configmap.yaml file based on the following ConfigMap content:

```yaml
apiVersion: v1
data:
  colocation-config: |
    {
      "enable": true
    }
  resource-threshold-config: |
    {
      "clusterStrategy": {
        "enable": true
      }
    }
  resource-qos-config: |
    {
      "clusterStrategy": {
        "lsrClass": {
          "cpuQOS": {
            "enable": true
          }
        },
        "lsClass": {
          "cpuQOS": {
            "enable": true
          }
        },
        "beClass": {
          "cpuQOS": {
            "enable": true
          }
        }
      }
    }
  host-application-config: |
    {
      "applications": [
        {
          "name": "yarn-task",
          "priority": "koord-batch",
          "qos": "BE",
          "cgroupPath": {
            "base": "KubepodsBesteffort",
            "relativePath": "hadoop-yarn/"
          }
        }
      ]
    }
kind: ConfigMap
metadata:
  name: slo-controller-config
  namespace: koordinator-system
```

To avoid changing other settings in the ConfigMap, we recommend that you run the kubectl patch command to update the ConfigMap.

```bash
$ kubectl patch cm -n koordinator-system slo-controller-config --patch "$(cat configmap.yaml)"
```

2. Configuration of koord-yarn-copilot

koord-yarn-copilot communicates with the YARN ResourceManager during resource syncing, and the following ConfigMap defines the YARN-related configurations.

```yaml
apiVersion: v1
data:
  core-site.xml: |
    <configuration>
    </configuration>
  yarn-site.xml: |
    <configuration>
      <property>
        <name>yarn.resourcemanager.admin.address</name>
        <value>resource-manager.hadoop-yarn:8033</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address</name>
        <value>resource-manager.hadoop-yarn:8032</value>
      </property>
    </configuration>
kind: ConfigMap
metadata:
  name: yarn-config
  namespace: koordinator-system
```

You can change the default address and port at yarnConfiguration.resourceManager in chart values.
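To double-check what the yarn-site.xml entry resolves to, the sketch below reads the Hadoop-style properties with Python's standard xml.etree.ElementTree and splits the ResourceManager addresses into host and port. The function names are hypothetical; this is not the copilot's own configuration loader.

```python
"""Extract ResourceManager endpoints from a yarn-site.xml document."""
import xml.etree.ElementTree as ET
from typing import Dict, Tuple

# Same properties as the yarn-site.xml key of the yarn-config ConfigMap.
YARN_SITE = """
<configuration>
  <property>
    <name>yarn.resourcemanager.admin.address</name>
    <value>resource-manager.hadoop-yarn:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>resource-manager.hadoop-yarn:8032</value>
  </property>
</configuration>
"""


def read_properties(xml_text: str) -> Dict[str, str]:
    """Return every <property> of a Hadoop configuration as name -> value."""
    root = ET.fromstring(xml_text.strip())
    props = {}
    for prop in root.findall("property"):
        name = prop.findtext("name")
        value = prop.findtext("value")
        if name is not None and value is not None:
            props[name.strip()] = value.strip()
    return props


def split_address(address: str) -> Tuple[str, int]:
    """Split 'host:port' into (host, port)."""
    host, _, port = address.rpartition(":")
    return host, int(port)
```

The host resource-manager.hadoop-yarn has the form service.namespace, so the cluster DNS resolves it from pods in other namespaces, such as koordinator-system.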

You can check the helm charts hadoop-yarn and koordinator-yarn-copilot for more advanced settings.

1. Check the allocatable batch resources of Koordinator on the nodes.

```bash
$ kubectl get node -o yaml | grep batch-cpu
kubernetes.io/batch-cpu: "60646"
kubernetes.io/batch-cpu: "60486"

$ kubectl get node -o yaml | grep batch-memory
kubernetes.io/batch-memory: "245976973438"
kubernetes.io/batch-memory: "243254790644"
```

2. Check the allocatable resources of the nodes in YARN

Visit the YARN ResourceManager web UI at ${hadoop-yarn-rm-addr}:8088/cluster/nodes in your browser to get the NodeManager status and allocatable resources.

If you are using the hadoop-yarn demo chart in the Koordinator repo, execute the following command to make the RM accessible locally.

```bash
$ kubectl port-forward -n hadoop-yarn service/resource-manager 8088:8088
```

Then open the UI in your browser: http://localhost:8088/cluster/nodes
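Besides the web UI, the ResourceManager exposes the same node figures through its REST API at /ws/v1/cluster/nodes. The sketch below fetches that endpoint and returns per-node available vcores and memory from the `availableVirtualCores` and `availMemoryMB` fields of the Cluster Nodes API. It assumes the RM is reachable at http://localhost:8088 through the port-forward above; the helper names are hypothetical.

```python
"""List available YARN resources per NodeManager via the RM REST API."""
import json
import urllib.request
from typing import List, Tuple

RM_URL = "http://localhost:8088"  # ASSUMES the kubectl port-forward shown above


def parse_nodes(payload: dict) -> List[Tuple[str, int, int]]:
    """Return (node id, available vcores, available memory MB) for each node."""
    nodes = (payload.get("nodes") or {}).get("node") or []
    return [
        (n["id"], int(n.get("availableVirtualCores", 0)), int(n.get("availMemoryMB", 0)))
        for n in nodes
    ]


def fetch_nodes(base_url: str = RM_URL) -> List[Tuple[str, int, int]]:
    """Query /ws/v1/cluster/nodes and parse the JSON response."""
    req = urllib.request.Request(
        f"{base_url}/ws/v1/cluster/nodes", headers={"Accept": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return parse_nodes(json.load(resp))
```

With the port-forward running, fetch_nodes() returns one tuple per NodeManager that the RM reports; parse_nodes is kept separate so it can be tried on a saved response.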

The VCores Avail and Mem Avail columns will be exactly the same as the batch resources of the corresponding K8s nodes.
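When comparing the two sides, keep the units in mind: kubernetes.io/batch-cpu is in milli-cores (60646 is about 60.6 cores) and kubernetes.io/batch-memory is in bytes, while YARN shows VCores and memory in MB. The sketch below converts a node's batch resources and checks them against the YARN figures. Truncating to whole cores and treating the YARN memory unit as MiB (2^20 bytes) are assumptions for illustration, not the copilot's documented conversion, so the memory divisor is a parameter. Avail is what remains after allocations, so compare on a node with no running YARN containers.

```python
"""Compare a node's Koordinator batch resources with YARN's view of it."""
from typing import Tuple

MILLI_PER_CORE = 1000  # kubernetes.io/batch-cpu is expressed in milli-cores
MIB = 2 ** 20  # ASSUMED YARN memory unit; pass 10**6 instead if that matches your setup


def batch_to_yarn(batch_cpu_milli: int, batch_memory_bytes: int,
                  mem_unit: int = MIB) -> Tuple[int, int]:
    """Convert batch-cpu (milli-cores) and batch-memory (bytes) to
    (vcores, memory) in YARN units, truncating any remainder (an assumption)."""
    return batch_cpu_milli // MILLI_PER_CORE, batch_memory_bytes // mem_unit


def matches_yarn(batch_cpu_milli: int, batch_memory_bytes: int,
                 yarn_vcores: int, yarn_memory: int, mem_unit: int = MIB) -> bool:
    """True if the YARN figures equal the converted batch resources."""
    expected = batch_to_yarn(batch_cpu_milli, batch_memory_bytes, mem_unit)
    return expected == (yarn_vcores, yarn_memory)
```

For the first node in the kubectl output above, batch_to_yarn(60646, 245976973438) gives 60 vcores and 234581 MiB.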

Spark, Flink, and other computing engines have long supported submitting jobs to YARN; check the official manuals, such as those for Spark and Flink, before you start.

Note that the hadoop-yarn demo chart in the Koordinator repo already integrates the Spark client. You can execute the following command to submit an example job, and get its running status through the web UI of the ResourceManager.

```bash
$ kubectl exec -n hadoop-yarn -it ${yarn-rm-pod-name} -c yarn-rm -- \
    /opt/spark/bin/spark-submit --master yarn --deploy-mode cluster \
    --class org.apache.spark.examples.SparkPi \
    /opt/spark/examples/jars/spark-examples_2.12-3.3.3.jar 1000
```
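The job status shown in the ResourceManager web UI is also available from its REST API at /ws/v1/cluster/apps. As a minimal sketch, assuming the RM is reachable at http://localhost:8088 through the port-forward used earlier, the hypothetical helpers below list applications with their `state` and `finalStatus` fields, so you can follow the SparkPi job from ACCEPTED to RUNNING to FINISHED.

```python
"""Summarise YARN applications from the ResourceManager REST API."""
import json
import urllib.parse
import urllib.request
from typing import List, Optional, Tuple

RM_URL = "http://localhost:8088"  # ASSUMES the earlier kubectl port-forward


def parse_apps(payload: dict) -> List[Tuple[str, str, str, str]]:
    """Return (id, name, state, finalStatus) per app; an empty list if "apps" is null."""
    apps = (payload.get("apps") or {}).get("app") or []
    return [(a["id"], a.get("name", ""), a["state"], a.get("finalStatus", "")) for a in apps]


def fetch_apps(base_url: str = RM_URL,
               states: Optional[str] = None) -> List[Tuple[str, str, str, str]]:
    """Query /ws/v1/cluster/apps, optionally filtered by comma-separated states."""
    url = f"{base_url}/ws/v1/cluster/apps"
    if states:
        url += "?" + urllib.parse.urlencode({"states": states})
    req = urllib.request.Request(url, headers={"Accept": "application/json"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        return parse_apps(json.load(resp))
```

For example, fetch_apps(states="ACCEPTED,RUNNING") lists only the applications that have not finished yet.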
