By Wang Chen and Liu Jun

During the 2025 Spring Festival, several top-tier open-source Chinese AI models released new versions that perform on par with OpenAI's proprietary models.

• On January 20, DeepSeek-R1 was launched, demonstrating performance comparable to OpenAI o1 in maths, coding, and reasoning tasks [1].

• On January 27, Qwen2.5-1M was introduced, supporting a context window of 1 million tokens. Qwen2.5-1M 14B achieves performance similar to GPT-4o-mini in short-context tasks while offering a context window eight times larger. In long-context tasks, Qwen2.5-1M 14B consistently outperforms GPT-4o-mini across multiple benchmark datasets [2].

• On January 27, DeepSeek unveiled Janus-Pro, a unified, multimodal understanding and generation model. In benchmark evaluations, Janus-Pro-7B surpassed both OpenAI's DALL-E 3 and Stability AI's Stable Diffusion in GenEval and DPG-Bench [3].

• On January 28, Qwen2.5-VL was released. This flagship vision-language model surpasses GPT-4o in document understanding, visual question answering, video understanding, and visual agent tasks [4].

• On January 29, Qwen2.5-Max was launched, outperforming DeepSeek-V3 and GPT-4o in benchmark assessments like Arena-Hard, LiveBench, LiveCodeBench, and GPQA-Diamond [5].

There's a growing consensus in the industry that open-source models have evolved from simply following their closed-source counterparts to taking the lead in AI development. DeepSeek and Qwen are the frontrunners among current open-source projects. This article walks you through deploying an AI model, building a test application, and leveraging the model's capabilities to perform tasks.

• Free-of-charge computing: The computing work is done on your device.

• Free-of-charge API calls: API requests are made in your local network.

• Securing sensitive data: Sensitive data stays on your device, which makes local deployment an ideal option for individual developers.

• Device limitations: A local device only supports a quantized or distilled version of DeepSeek-R1, not the full version with 671 billion parameters. To deploy DeepSeek-R1 FP4, a device requires at least 350 GB of memory.

• Accessibility: DeepSeek-R1 is released under the MIT license, which permits distillation at no charge. Qwen models distilled from DeepSeek-R1 are also available for download and use.
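The 350 GB figure for the FP4 version can be sanity-checked with simple arithmetic: 671 billion parameters at 4 bits (0.5 bytes) each come to about 335.5 GB for the weights alone, before any KV cache or runtime overhead. The following is a minimal sketch of that calculation, assuming 1 GB = 10^9 bytes; the class and method names are illustrative and not part of any library.

```java
import java.util.Locale;

public class WeightMemoryEstimate {

    /**
     * Approximate size of the model weights in gigabytes (1 GB = 10^9 bytes).
     * Billions of parameters times bytes per parameter gives gigabytes directly.
     */
    public static double weightGigabytes(double billionParams, int bitsPerParam) {
        double bytesPerParam = bitsPerParam / 8.0;
        return billionParams * bytesPerParam;
    }

    public static void main(String[] args) {
        // Full DeepSeek-R1 (671B parameters) quantized to 4 bits per parameter.
        double fp4 = weightGigabytes(671, 4);
        System.out.println(String.format(Locale.ROOT, "671B at 4 bits: %.1f GB", fp4));
    }
}
```

The estimate covers weights only, so the real requirement is somewhat higher, which is consistent with the 350 GB minimum quoted above.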

This section guides you through deploying a DeepSeek model on your local device and creating a Spring AI Alibaba application that uses it.

1. Open the Ollama website (https://ollama.com). The page shows that Ollama supports deploying DeepSeek-R1 locally:

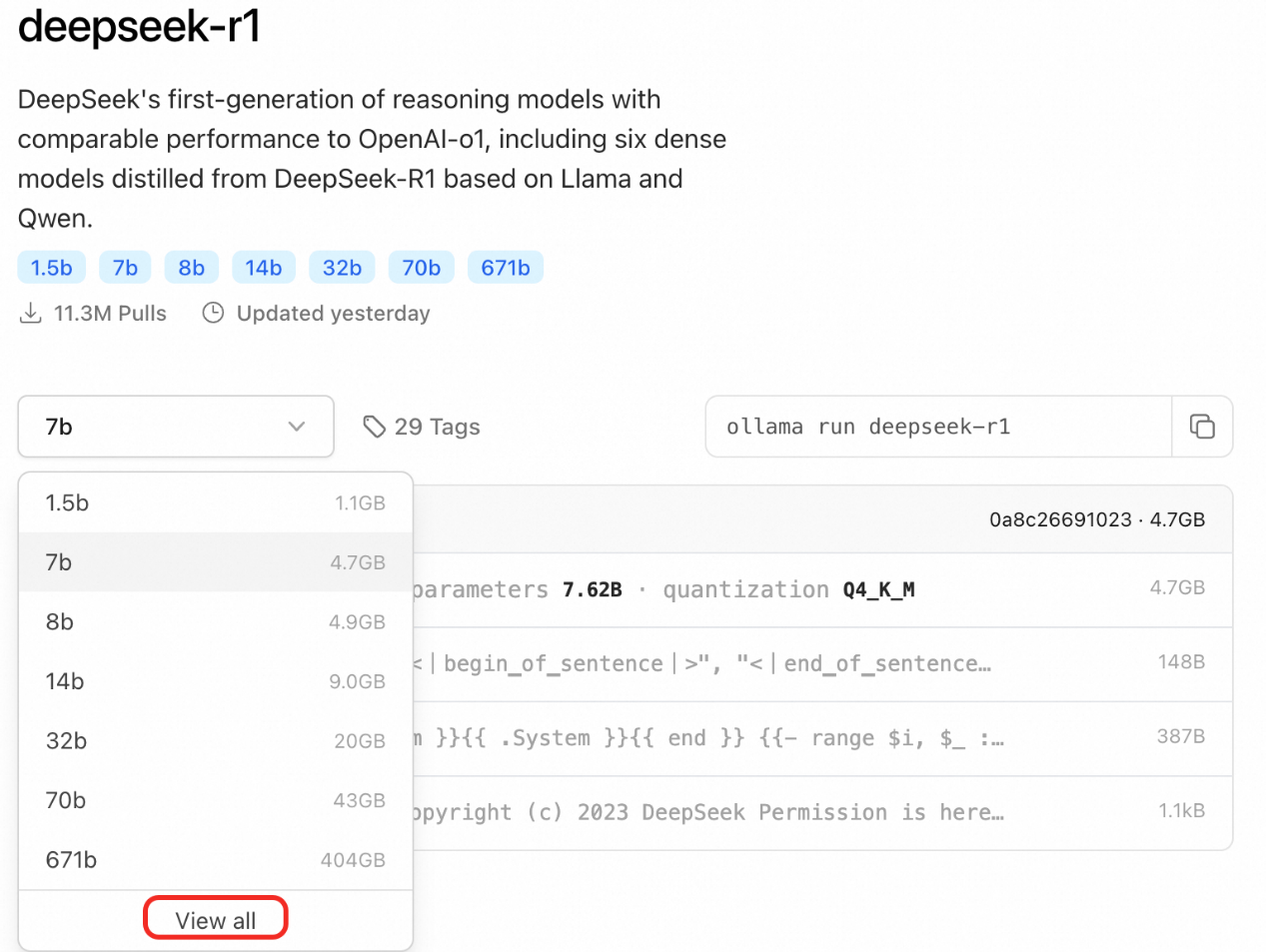

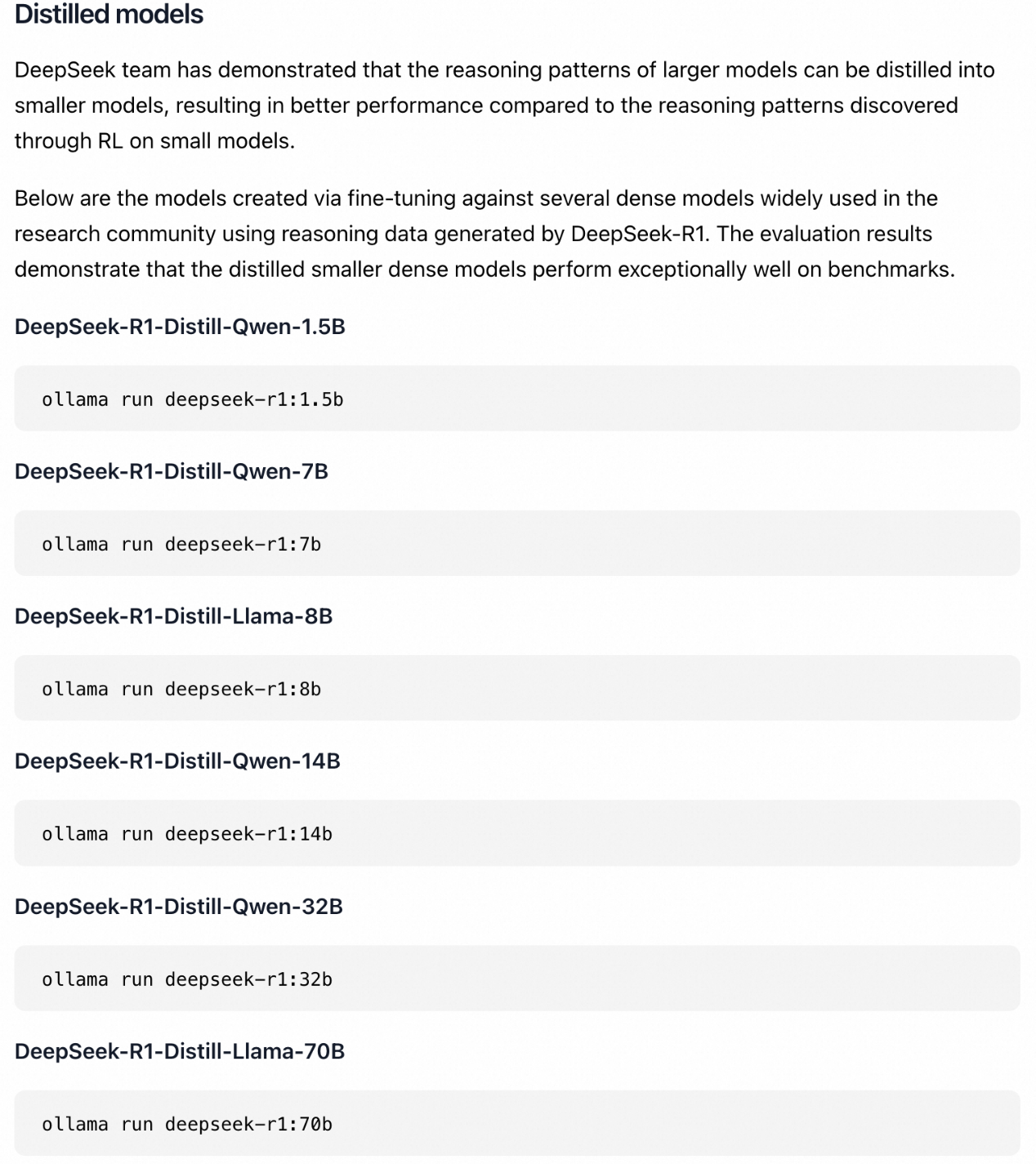

You can click DeepSeek-R1 to see the detailed introduction:

2. Click Download and install Ollama. On macOS, after installation, press Command + Space, open Terminal, and run the following command:

Terminal window

    # Install DeepSeek-R1-Distill-Qwen-1.5B.
    ollama run deepseek-r1:1.5b

On Linux, install Ollama with the install script first, and then run the model:

Terminal window

    # Install Ollama
    curl -fsSL https://ollama.com/install.sh | sh

    # Install DeepSeek-R1-Distill-Qwen-1.5B.
    ollama run deepseek-r1:1.5b

3. Choose a model size appropriate for your device.

DeepSeek-R1 comes in these sizes: 1.5B, 7B, 8B, 14B, 32B, 70B, and 671B. In this guide, the 1.5B model is chosen for demo purposes. Generally speaking, deploying the 8B model requires 8 GB of memory, and the 32B model requires 24 GB of memory.
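Once `ollama run` has pulled the model, you can check that the local server responds before building an application on top of it. Ollama listens on http://localhost:11434 by default and exposes a `/api/generate` endpoint. The sketch below uses only the JDK's built-in HTTP client; the class name, the prompt text, and the hand-rolled JSON escaping are illustrative assumptions, and a real application would normally use a JSON library.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

public class OllamaSmokeTest {

    /** Builds the JSON body for a non-streaming request to Ollama's /api/generate endpoint. */
    public static String buildRequestBody(String model, String prompt) {
        return "{\"model\":\"" + escape(model) + "\",\"prompt\":\"" + escape(prompt)
                + "\",\"stream\":false}";
    }

    /** Minimal JSON string escaping: backslashes, double quotes, and newlines only. */
    static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"").replace("\n", "\\n");
    }

    public static void main(String[] args) throws InterruptedException {
        HttpRequest request = HttpRequest.newBuilder()
                .uri(URI.create("http://localhost:11434/api/generate"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(
                        buildRequestBody("deepseek-r1:1.5b", "Say hello in one sentence.")))
                .build();
        try {
            HttpResponse<String> response = HttpClient.newHttpClient()
                    .send(request, HttpResponse.BodyHandlers.ofString());
            System.out.println("HTTP " + response.statusCode());
            System.out.println(response.body());
        } catch (IOException e) {
            // Typically means Ollama is not running or is listening on another port.
            System.out.println("Could not reach Ollama: " + e.getMessage());
        }
    }
}
```

If the request succeeds, the response body is a JSON object whose `response` field holds the generated text.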

Create an application with Spring AI Alibaba, and call the local model

For the full code, see https://github.com/springaialibaba/spring-ai-alibaba-examples/tree/main/spring-ai-alibaba-chat-example/ollama-deepseek-chat

Download the sample code:

Terminal window

    git clone https://github.com/springaialibaba/spring-ai-alibaba-examples.git
    cd spring-ai-alibaba-examples/spring-ai-alibaba-chat-example/ollama-deepseek-chat/ollama-deepseek-chat-client

Compile and run the application:

Terminal window

    ./mvnw compile exec:java -Dexec.mainClass="com.alibaba.cloud.ai.example.chat.deepseek.OllamaChatClientApplication"

Enter http://localhost:10006/ollama/chat-client/simple/chat in your browser to use DeepSeek locally.
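DeepSeek-R1 is a reasoning model, and when served through Ollama its replies may begin with a reasoning trace wrapped in `<think>...</think>` tags before the final answer. If your application should show only the answer, you can strip that block from the returned text. The helper below is a hypothetical sketch using the JDK's regex support; it is not part of Spring AI Alibaba or Ollama, and it assumes the tags appear literally in the response string.

```java
import java.util.regex.Pattern;

public class ThinkBlockFilter {

    // DOTALL lets '.' match newlines; the reluctant quantifier stops at the first closing tag.
    private static final Pattern THINK_BLOCK =
            Pattern.compile("<think>.*?</think>", Pattern.DOTALL);

    /** Removes every <think>...</think> span and trims the surrounding whitespace. */
    public static String stripThinking(String response) {
        return THINK_BLOCK.matcher(response).replaceAll("").trim();
    }

    public static void main(String[] args) {
        String raw = "<think>\nThe user greets me.\n</think>\n\nHello! How can I help?";
        System.out.println(stripThinking(raw)); // prints "Hello! How can I help?"
    }
}
```

Text without any `<think>` block passes through unchanged apart from trimming, so the helper is safe to apply to every reply.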

Building an application with Spring AI Alibaba works like building any Spring Boot application. The only additions are the spring-ai-alibaba-starter dependency and an injected ChatClient bean.

Add the spring-ai-alibaba-starter dependency. You also need to add the spring-ai-ollama-spring-boot-starter dependency to run the DeepSeek model with Ollama:

    <dependency>
        <groupId>org.springframework.ai</groupId>
        <artifactId>spring-ai-ollama-spring-boot-starter</artifactId>
        <version>1.0.0-M5</version>
    </dependency>

Configure an address for the model. Specify the base URL and name of the model in application.properties:

    spring.ai.ollama.base-url=http://localhost:11434
    # Use the tag you pulled with "ollama run"; this guide uses the 1.5B model.
    spring.ai.ollama.chat.model=deepseek-r1:1.5b

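Inject ChatClient:

    import org.springframework.ai.chat.client.ChatClient;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.RequestParam;
    import org.springframework.web.bind.annotation.RestController;

    @RestController
    public class ChatController {

        private final ChatClient chatClient;

        // Spring AI auto-configures a ChatClient.Builder backed by the Ollama chat model.
        public ChatController(ChatClient.Builder builder) {
            this.chatClient = builder.build();
        }

        // Sends the "input" query parameter to the model and returns its reply as text.
        @GetMapping("/chat")
        public String chat(@RequestParam("input") String input) {
            return this.chatClient.prompt()
                    .user(input)
                    .call()
                    .content();
        }
    }

Spring AI Alibaba DingTalk Group ID: 105120009405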

[1] https://github.com/deepseek-ai/DeepSeek-R1

[2] https://qwenlm.github.io/blog/qwen2.5-1m

[3] https://github.com/deepseek-ai/Janus?tab=readme-ov-file

[4] https://qwenlm.github.io/blog/qwen2.5-vl/

[5] https://qwenlm.github.io/zh/blog/qwen2.5-max/
