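This topic describes how to use Data Transmission Service (DTS) to migrate data from an Amazon Aurora MySQL cluster to a PolarDB for MySQL cluster. DTS supports schema migration, full data migration, and incremental data migration. When you configure a data migration task, you can select all of the supported migration types to ensure service continuity.

Prerequisites

DTS can connect to the source Amazon Aurora MySQL cluster.

We recommend that you set [Publicly accessible] to [Yes] in the network and security settings of the source Amazon Aurora MySQL cluster. Then, when you configure the data migration task, set Access Method for the source database to Public IP Address. This way, DTS can access the source Amazon Aurora MySQL cluster over the Internet.

Note: For information about how to connect DTS to the source Amazon Aurora MySQL cluster by using a VPN gateway, see "Connect an Alibaba Cloud VPC to an Amazon VPC by using IPsec-VPN".

A PolarDB for MySQL cluster is created. For more information, see "Custom purchase".

The available storage space of the PolarDB for MySQL cluster is larger than the total size of the data in the Amazon Aurora MySQL cluster.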

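Limits

During full data migration, DTS uses read and write resources of the source and destination databases. This may increase the load on the database servers. If the databases have poor performance, low specifications, or large data volumes, the database services may become unavailable. For example, DTS occupies a large amount of read and write resources if a large number of slow SQL queries are running on the source database, if tables do not have primary keys, or if a deadlock occurs in the destination database. Before you migrate data, evaluate the impact of data migration on the performance of the source and destination databases. We recommend that you migrate data during off-peak hours, for example, when the CPU utilization of both the source and destination databases is less than 30%.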
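As a rough illustration of the off-peak guideline above, the following Python sketch checks CPU utilization samples against the 30% example threshold. It assumes that you have already collected CPU samples for both databases yourself (for example, from Amazon CloudWatch and the PolarDB console); the function name `is_good_migration_window` is hypothetical and is not part of any DTS API.

```python
from typing import Iterable

# Example threshold from the text: migrate when CPU utilization is below 30%.
CPU_THRESHOLD_PERCENT = 30.0


def is_good_migration_window(
    source_cpu: Iterable[float],
    destination_cpu: Iterable[float],
    threshold: float = CPU_THRESHOLD_PERCENT,
) -> bool:
    """Return True if every CPU sample on both databases is below the threshold.

    The samples are percentages that you collect yourself; this helper only
    evaluates them and does not query any monitoring service.
    """
    samples = list(source_cpu) + list(destination_cpu)
    if not samples:
        raise ValueError("at least one CPU sample is required")
    return max(samples) < threshold
```

For example, samples of `[12.5, 20.0]` for the source and `[8.0, 29.9]` for the destination pass the check, while a single source sample of `35.0` fails it. Using the maximum sample is a deliberately conservative choice: one busy interval is enough to postpone the migration.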

The tables to be migrated in the source database must have PRIMARY KEY or UNIQUE constraints, and all fields must be unique. Otherwise, the destination database may contain duplicate data records.
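To find tables that would violate this requirement before you start, you can compare the list of tables with the constraints recorded in MySQL's `information_schema`. The sketch below assumes that you have already fetched the table names from `information_schema.TABLES` and the constraint rows from `information_schema.TABLE_CONSTRAINTS` for one schema (the queries in the comments are one way to do that); the helper name is hypothetical. It only checks whether a PRIMARY KEY or UNIQUE constraint exists, not whether the existing data is actually unique.

```python
from typing import Iterable, List, Tuple

# all_tables can come from, for example:
#   SELECT TABLE_NAME FROM information_schema.TABLES
#   WHERE TABLE_SCHEMA = 'your_database' AND TABLE_TYPE = 'BASE TABLE';
# Each constraint row is (TABLE_NAME, CONSTRAINT_TYPE), for example from:
#   SELECT TABLE_NAME, CONSTRAINT_TYPE FROM information_schema.TABLE_CONSTRAINTS
#   WHERE TABLE_SCHEMA = 'your_database';
ConstraintRow = Tuple[str, str]

KEY_CONSTRAINTS = {"PRIMARY KEY", "UNIQUE"}


def tables_without_key(
    all_tables: Iterable[str], constraints: Iterable[ConstraintRow]
) -> List[str]:
    """Return the tables that have neither a PRIMARY KEY nor a UNIQUE constraint."""
    keyed = {table for table, ctype in constraints if ctype.upper() in KEY_CONSTRAINTS}
    return sorted(table for table in all_tables if table not in keyed)
```

For example, with the tables `orders`, `audit_log`, and `users`, where only `orders` has a PRIMARY KEY and only `users` has a UNIQUE constraint, the function returns `['audit_log']`.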

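DTS uses the ROUND(COLUMN,PRECISION) function to retrieve values from columns of the FLOAT or DOUBLE data type. If you do not specify a precision, DTS sets the precision to 38 digits for the FLOAT data type and to 308 digits for the DOUBLE data type. Check whether these precision settings meet your business requirements.

If the name of the source database is invalid (for example, it does not comply with the database naming conventions of PolarDB for MySQL), you must create a database in the PolarDB for MySQL cluster before you configure the data migration task.

Note: For information about how to create a database and about the database naming conventions, see "Database management".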
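The following Python sketch only illustrates the form of the ROUND(COLUMN,PRECISION) expression and the default precisions described above (38 digits for FLOAT, 308 digits for DOUBLE). It is not the query that DTS actually runs; the function name and the table and column names are hypothetical. You could run a generated statement against the source database to preview how values in a column would be returned.

```python
from typing import Optional

# Default precisions stated in the text when no precision is specified.
DEFAULT_PRECISION = {"FLOAT": 38, "DOUBLE": 308}


def rounded_select(table: str, column: str, data_type: str,
                   precision: Optional[int] = None) -> str:
    """Build a SELECT that applies ROUND(column, precision) to a FLOAT/DOUBLE column."""
    dtype = data_type.upper()
    if dtype not in DEFAULT_PRECISION:
        raise ValueError(f"unsupported data type: {data_type}")
    # Fall back to the documented default when no precision is given.
    digits = DEFAULT_PRECISION[dtype] if precision is None else precision
    return f"SELECT ROUND(`{column}`, {digits}) FROM `{table}`"
```

For example, `rounded_select("products", "price", "float")` returns ``SELECT ROUND(`price`, 38) FROM `products` ``.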

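If a data migration task fails, DTS automatically resumes the task. Before you switch your workloads to the destination database, stop or release the data migration task. Otherwise, after the task is resumed, the data in the source database overwrites the data in the destination database.

Billing

Migration type | Task configuration fee | Internet traffic fee |

Schema migration and full data migration | Free of charge. | Charged only when data is migrated from Alibaba Cloud over the Internet. For more information, see "Billing overview". |

Incremental data migration | Charged. For more information, see "Billing overview". | Same as for schema migration and full data migration. |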

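Migration types

Schema migration

DTS migrates the schemas of the selected objects to the PolarDB for MySQL cluster. DTS supports schema migration for the following types of objects: table, view, trigger, stored procedure, and function. DTS does not support schema migration for events.

Note: During schema migration, DTS changes the value of the SECURITY attribute from DEFINER to INVOKER for views, stored procedures, and stored functions.

DTS does not migrate user information. To call a view, stored procedure, or stored function in the destination database, you must grant read and write permissions to the account that acts as the INVOKER.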
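Because DTS changes the SECURITY attribute to INVOKER and does not migrate user accounts, the account that calls a migrated view or routine needs privileges in the destination database. The sketch below generates one possible MySQL GRANT statement for such an account. The privilege list (including EXECUTE, which MySQL requires for calling stored procedures and functions) and the database, user, and host names are assumptions; narrow them to what your workload actually needs.

```python
def invoker_grant(database: str, user: str, host: str = "%") -> str:
    """Return a GRANT statement giving an invoker read/write and EXECUTE privileges.

    The inputs are interpolated without escaping, so pass only trusted names.
    """
    # SELECT/INSERT/UPDATE/DELETE cover read and write access to tables and views;
    # EXECUTE is needed to call stored procedures and stored functions.
    privileges = "SELECT, INSERT, UPDATE, DELETE, EXECUTE"
    return f"GRANT {privileges} ON `{database}`.* TO '{user}'@'{host}';"
```

For example, `invoker_grant("app_db", "app_user")` produces a statement that you would run on the PolarDB for MySQL cluster with a privileged account.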

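Full data migration

DTS migrates the existing data of the selected objects from the Amazon Aurora MySQL cluster to the PolarDB for MySQL cluster.

Note: During full data migration, concurrent INSERT operations cause fragmentation in the tables of the destination database. As a result, after full data migration is complete, the used tablespace of the destination database is larger than that of the source database.

Incremental data migration

After full data migration is complete, DTS retrieves binary log files from the Amazon Aurora MySQL cluster and then synchronizes incremental data from the Amazon Aurora MySQL cluster to the PolarDB for MySQL cluster. Incremental data migration allows you to ensure service continuity when you migrate data between MySQL databases.

Permissions required for database accounts

Database | Schema migration | Full data migration | Incremental data migration |

Amazon Aurora MySQL | SELECT permission on the objects to be migrated | SELECT permission on the objects to be migrated | SELECT permission on the objects to be migrated, and the REPLICATION SLAVE, REPLICATION CLIENT, and SHOW VIEW permissions |

PolarDB for MySQL | Read and write permissions on the objects to be migrated | Read and write permissions on the objects to be migrated | Read and write permissions on the objects to be migrated |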
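The source-account permissions in the table above can be expressed as MySQL GRANT statements. The sketch below is an illustration under assumptions: the database, user, and host names are placeholders, and because REPLICATION SLAVE and REPLICATION CLIENT are global privileges in MySQL, they are granted `ON *.*` rather than on a single database.

```python
from typing import List


def source_account_grants(database: str, user: str, host: str = "%",
                          incremental: bool = True) -> List[str]:
    """Return GRANT statements for the Amazon Aurora MySQL source account.

    The inputs are interpolated without escaping, so pass only trusted names.
    """
    account = f"'{user}'@'{host}'"
    if not incremental:
        # Schema migration and full data migration only need SELECT.
        return [f"GRANT SELECT ON `{database}`.* TO {account};"]
    return [
        # Incremental migration also needs SHOW VIEW on the migrated objects...
        f"GRANT SELECT, SHOW VIEW ON `{database}`.* TO {account};",
        # ...and the global replication privileges for reading binary logs.
        f"GRANT REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO {account};",
    ]
```

Set `incremental=False` if you select only schema migration and full data migration.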

データベースアカウントの作成方法とデータベースアカウントへの権限の付与方法の詳細については、以下のトピックをご参照ください。

Amazon Aurora MySQL クラスタ: 自己管理 MySQL データベースのアカウントを作成し、バイナリロギングを構成する

PolarDB for MySQL クラスタ: データベースアカウントの作成と管理

準備

Amazon Aurora コンソールにログオンします。

左側のナビゲーションウィンドウで、[データベース] をクリックします。

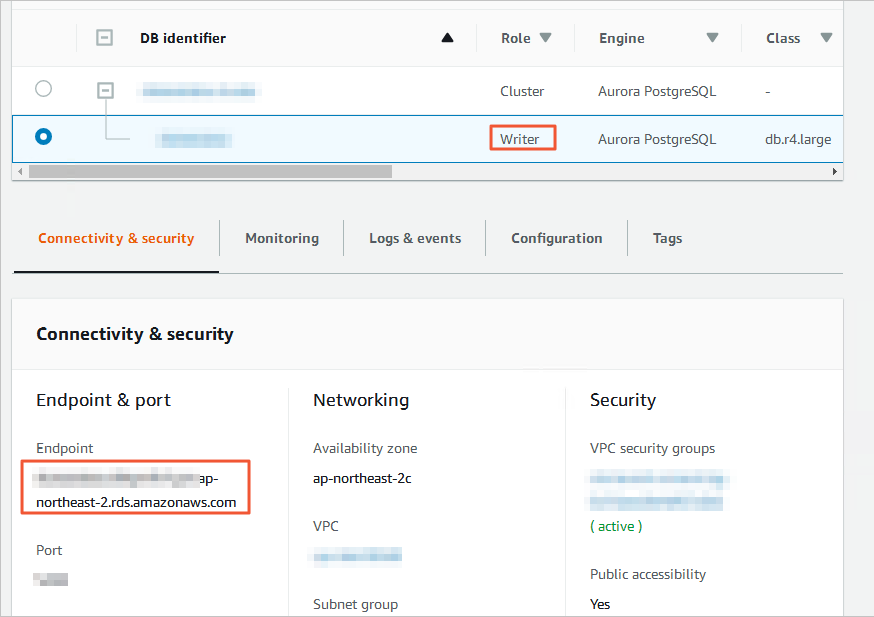

[DB 識別子] が [ロール] [ライターインスタンス] であるノードをクリックします。

[接続とセキュリティ] タブで、ノードに対応する VPC セキュリティグループの名前をクリックします。

[セキュリティグループ] ページで、構成するセキュリティグループの ID をクリックします。

[インバウンドルール] タブで、[インバウンドルールを編集] をクリックします。

[インバウンドルールを編集] ページで、[ルールを追加] をクリックし、対応するリージョンにある DTS サーバーの CIDR ブロックをインバウンドルールに追加して、[ルールを保存] をクリックします。詳細については、「DTS サーバーの CIDR ブロックを追加する」をご参照ください。

説明宛先データベースと同じリージョンにある DTS サーバーの CIDR ブロックのみを追加する必要があります。たとえば、ソースデータベースがシンガポールリージョンにあり、宛先データベースが中国 (杭州) リージョンにあるとします。中国 (杭州) リージョンにある DTS サーバーの CIDR ブロックのみを追加する必要があります。

必要な CIDR ブロックをすべて一度にインバウンドルールに追加できます。

他に質問がある場合は、Amazon の公式ドキュメントを参照するか、テクニカルサポートにお問い合わせください。
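Before you save the inbound rules, you may want to confirm that every required DTS CIDR block is covered. The sketch below uses Python's standard `ipaddress` module to list the required blocks that no inbound rule covers. The CIDR values in the example are documentation addresses (RFC 5737) used as placeholders, not real DTS server ranges; take the actual ranges from "Add the CIDR blocks of DTS servers".

```python
import ipaddress
from typing import Iterable, List


def uncovered_cidrs(required: Iterable[str], inbound_rules: Iterable[str]) -> List[str]:
    """Return the required CIDR blocks that are not contained in any inbound rule."""
    rules = [ipaddress.ip_network(rule, strict=False) for rule in inbound_rules]
    missing = []
    for cidr in required:
        network = ipaddress.ip_network(cidr, strict=False)
        # subnet_of() raises TypeError for mixed IPv4/IPv6, so compare versions first.
        covered = any(
            network.version == rule.version and network.subnet_of(rule)
            for rule in rules
        )
        if not covered:
            missing.append(cidr)
    return missing
```

For example, `uncovered_cidrs(["203.0.113.0/26", "198.51.100.10/32"], ["203.0.113.0/24"])` reports only `198.51.100.10/32` as missing.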

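Log on to the source Amazon Aurora MySQL cluster and specify the retention period of binary log files. If you do not need to perform incremental data migration, skip this step.

call mysql.rds_set_configuration('binlog retention hours', 24); /* Set the retention period of binary log files to 24 hours */

Note: The preceding statement sets the retention period of binary log files to 24 hours. The maximum retention period is 168 hours (7 days).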
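If you script this step, a small helper can build the statement and reject values outside the range stated above. This is a sketch: the 168-hour upper bound is taken from the note above, the lower bound of 1 hour is an assumption made for the example, and the function name is hypothetical.

```python
MAX_RETENTION_HOURS = 168  # 7 days, as stated in the note above
MIN_RETENTION_HOURS = 1    # assumption for this sketch


def binlog_retention_call(hours: int) -> str:
    """Build the mysql.rds_set_configuration call for binary log retention."""
    if not MIN_RETENTION_HOURS <= hours <= MAX_RETENTION_HOURS:
        raise ValueError(
            f"hours must be between {MIN_RETENTION_HOURS} and {MAX_RETENTION_HOURS}, got {hours}"
        )
    return f"CALL mysql.rds_set_configuration('binlog retention hours', {hours});"
```

After you run the generated statement, you can confirm the current value with `CALL mysql.rds_show_configuration;`.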

You must enable binary logging for the Amazon Aurora MySQL cluster and set the binlog_format parameter to row. If the MySQL version is 5.6 or later, you must also set the binlog_row_image parameter to full. For more information, see the official Amazon documentation or contact technical support.
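After you change the cluster parameters, you can verify them by running `SHOW VARIABLES` on the source cluster. The following sketch checks the returned values. It assumes that you pass in the variables as a name-to-value mapping (for example, built from the rows returned by `SHOW VARIABLES WHERE Variable_name IN ('log_bin', 'binlog_format', 'binlog_row_image')`) together with the MySQL version; the helper name is hypothetical.

```python
from typing import Dict, List, Tuple


def binlog_setting_problems(variables: Dict[str, str],
                            mysql_version: Tuple[int, int]) -> List[str]:
    """Return the problems found in the binary logging settings (empty if none)."""
    values = {name.lower(): str(value).upper() for name, value in variables.items()}
    problems = []
    if values.get("log_bin") != "ON":
        problems.append("binary logging (log_bin) is not enabled")
    if values.get("binlog_format") != "ROW":
        problems.append("binlog_format must be ROW")
    # binlog_row_image exists in MySQL 5.6 and later.
    if mysql_version >= (5, 6) and values.get("binlog_row_image") != "FULL":
        problems.append("binlog_row_image must be FULL")
    return problems
```

An empty list means that the settings match the requirements described above.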

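Procedure (new DTS console)

Go to the migration task list page in the destination region. Use one of the following methods:

From the DTS console

Log on to the Data Transmission Service (DTS) console.

In the left-side navigation pane, click Data Migration.

In the upper-left corner of the page, select the region where the migration instance resides.

From the DMS console

Note: The actual steps may vary based on the mode and layout of the DMS console. For more information, see "Simple mode" and "Customize the layout and style of the DMS console".

Log on to the Data Management (DMS) console.

In the top navigation bar, open the data migration task page.

To the right of Data Migration Tasks, select the region where the migration instance resides.

Click Create Task to go to the task configuration page.

Configure the source and destination databases.

Warning: After you select the source and destination instances, we recommend that you carefully read the [Limits] displayed at the top of the page. Otherwise, the task may fail or data inconsistency may occur.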

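Section | Parameter | Description |

N/A | Task Name | DTS automatically generates a task name. We recommend that you specify a descriptive name that makes the task easy to identify. The task name does not need to be unique. |

Source Database | Select Existing DMS Database Instance | Specifies whether to use an existing instance, based on your business requirements. If you use an existing instance, DTS automatically populates the parameters of the instance. If you do not use an existing instance, you must enter the database information described in the following rows. Note: You can click [Add DMS Database Instance] to register a database instance with the DMS console. For more information, see "Register an Alibaba Cloud database instance" and "Register a database hosted on a third-party cloud service or a self-managed database". In the DTS console, you can register a database with DTS on the [Database Connections] page or on the new configuration page. For more information, see "Manage database connections". |

Source Database | Database Type | The type of the source database. Select [MySQL]. |

Source Database | Access Method | The access method of the source database. Select [Public IP Address]. |

Source Database | Instance Region | The region in which the Amazon Aurora MySQL cluster resides. Note: If the region of the Amazon Aurora MySQL cluster is not displayed in the drop-down list, select the region that is geographically closest to the cluster. |

Source Database | [Domain Name or IP] | The endpoint that is used to access the Amazon Aurora MySQL cluster. Note: You can obtain the endpoint on the basic information page of the Amazon Aurora MySQL cluster. |

Source Database | [Port Number] | The service port number of the Amazon Aurora MySQL cluster. Default value: [3306]. |

Source Database | Database Account | The database account of the Amazon Aurora MySQL cluster. For information about the permissions required for the account, see the "Permissions required for database accounts" section of this topic. |

Source Database | Database Password | The password of the account. |

Source Database | [Encryption] | Specifies whether to encrypt the connection to the source database. Select [Non-encrypted] or [SSL-encrypted] based on your business requirements. If SSL encryption is disabled for the Amazon Aurora MySQL cluster, select [Non-encrypted]. If SSL encryption is enabled for the Amazon Aurora MySQL cluster, select [SSL-encrypted]; you must also upload a [CA Certificate] and specify the [CA Key]. |

Destination Database | Select Existing DMS Database Instance | Specifies whether to use an existing instance, based on your business requirements. If you use an existing instance, DTS automatically populates the parameters of the instance. If you do not use an existing instance, you must enter the database information described in the following rows. Note: You can click [Add DMS Database Instance] to register a database instance with the DMS console. For more information, see "Register an Alibaba Cloud database instance" and "Register a database hosted on a third-party cloud service or a self-managed database". In the DTS console, you can register a database with DTS on the [Database Connections] page or on the new configuration page. For more information, see "Manage database connections". |

Destination Database | Database Type | The type of the destination database. Select PolarDB for MySQL. |

Destination Database | Access Method | The access method of the destination database. Select Alibaba Cloud Instance. |

Destination Database | Instance Region | The region in which the destination PolarDB for MySQL cluster resides. |

Destination Database | PolarDB Cluster ID | The ID of the destination PolarDB for MySQL cluster. |

Destination Database | Database Account | The database account of the destination PolarDB for MySQL cluster. For information about the permissions required for the account, see the "Permissions required for database accounts" section of this topic. |

Destination Database | Database Password | The password of the account. |

Destination Database | [Encryption] | Specifies whether to encrypt the connection to the destination database. Configure this parameter based on your business requirements. For more information about the SSL encryption feature, see "Configure SSL encryption". |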

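After you complete the configuration, click Test Connectivity and Proceed at the bottom of the page, and then click Test Connectivity in the CIDR Blocks of DTS Servers dialog box.

Note: Make sure that the CIDR blocks of DTS servers are added, automatically or manually, to the security settings of the source and destination databases to allow access from DTS servers. For more information, see "Add the CIDR blocks of DTS servers to the security settings of on-premises databases".

Configure the objects to be migrated.

On the Configure Objects page, configure the objects that you want to migrate.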

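Parameter | Description |

[Migration Types] | To perform only full data migration, we recommend that you select both Schema Migration and Full Data Migration. To migrate data with minimal downtime, we recommend that you select Schema Migration, Full Data Migration, and Incremental Data Migration. Note: If you do not select Schema Migration, make sure that the destination database contains the databases and tables that receive the data, and use the object name mapping feature for the objects in the Selected Objects section as needed. If you do not select Incremental Data Migration, do not write new data to the source instance during data migration. This ensures data consistency. |

[Method to Migrate Triggers in Source Database] | The method that is used to migrate triggers from the source database. Select a method based on your business requirements. If no triggers need to be migrated, you do not need to configure this parameter. For more information, see "Synchronize or migrate triggers from the source database". Note: You can configure this parameter only if you select [Schema Migration] for [Migration Types]. |

[Processing Mode of Conflicting Tables] | Precheck and Report Errors: checks whether the destination database contains tables that have the same names as tables in the source database. If the destination database does not contain such tables, the precheck is passed. Otherwise, an error is returned during the precheck and the data migration task cannot be started. Note: If the destination database contains tables with the same names as tables in the source database, and those tables cannot be deleted or renamed, you can use the object name mapping feature to rename the tables that are migrated to the destination database. For more information, see "Object name mapping". Ignore Errors and Proceed: skips the precheck for identical table names in the source and destination databases. Warning: If you select Ignore Errors and Proceed, data inconsistency may occur and your business may be exposed to risks. For example: If the source and destination databases have the same schema and a record in the destination database has the same primary key value as a record in the source database, the destination record is retained and the source record is not migrated during full data migration, whereas during incremental data migration the source record is migrated and overwrites the destination record. If the source and destination databases have different schemas, data migration may fail or only some columns may be migrated. Proceed with caution when you select this option. |

[Capitalization of Object Names in Destination Instance] | The capitalization of database names, table names, and column names in the destination instance. By default, [DTS default policy] is selected. You can also select a policy that is consistent with the default policy of the source or destination database. For more information, see "Specify the capitalization of object names in the destination instance". |

[Source Objects] | Select one or more objects from the Source Objects section and click the icon to add the objects to the [Selected Objects] section. Note: You can select schemas, tables, or columns as the objects to be migrated. If you select tables or columns, other objects such as views, triggers, and stored procedures are not migrated to the destination database. |

[Selected Objects] | To rename an object that you want to migrate to the destination instance, right-click the object in the [Selected Objects] section. For more information, see "Map the name of a single object". To rename multiple objects at a time, click [Batch Edit] in the upper-right corner of the [Selected Objects] section. For more information, see "Map multiple object names at a time". Note: If you use the object name mapping feature to rename an object, other objects that depend on the object may fail to be migrated. To specify WHERE conditions to filter data, right-click a table in the [Selected Objects] section and specify the conditions in the dialog box that appears. For more information, see "Specify filter conditions". To select the SQL operations performed on a specific database or table that you want to migrate, right-click the object in the [Selected Objects] section and select the SQL operations in the dialog box that appears. |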

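Click Next: Advanced Settings to configure advanced settings.

Parameter | Description |

[Dedicated Cluster for Task Scheduling] | By default, if you do not specify a dedicated cluster, DTS schedules the task to a shared cluster. To improve the stability of tasks, you can purchase a dedicated cluster to run DTS migration tasks. For more information, see "What is a DTS dedicated cluster?". |

[Select the engine type of the destination database] | The engine type of the destination database. [InnoDB]: the default storage engine. [X-Engine]: a database storage engine for online transaction processing (OLTP). |

[Copy the temporary table of the Online DDL tool that is generated in the source table to the destination database.] | If the source database uses Data Management (DMS) or gh-ost to perform online DDL operations, you can specify whether to migrate the temporary tables that are generated by online DDL operations. Important: DTS tasks do not support online DDL operations that are performed by using tools such as pt-online-schema-change. If such operations are performed, the DTS task fails. [Yes]: migrates the temporary tables generated by online DDL operations. Note: If online DDL operations generate a large amount of data, the migration task may be delayed. [No, Adapt to DMS Online DDL]: does not migrate the temporary tables generated by online DDL operations. Only the original DDL statements that are executed by using DMS in the source database are migrated. Note: If you select this option, tables in the destination database may be locked. [No, Adapt to gh-ost]: does not migrate the temporary tables generated by online DDL operations. Only the original DDL statements that are executed by using gh-ost in the source database are migrated. You can use the default regular expressions or custom regular expressions to filter out gh-ost shadow tables and unnecessary tables. Note: If you select this option, tables in the destination database may be locked. |

[Retry Time for Failed Connections] | If DTS fails to connect to the source or destination database after the migration task starts, DTS reports an error and immediately begins to retry. Default value: 720 minutes. You can also specify a custom retry time from 10 to 1440 minutes. We recommend that you set the retry time to 30 minutes or longer. If DTS reconnects to the source and destination databases within the specified time, DTS resumes the data migration task. Otherwise, the migration task fails. Note: If multiple DTS instances share the same source or destination, the retry time is determined by the setting of the task that was created most recently. You are charged for the DTS instance while DTS retries the connection. We recommend that you specify the retry time based on your business requirements, or release the DTS instance as soon as possible after the source and destination instances are released. |

[Retry Time for Other Issues] | If issues other than connectivity issues (such as DDL or DML execution exceptions) occur in the source or destination database after the migration task starts, DTS reports an error and immediately begins to retry. Default value: 10 minutes. You can also specify a custom retry time from 1 to 1440 minutes. We recommend that you set the retry time to 10 minutes or longer. If the related operations succeed within the specified retry time, DTS automatically resumes the migration task. Otherwise, the migration task fails. Important: The value of [Retry Time for Other Issues] must be smaller than the value of [Retry Time for Failed Connections]. |

[Enable Throttling for Full Data Migration] | During full data migration, DTS uses read and write resources of the source and destination databases. This may increase the load on the database servers. To reduce the load on the destination database, you can configure throttling for the full data migration task based on your business requirements by setting the queries per second to the source database (QPS), the number of rows migrated per second during full data migration (RPS), and the amount of data migrated per second during full data migration in MB (BPS). Note: This parameter is available only if you select [Full Data Migration] for [Migration Types]. |

[Enable Throttling for Incremental Data Migration] | To reduce the load on the destination database, you can configure throttling for the incremental data migration task based on your business requirements by setting the number of rows migrated per second during incremental data migration (RPS) and the amount of data migrated per second during incremental data migration in MB (BPS). Note: This parameter is available only if you select [Incremental Data Migration] for [Migration Types]. |

[Environment Tag] | An environment tag that is used to identify the instance. Select an environment tag based on your business requirements. In this example, you do not need to configure this parameter. |

[Whether to delete SQL operations on heartbeat tables of forward and reverse tasks] | Specifies whether to write heartbeat SQL information to the source database while the DTS instance is running, based on your business requirements. [Yes]: does not write heartbeat SQL information to the source database. The DTS instance may display latency. [No]: writes heartbeat SQL information to the source database. This may affect features such as physical backup and cloning of the source database. |

[Configure ETL] | Specifies whether to configure the extract, transform, and load (ETL) feature. For more information about ETL, see "What is ETL?". [Yes]: configures the ETL feature. Enter data processing statements in the text box. For more information, see "Configure ETL in a data migration or data synchronization task". [No]: does not configure the ETL feature. |

[Monitoring and Alerting] | Specifies whether to configure alerting for the data migration task. If the task fails or the migration latency exceeds the specified threshold, the alert contacts are notified. [No]: does not configure alerting. [Yes]: configures alerting. You must also specify the alert threshold and the [Alert Notification Settings]. For more information, see "Configure monitoring and alerting when you create a DTS task". |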
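The retry settings in the table above have ranges, recommendations, and one cross-field constraint. The sketch below encodes them so that they can be checked before you submit a task, for example from a script that prepares task parameters. The class and function names are hypothetical and are not DTS API parameters.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class RetrySettings:
    failed_connection_minutes: int = 720  # default stated in the text
    other_issues_minutes: int = 10        # default stated in the text


def check_retry_settings(settings: RetrySettings) -> Tuple[List[str], List[str]]:
    """Return (errors, warnings) for the retry settings described in the text."""
    errors: List[str] = []
    warnings: List[str] = []
    conn = settings.failed_connection_minutes
    other = settings.other_issues_minutes
    # Hard limits: allowed ranges and the cross-field constraint.
    if not 10 <= conn <= 1440:
        errors.append("failed-connection retry time must be 10 to 1440 minutes")
    if not 1 <= other <= 1440:
        errors.append("other-issues retry time must be 1 to 1440 minutes")
    if other >= conn:
        errors.append("other-issues retry time must be smaller than failed-connection retry time")
    # Soft limits: the recommended minimums.
    if conn < 30:
        warnings.append("a failed-connection retry time of 30 minutes or longer is recommended")
    if other < 10:
        warnings.append("an other-issues retry time of 10 minutes or longer is recommended")
    return errors, warnings
```

With the default values, both lists are empty. A setting such as 20 minutes for failed connections and 30 minutes for other issues produces one error (the cross-field constraint) and one warning (below the 30-minute recommendation).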

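Click [Next: Data Validation] to configure a data validation task.

For information about how to use the data validation feature, see "Configure data validation".

Save the task settings and run a precheck.

To view the parameters that are used to call the relevant API operation to configure the instance, move the pointer over Next: Save Task Settings and Precheck and click Preview OpenAPI parameters in the tooltip.

If you do not need to view the API parameters, or after you finish viewing them, click Next: Save Task Settings and Precheck at the bottom of the page.

Note: DTS performs a precheck before the data migration task starts. You can start the migration task only after the task passes the precheck.

If the task fails the precheck, click [View Details] next to each failed item, fix the issues as instructed, and then run the precheck again.

If an alert is generated for an item during the precheck, perform the following operations based on the scenario:

If the alert item cannot be ignored, click [View Details] next to the failed item, fix the issue as instructed, and then run the precheck again.

If the alert item can be ignored and does not need to be fixed, click [Confirm Alert Details], [Ignore], [OK], and [Precheck Again] in sequence to skip the alert item and rerun the precheck. If you ignore an alert item, data inconsistency may occur and your business may be exposed to potential risks.

Purchase an instance.

When the [Success Rate] of the precheck reaches [100%], click [Next: Purchase].

On the [Purchase] page, select the instance class of the data migration instance. The following table describes the parameters.

Category | Parameter | Description |

New Instance Class | [Resource Group Settings] | The resource group to which the instance belongs. Default value: [default resource group]. For more information, see "What is Resource Management?". |

New Instance Class | Instance Class | DTS provides instance classes that differ in migration speed. Select an instance class based on your business requirements. For more information, see "Instance classes of data migration instances". |

After you complete the configuration, read and select [Data Transmission Service (Pay-as-you-go) Service Terms].

Click [Buy and Start], and then click [OK] in the dialog box that appears.

You can view the progress of the data migration task on the [Data Migration] page.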

手順 (古い DTS コンソール)

DTS コンソール にログオンします。

説明データ管理 ( DMS ) コンソールにリダイレクトされた場合は、

アイコンを

アイコンを  でクリックすると、以前のバージョンの DTS コンソールに移動できます。

でクリックすると、以前のバージョンの DTS コンソールに移動できます。左側のナビゲーションウィンドウで、[データ移行] をクリックします。

[移行タスク] ページの上部で、宛先クラスタが存在するリージョンを選択します。

ページの右上隅にある [移行タスクの作成] をクリックします。

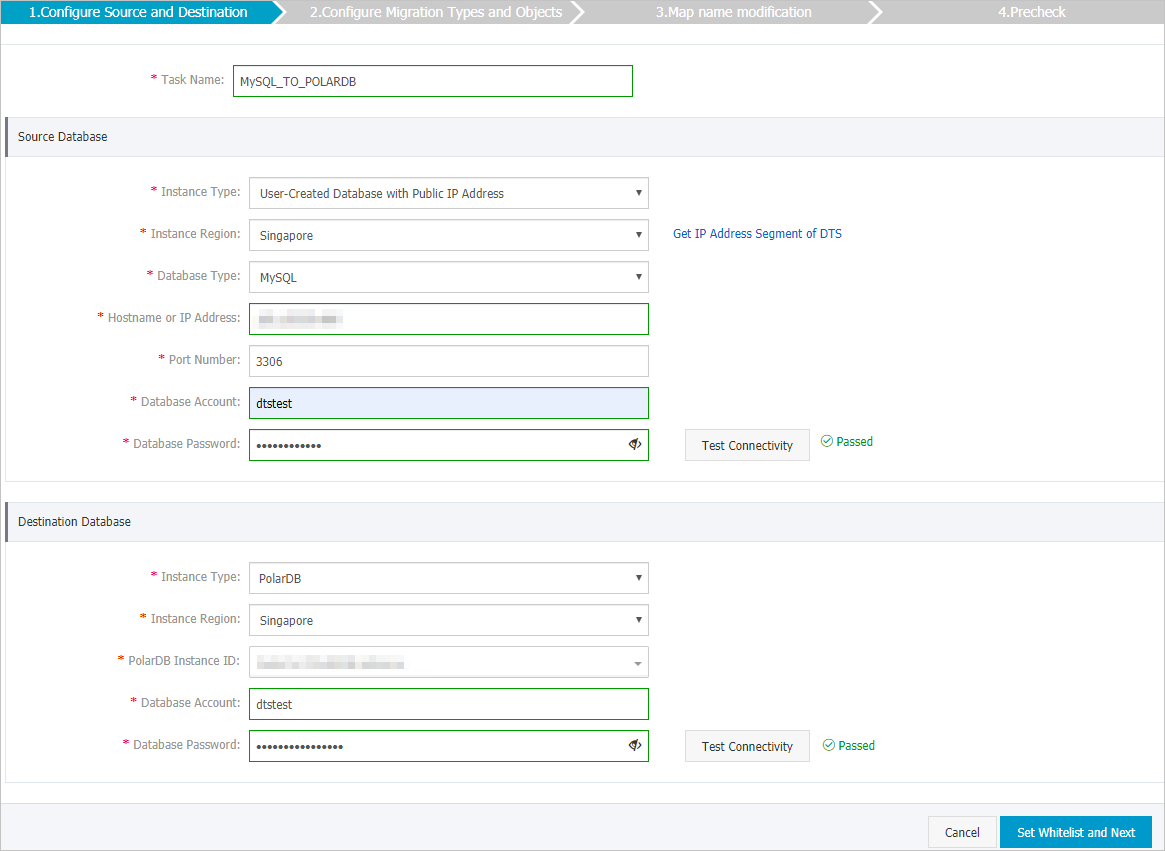

ソースデータベースと宛先データベースを構成します。

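Section | Parameter | Description |

N/A | Task Name | The task name that DTS automatically generates. We recommend that you specify a descriptive name that makes the task easy to identify. The task name does not need to be unique. |

Source Database | Instance Type | The type of the source database. Select [User-Created Database with Public IP Address]. |

Source Database | Instance Region | The region in which the source database resides. If you select [User-Created Database with Public IP Address] as the instance type of the source database, you do not need to specify the [Instance Region] parameter. |

Source Database | Database Type | The type of the source database. Select [MySQL]. |

Source Database | Hostname or IP Address | The endpoint that is used to access the Amazon Aurora MySQL cluster. Note: You can obtain the endpoint on the basic information page of the Amazon Aurora MySQL cluster. |

Source Database | Port Number | The service port number of the Amazon Aurora MySQL cluster. Default value: [3306]. |

Source Database | Database Account | The database account of the Amazon Aurora MySQL cluster. For information about the permissions required for the account, see the "Permissions required for database accounts" section of this topic. |

Source Database | Database Password | The password of the database account. Note: After you configure the source database parameters, click [Test Connectivity] next to [Database Password] to check whether the parameters are valid. If the parameters are valid, the [Passed] message appears. If the [Failed] message appears, click [Check] next to [Failed] and modify the source database parameters based on the check results. |

Destination Database | Instance Type | The type of the destination database. Select [PolarDB]. |

Destination Database | Instance Region | The region in which the PolarDB for MySQL cluster resides. |

Destination Database | PolarDB Instance ID | The ID of the destination PolarDB for MySQL cluster. |

Destination Database | Database Account | The database account of the destination PolarDB for MySQL cluster. For information about the permissions required for the account, see the "Permissions required for database accounts" section of this topic. |

Destination Database | Database Password | The password of the database account. Note: After you configure the destination database parameters, click [Test Connectivity] next to [Database Password] to check whether the parameters are valid. If the parameters are valid, the [Passed] message appears. If the [Failed] message appears, click [Check] next to [Failed] and modify the destination database parameters based on the check results. |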

ページの右下隅にある [ホワイトリストを設定して次へ] をクリックします。

ソースデータベースインスタンスまたは宛先データベースインスタンスが、ApsaraDB RDS for MySQL や ApsaraDB for MongoDB インスタンスなどの Alibaba Cloud データベースインスタンスである場合、または ECS でホストされている自己管理データベースである場合、DTS は DTS サーバーの CIDR ブロックをデータベースインスタンスまたは ECS セキュリティグループルールのホワイトリストに自動的に追加します。ソースデータベースまたは宛先データベースがデータセンター上の自己管理データベースであるか、他のクラウド サービス プロバイダーからのものである場合は、DTS がデータベースにアクセスできるように DTS サーバーの CIDR ブロックを手動で追加する必要があります。詳細については、「DTS サーバーの CIDR ブロックを追加する」トピックの「DTS サーバーの CIDR ブロック」セクションをご参照ください。

警告DTS サーバーの CIDR ブロックがデータベースまたはインスタンスのホワイトリスト、または ECS セキュリティグループルールに自動または手動で追加されると、セキュリティリスクが発生する可能性があります。したがって、DTS を使用してデータを移行する前に、潜在的なリスクを理解して認識し、ユーザー名とパスワードのセキュリティ強化、公開されているポートの制限、API 呼び出しの認証、ホワイトリストまたは ECS セキュリティグループルールの定期的なチェックと不正な CIDR ブロックの禁止、Express Connect、VPN Gateway、または Smart Access Gateway を使用したデータベースと DTS の接続などの予防措置を講じる必要があります。

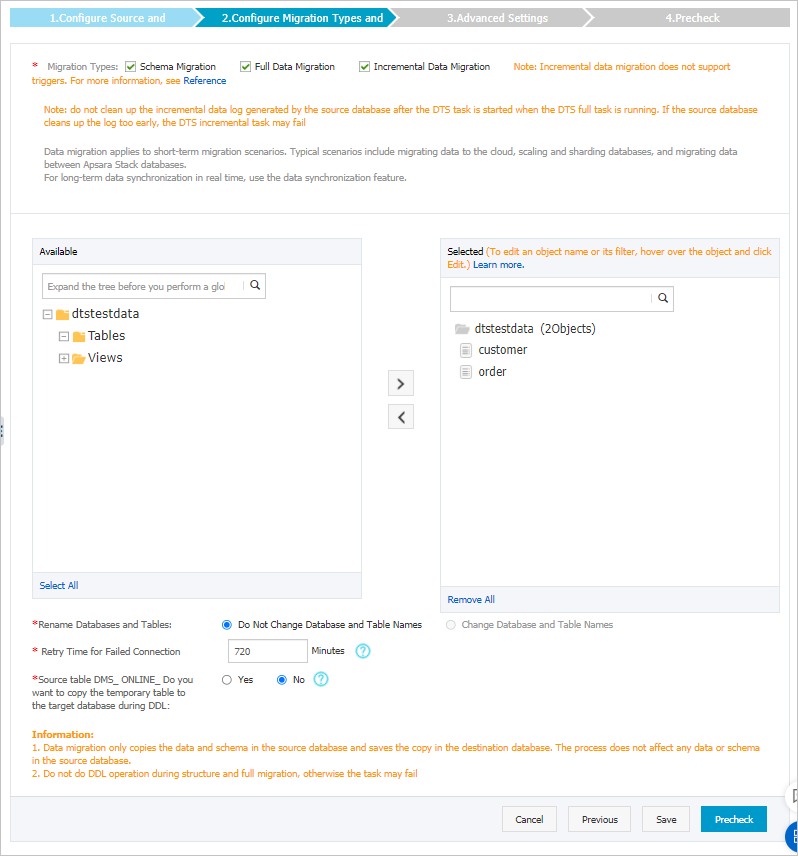

移行するオブジェクトと移行タイプを選択します。

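Setting | Description |

Select the migration types | To perform only full data migration, select [Schema Migration] and [Full Data Migration]. To ensure service continuity during data migration, select [Schema Migration], [Full Data Migration], and [Incremental Data Migration]. Note: If [Incremental Data Migration] is not selected, we recommend that you do not write data to the Amazon Aurora MySQL cluster during full data migration. This ensures data consistency between the source and destination databases. |

Select the objects to be migrated | Select one or more objects from the [Available] section and click the icon to move the objects to the [Selected] section. Note: You can select columns, tables, or databases as the objects to be migrated. By default, after an object is migrated to the destination database, the name of the object remains unchanged. You can use the object name mapping feature to rename the objects that are migrated to the destination database. For more information, see "Object name mapping". If you use the object name mapping feature to rename an object, other objects that depend on the object may fail to be migrated. |

Specify whether to rename objects | You can use the object name mapping feature to rename the objects that are migrated to the destination database. For more information, see "Object name mapping". |

Specify the retry time range for failed connections to the source or destination database | By default, if DTS cannot connect to the source or destination database, DTS retries within the next 12 hours. You can specify the retry time range based on your business requirements. If DTS reconnects to the source and destination databases within the specified time range, DTS resumes the data migration task. Otherwise, the data migration task fails. Note: You are charged for the DTS instance while DTS retries the connection. We recommend that you specify the retry time range based on your business requirements. You can also release the DTS instance as soon as possible after the source and destination instances are released. |

Specify whether to copy temporary tables to the destination database when DMS performs online DDL operations on source tables | If you use Data Management (DMS) to perform online DDL operations on the source database, you can specify whether to migrate the temporary tables that are generated by online DDL operations. Valid values: [Yes]: DTS migrates the data of the temporary tables generated by online DDL operations. Note: If online DDL operations generate a large amount of data, the data migration task may be delayed. [No]: DTS does not migrate the data of the temporary tables generated by online DDL operations. Only the original DDL data of the source database is migrated. Note: If you select [No], the tables in the PolarDB for MySQL cluster may be locked. |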

ページの右下隅にある [事前チェック] をクリックします。

説明データ移行タスクを開始する前に、DTS は事前チェックを実行します。タスクが事前チェックに合格した後でのみ、データ移行タスクを開始できます。

タスクが事前チェックに合格しなかった場合は、各失敗項目の横にある

アイコンをクリックして詳細を表示できます。

アイコンをクリックして詳細を表示できます。原因に基づいて問題のトラブルシューティングを行い、事前チェックを再実行できます。

問題のトラブルシューティングを行う必要がない場合は、失敗した項目を無視して事前チェックを再実行できます。

タスクが事前チェックに合格したら、[次へ] をクリックします。

[設定の確認] ダイアログボックスで、[チャネル仕様] パラメータを指定し、[data Transmission Service (従量課金) 利用規約] を選択します。

[購入して開始] をクリックして、データ移行タスクを開始します。

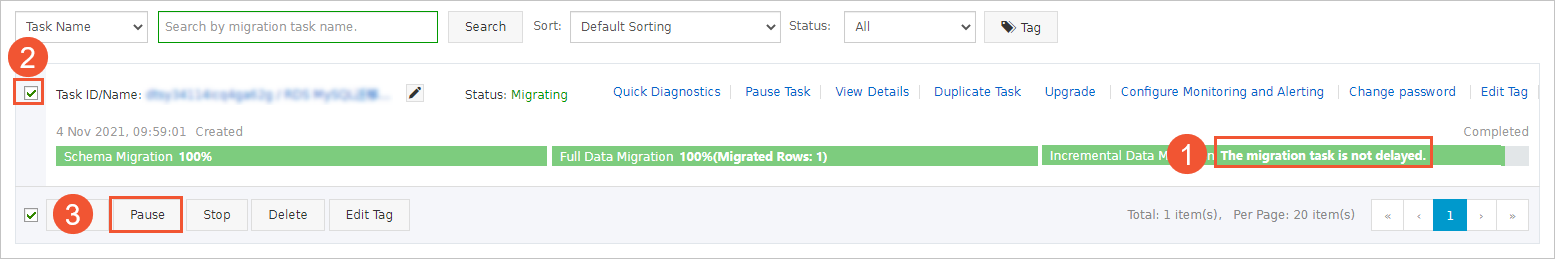

スキーマ移行とフルデータ移行

フルデータ移行中にタスクを手動で停止しないことをお勧めします。そうしないと、宛先データベースに移行されたデータが不完全になる可能性があります。データ移行タスクが自動的に停止するまで待つことができます。

スキーマ移行、フルデータ移行、増分データ移行

増分データ移行タスクは自動的に停止しません。タスクを手動で停止する必要があります。

重要適切な時間にデータ移行タスクを手動で停止することをお勧めします。たとえば、オフピーク時や、ワークロードを宛先クラスタに切り替える前にタスクを停止できます。

移行タスクのプログレスバーに [増分データ移行] と [移行タスクは遅延していません] が表示されるまで待ちます。次に、数分間ソースデータベースへのデータの書き込みを停止します。プログレスバーに [増分データ移行] の遅延が表示される場合があります。

[増分データ移行] のステータスが再び [移行タスクは遅延していません] に変わるまで待ちます。次に、移行タスクを手動で停止します。

ワークロードを PolarDB for MySQL クラスタに切り替えます。
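The cutover steps above (pause writes, wait for the delay to clear, then stop the task) can be automated with a polling loop. The sketch below assumes two callables that you supply: `get_delay_seconds`, which returns the current incremental migration delay (for example, read from the DTS console or API), and `stop_task`, which stops the migration task. Both are hypothetical placeholders; the sketch does not call any real DTS API, and pausing writes to the source database remains your responsibility before you call it.

```python
import time
from typing import Callable


def stop_when_caught_up(
    get_delay_seconds: Callable[[], float],
    stop_task: Callable[[], None],
    poll_interval: float = 30.0,
    timeout: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll the migration delay and stop the task once the delay reaches zero.

    Call this only after writes to the source database have been paused.
    Returns True if the task was stopped, False if the timeout elapsed first.
    """
    waited = 0.0
    while waited <= timeout:
        if get_delay_seconds() <= 0:
            stop_task()
            return True
        sleep(poll_interval)
        waited += poll_interval
    return False
```

The `sleep` parameter is injectable so that the loop can be exercised without real waiting. If the function returns False, keep writes paused, investigate the delay, and do not switch workloads yet.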