You can use an ACK One Application Load Balancer (ALB) multi-cluster gateway with ACK One GitOps or multi-cluster application distribution to quickly build an active-active zone-disaster recovery system for your applications. This system ensures high availability and provides smooth, automatic migration during failures. This topic describes how to build a zone-disaster recovery system using a multi-cluster gateway.

Solution architecture

This topic uses a web application as an example to show the architecture of a zone-disaster recovery solution that uses an ALB multi-cluster gateway. The architecture includes deployment and service resources. The following figure shows the structure.

Create two ACK clusters, Cluster 1 and Cluster 2, in two different zones, Zone 1 and Zone 2, within the same region.

Use ACK One GitOps to distribute the application to Cluster 1 and Cluster 2.

Create an ALB multi-cluster gateway in the ACK One fleet instance by creating an AlbConfig resource.

After the ALB multi-cluster gateway is created, you can create an Ingress to route traffic based on weights or to a specified cluster based on the header. If a cluster fails, traffic automatically fails over to the other cluster.

RDS data synchronization depends on the capabilities of the middleware itself.

Disaster recovery overview

Cloud disaster recovery is divided into three main types:

Cross-zone disaster recovery: This includes active-active and active-standby strategies within the same region. The physical distance between data centers in the same region is short, which results in low network latency. This approach protects against zone-level disasters, such as fires, network outages, or power failures. This solution is practical because it simplifies data backup and rapid recovery.

Cross-region active-active disaster recovery: This approach has higher network latency but protects against regional disasters, such as earthquakes and floods.

Two-region three-data-center architecture: This architecture combines a dual-center setup in one region with a cross-region disaster recovery site. It offers the advantages of both approaches and is suitable for scenarios that require high continuity and availability for applications and data.

From a business architecture perspective, a company's business system is typically structured from top to bottom into an access layer, an application layer, and a data layer.

Access layer: This is the traffic entry point. It receives traffic and forwards it to the backend application layer based on routing rules.

Application layer: This layer contains application services. It processes data based on requests and returns the results upstream.

Data layer: This layer provides data storage services. It supplies data to and stores data from the application layer.

To achieve disaster recovery for the entire business, you must implement corresponding measures for each layer.

Access layer: The ACK One multi-cluster gateway serves as the access layer and supports high availability across zones in the same region.

Application layer: The ACK One multi-cluster gateway handles application layer disaster recovery. It can be used to implement active-active or active-standby zone-disaster recovery and cross-region disaster recovery for applications.

Data layer: Data layer disaster recovery and data synchronization depend on the middleware itself.

Feature advantages

The disaster recovery solution based on the ACK One multi-cluster gateway has the following advantages over a solution based on DNS traffic distribution:

A DNS-based solution requires multiple load balancer (LB) IP addresses, one for each cluster. The multi-cluster gateway solution requires only one LB IP address at the region level and provides high availability across multiple zones in the same region by default.

The multi-cluster gateway solution supports Layer 7 routing and forwarding. The DNS-based solution does not.

In a DNS-based solution, IP address switching often causes brief service interruptions due to client-side caching. The multi-cluster gateway solution provides a smoother traffic failover to the backend service of another cluster.

The multi-cluster gateway is a regional resource. All operations are performed only in the fleet instance. You do not need to install an Ingress Controller or create Ingress resources in each ACK cluster. This provides region-level global traffic management and reduces multi-cluster management costs.

Prerequisites

The Server Load Balancer (SLB) ALB service is enabled.

You have enabled the fleet management feature.

Two ACK clusters that are in the same VPC as the fleet instance are associated with the ACK One fleet. For more information, see Manage associated clusters.

Obtain the KubeConfig for the Fleet instance from the ACK One console, and connect to the Fleet instance using kubectl.

Install the latest version of Cloud Assistant CLI and configure Cloud Assistant CLI.

Step 1: Deploy applications to multiple clusters using GitOps or application distribution

ACK One supports two methods to deploy applications to multiple clusters: multi-cluster GitOps and multi-cluster application resource distribution. For more information, see Quick Start for GitOps, Create a multi-cluster application, and Quick Start for application distribution. This section uses multi-cluster GitOps as an example.

Log on to the ACK One console. In the navigation pane on the left, choose .

In the upper-left corner of the Multi-cluster GitOps page, click the button next to the fleet name, and select the target fleet from the drop-down list.

Click to go to the Create Multi-cluster Application - GitOps page.

Note: If GitOps is not enabled for the ACK One fleet instance, enable it first. For more information, see Enable GitOps in an ACK One fleet instance.

To access GitOps over the Internet, enable public access to GitOps.

On the Create with YAML tab, copy the following YAML content to the console, and then click OK to create and deploy the application.

Note: The following YAML content deploys web-demo to all associated clusters. You can also select clusters on the Quick Create tab. Your selections are reflected in the YAML content on the Create with YAML tab.

    apiVersion: argoproj.io/v1alpha1
    kind: ApplicationSet
    metadata:
      name: appset-web-demo
      namespace: argocd
    spec:
      template:
        metadata:
          name: '{{.metadata.annotations.cluster_id}}-web-demo'
          namespace: argocd
        spec:
          destination:
            name: '{{.name}}'
            namespace: gateway-demo
          project: default
          source:
            repoURL: https://github.com/AliyunContainerService/gitops-demo.git
            path: manifests/helm/web-demo
            targetRevision: main
            helm:
              valueFiles:
                - values.yaml
              parameters:
                - name: envCluster
                  value: '{{.metadata.annotations.cluster_name}}'
          syncPolicy:
            automated: {}
            syncOptions:
              - CreateNamespace=true
      generators:
        - clusters:
            selector:
              matchExpressions:
                - values:
                    - cluster
                  key: argocd.argoproj.io/secret-type
                  operator: In
                - values:
                    - in-cluster
                  key: name
                  operator: NotIn
      goTemplateOptions:
        - missingkey=error
      syncPolicy:
        preserveResourcesOnDeletion: false
      goTemplate: true
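The ApplicationSet template above names each generated Application after the cluster ID, in the form `<cluster_id>-web-demo`. As a rough aid for checking the result, the following sketch prints the names you would expect for a given set of cluster IDs, so that you can compare them with what Argo CD lists in the `argocd` namespace. The function name and the cluster IDs in the usage comment are hypothetical.

```shell
#!/usr/bin/env bash
# Print the Application name that the template
# '{{.metadata.annotations.cluster_id}}-web-demo' yields for each cluster ID.
expected_app_names() {
  local id
  for id in "$@"; do
    printf '%s-web-demo\n' "$id"
  done
}

# Usage (hypothetical cluster IDs):
#   expected_app_names c1aaaa c2bbbb
```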

Step 2: Create an ALB multi-cluster gateway in the fleet using kubectl

Create an AlbConfig object in the ACK One fleet instance to create an ACK One ALB multi-cluster gateway and associate clusters with it.

Obtain the IDs of two vSwitches from the VPC where the ACK One fleet is located.

Create a gateway.yaml file with the following content.

Note: Replace ${vsw-id1} and ${vsw-id2} with the vSwitch IDs obtained in the preceding step, and replace ${cluster1} and ${cluster2} with the IDs of the associated clusters that you want to add.

For the associated clusters ${cluster1} and ${cluster2}, you must configure the inbound rules of their security groups to allow access from all IP addresses and ports in the vSwitch CIDR block.

    apiVersion: alibabacloud.com/v1
    kind: AlbConfig
    metadata:
      name: ackone-gateway-demo
      annotations:
        # Add the associated clusters that process traffic to the ALB multi-cluster instance.
        alb.ingress.kubernetes.io/remote-clusters: ${cluster1},${cluster2}
    spec:
      config:
        name: one-alb-demo
        addressType: Internet
        addressAllocatedMode: Fixed
        zoneMappings:
          - vSwitchId: ${vsw-id1}
          - vSwitchId: ${vsw-id2}
      listeners:
        - port: 8001
          protocol: HTTP
    ---
    apiVersion: networking.k8s.io/v1
    kind: IngressClass
    metadata:
      name: alb
    spec:
      controller: ingress.k8s.alibabacloud/alb
      parameters:
        apiGroup: alibabacloud.com
        kind: AlbConfig
        name: ackone-gateway-demo

The following table describes the parameters.

| Parameter | Required | Description |
| --- | --- | --- |
| metadata.name | Yes | The name of the AlbConfig. |
| metadata.annotations: alb.ingress.kubernetes.io/remote-clusters | Yes | The list of associated clusters to be added to the ALB multi-cluster gateway. The cluster IDs listed here have been associated with the Fleet instance. |
| spec.config.name | No | The name of the ALB instance. |
| spec.config.addressType | No | The network type of the ALB instance. Valid values: Internet (default), a public network type in which the ALB instance provides services to the Internet and is accessible over the Internet; Intranet, a private network type in which the ALB instance provides services within a VPC and cannot be accessed over the Internet. Note: To allow an ALB instance to provide Internet-facing services, the ALB instance needs to be associated with an elastic IP address (EIP). If you use an Internet-facing ALB instance, you are charged instance fees and bandwidth or data transfer fees for the associated EIPs. For more information, see Pay-as-you-go. |
| spec.config.zoneMappings | Yes | The IDs of the vSwitches that are associated with the ALB instance. For more information about how to create a vSwitch, see Create and manage vSwitches. Note: The specified vSwitches must be deployed in the zones supported by the ALB instance and in the same VPC as the cluster. For more information about the regions and zones supported by ALB, see Regions and zones. ALB supports multi-zone deployment. If the current region supports two or more zones, select vSwitches in at least two zones to ensure high availability. |
| spec.listeners | No | The listener port and protocol of the ALB instance. The example provided in this topic configures an HTTP listener on port 8001. A listener defines how ALB receives traffic. We recommend that you retain the listener configuration. Otherwise, you must create a listener before you can use ALB Ingresses. |
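The placeholders in gateway.yaml contain hyphens, so they cannot be filled in as ordinary shell variables. One way to substitute them is a small `sed` filter such as the sketch below. The function name `render_gateway` and the output file name in the usage comment are illustrative assumptions, and the IDs shown are hypothetical.

```shell
#!/usr/bin/env bash
# Replace the ${...} placeholders in a manifest read from stdin.
# Arguments: vSwitch ID 1, vSwitch ID 2, cluster ID 1, cluster ID 2.
render_gateway() {
  sed \
    -e 's|\${vsw-id1}|'"$1"'|g' \
    -e 's|\${vsw-id2}|'"$2"'|g' \
    -e 's|\${cluster1}|'"$3"'|g' \
    -e 's|\${cluster2}|'"$4"'|g'
}

# Usage (hypothetical IDs):
#   render_gateway vsw-aaa vsw-bbb c1aaaa c2bbbb < gateway.yaml > gateway.rendered.yaml
```

Writing to a separate file keeps the template reusable for other fleets or vSwitches.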

Run the following command to deploy gateway.yaml and create the ALB multi-cluster gateway and IngressClass.

    kubectl apply -f gateway.yaml

Run the following command to check whether the ALB multi-cluster gateway is created. This may take 1 to 3 minutes.

    kubectl get albconfig ackone-gateway-demo

The expected output is as follows:

    NAME                  ALBID      DNSNAME                                   PORT&PROTOCOL   CERTID   AGE
    ackone-gateway-demo   alb-xxxx   alb-xxxx.<regionid>.alb.aliyuncsslb.com                            4d9h

Run the following command to check whether the associated clusters are added successfully.

    kubectl get albconfig ackone-gateway-demo -ojsonpath='{.status.loadBalancer.subClusters}'

The expected output is a list of cluster IDs.
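To avoid comparing cluster IDs by eye, the check on the subClusters output can be scripted. The sketch below assumes only that each attached cluster ID appears verbatim somewhere in the output. It uses plain substring matching, so it does not parse the list and would not distinguish an ID that is a prefix of another. The function name and the IDs in the usage comment are hypothetical.

```shell
#!/usr/bin/env bash
# Succeed only if every expected cluster ID appears in the subClusters output.
# $1: output of the kubectl jsonpath query; remaining args: expected cluster IDs.
clusters_attached() {
  local output=$1 id
  shift
  for id in "$@"; do
    case "$output" in
      *"$id"*) ;;  # ID found, check the next one
      *) echo "missing cluster: $id" >&2; return 1 ;;
    esac
  done
}

# Usage (hypothetical cluster IDs):
#   out=$(kubectl get albconfig ackone-gateway-demo -ojsonpath='{.status.loadBalancer.subClusters}')
#   clusters_attached "$out" c1aaaa c2bbbb && echo "all clusters attached"
```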

Step 3: Use an Ingress to implement zone-disaster recovery

The multi-cluster gateway uses an Ingress to manage traffic for multiple clusters. Create an Ingress object in the ACK One fleet instance to implement active-active zone-disaster recovery.

Create the namespace where the Service resides in the fleet instance. In this example, the namespace is gateway-demo.

Create an ingress-demo.yaml file with the following content.

Note: The sum of the weights specified by multiple alb.ingress.kubernetes.io/cluster-weight annotations must be 100.

This Ingress exposes the backend service service1 using the /svc1 routing rule under the alb.ingress.alibaba.com domain name. Before you apply the YAML file, replace ${cluster1-id} and ${cluster2-id} with your cluster IDs.

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      annotations:
        alb.ingress.kubernetes.io/listen-ports: |
          [{"HTTP": 8001}]
        alb.ingress.kubernetes.io/cluster-weight.${cluster1-id}: "20"
        alb.ingress.kubernetes.io/cluster-weight.${cluster2-id}: "80"
      name: web-demo
      namespace: gateway-demo
    spec:
      ingressClassName: alb
      rules:
        - host: alb.ingress.alibaba.com
          http:
            paths:
              - path: /svc1
                pathType: Prefix
                backend:
                  service:
                    name: service1
                    port:
                      number: 80

Run the following command to deploy the Ingress in the ACK One fleet.

    kubectl apply -f ingress-demo.yaml -n gateway-demo
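Because the cluster-weight annotations must add up to 100, it can help to check the manifest before applying it. The following sketch sums the weights with `awk`. It assumes that each weight is written as a double-quoted string on the same line as its annotation key, as in the example above. The function name `weight_sum` is illustrative.

```shell
#!/usr/bin/env bash
# Sum the values of all alb.ingress.kubernetes.io/cluster-weight.* annotations
# in a manifest read from stdin. The weight is taken from between the first
# pair of double quotes on each matching line.
weight_sum() {
  awk -F'"' '/alb\.ingress\.kubernetes\.io\/cluster-weight\./ { sum += $2 }
             END { print sum + 0 }'
}

# Usage:
#   [ "$(weight_sum < ingress-demo.yaml)" -eq 100 ] || echo "weights do not sum to 100" >&2
```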

Step 4: Verify the active-active zone-disaster recovery

Traffic is routed to clusters based on the specified weights

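The format for accessing the service is as follows:

    curl -H "host: alb.ingress.alibaba.com" alb-xxxx.<regionid>.alb.aliyuncsslb.com:<listeners port>/svc1

The following table lists the parameters and their descriptions:

| Parameter | Description |
| --- | --- |
| alb-xxxx.<regionid>.alb.aliyuncsslb.com | The DNSNAME value of the AlbConfig, as returned by kubectl get albconfig ackone-gateway-demo in Step 2. |
| <listeners port> | Port 8001, which is defined in the AlbConfig and declared in the alb.ingress.kubernetes.io/listen-ports annotation of the Ingress. |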

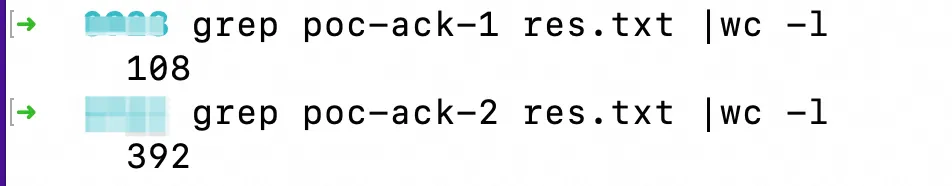

After you run the following command, the result shows that requests are sent to Cluster 1 (poc-ack-1) and Cluster 2 (poc-ack-2) at a 20:80 ratio.

for i in {1..500}; do curl -H "host: alb.ingress.alibaba.com" alb-xxxx.cn-beijing.alb.aliyuncsslb.com:8001/svc1; done > res.txt

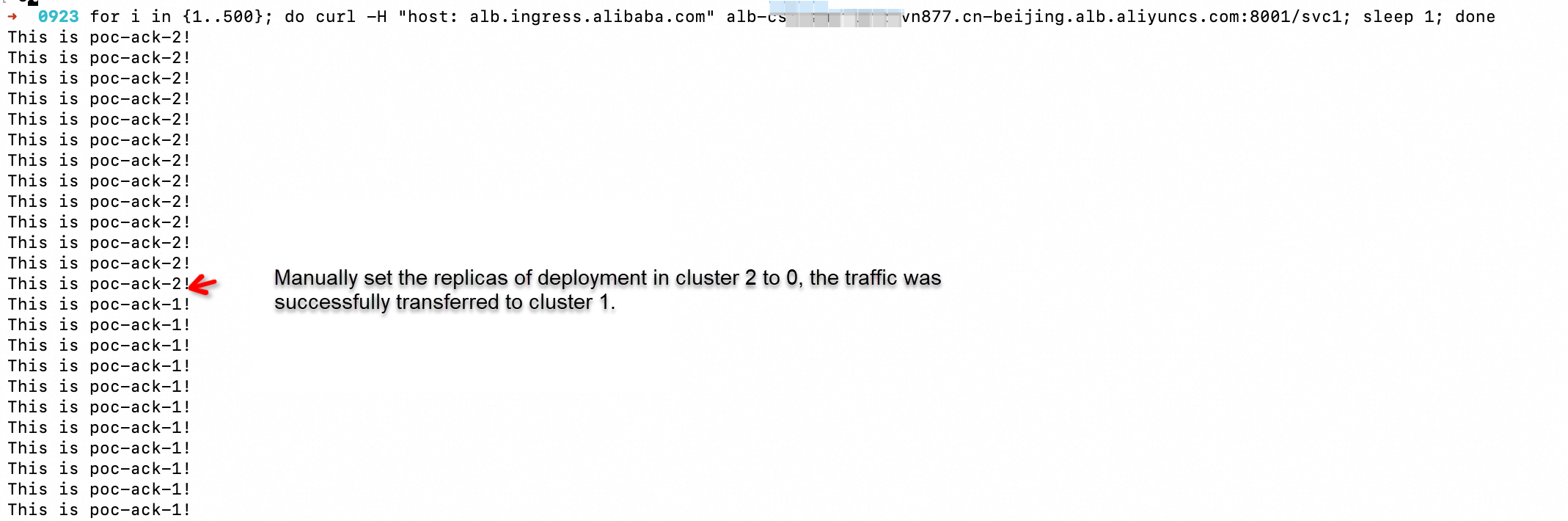
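To turn res.txt into numbers that can be compared with the 20:80 weights, the responses can be tallied per cluster. The sketch below assumes that every response body contains the name of the cluster that served it (for example poc-ack-1 or poc-ack-2). The exact response format is not shown in this topic, so treat that as an assumption and adjust the search strings to match your output. The function name is illustrative.

```shell
#!/usr/bin/env bash
# Count how many times each cluster name occurs in a response log.
# $1: log file; remaining args: cluster names to look for.
tally_by_cluster() {
  local file=$1 name
  shift
  for name in "$@"; do
    # grep -o prints one line per occurrence, so this also works when
    # responses are not newline-terminated.
    printf '%s %s\n' "$name" "$(grep -o -- "$name" "$file" | wc -l | tr -d ' ')"
  done
}

# Usage:
#   tally_by_cluster res.txt poc-ack-1 poc-ack-2
```

With 500 requests and weights of 20 and 80, counts close to 100 and 400 would be consistent with the configured ratio.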

Traffic is automatically and smoothly migrated when an application in a cluster becomes abnormal

While the following command is running, manually set the number of application replicas in Cluster 2 to 0. The output shows that traffic automatically and smoothly fails over to Cluster 1.

for i in {1..500}; do curl -H "host: alb.ingress.alibaba.com" alb-xxxx.cn-beijing.alb.aliyuncsslb.com:8001/svc1; sleep 1; done
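One way to quantify how smooth the failover is would be to record the HTTP status code of each request and count the non-200 responses afterwards. The following is a minimal sketch along those lines. The function names, the log file name, and the two-minute duration in the usage comment are assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Send one request and print "<time> <HTTP status code>".
# $1: ALB DNS name, $2: listener port.
# curl reports status 000 when the request fails or times out.
probe_once() {
  local code
  code=$(curl -s -o /dev/null --max-time 5 -w '%{http_code}' \
    -H "host: alb.ingress.alibaba.com" "http://$1:$2/svc1")
  printf '%s %s\n' "$(date +%T)" "$code"
}

# Count probe lines (read from stdin) whose status code is not 200.
count_failures() {
  awk '$2 != "200" { n++ } END { print n + 0 }'
}

# Usage: probe once per second for two minutes while scaling Cluster 2 to 0,
# then count the failed requests.
#   for i in $(seq 1 120); do probe_once alb-xxxx.cn-beijing.alb.aliyuncsslb.com 8001; sleep 1; done | tee probe.log
#   count_failures < probe.log
```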