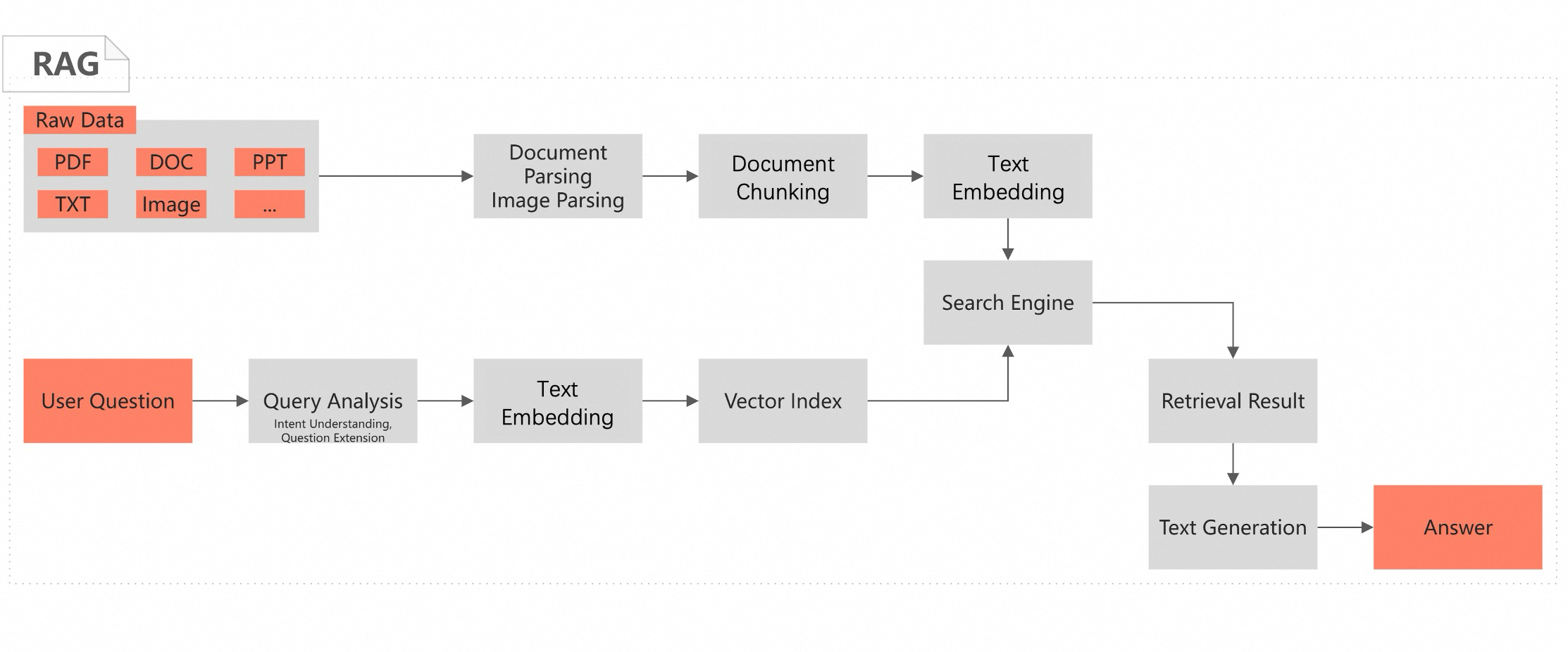

AI Search Open Platform provides component-based algorithm services for intelligent search and retrieval-augmented generation (RAG) scenarios. AI Search Open Platform features a variety of built-in services such as document parsing, document chunking, text embedding, query analysis, retrieval, sorting, performance evaluation, and large language models (LLMs). Developers can flexibly select components for search service development based on their business requirements.

Activating AI Search Open Platform is free of charge. You are charged only when you use the services.

After you activate AI Search Open Platform as a new user, each Alibaba Cloud account receives 10 free service calls. The RAM users within an Alibaba Cloud account share this free quota with the account. Click Activate Now to try the LLM service. After the 10 free calls are used up, you are charged based on your actual usage of LLM service calls.

Features

Document content parsing

Parses documents within minutes. For PDF, DOC, HTML, TXT, and other document formats, AI Search Open Platform distinguishes various layouts and extracts logical hierarchical structures, such as titles and paragraphs, from unstructured documents, along with content elements such as text, tables, images, and code. It also removes headers and footers, identifies superscripts, subscripts, and similar information, and generates the output in a structured format.

Image content parsing

Allows you to parse the content and identify the text of images, such as architecture diagrams and analytical charts, based on multi-modal LLMs. You can also use the optical character recognition (OCR) feature to identify the text in an image and use the extracted text for image retrieval and image-based Q&A.

Document chunking

Provides a general-purpose document chunking service based on document semantics, paragraph structure, and specific rules to improve the efficiency of subsequent document processing and retrieval. The generated chunk tree can be used for context completion during retrieval.
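The idea of a chunk tree used for context completion can be sketched as follows. This is a minimal illustration only, not the platform's chunking algorithm: the `Chunk` class, `chunk_document`, `context_for`, and the `max_chars` rule are hypothetical names and assumptions. The sketch splits on blank lines, packs paragraphs into chunks under a single root node, and completes context by returning a chunk together with its neighboring siblings.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Chunk:
    chunk_id: int
    text: str
    parent_id: Optional[int] = None  # None marks the root node
    children: List[int] = field(default_factory=list)


def chunk_document(text: str, max_chars: int = 200) -> List[Chunk]:
    """Pack blank-line-separated paragraphs into chunks of roughly max_chars.

    A single paragraph longer than max_chars is kept whole rather than split.
    Returns a flat list where the list index equals chunk_id; index 0 is the root.
    """
    chunks = [Chunk(chunk_id=0, text="")]  # hypothetical root that holds no text

    def emit(body: str) -> None:
        chunk = Chunk(chunk_id=len(chunks), text=body, parent_id=0)
        chunks[0].children.append(chunk.chunk_id)
        chunks.append(chunk)

    buffer = ""
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        candidate = f"{buffer}\n\n{para}" if buffer else para
        if buffer and len(candidate) > max_chars:
            emit(buffer)      # adding this paragraph would exceed the limit
            buffer = para
        else:
            buffer = candidate
    if buffer:
        emit(buffer)
    return chunks


def context_for(chunks: List[Chunk], chunk_id: int, window: int = 1) -> List[str]:
    """Return the chunk's text plus up to `window` sibling chunks on each side."""
    parent_id = chunks[chunk_id].parent_id
    if parent_id is None:  # the root has no siblings
        return [chunks[chunk_id].text]
    siblings = chunks[parent_id].children
    pos = siblings.index(chunk_id)
    lo, hi = max(0, pos - window), pos + window + 1
    return [chunks[i].text for i in siblings[lo:hi]]
```

A deeper tree (for example, sections that contain paragraphs) would follow the same pattern, with `parent_id` pointing at a section node instead of the root.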

Multilingual embedding models

Text embedding converts text data to dense vectors. Multiple models are available for different languages, input lengths, and output dimensions. You can use this service for information retrieval, text classification, and relevance comparison.
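To make relevance comparison with dense vectors concrete, the following sketch ranks documents by cosine similarity to a query vector. The vectors in the usage note are hand-written toy values, not output of any platform model, and the function names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(
    query_vec: Sequence[float], doc_vecs: Dict[str, Sequence[float]]
) -> List[Tuple[str, float]]:
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

For example, `rank_by_similarity([1.0, 0.0, 0.0], {"a": [1.0, 0.0, 0.0], "b": [0.6, 0.8, 0.0], "c": [0.0, 0.0, 1.0]})` places `a` first and `c` last. In practice the vectors would come from an embedding model and have hundreds or thousands of dimensions.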

Text sparse embedding converts text data into sparse vectors that occupy less storage space. Sparse vectors are well suited to representing keywords and term frequency information. You can combine sparse and dense vectors in a hybrid search to improve retrieval performance.
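A hybrid search needs some way to merge the sparse and dense result lists. The source does not say how the platform fuses them; the sketch below assumes reciprocal rank fusion (RRF), a common general-purpose technique, and represents a sparse vector as a token-to-weight dictionary. All names and the constant `k = 60` are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def sparse_dot(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Dot product of two sparse vectors stored as {token: weight}.

    Only non-zero entries are stored, which is why sparse vectors take little space.
    """
    if len(a) > len(b):
        a, b = b, a  # iterate over the smaller dictionary
    return sum(weight * b.get(token, 0.0) for token, weight in a.items())


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[Tuple[str, float]]:
    """Fuse several ranked lists of document IDs into one list.

    Each document earns 1 / (k + rank) from every list it appears in (rank starts at 1).
    Ties are broken by document ID so the output is deterministic.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))


# Hypothetical result lists from a dense retriever and a sparse retriever.
dense_ranking = ["d2", "d1", "d3"]
sparse_ranking = ["d1", "d3", "d2"]
fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
```

RRF uses only ranks, so it does not require the dense and sparse scores to be on the same scale. A weighted sum of normalized scores is another option when the scores are comparable.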

A vector model tuning service is also supported. You can train a custom dimensionality reduction model that reduces the dimensions of vectors without significantly degrading the retrieval results.
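One way to check whether a dimensionality reduction degrades retrieval is to compare the top-k results before and after reduction. The sketch below is only an evaluation harness under loud assumptions: plain truncation stands in for a trained reduction model (the platform's model is not described in the source), scoring uses a simple dot product, and all function names are hypothetical.

```python
from typing import Dict, List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def top_k(query: Sequence[float], docs: Dict[str, Sequence[float]], k: int) -> List[str]:
    """IDs of the k highest-scoring documents; ties are broken by document ID."""
    ordered = sorted(docs.items(), key=lambda item: (-dot(query, item[1]), item[0]))
    return [doc_id for doc_id, _ in ordered[:k]]


def truncate(vec: Sequence[float], dims: int) -> List[float]:
    """Stand-in reducer: keep the first `dims` components (NOT a trained model)."""
    return list(vec[:dims])


def overlap_at_k(
    query: Sequence[float], docs: Dict[str, Sequence[float]], dims: int, k: int
) -> float:
    """Fraction of the full-dimension top-k that survives in the reduced-dimension top-k."""
    full = set(top_k(query, docs, k))
    reduced_docs = {doc_id: truncate(vec, dims) for doc_id, vec in docs.items()}
    reduced = set(top_k(truncate(query, dims), reduced_docs, k))
    return len(full & reduced) / k
```

An overlap close to 1.0 across a representative set of queries suggests the reduced vectors preserve the ranking. A lower value means the reduction discards information that those queries depend on.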

Query analysis

Provides a query analysis service based on LLMs and natural language processing (NLP) capabilities. The service identifies user intent, expands a query with similar questions, and converts natural language questions into SQL statements. This improves conversational search in RAG scenarios.

Search engine

Provides the vector and text retrieval engines. You can store vectors and texts, build indexes, and perform online vector and text retrieval. After you enable the engines, you can use the engines together with the API operations of AI Search Open Platform to process and retrieve data.

Sorting

Provides a service that sorts documents by their relevance to a query. In RAG and search scenarios, you can use the sorting service to identify the most relevant content and return it in order of relevance. The sorting service can effectively improve the accuracy of retrieval and LLM generation.
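The role of the sorting step in a pipeline can be sketched as a function that rescores retrieved candidates and keeps the best ones. In this illustration a toy word-overlap score stands in for a real relevance model; `overlap_score` and `rerank` are hypothetical names, not platform API operations.

```python
from typing import Callable, List, Tuple


def overlap_score(query: str, doc: str) -> float:
    """Toy relevance score: the share of distinct query words that appear in the document."""
    query_terms = set(query.lower().split())
    doc_terms = set(doc.lower().split())
    if not query_terms:
        return 0.0
    return len(query_terms & doc_terms) / len(query_terms)


def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float] = overlap_score,
    top_n: int = 3,
) -> List[Tuple[str, float]]:
    """Score each candidate against the query and return the top_n, best first.

    The sort is stable, so candidates with equal scores keep their retrieval order.
    """
    scored = [(doc, score_fn(query, doc)) for doc in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]
```

Because `score_fn` is a parameter, the toy scorer can be swapped for a call to a relevance model without changing the surrounding pipeline. Passing only the top results to an LLM keeps the prompt short and focused on relevant content.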

LLM-based text generation

Provides various LLMs, including Qwen3-235B-A22B models, QwQ models, all DeepSeek models (including DeepSeek R1 and V3, and the 7B and 14B distilled models), and the Qwen series (Qwen-Turbo, Qwen-Plus, and Qwen-Max). AI Search Open Platform also provides the built-in OpenSearch-Qwen-Turbo model, which is developed based on the qwen-turbo model and has undergone supervised fine-tuning to strengthen its RAG capabilities and reduce the hallucination rate.
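In a RAG pipeline, the text generation step typically receives the user question together with the retrieved passages. The sketch below shows one generic way to assemble such a grounded prompt. The prompt wording and the `build_rag_prompt` helper are assumptions for illustration; they are not a platform API, and no model is called.

```python
from typing import List


def build_rag_prompt(question: str, passages: List[str], max_passages: int = 3) -> str:
    """Build a prompt that asks the model to answer only from the given passages."""
    selected = passages[:max_passages]  # passages are assumed to be sorted by relevance
    numbered = "\n".join(f"[{i}] {text}" for i, text in enumerate(selected, start=1))
    return (
        "Answer the question using only the passages below. "
        "If the passages do not contain the answer, say that you do not know.\n\n"
        f"Passages:\n{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

Numbering the passages lets the model cite its sources, and the explicit fallback instruction is a common way to discourage answers that are not grounded in the retrieved content.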

Benefits

Rich AI search capabilities: AI Search Open Platform allows you to train dedicated AI search models on top of leading foundation models, and integrates end-to-end component-based services for search and RAG scenarios.

Flexible call methods: Developers, enterprise customers, and independent software vendors (ISVs) can call API operations or use SDKs to integrate some or all of the AI search services with their business systems.

Out-of-the-box availability: All services are available immediately after you activate AI Search Open Platform.

Best practices: Drawing on years of experience in intelligent search and RAG, AI Search Open Platform provides a variety of AI search best practices to help you quickly build search pipelines that are tailored to your business requirements.

Scenarios

You can use AI Search Open Platform to develop services for the following scenarios:

RAG

Application scenarios:

Intelligent customer service

Conversational search

Knowledge graph enhancement

Personalized recommendation

For information about development examples, see

Multi-modal search

Application scenarios:

E-commerce and retail

News content

Gaming

Healthcare

Finance

For information about development examples, see