The Qwen image editing models support multi-image input and output. They can accurately modify text in an image, add or remove objects, adjust a subject's pose, transfer an image style, and enhance image details.

Model overview

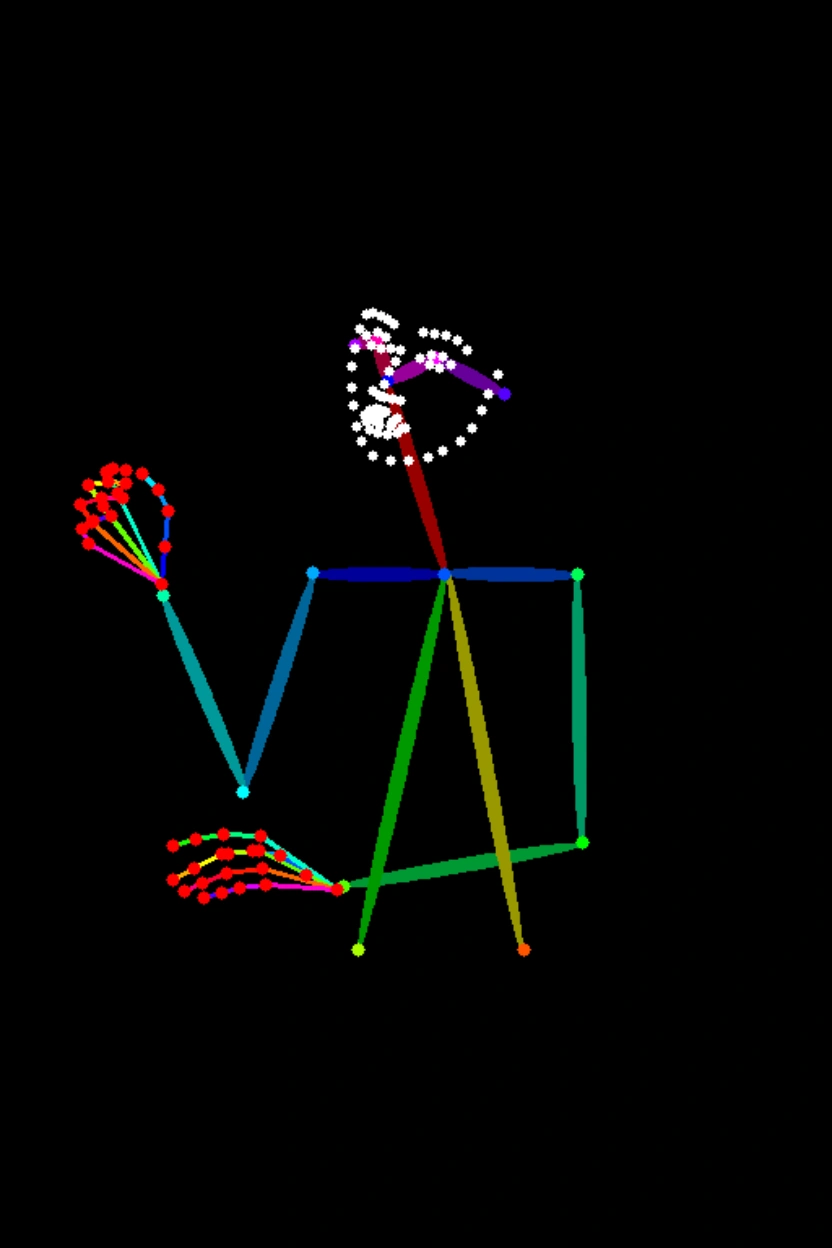

Figure: Input image 1, Input image 2, and Input image 3, followed by the output images (multiple images).

Input prompt: The girl in Image 1 wears the black dress from Image 2 and sits in the pose from Image 3.
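The prompt above refers to its inputs by position (Image 1, Image 2, Image 3). The following is a minimal sketch of assembling such a request into the `content` list used by the SDK examples on this page. The helper name `build_user_message` is hypothetical. The one-to-three image limit is taken from the comment in those examples, and the idea that list order determines the image numbering is an assumption.

```python
def build_user_message(image_refs, prompt):
    """Return a user message with the images listed first and the text prompt last.

    Assumption: the position of each entry in image_refs is what the prompt
    refers to as Image 1, Image 2, and so on.
    """
    if not 1 <= len(image_refs) <= 3:
        raise ValueError("expected one to three input images")
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    content = [{"image": ref} for ref in image_refs]
    content.append({"text": prompt})
    return {"role": "user", "content": content}


if __name__ == "__main__":
    message = build_user_message(
        ["https://example.com/girl.png", "https://example.com/dress.png"],
        "The girl in Image 1 wears the black dress from Image 2.",
    )
    print(message)
```

Each image reference can be a public URL or a Base64 data URI, as in the request examples later on this page.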

| Model | Description | Output image specifications |
| --- | --- | --- |
| qwen-image-2.0-pro (currently has the same capabilities as qwen-image-2.0-pro-2026-03-03) | The Qwen Pro series for image generation and editing. It offers enhanced capabilities for text rendering, realistic textures, and semantic adherence. For image generation, see Qwen-Image. | Image resolution: / Image format: PNG / Number of images: 1 to 6 |
| qwen-image-2.0-pro-2026-03-03 | (same as above) | (same as above) |
| qwen-image-2.0 (currently has the same capabilities as qwen-image-2.0-2026-03-03) | An accelerated version of the Qwen image generation and editing model that balances performance and response speed. For image generation, see Qwen-Image. | (same as above) |
| qwen-image-2.0-2026-03-03 | (same as above) | (same as above) |
| qwen-image-edit-max (currently has the same capabilities as qwen-image-edit-max-2026-01-16) | The Qwen image editing Max series. It offers enhanced capabilities for industrial design, geometric reasoning, and character consistency. | Image resolution: / Image format: PNG / Number of images: 1 to 6 |
| qwen-image-edit-max-2026-01-16 | (same as above) | (same as above) |
| qwen-image-edit-plus (currently has the same capabilities as qwen-image-edit-plus-2025-10-30) | The Qwen image editing Plus series supports multi-image output and custom resolutions. | (same as above) |
| qwen-image-edit-plus-2025-12-15 | (same as above) | (same as above) |
| qwen-image-edit-plus-2025-10-30 | (same as above) | (same as above) |
| qwen-image-edit | Supports single-image editing and multi-image fusion. | Image resolution: Not customizable. The output resolution follows the default behavior described above. / Image format: PNG / Number of images: 1 |
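The output-count limits in the table above can be checked before a request is sent. The following is a minimal sketch with hypothetical helper names (`max_output_images`, `check_n`); it assumes that dated snapshots such as `qwen-image-edit-plus-2025-10-30` share the limit of their model family.

```python
# Maximum number of output images per model family, as listed in the table above.
MAX_IMAGES = {
    "qwen-image-2.0-pro": 6,
    "qwen-image-2.0": 6,
    "qwen-image-edit-max": 6,
    "qwen-image-edit-plus": 6,
    "qwen-image-edit": 1,
}


def max_output_images(model):
    """Return the output-image limit for a model name or dated snapshot."""
    # The longest matching family wins, so "qwen-image-edit-plus-..." is not
    # mistaken for the single-output "qwen-image-edit".
    matches = [
        name for name in MAX_IMAGES
        if model == name or model.startswith(name + "-")
    ]
    if not matches:
        raise ValueError(f"unknown model: {model}")
    return MAX_IMAGES[max(matches, key=len)]


def check_n(model, n):
    """Return n if it is within the model's limit, otherwise raise ValueError."""
    limit = max_output_images(model)
    if not 1 <= n <= limit:
        raise ValueError(f"{model} supports 1 to {limit} output images, got {n}")
    return n


if __name__ == "__main__":
    print(check_n("qwen-image-2.0-pro", 2))
```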

Before calling the API, see Models for each region to confirm which models are available in your region.

Prerequisites

Before making a call, get an API key and export the API key as an environment variable.

To call the API using the SDK, install the DashScope SDK. The SDK is available for Python and Java.

The Beijing and Singapore regions have separate API keys and request endpoints. Do not use them interchangeably. Cross-region calls result in authentication failures or service errors.
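One way to avoid mixing regions is to resolve the base URL and the API key together in one place. The following is a minimal sketch: the base URLs and the `DASHSCOPE_API_KEY` variable name come from the SDK examples on this page, while the region labels and the `client_settings` helper are hypothetical.

```python
import os

# Base URLs as used in the SDK examples on this page.
# The dictionary keys are hypothetical labels, not official region identifiers.
BASE_URLS = {
    "singapore": "https://dashscope-intl.aliyuncs.com/api/v1",
    "beijing": "https://dashscope.aliyuncs.com/api/v1",
}


def client_settings(region, env=None):
    """Return the base URL and API key for one region, failing early if either is missing."""
    env = os.environ if env is None else env
    if region not in BASE_URLS:
        raise ValueError(f"unknown region: {region!r}")
    api_key = env.get("DASHSCOPE_API_KEY", "").strip()
    if not api_key:
        raise RuntimeError("DASHSCOPE_API_KEY is not set; export it before calling the API")
    return {"base_url": BASE_URLS[region], "api_key": api_key}


if __name__ == "__main__":
    settings = client_settings("singapore", env={"DASHSCOPE_API_KEY": "sk-xxx"})
    print(settings["base_url"])
```

This check only verifies that a key is present. It cannot tell which region issued the key, so keeping one key per deployment environment remains the caller's responsibility.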

HTTP

Singapore: POST https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation

Beijing: POST https://dashscope.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation

Request parameters

Single-image editing: This example shows how to use the

Multi-image fusion: This example shows how to use the

Headers

Content-Type: The content type of the request. Must be

Authorization: The authentication credentials using a Model Studio API key. Example:

Request body

model: The model name. Example value: qwen-image-2.0-pro.

input: The input object, which contains the following fields:

parameters: Additional parameters to control image generation.
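The request fields above can be combined into a single HTTP request. The following sketch only builds the request object and does not send it. Several details are assumptions and are not stated in the parameter list: the `application/json` content type, the `Bearer` authorization scheme, and the `input.messages` layout, which mirrors the `messages` structure of the SDK examples on this page. The helper name `build_request` is hypothetical.

```python
import json
import urllib.request

# Singapore endpoint from this page; use the Beijing endpoint for Beijing-region keys.
ENDPOINT = "https://dashscope-intl.aliyuncs.com/api/v1/services/aigc/multimodal-generation/generation"


def build_request(api_key, model, image_urls, prompt, **parameters):
    """Build (but do not send) a POST request for an image editing call."""
    # Assumed body layout: images first, then the text prompt, in one user message.
    content = [{"image": url} for url in image_urls] + [{"text": prompt}]
    body = {
        "model": model,
        "input": {"messages": [{"role": "user", "content": content}]},
        "parameters": parameters,
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",    # assumed value
            "Authorization": f"Bearer {api_key}",  # assumed scheme
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_request(
        "sk-xxx",
        "qwen-image-2.0-pro",
        ["https://example.com/input.png"],
        "Replace the sky with a sunset.",
        n=2,
        size="1024*1536",
    )
    print(req.full_url)
    print(req.data.decode("utf-8"))
    # To send it: urllib.request.urlopen(req, timeout=300)
```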

Response parameters

Successful response: Task data (task status and image URLs) is retained for only 24 hours and then automatically purged. Save generated images promptly.

Error response: If a task fails, the response returns relevant information. You can identify the cause of the failure from the code and message fields. For more information about how to resolve errors, see Error codes.

output: The results generated by the model.

usage: The resource usage for this request. This parameter is returned only when the request is successful.

request_id: Unique identifier for the request. Use for tracing and troubleshooting issues.

code: The error code. Returned only when the request fails. See error codes for details.

message: Detailed error message. Returned only when the request fails. See error codes for details.
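The following is a minimal sketch of handling both response shapes described above, given a response body that has already been decoded from JSON into a dictionary. The nesting `output.choices[].message.content[].image` follows the response examples on this page. The names `ImageEditError` and `extract_image_urls` are hypothetical.

```python
class ImageEditError(Exception):
    """Raised when a response carries an error code."""

    def __init__(self, code, message, request_id=None):
        super().__init__(f"{code}: {message} (request_id={request_id})")
        self.code = code
        self.message = message
        self.request_id = request_id


def extract_image_urls(response):
    """Return the image URLs from a successful response, or raise ImageEditError."""
    # A non-empty "code" marks a failed request; successful SDK responses
    # carry an empty string here.
    if response.get("code"):
        raise ImageEditError(
            response["code"],
            response.get("message", ""),
            response.get("request_id"),
        )
    urls = []
    for choice in (response.get("output") or {}).get("choices") or []:
        for part in (choice.get("message") or {}).get("content") or []:
            if "image" in part:
                urls.append(part["image"])
    return urls


if __name__ == "__main__":
    ok = {
        "request_id": "example-id",
        "code": "",
        "output": {"choices": [{"message": {"content": [{"image": "https://example.com/1.png"}]}}]},
    }
    print(extract_image_urls(ok))
```

Including `request_id` in the exception keeps the identifier available for tracing when the error is logged.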

DashScope SDK

The SDK parameter names are mostly consistent with the HTTP API. For a complete list of parameters, see the Qwen API reference.

Python SDK

Install the latest version of the DashScope Python SDK. Otherwise, runtime errors may occur. See Install or upgrade the SDK.

Asynchronous APIs are not supported.

Request examples

Pass an image using a public URL

import json
import os

import dashscope
from dashscope import MultiModalConversation

# The following is the URL for the Singapore region. If you use a model in the Beijing region, replace the URL with: https://dashscope.aliyuncs.com/api/v1
dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'

# The model supports one to three input images.
messages = [
    {
        "role": "user",
        "content": [
            {"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20250925/thtclx/input1.png"},
            {"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20250925/iclsnx/input2.png"},
            {"image": "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20250925/gborgw/input3.png"},
            {"text": "Make the girl from Image 1 wear the black dress from Image 2 and sit in the pose from Image 3."}
        ]
    }
]

# The Singapore and Beijing regions use separate API keys. Get an API key: https://www.alibabacloud.com/help/en/model-studio/get-api-key
# Replace with your API key if the environment variable is not set: api_key="sk-xxx"
api_key = os.getenv("DASHSCOPE_API_KEY")

# qwen-image-2.0, qwen-image-edit-max, and qwen-image-edit-plus series support outputting 1 to 6 images. This example shows how to output 2 images.
response = MultiModalConversation.call(
    api_key=api_key,
    model="qwen-image-2.0-pro",
    messages=messages,
    stream=False,
    n=2,
    watermark=False,
    negative_prompt=" ",
    prompt_extend=True,
    size="1024*1536",
)

if response.status_code == 200:
    # To view the full response, uncomment the following line.
    # print(json.dumps(response, ensure_ascii=False))
    for i, content in enumerate(response.output.choices[0].message.content):
        print(f"URL of output image {i+1}: {content['image']}")
else:
    print(f"HTTP status code: {response.status_code}")
    print(f"Error code: {response.code}")
    print(f"Error message: {response.message}")
    print("For more information, see the documentation: https://www.alibabacloud.com/help/en/model-studio/error-code")

Pass an image using Base64 encoding

import base64
import json
import mimetypes
import os

import dashscope
from dashscope import MultiModalConversation

# The following is the URL for the Singapore region. If you use a model in the Beijing region, replace the URL with: https://dashscope.aliyuncs.com/api/v1
dashscope.base_http_api_url = 'https://dashscope-intl.aliyuncs.com/api/v1'


# --- For Base64 encoding ---
# Format: data:{mime_type};base64,{base64_data}
def encode_file(file_path):
    mime_type, _ = mimetypes.guess_type(file_path)
    if not mime_type or not mime_type.startswith("image/"):
        raise ValueError("Unsupported or unrecognized image format")
    try:
        with open(file_path, "rb") as image_file:
            encoded_string = base64.b64encode(
                image_file.read()).decode('utf-8')
        return f"data:{mime_type};base64,{encoded_string}"
    except IOError as e:
        raise IOError(f"Error reading file: {file_path}, Error: {str(e)}") from e


# Get the Base64 encoding of the image.
# Call the encoding function. Replace "/path/to/your/image.png" with the path to your local image file.
image = encode_file("/path/to/your/image.png")

messages = [
    {
        "role": "user",
        "content": [
            {"image": image},
            {"text": "Generate an image that matches the depth map, following this description: A dilapidated red bicycle is parked on a muddy path with a dense primeval forest in the background."}
        ]
    }
]

# The Singapore and Beijing regions use separate API keys. Get an API key: https://www.alibabacloud.com/help/en/model-studio/get-api-key
# Replace with your API key if the environment variable is not set: api_key="sk-xxx"
api_key = os.getenv("DASHSCOPE_API_KEY")

# qwen-image-2.0, qwen-image-edit-max, and qwen-image-edit-plus series support outputting 1 to 6 images. This example shows how to output 2 images.
response = MultiModalConversation.call(
    api_key=api_key,
    model="qwen-image-2.0-pro",
    messages=messages,
    stream=False,
    n=2,
    watermark=False,
    negative_prompt=" ",
    prompt_extend=True,
    size="1536*1024",
)

if response.status_code == 200:
    # To view the full response, uncomment the following line.
    # print(json.dumps(response, ensure_ascii=False))
    for i, content in enumerate(response.output.choices[0].message.content):
        print(f"URL of output image {i+1}: {content['image']}")
else:
    print(f"HTTP status code: {response.status_code}")
    print(f"Error code: {response.code}")
    print(f"Error message: {response.message}")
    print("For more information, see the documentation: https://www.alibabacloud.com/help/en/model-studio/error-code")

Download an image from a URL

# Install the requests library to download images: pip install requests
import requests


def download_image(image_url, save_path='output.png'):
    try:
        response = requests.get(image_url, stream=True, timeout=300)  # Set timeout
        response.raise_for_status()  # Raise an exception for 4xx or 5xx HTTP status codes
        with open(save_path, 'wb') as f:
            for chunk in response.iter_content(chunk_size=8192):
                f.write(chunk)
        print(f"Image downloaded successfully to: {save_path}")
    except requests.exceptions.RequestException as e:
        print(f"Image download failed: {e}")


image_url = "https://dashscope-result-sz.oss-cn-shenzhen.aliyuncs.com/xxx.png?Expires=xxx"
download_image(image_url, save_path='output.png')
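When a call returns several images, each link needs its own file. The following sketch pairs every image URL in a response `content` list with a numbered PNG path and then saves them. It uses the standard library's `urllib` rather than `requests` so that it has no third-party dependency. The helper names `plan_downloads` and `download_all` are hypothetical.

```python
import urllib.request
from pathlib import Path


def plan_downloads(content, directory=".", prefix="output"):
    """Pair each image URL in a response content list with a numbered PNG path."""
    urls = [part["image"] for part in content if "image" in part]
    return [
        (url, Path(directory) / f"{prefix}_{index}.png")
        for index, url in enumerate(urls, start=1)
    ]


def download_all(content, directory=".", prefix="output", timeout=300):
    """Download every image in the content list and return the saved paths."""
    saved = []
    for url, path in plan_downloads(content, directory, prefix):
        path.parent.mkdir(parents=True, exist_ok=True)
        with urllib.request.urlopen(url, timeout=timeout) as resp, open(path, "wb") as f:
            f.write(resp.read())
        saved.append(path)
    return saved


if __name__ == "__main__":
    example_content = [
        {"image": "https://example.com/first.png"},
        {"image": "https://example.com/second.png"},
    ]
    for url, path in plan_downloads(example_content, directory="results"):
        print(url, "->", path)
```

In the Python SDK examples above, the list to pass is `response.output.choices[0].message.content`.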

Response example

The image link is valid for 24 hours. Download the image promptly.

input_tokens and output_tokens are compatibility fields, currently fixed at 0.

{
    "status_code": 200,
    "request_id": "fa41f9f9-3cb6-434d-a95d-4ae6b9xxxxxx",
    "code": "",
    "message": "",
    "output": {
        "text": null,
        "finish_reason": null,
        "choices": [
            {
                "finish_reason": "stop",
                "message": {
                    "role": "assistant",
                    "content": [
                        {
                            "image": "https://dashscope-result-hz.oss-cn-hangzhou.aliyuncs.com/xxx.png?Expires=xxx"
                        },
                        {
                            "image": "https://dashscope-result-hz.oss-cn-hangzhou.aliyuncs.com/xxx.png?Expires=xxx"
                        }
                    ]
                }
            }
        ],
        "audio": null
    },
    "usage": {
        "input_tokens": 0,
        "output_tokens": 0,
        "characters": 0,
        "height": 1536,
        "image_count": 2,
        "width": 1024
    }
}

Java SDK

Install the latest version of the DashScope Java SDK. Otherwise, a runtime error may occur. See Install or upgrade the SDK.

Request examples

Pass an image using a public URL

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;
import com.alibaba.dashscope.common.MultiModalMessage;
import com.alibaba.dashscope.common.Role;
import com.alibaba.dashscope.exception.ApiException;
import com.alibaba.dashscope.exception.NoApiKeyException;
import com.alibaba.dashscope.exception.UploadFileException;
import com.alibaba.dashscope.utils.JsonUtils;
import com.alibaba.dashscope.utils.Constants;

import java.io.IOException;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.List;

public class QwenImageEdit {

    static {
        // The following URL is for the Singapore region. If you use a model in the Beijing region, replace the URL with: https://dashscope.aliyuncs.com/api/v1
        Constants.baseHttpApiUrl = "https://dashscope-intl.aliyuncs.com/api/v1";
    }

    // The Singapore and Beijing regions use separate API keys. Get an API key: https://www.alibabacloud.com/help/en/model-studio/get-api-key
    // If you have not configured the environment variable, replace the following line with your API key: apiKey="sk-xxx"
    static String apiKey = System.getenv("DASHSCOPE_API_KEY");

    public static void call() throws ApiException, NoApiKeyException, UploadFileException, IOException {
        MultiModalConversation conv = new MultiModalConversation();

        // The model supports one to three input images.
        MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())
                .content(Arrays.asList(
                        Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20250925/thtclx/input1.png"),
                        Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20250925/iclsnx/input2.png"),
                        Collections.singletonMap("image", "https://help-static-aliyun-doc.aliyuncs.com/file-manage-files/zh-CN/20250925/gborgw/input3.png"),
                        Collections.singletonMap("text", "Make the girl from Image 1 wear the black dress from Image 2 and sit in the pose from Image 3.")
                )).build();

        // qwen-image-2.0, qwen-image-edit-max, and qwen-image-edit-plus series support outputting 1 to 6 images. This example shows how to output 2 images.
        Map<String, Object> parameters = new HashMap<>();
        parameters.put("watermark", false);
        parameters.put("negative_prompt", " ");
        parameters.put("n", 2);
        parameters.put("prompt_extend", true);
        parameters.put("size", "1024*1536");

        MultiModalConversationParam param = MultiModalConversationParam.builder()
                .apiKey(apiKey)
                .model("qwen-image-2.0-pro")
                .messages(Collections.singletonList(userMessage))
                .parameters(parameters)
                .build();

        MultiModalConversationResult result = conv.call(param);

        // To view the complete response, uncomment the following line.
        // System.out.println(JsonUtils.toJson(result));
        List<Map<String, Object>> contentList = result.getOutput().getChoices().get(0).getMessage().getContent();
        int imageIndex = 1;
        for (Map<String, Object> content : contentList) {
            if (content.containsKey("image")) {
                System.out.println("URL of output image " + imageIndex + ": " + content.get("image"));
                imageIndex++;
            }
        }
    }

    public static void main(String[] args) {
        try {
            call();
        } catch (ApiException | NoApiKeyException | UploadFileException | IOException e) {
            System.out.println(e.getMessage());
        }
    }
}

Pass an image using Base64 encoding

import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversation;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationParam;
import com.alibaba.dashscope.aigc.multimodalconversation.MultiModalConversationResult;
import com.alibaba.dashscope.common.MultiModalMessage;
import com.alibaba.dashscope.common.Role;
import com.alibaba.dashscope.exception.ApiException;
import com.alibaba.dashscope.exception.NoApiKeyException;
import com.alibaba.dashscope.exception.UploadFileException;
import com.alibaba.dashscope.utils.JsonUtils;
import com.alibaba.dashscope.utils.Constants;

import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Arrays;
import java.util.Base64;
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.List;

public class QwenImageEdit {

    static {
        // The following URL is for the Singapore region. If you use a model in the Beijing region, replace the URL with: https://dashscope.aliyuncs.com/api/v1
        Constants.baseHttpApiUrl = "https://dashscope-intl.aliyuncs.com/api/v1";
    }

    // The Singapore and Beijing regions use separate API keys. Get an API key: https://www.alibabacloud.com/help/en/model-studio/get-api-key
    // If you have not configured the environment variable, replace the following line with your API key: apiKey="sk-xxx"
    static String apiKey = System.getenv("DASHSCOPE_API_KEY");

    public static void call() throws ApiException, NoApiKeyException, UploadFileException, IOException {
        // Replace "/path/to/your/image.png" with the path to your local image file.
        String image = encodeFile("/path/to/your/image.png");

        MultiModalConversation conv = new MultiModalConversation();

        MultiModalMessage userMessage = MultiModalMessage.builder().role(Role.USER.getValue())
                .content(Arrays.asList(
                        Collections.singletonMap("image", image),
                        Collections.singletonMap("text", "Generate an image that matches the depth map, following this description: A dilapidated red bicycle is parked on a muddy path with a dense primeval forest in the background.")
                )).build();

        // qwen-image-2.0, qwen-image-edit-max, and qwen-image-edit-plus series support outputting 1 to 6 images. This example shows how to output 2 images.
        Map<String, Object> parameters = new HashMap<>();
        parameters.put("watermark", false);
        parameters.put("negative_prompt", " ");
        parameters.put("n", 2);
        parameters.put("prompt_extend", true);
        parameters.put("size", "1536*1024");

        MultiModalConversationParam param = MultiModalConversationParam.builder()
                .apiKey(apiKey)
                .model("qwen-image-2.0-pro")
                .messages(Collections.singletonList(userMessage))
                .parameters(parameters)
                .build();

        MultiModalConversationResult result = conv.call(param);

        // To view the complete response, uncomment the following line.
        // System.out.println(JsonUtils.toJson(result));
        List<Map<String, Object>> contentList = result.getOutput().getChoices().get(0).getMessage().getContent();
        int imageIndex = 1;
        for (Map<String, Object> content : contentList) {
            if (content.containsKey("image")) {
                System.out.println("URL of output image " + imageIndex + ": " + content.get("image"));
                imageIndex++;
            }
        }
    }

    /**
     * Encodes a file into a Base64 string.
     * @param filePath The file path.
     * @return A Base64 string in the format: data:{mime_type};base64,{base64_data}
     */
    public static String encodeFile(String filePath) {
        Path path = Paths.get(filePath);
        if (!Files.exists(path)) {
            throw new IllegalArgumentException("File does not exist: " + filePath);
        }

        // Detect the MIME type.
        String mimeType = null;
        try {
            mimeType = Files.probeContentType(path);
        } catch (IOException e) {
            throw new IllegalArgumentException("Unable to detect file type: " + filePath);
        }
        if (mimeType == null || !mimeType.startsWith("image/")) {
            throw new IllegalArgumentException("Unsupported or unrecognized image format");
        }

        // Read the file content and encode it.
        byte[] fileBytes = null;
        try {
            fileBytes = Files.readAllBytes(path);
        } catch (IOException e) {
            throw new IllegalArgumentException("Unable to read file content: " + filePath);
        }

        String encodedString = Base64.getEncoder().encodeToString(fileBytes);
        return "data:" + mimeType + ";base64," + encodedString;
    }

    public static void main(String[] args) {
        try {
            call();
        } catch (ApiException | NoApiKeyException | UploadFileException | IOException e) {
            System.out.println(e.getMessage());
        }
    }
}

Download an image from a URL

import java.io.FileOutputStream;
import java.io.InputStream;
import java.net.HttpURLConnection;
import java.net.URL;

public class ImageDownloader {

    public static void downloadImage(String imageUrl, String savePath) {
        try {
            URL url = new URL(imageUrl);
            HttpURLConnection connection = (HttpURLConnection) url.openConnection();
            connection.setConnectTimeout(5000);
            connection.setReadTimeout(300000);
            connection.setRequestMethod("GET");

            // try-with-resources closes both streams even if the copy fails.
            try (InputStream inputStream = connection.getInputStream();
                 FileOutputStream outputStream = new FileOutputStream(savePath)) {
                byte[] buffer = new byte[8192];
                int bytesRead;
                while ((bytesRead = inputStream.read(buffer)) != -1) {
                    outputStream.write(buffer, 0, bytesRead);
                }
            }

            System.out.println("Image downloaded successfully to: " + savePath);
        } catch (Exception e) {
            System.err.println("Image download failed: " + e.getMessage());
        }
    }

    public static void main(String[] args) {
        String imageUrl = "http://dashscope-result-bj.oss-cn-beijing.aliyuncs.com/xxx?Expires=xxx";
        String savePath = "output.png";
        downloadImage(imageUrl, savePath);
    }
}

Response example

The image link is valid for 24 hours. Download the image promptly.

{
    "requestId": "46281da9-9e02-941c-ac78-be88b8xxxxxx",
    "usage": {
        "image_count": 2,
        "width": 1024,
        "height": 1536
    },
    "output": {
        "choices": [
            {
                "finish_reason": "stop",
                "message": {
                    "role": "assistant",
                    "content": [
                        {
                            "image": "https://dashscope-result-sz.oss-cn-shenzhen.aliyuncs.com/xxx.png?Expires=xxx"
                        },
                        {
                            "image": "https://dashscope-result-sz.oss-cn-shenzhen.aliyuncs.com/xxx.png?Expires=xxx"
                        }
                    ]
                }
            }
        ]
    }
}

Error codes

If a model call fails and returns an error message, see Error messages to resolve the issue.

Billing and rate limiting

For model pricing and free quotas, see Model list and pricing.

For rate limits, see Rate limits.

Billing description: You are charged based on the number of images that are successfully generated. Failed model calls or processing errors do not incur fees or consume the free quota.
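Because only successfully generated images are billed, a rough local tally can be kept from the `usage.image_count` field shown in the response examples. The following is a minimal sketch for estimation only. The helper name `billable_image_count` is hypothetical, it assumes that a non-empty `code` marks a failed call, and the authoritative figure is always the bill itself.

```python
def billable_image_count(responses):
    """Sum usage.image_count over the responses that succeeded."""
    total = 0
    for response in responses:
        if response.get("code"):
            # Failed call: per the billing description, nothing is charged.
            continue
        total += (response.get("usage") or {}).get("image_count", 0)
    return total


if __name__ == "__main__":
    history = [
        {"code": "", "usage": {"image_count": 2}},
        {"code": "InvalidParameter", "message": "example failure"},
        {"code": "", "usage": {"image_count": 1}},
    ]
    print(billable_image_count(history))
```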

FAQ

Q: What languages do the Qwen image editing models support?

A: They officially support Simplified Chinese and English. Other languages may work, but results are not guaranteed.

Q: How do I view model invocation metrics?

A: One hour after a model invocation completes, go to the Monitoring (Singapore) or Monitoring (China (Beijing)) page to view metrics such as invocation count and success rate. For more information, see Bill query and cost management.

Q: How do I get the domain name whitelist for image storage?

A: Images generated by the models are stored in OSS, and the API returns a temporary public URL. Because the underlying storage may change dynamically, this topic does not provide a fixed OSS domain name whitelist, which could become outdated and cause access issues. If you need to configure a firewall whitelist for the download URL, contact your account manager to obtain the latest OSS domain name list.