Data Transmission Service (DTS) allows you to synchronize data from a MongoDB database to an AnalyticDB for PostgreSQL instance. This topic describes how to synchronize data from an ApsaraDB for MongoDB replica set instance to an AnalyticDB for PostgreSQL instance by using DTS.

Prerequisites

The destination AnalyticDB for PostgreSQL instance is created. The available storage space of this instance is larger than the total size of data in the source ApsaraDB for MongoDB instance. For more information about how to create an AnalyticDB for PostgreSQL instance, see Create an instance.

Note: We recommend that the available storage space of the destination database be at least 10% larger than the total size of data in the source database.

A database, a schema, and a table with a primary key column are created in the destination AnalyticDB for PostgreSQL instance to receive data. For more information, see SQL syntax.

Important: Make sure that the data types of the columns in the destination table are compatible with the data in the source ApsaraDB for MongoDB instance. For example, if the _id field in the ApsaraDB for MongoDB instance is of the ObjectId type, the corresponding column in the AnalyticDB for PostgreSQL instance must be of the varchar type.

Important: Do not name the columns of the destination table in the AnalyticDB for PostgreSQL instance _id or _value.

The endpoints of all shard nodes are obtained and the usernames and passwords of the accounts used to log on to the shard nodes are consistent if the source database is an ApsaraDB for MongoDB sharded cluster instance. For more information, see Apply for an endpoint for a shard.
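The storage and column-naming prerequisites above can be checked before you configure a task. The following is a minimal sketch and not part of DTS or any Alibaba Cloud SDK: the helper names `has_enough_storage` and `reserved_column_names` are hypothetical, and the sizes are assumed to be values that you look up yourself and pass in the same unit.

```python
# Hypothetical preflight helpers; not part of DTS or any Alibaba Cloud SDK.

# Column names that the destination table must not use.
RESERVED_COLUMN_NAMES = {"_id", "_value"}


def has_enough_storage(source_size: int, available_size: int, headroom_percent: int = 10) -> bool:
    """Return True if the available space is at least (100 + headroom)% of the source size.

    Integer arithmetic is used so that the comparison is not affected by
    floating-point rounding.
    """
    return available_size * 100 >= source_size * (100 + headroom_percent)


def reserved_column_names(columns: list) -> list:
    """Return the destination column names that collide with reserved names."""
    return [name for name in columns if name.lower() in RESERVED_COLUMN_NAMES]


if __name__ == "__main__":
    print(has_enough_storage(source_size=100, available_size=110))
    print(reserved_column_names(["mongo_id", "_id", "person_name"]))
```

An empty list from `reserved_column_names` means that no column name conflicts with the restriction described above.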

Usage notes

Category | Description |

Limits on the source database |

|

Other limits |

|

Billing

Synchronization type | Task configuration fee |

Full data synchronization | Free of charge. |

Incremental data synchronization | Charged. For more information, see Billing overview. |

Synchronization types

Synchronization type | Description |

Full data synchronization | DTS synchronizes the historical data of the selected objects from the source ApsaraDB for MongoDB instance to the destination AnalyticDB for PostgreSQL instance. |

Incremental data synchronization | After full data synchronization is complete, DTS synchronizes incremental data from the source ApsaraDB for MongoDB instance to the destination AnalyticDB for PostgreSQL instance. Note

|

Permissions required for database accounts

Database | Required permissions | References |

Source MongoDB instance | Read permissions on the source, admin, and local databases. | |

Destination AnalyticDB for PostgreSQL instance | Read and write permissions on the destination database. | Create and manage a database account and Manage users and permissions. Note: You can use the initial account or an account that has the RDS_SUPERUSER permission. |

Procedure

Use one of the following methods to go to the Data Synchronization page and select the region in which the data synchronization instance resides.

DTS console

Log on to the DTS console.

In the left-side navigation pane, click Data Synchronization.

In the upper-left corner of the page, select the region in which the data synchronization task resides.

DMS console

Note: The actual operations may vary based on the mode and layout of the DMS console. For more information, see Simple mode and Customize the layout and style of the DMS console.

Log on to the DMS console.

In the top navigation bar, move the pointer over Data + AI and choose .

From the drop-down list to the right of Data Synchronization Tasks, select the region in which the data synchronization instance resides.

Click Create Task to go to the task configuration page.

Configure the source and destination databases. The following table describes the parameters.

Section

Parameter

Description

N/A

Task Name

The name of the DTS task. DTS automatically generates a task name. We recommend that you specify a descriptive name that makes it easy to identify the task. You do not need to specify a unique task name.

Source Database

Select Existing Connection

If you use a database instance that is registered with DTS, select the instance from the drop-down list. DTS automatically populates the following database parameters for the instance. For more information, see Manage database connections.

Note: In the DMS console, you can select the database instance from the Select a DMS database instance drop-down list.

If the instance is not registered with DTS, or you do not want to use a registered instance, you must configure the following database information.

Database Type

The type of the source database. Select MongoDB.

Access Method

The access method of the source database. Select Alibaba Cloud Instance.

Instance Region

The region in which the source ApsaraDB for MongoDB instance resides.

Replicate Data Across Alibaba Cloud Accounts

In this example, a database of the current Alibaba Cloud account is used. Select No.

Architecture

The architecture in which the source instance is deployed. In this example, Replica Set is selected.

Note: If the source ApsaraDB for MongoDB instance is deployed in the Sharded Cluster architecture, you must configure the Shard account and Shard password parameters.

Migration Method

The method used to synchronize incremental data from the source database. Select a method based on your business requirements. Valid values:

Oplog (recommended):

This option is available if the oplog feature is enabled for the source database.

Note: By default, the oplog feature is enabled for both self-managed MongoDB databases and ApsaraDB for MongoDB instances. Because logs are pulled quickly, this method synchronizes incremental data at a low latency. Therefore, we recommend that you select Oplog for the Migration Method parameter.

ChangeStream:

This option is available if change streams are enabled for the source database. For more information, see Change Streams.

Note: If the source database is an inelastic Amazon DocumentDB cluster, you can set the Migration Method parameter only to ChangeStream.

Note: If you select ChangeStream and the Architecture parameter is set to Sharded Cluster, you do not need to configure the Shard account and Shard password parameters.

Instance ID

The ID of the source ApsaraDB for MongoDB instance.

Authentication Database

The name of the authentication database that stores the database accounts and passwords of the source ApsaraDB for MongoDB instance. If you did not change the name before, the default value admin is used.

Database Account

The database account of the source ApsaraDB for MongoDB instance. For more information about the permissions required for the account, see the Permissions required for database accounts section of this topic.

Database Password

The password that is used to access the database.

Encryption

Specifies whether to encrypt the connection to the source database. You can select Non-encrypted, SSL-encrypted, or Mongo Atlas SSL based on your business requirements. The options available for the Encryption parameter are determined by the values selected for the Access Method and Architecture parameters. The options displayed in the DTS console prevail.

Note: If the source is an ApsaraDB for MongoDB instance, the Architecture parameter is set to Sharded Cluster, and the Migration Method parameter is set to Oplog, the SSL-encrypted option of the Encryption parameter is unavailable.

If the source database is a self-managed MongoDB database that uses the Replica Set architecture, the Access Method parameter is not set to Alibaba Cloud Instance, and the Encryption parameter is set to SSL-encrypted, you can upload a certification authority (CA) certificate to verify the connection to the source database.

Destination Database

Select Existing Connection

If you use a database instance that is registered with DTS, select the instance from the drop-down list. DTS automatically populates the following database parameters for the instance. For more information, see Manage database connections.

Note: In the DMS console, you can select the database instance from the Select a DMS database instance drop-down list.

If the instance is not registered with DTS, or you do not want to use a registered instance, you must configure the following database information.

Database Type

The type of the destination database. Select AnalyticDB for PostgreSQL.

Access Method

The access method of the destination database. Select Alibaba Cloud Instance.

Instance Region

The region in which the destination AnalyticDB for PostgreSQL instance resides.

Instance ID

The ID of the destination AnalyticDB for PostgreSQL instance.

Database Name

The name of the database that is used to receive the synchronized objects in the destination AnalyticDB for PostgreSQL instance.

Database Account

The database account of the destination AnalyticDB for PostgreSQL instance. For information about the permissions that are required for the account, see the Permissions required for database accounts section of this topic.

Database Password

The password that is used to access the database.

Click Test Connectivity and Proceed in the lower part of the page.

Note: Make sure that the CIDR blocks of DTS servers can be automatically or manually added to the security settings of the source and destination databases to allow access from DTS servers. For more information, see Add the CIDR blocks of DTS servers.

If the source or destination database is a self-managed database and its Access Method is not set to Alibaba Cloud Instance, click Test Connectivity in the CIDR Blocks of DTS Servers dialog box.

Configure the objects to be synchronized.

In the Configure Objects step, configure the objects that you want to synchronize.

Parameter

Description

Synchronization Types

By default, Incremental Data Synchronization is selected. The only other type that you can select is Full Data Synchronization. Schema Synchronization cannot be selected. After the precheck is complete, DTS synchronizes the historical data of the selected objects from the source database to the destination database. The historical data is the basis for subsequent incremental synchronization.

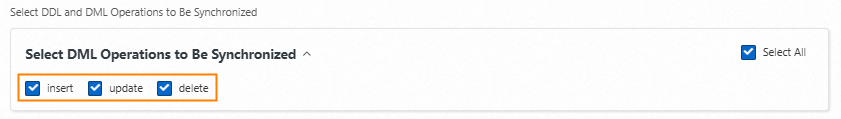

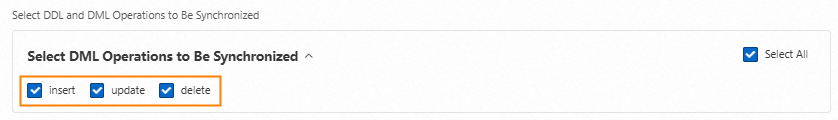

DDL and DML Operations to Be Synchronized

Select the DDL and DML operations that you want to synchronize at the instance level during incremental data synchronization.

Note: To synchronize DDL and DML operations at the collection level during incremental data synchronization, right-click a collection in the Selected Objects section. In the dialog box that appears, select the operations that you want to synchronize.

Processing Mode of Conflicting Tables

Precheck and Report Errors: checks whether the destination database contains tables that have the same names as tables in the source database. If the source and destination databases do not contain tables that have identical table names, the precheck is passed. Otherwise, an error is returned during the precheck, and the data synchronization task cannot be started.

Note: If the source and destination databases contain tables with identical names and the tables in the destination database cannot be deleted or renamed, you can use the object name mapping feature to rename the tables that are synchronized to the destination database. For more information, see Map object names.

Ignore Errors and Proceed: skips the precheck for identical table names in the source and destination databases.

Warning: If you select Ignore Errors and Proceed, data inconsistency may occur and your business may be exposed to potential risks.

If the source and destination databases have the same schema and a data record in the destination database has the same primary key value or unique key value as a data record in the source database:

During full data synchronization, DTS does not synchronize the data record to the destination database. The existing data record in the destination database is retained.

During incremental data synchronization, DTS synchronizes the data record to the destination database. The existing data record in the destination database is overwritten.

If the source and destination databases have different schemas, data may fail to be initialized. In this case, only some columns are synchronized, or the data synchronization instance fails. Proceed with caution.

Source Objects

Select one or more objects from the Source Objects section and click the icon to add the objects to the Selected Objects section.

Note: You can select collections as the objects to be synchronized.

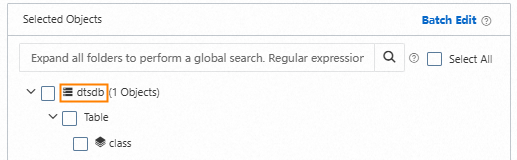

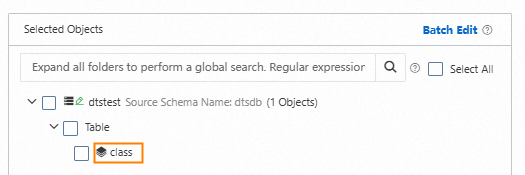

Selected Objects

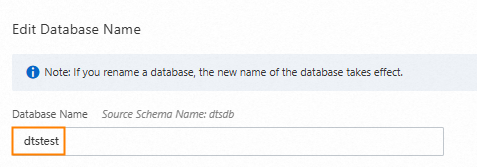

Modify the database name.

In the Selected Objects section, right-click the database to which the collections to be synchronized belong.

Change the value of Database Name to the name of the schema that is used to receive data in the destination AnalyticDB for PostgreSQL instance.

Optional. In the Select DDL and DML Operations to Be Synchronized section, select the operations that you want to synchronize during incremental data synchronization.

Click OK.

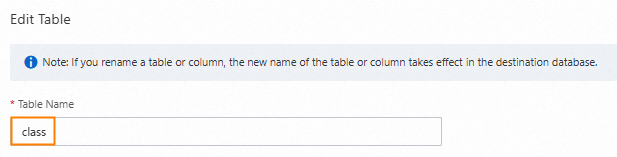

Modify table names.

In the Selected Objects section, right-click a collection.

Change the value of Table Name to the name of the table that is used to receive data in the destination AnalyticDB for PostgreSQL instance.

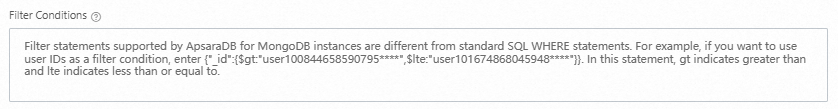

Optional. You can specify conditions to filter data. For more information, see Specify filter conditions.

Optional. In the Select DDL and DML Operations to Be Synchronized section, select the operations that you want to synchronize during incremental data synchronization.

Specify the fields to be synchronized from the source ApsaraDB for MongoDB instance.

By default, DTS maps the data of a collection to be synchronized and configures an expression in the Assign Value column. You must check whether the expression meets your requirements and specify the Column Name, Type, Length, and Precision parameters.

In the bson_value() expression of the Assign Value column, view the field name of the row of data in the ApsaraDB for MongoDB instance. The string enclosed in double quotation marks ("") is the field name in the ApsaraDB for MongoDB instance. For example, if the expression is bson_value("age"), age is the field name of the row of data in the ApsaraDB for MongoDB instance.

Optional: Delete fields that do not need to be synchronized.

Note: Click the icon after the row of data to delete fields that do not need to be synchronized.

Specify the fields to be synchronized.

Perform one of the following procedures based on whether the bson_value() expression meets your requirements.

The expression meets field requirements

Configure the Column Name parameter.

Note: Enter the name of the column in the table that is used to receive data in the destination AnalyticDB for PostgreSQL instance.

Select a data Type for each column.

Important: Make sure that the data type of each column in the destination table is compatible with the data in the source ApsaraDB for MongoDB instance.

Optional: Specify a data length and a precision for each column.

Repeat the preceding steps to map the columns of the source table onto the columns of the destination table.

The expression does not meet field requirements

Note: For example, the source data contains fields with a hierarchical relationship, such as a parent-child structure.

In the Actions column, click the

icon after the row of the data.

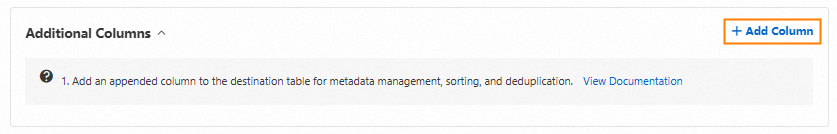

icon after the row of the data.Click + Add Column.

Configure the Column Name, Type, Length, and Precision parameters.

Enter the bson_value() expression in the Assign Value field. For more information, see the Example of value assignment section of this topic.

Important: You must assign bson_value("_id") to the primary key column of the destination table.

Important: You must specify the field and subfield of each column in the corresponding bson_value() expression based on the hierarchical relationship. Otherwise, data loss may occur or the task may fail.

Repeat the preceding steps to map the columns of the source table onto the columns of the destination table.

Click OK.
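The record-level behavior described for Ignore Errors and Proceed under Processing Mode of Conflicting Tables (same schema, matching primary key) can be illustrated with a small simulation. This is only a sketch of the documented outcome, not DTS code: plain dictionaries keyed by primary key stand in for tables, and the function names and sample records are hypothetical.

```python
# Hypothetical simulation of the documented conflict handling; not DTS code.

def apply_full_sync(destination: dict, source_rows: dict) -> None:
    """Full data synchronization: an existing destination record is retained."""
    for key, row in source_rows.items():
        # setdefault writes the row only when the key is not present yet.
        destination.setdefault(key, row)


def apply_incremental_sync(destination: dict, changed_rows: dict) -> None:
    """Incremental data synchronization: an existing destination record is overwritten."""
    for key, row in changed_rows.items():
        destination[key] = row


# The destination already holds a record with primary key "a1".
destination = {"a1": {"name": "old"}}

apply_full_sync(destination, {"a1": {"name": "neo"}, "b2": {"name": "smith"}})
after_full = {key: dict(row) for key, row in destination.items()}

apply_incremental_sync(destination, {"a1": {"name": "neo"}})
after_incremental = {key: dict(row) for key, row in destination.items()}
```

After the full synchronization step, the record with key a1 still holds its original value. After the incremental step, it holds the value from the source.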

Click Next: Advanced Settings to configure advanced settings.

Parameter

Description

Dedicated Cluster for Task Scheduling

By default, DTS schedules the task to the shared cluster if you do not specify a dedicated cluster. If you want to improve the stability of data synchronization instances, purchase a dedicated cluster. For more information, see What is a DTS dedicated cluster.

Retry Time for Failed Connections

The retry time range for failed connections. If the source or destination database fails to be connected after the data synchronization task is started, DTS immediately retries a connection within the time range. Valid values: 10 to 1440. Unit: minutes. Default value: 720. We recommend that you set this parameter to a value greater than 30. If DTS reconnects to the source and destination databases within the specified time range, DTS resumes the data synchronization task. Otherwise, the data synchronization task fails.

Note: If you specify different retry time ranges for multiple data synchronization tasks that have the same source or destination database, the shortest retry time range takes precedence.

When DTS retries a connection, you are charged for the DTS instance. We recommend that you specify the retry time range based on your business requirements. You can also release the DTS instance at your earliest opportunity after the source and destination instances are released.

Retry Time for Other Issues

The retry time range for other issues. For example, if the DDL or DML operations fail to be performed after the data synchronization task is started, DTS immediately retries the operations within the time range. Valid values: 1 to 1440. Unit: minutes. Default value: 10. We recommend that you set this parameter to a value greater than 10. If the failed operations are successfully performed within the specified time range, DTS resumes the data synchronization task. Otherwise, the data synchronization task fails.

Important: The value of the Retry Time for Other Issues parameter must be smaller than the value of the Retry Time for Failed Connections parameter.

Enable Throttling for Full Data Synchronization

During full data synchronization, DTS uses the read and write resources of the source and destination databases. This may increase the load on the database servers. You can configure the Queries per second (QPS) to the source database, RPS of Full Data Migration, and Data migration speed for full migration (MB/s) parameters for full data synchronization tasks to reduce the load on the destination database server.

Note: You can configure this parameter only if Full Data Synchronization is selected for the Synchronization Types parameter.

Only one data type for primary key _id in a table of the data to be synchronized

Specifies whether the primary key _id has only one data type within each collection to be synchronized.

Note: Specify this parameter based on your business requirements. Otherwise, data may be lost.

Note: This parameter is displayed only if Full Data Synchronization is selected for the Synchronization Types parameter.

Valid values:

Yes: The data type is unique. During full data synchronization, DTS does not scan the data types of the primary key _id in the data to be synchronized from the source database. For each collection, DTS synchronizes only the data whose primary key is of a single data type.

No: The data type is not unique. During full data synchronization, DTS scans the data types of the primary key _id in the data to be synchronized from the source database and synchronizes all data to be synchronized.

Enable Throttling for Incremental Data Synchronization

Specifies whether to enable throttling for incremental data synchronization. You can enable throttling for incremental data synchronization based on your business requirements. To configure throttling, you must configure the RPS of Incremental Data Synchronization and Data synchronization speed for incremental synchronization (MB/s) parameters. This reduces the load on the destination database server.

Environment Tag

The environment tag that is used to identify the DTS instance. You can select an environment tag based on your business requirements. In this example, you do not need to configure this parameter.

Configure ETL

Specifies whether to enable the extract, transform, and load (ETL) feature. For more information, see What is ETL? Valid values:

Yes: configures the ETL feature. You can enter data processing statements in the code editor. For more information, see Configure ETL in a data migration or data synchronization task.

No: does not configure the ETL feature.

Monitoring and Alerting

Specifies whether to configure alerting for the data synchronization instance. If the task fails or the synchronization latency exceeds the specified threshold, alert contacts will receive notifications. Valid values:

No: does not enable alerting.

Yes: configures alerting. In this case, you must also configure the alert threshold and alert notification settings. For more information, see the "Configure monitoring and alerting when you create a DTS task" section of the Configure monitoring and alerting topic.
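The value ranges stated above for Retry Time for Failed Connections and Retry Time for Other Issues, together with the rule that the latter must be smaller than the former, can be checked before you enter the values. The following is a minimal sketch that only restates those documented rules. The function name `validate_retry_settings` is hypothetical and is not a DTS API.

```python
# Hypothetical validator that restates the documented ranges; not a DTS API.

def validate_retry_settings(connection_retry_minutes: int, other_retry_minutes: int) -> list:
    """Return a list of problems; an empty list means that all documented rules are met."""
    problems = []
    if not 10 <= connection_retry_minutes <= 1440:
        problems.append("Retry Time for Failed Connections must be 10 to 1440 minutes.")
    if not 1 <= other_retry_minutes <= 1440:
        problems.append("Retry Time for Other Issues must be 1 to 1440 minutes.")
    if other_retry_minutes >= connection_retry_minutes:
        problems.append(
            "Retry Time for Other Issues must be smaller than "
            "Retry Time for Failed Connections."
        )
    return problems


if __name__ == "__main__":
    # The documented default values: 720 minutes and 10 minutes.
    print(validate_retry_settings(720, 10))
```

The documented default values, 720 and 10, satisfy all three rules.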

Save the task settings and run a precheck.

To view the parameters to be specified when you call the relevant API operation to configure the DTS task, move the pointer over Next: Save Task Settings and Precheck and click Preview OpenAPI parameters.

If you do not need to view or have viewed the parameters, click Next: Save Task Settings and Precheck in the lower part of the page.

Note: Before you can start the data synchronization task, DTS performs a precheck. You can start the data synchronization task only after the task passes the precheck.

If the data synchronization task fails the precheck, click View Details next to each failed item. After you analyze the causes based on the check results, troubleshoot the issues. Then, rerun the precheck.

If an alert is triggered for an item during the precheck:

If an alert item cannot be ignored, click View Details next to the failed item and troubleshoot the issue. Then, run a precheck again.

If an alert item can be ignored, click Confirm Alert Details. In the View Details dialog box, click Ignore. In the message that appears, click OK. Then, click Precheck Again to run a precheck again. If you ignore the alert item, data inconsistency may occur, and your business may be exposed to potential risks.

Purchase an instance.

Wait until the Success Rate becomes 100%. Then, click Next: Purchase Instance.

On the buy page, configure the Billing Method and Instance Class parameters for the data synchronization task. The following table describes the parameters.

Section

Parameter

Description

New Instance Class

Billing Method

Subscription: You pay for a subscription when you create a data synchronization instance. The subscription billing method is more cost-effective than the pay-as-you-go billing method for long-term use.

Pay-as-you-go: A pay-as-you-go instance is billed on an hourly basis. The pay-as-you-go billing method is suitable for short-term use. If you no longer require a pay-as-you-go data synchronization instance, you can release the instance to reduce costs.

Resource Group Settings

The resource group to which the data synchronization instance belongs. Default value: default resource group. For more information, see What is Resource Management?

Instance Class

DTS provides instance classes that vary in synchronization speed. You can select an instance class based on your business requirements. For more information, see Instance classes of data synchronization instances.

Subscription Duration

If you select the subscription billing method, specify the subscription duration and the number of data synchronization instances that you want to create. The subscription duration can be one to nine months, one year, two years, three years, or five years.

Note: This parameter is available only if you select the Subscription billing method.

Read and select Data Transmission Service (Pay-as-you-go) Service Terms.

Click Buy and Start. In the dialog box that appears, click OK.

You can view the progress of the task in the task list.

Example of value assignment

Data structure of the source ApsaraDB for MongoDB instance

    {
        "_id": "62cd344c85c1ea6a2a9f****",
        "person": {
            "name": "neo",
            "age": 26,
            "sex": "male"
        }
    }

Table schema of the destination AnalyticDB for PostgreSQL instance

Column name | Type |

mongo_id | varchar. Note: This is the primary key column. |

person_name | varchar |

person_age | decimal |

Configuration of additional columns

You must specify the field and subfield of each column in the corresponding bson_value() expression based on the hierarchical relationship. Otherwise, data loss may occur or the task may fail. For example, if you specify only the person field of the source column by using the bson_value("person") expression, DTS cannot write the incremental data in the subfields of the person field, such as name, age, and sex, to the destination column.

Column name | Type | Value |

mongo_id | STRING | bson_value("_id") |

person_name | STRING | bson_value("person","name") |

person_age | DECIMAL | bson_value("person","age") |
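To make the hierarchical lookup concrete, the following sketch emulates in Python how the expressions in the preceding table select values from the sample document. It is an illustration only and not the DTS implementation: the Python function `bson_value` is a hypothetical stand-in that takes the document as an extra first argument, and returning None for a missing field is an assumption.

```python
import json


def bson_value(document: dict, *path: str):
    """Follow `path` through nested dictionaries.

    Returns None if any level of the path is missing (an assumption made
    for this sketch, not documented DTS behavior).
    """
    current = document
    for key in path:
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


# The sample document from the source ApsaraDB for MongoDB instance.
source_document = json.loads("""
{
    "_id": "62cd344c85c1ea6a2a9f****",
    "person": {"name": "neo", "age": 26, "sex": "male"}
}
""")

# Destination column name -> field path, mirroring the table above.
column_mapping = {
    "mongo_id": ("_id",),
    "person_name": ("person", "name"),
    "person_age": ("person", "age"),
}

row = {column: bson_value(source_document, *path) for column, path in column_mapping.items()}
print(row)
# prints {'mongo_id': '62cd344c85c1ea6a2a9f****', 'person_name': 'neo', 'person_age': 26}
```

In this sketch, a path that stops at person returns the whole subdocument instead of a scalar value, which shows why each destination column needs the full field and subfield path.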