Application Load Balancer (ALB) Ingress is built on ALB and provides Layer 7 Ingress traffic management. It is compatible with Nginx Ingress, can handle complex routing requirements, supports automatic certificate discovery, and supports the HTTP, HTTPS, and QUIC protocols. Using ALB Ingress in an Alibaba Cloud Container Service for Kubernetes (ACK) cluster provides the high elasticity and large-scale Layer 7 traffic processing that cloud-native applications require.

How it works

ALB Ingress involves the following basic concepts:

ALB Ingress Controller: A component that manages Ingress resources. It uses the cluster's API Server to dynamically detect changes to Ingress and AlbConfig resources, and then updates the ALB instance based on the forwarding rules defined in the Ingresses. Unlike the Nginx Ingress Controller, the ALB Ingress Controller acts only as the control plane of the ALB instance: it manages the ALB instance but does not handle user traffic directly. User traffic is forwarded by the ALB instance.

AlbConfig (cluster-level CRD): AlbConfig is a cluster-scoped custom resource whose Custom Resource Definition (CRD) is created by the ALB Ingress Controller. The parameters in an AlbConfig define the configuration of the ALB instance, and one AlbConfig corresponds to one ALB instance. The ALB instance is the entry point for user traffic and forwards user requests to backend Services. It is fully managed by Application Load Balancer (ALB).

IngressClass: An IngressClass defines the association between an Ingress and an AlbConfig.

Ingress: An Ingress is a resource object in Kubernetes that defines external traffic routing and access rules. The ALB Ingress Controller monitors changes in Ingress resources and updates the ALB instance to forward traffic.

Service: In Kubernetes, pods are temporary resources that change frequently. A Service provides a stable, unified entry point for a group of pods that perform the same function. Other applications or Services can communicate with the backend pods through the virtual IP address and port of the Service, without needing to track changes to individual pods.

The following figure shows the logical relationship between an ALB instance and an ALB Ingress.

Limitations

The names of AlbConfig, Namespace, Ingress, and Service resources cannot start with aliyun.

Earlier versions of the Nginx Ingress controller do not recognize the spec.ingressClassName field. If both Nginx Ingresses and ALB Ingresses are configured in your cluster, an earlier version of the Nginx Ingress controller may reconcile the ALB Ingresses. To avoid this issue, upgrade the Nginx Ingress controller as soon as possible, or use annotations to specify the IngressClasses of ALB Ingresses. For more information, see Upgrade the Nginx Ingress controller component or Advanced ALB Ingress usage.
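As an illustration of the annotation approach, the following hypothetical Ingress (cafe-ingress-annotated) selects the alb IngressClass through the legacy kubernetes.io/ingress.class annotation and omits spec.ingressClassName. An earlier Nginx Ingress controller that reads only the annotation then sees a class other than its own and typically skips the Ingress. Whether the ALB Ingress Controller accepts this annotation, and in exactly this form, is an assumption here; see Advanced ALB Ingress usage for the supported method. Recent Kubernetes versions reject an Ingress that sets both the annotation and spec.ingressClassName, so use one or the other.

```yaml
# Hypothetical Ingress that selects its IngressClass by annotation only.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: cafe-ingress-annotated      # hypothetical name
  annotations:
    # Assumption: the ALB Ingress Controller honors this legacy annotation.
    kubernetes.io/ingress.class: alb
spec:
  # spec.ingressClassName is intentionally omitted; it must not be combined
  # with the kubernetes.io/ingress.class annotation.
  rules:
  - host: demo.domain.ingress.top
    http:
      paths:
      - path: /coffee
        pathType: ImplementationSpecific
        backend:
          service:
            name: coffee-svc
            port:
              number: 80
```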

Scenario example

This tutorial uses two Nginx Deployments, coffee and tea, with two pods each (four pods in total) to demonstrate how to configure ALB Ingress to forward traffic to different Services based on the URL path under the same domain name.

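| Frontend request | Traffic is forwarded to |
| --- | --- |
| demo.domain.ingress.top/coffee | the coffee-svc Service |
| demo.domain.ingress.top/tea | the tea-svc Service |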

Prerequisites

You have created two vSwitches in different zones. The vSwitches must be in the same VPC as the cluster. For more information, see Create and manage vSwitches.

Step 1: Deploy backend services

Console

Log on to the ACS console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of the target cluster. In the left navigation pane, choose Workloads > Deployments.

On the Deployments page, click Create from YAML in the upper-right corner.

On the Create page, perform the following steps.

Sample Template: Select Custom.

Template: Enter the YAML configuration file code. This configuration file deploys two Deployments named coffee and tea, and two Services named coffee-svc and tea-svc.
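A minimal sketch of such a configuration file follows. It assumes the default namespace, the labels app: coffee and app: tea, and the public nginx:latest image, none of which come from the official sample. The stock nginx image serves only files under its document root, so it answers /coffee and /tea with a 404 page (which still shows that ALB forwarded the request); to see a real page in Step 6, substitute an image that responds on any path.

```yaml
# cafe-service.yaml (sketch): two Deployments with two replicas each,
# plus one ClusterIP Service per Deployment that listens on port 80.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coffee
spec:
  replicas: 2
  selector:
    matchLabels:
      app: coffee            # assumed label; must match the template labels
  template:
    metadata:
      labels:
        app: coffee
    spec:
      containers:
      - name: coffee
        image: nginx:latest  # placeholder image (assumption)
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: coffee-svc
spec:
  type: ClusterIP
  selector:
    app: coffee              # selects the coffee pods
  ports:
  - port: 80                 # Service port referenced by the Ingress
    targetPort: 80           # container port
    protocol: TCP
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tea
spec:
  replicas: 2
  selector:
    matchLabels:
      app: tea
  template:
    metadata:
      labels:
        app: tea
    spec:
      containers:
      - name: tea
        image: nginx:latest  # placeholder image (assumption)
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: tea-svc
spec:
  type: ClusterIP
  selector:
    app: tea
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
```

The Service port (80) is the value that the Ingress later references in backend.service.port.number.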

After the configuration is complete, click Create. A Created Successfully message appears.

Verify that the Deployments and Services are created.

In the left navigation pane, choose Workloads > Deployments. Verify that the Deployments named coffee and tea are created.

In the left navigation pane, choose Network > Services. Verify that the Services named coffee-svc and tea-svc are created.

kubectl

Create a file named cafe-service.yaml that contains the same YAML content that you enter in the Template field in the console procedure. The file deploys two Deployments named coffee and tea, and two Services named coffee-svc and tea-svc.

Run the following command to deploy the two Deployments and two Services.

kubectl apply -f cafe-service.yaml

Expected output:

deployment "coffee" created
service "coffee-svc" created
deployment "tea" created
service "tea-svc" created

Run the following commands to check the status of the applications and Services.

Run the following command to check the status of the applications.

kubectl get deployment

Expected output:

NAME     READY   UP-TO-DATE   AVAILABLE   AGE
coffee   2/2     2            2           2m26s
tea      2/2     2            2           2m26s

Run the following command to check the status of the Services.

kubectl get svc

Expected output:

NAME         TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
coffee-svc   ClusterIP   172.16.XX.XX   <none>        80/TCP    9m38s
tea-svc      ClusterIP   172.16.XX.XX   <none>        80/TCP    9m38s

Step 2: Create an AlbConfig

Console

Log on to the ACS console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of the target cluster. In the left navigation pane, choose Workloads > Custom Resources.

On the CRDs tab, click Create from YAML.

Sample Template: Select Custom.

Template: Enter the YAML configuration file code.
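A minimal AlbConfig sketch that is consistent with the parameter table and with the names used elsewhere in this topic (AlbConfig alb-demo, ALB instance alb-test) might look like the following. The vSwitch IDs are hypothetical placeholders that you must replace with the IDs of your two vSwitches. The apiVersion alibabacloud.com/v1 is an assumption based on the albconfig.alibabacloud.com resource name; confirm it against the CRD installed in your cluster.

```yaml
# AlbConfig sketch: one AlbConfig maps to one ALB instance.
apiVersion: alibabacloud.com/v1      # assumption; verify against the installed CRD
kind: AlbConfig
metadata:
  name: alb-demo                     # AlbConfig name, unique within the cluster
spec:
  config:
    name: alb-test                   # name of the ALB instance
    addressType: Internet            # Internet (default) or Intranet
    zoneMappings:                    # at least two vSwitches in different zones
    - vSwitchId: vsw-xxxxxxxxxxxxxxxxx1   # hypothetical vSwitch ID
    - vSwitchId: vsw-xxxxxxxxxxxxxxxxx2   # hypothetical vSwitch ID
  listeners:                         # HTTP listener on port 80
  - port: 80
    protocol: HTTP
```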

The following table describes the adjustable parameters.

| Parameter | Required | Description |
| --- | --- | --- |
| metadata.name | Yes | The name of the AlbConfig. Note: The name of the AlbConfig must be unique within the cluster to avoid naming conflicts. |
| spec.config.name | No | The name of the ALB instance. |
| spec.config.addressType | No | The network type of the ALB instance. Valid values: Internet (default): The ALB instance is Internet-facing and accessible from the Internet. Note: ALB provides Internet-facing services by associating an Elastic IP Address (EIP) with the instance. If you use an Internet-facing ALB instance, you are charged for the EIP instance, bandwidth, and data transfer. For more information, see Pay-as-you-go. Intranet: The ALB instance is internal and accessible only within the VPC. |
| spec.config.zoneMappings | Yes | The IDs of the vSwitches used by the ALB instance. For more information about how to create a vSwitch, see Create and manage vSwitches. Note: The specified vSwitches must be in zones supported by ALB and in the same VPC as the cluster. For more information about the regions and zones supported by ALB, see Regions and zones that support ALB. ALB supports multi-zone deployment. If the current region supports two or more zones, specify at least two vSwitches in different zones to ensure high availability. |
| spec.listeners | No | The listener port and protocol of the ALB instance. This example configures an HTTP listener on port 80. A listener defines how traffic enters the load balancer. If you remove this configuration, you must create a listener before you can use ALB Ingress. |

After the configuration is complete, click Create. A Created Successfully message appears.

Verify that the ALB instance is created.

Log on to the Application Load Balancer (ALB) console.

In the top menu bar, select the region where the instance is located.

On the Instances page, find the ALB instance named alb-test. If the instance is listed, it was created successfully.

kubectl

Create a file named alb-test.yaml that contains the same AlbConfig YAML content that you enter in the Template field in the console procedure. For descriptions of the adjustable parameters, see the parameter table in the console procedure.

Run the following command to create the AlbConfig.

kubectl apply -f alb-test.yaml

Expected output:

albconfig.alibabacloud.com/alb-demo created

The output shows that the AlbConfig was created successfully.

Step 3: Create an IngressClass

Each IngressClass must correspond to one AlbConfig.

Console

Log on to the ACS console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of the target cluster. In the left navigation pane, choose Workloads > Custom Resources.

On the CRDs tab, click Create from YAML.

Sample Template: Select Custom.

Template: Enter the YAML configuration file code.
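A sketch of an IngressClass named alb that points to the AlbConfig alb-demo follows. The parameters fields (apiGroup, kind, name) are standard Kubernetes IngressClass fields. The controller value ingress.k8s.alibabacloud/alb is an assumption taken from Alibaba Cloud's published samples; verify it against your ALB Ingress Controller version.

```yaml
# IngressClass sketch: links Ingresses that use class "alb" to AlbConfig alb-demo.
apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  name: alb                              # referenced by spec.ingressClassName
spec:
  controller: ingress.k8s.alibabacloud/alb   # assumption; verify for your version
  parameters:
    apiGroup: alibabacloud.com
    kind: AlbConfig
    name: alb-demo                       # name of the associated AlbConfig
```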

The following table describes the adjustable parameters.

| Parameter | Required | Description |
| --- | --- | --- |
| metadata.name | Yes | The name of the IngressClass. Note: The name of the IngressClass must be unique within the cluster to avoid naming conflicts. |
| spec.parameters.name | Yes | The name of the associated AlbConfig. |

After the configuration is complete, click Create. The page displays a message indicating that the creation is successful.

Verify that the IngressClass is created.

In the navigation pane on the left, choose .

Click the Resource Object Browser tab.

In the API Group search bar, enter IngressClass and search. Verify that the corresponding IngressClass has been created.

kubectl

Create a file named alb.yaml that contains the same IngressClass YAML content that you enter in the Template field in the console procedure. For descriptions of the adjustable parameters, see the parameter table in the console procedure.

Run the following command to create the IngressClass.

kubectl apply -f alb.yaml

Expected output:

ingressclass.networking.k8s.io/alb created

Step 4: Create an Ingress

Console

Log on to the ACS console. In the left navigation pane, click Clusters.

On the Clusters page, click the name of the target cluster. In the left navigation pane, choose Network > Ingresses.

On the Ingresses page, click Create Ingress, and in the Create Ingress dialog box, configure routing.

Configuration Item

Description

Example Value

Gateway Type

You can select ALB or MSE as the application load balancing gateway type.

ALB Ingress

Name

The custom name of the Ingress.

cafe-ingress

Ingress Class

The IngressClass to associate with the Ingress, such as the IngressClass created in Step 3.

alb

Rules

Click + Add Rule to add multiple routing rules.

Domain Name: The custom domain name.

Path Mapping: Configure the following items.

Path: The URL path for accessing the service. If you do not configure a path, the root path / is used.

Matching Rule: Supports Prefix, Exact, and Default (ImplementationSpecific).

Service Name: The target service, which is a Service in Kubernetes.

Port: The port that the service needs to expose.

An Ingress supports multiple paths under the same domain name. Click + Add Path to add a path.

Domain Name: demo.domain.ingress.top

Path Mapping:

Path: /tea

Match Type: ImplementationSpecific

Service Name: tea-svc

Port: 80

Path Mapping:

Path: /coffee

Match Type: ImplementationSpecific

Service Name: coffee-svc

Port: 80

TLS Configuration

Enable TLS configuration to configure a secure routing service.

Domain Name: A custom domain name.

Secret: Select the corresponding secret as needed.

To create a secret, perform the following steps.

To the right of Secret, click Create.

In the Create Secret dialog box, enter a custom Name, Cert, and Key for the secret, then click OK.

From the Secret drop-down list, select the created secret.

Click + Add TLS Configuration to configure multiple TLS settings.

For more information, see Configure an HTTPS certificate for encrypted communication.

Disable TLS Configuration; TLS is not required for this example.

More Configurations

Phased Release: Turn on the phased release switch. You can set phased release rules based on request headers, cookies, and weights.

Note: You can set only one of the request header, cookie, or weight rules. If multiple rules are set at the same time, they are matched in the order of request header, cookie, and weight.

By request header: Splits traffic based on the request header. After this is set, the alb.ingress.kubernetes.io/canary-by-header and alb.ingress.kubernetes.io/canary-by-header-value annotations are added.

By cookie: Splits traffic based on the cookie. After this is set, the alb.ingress.kubernetes.io/canary-by-cookie annotation is added.

By weight: Sets the percentage of requests sent to a specified service. The value must be an integer from 0 to 100. After this is set, the alb.ingress.kubernetes.io/canary-weight annotation is added.

Protocol: Supports backend services that use the HTTPS and gRPC protocols. After this is set, the alb.ingress.kubernetes.io/backend-protocol annotation is added.

Rewrite Path: The path in a client request is rewritten before the request is sent to the backend service. After this is set, the alb.ingress.kubernetes.io/rewrite-target annotation is added.

Disable phased release, and keep the default protocol and rewrite path; none of these are required for this example.

Custom Forwarding Rules

Enable custom forwarding rules for fine-grained management of inbound traffic.

Note: A forwarding rule can have a maximum of 10 condition entries.

From the Forwarding Conditions drop-down list, select one of the following:

Domain Name: Matches the request domain name. If multiple domain names are set, they are joined by a logical OR. When set, the alb.ingress.kubernetes.io/conditions.host-example annotation is added.

Path: Matches the request path. If multiple paths are set, they are joined by a logical OR. When set, the alb.ingress.kubernetes.io/conditions.path-example annotation is added.

HTTP Header: Matches the request header as a key-value pair. For example, Key Is: headername, Value Is: headervalue1. If multiple header values are set, they are joined by a logical OR. When set, the alb.ingress.kubernetes.io/conditions.http-header-example annotation is added.

From the Forwarding Actions drop-down list, select one of the following:

Forward To

Forward to multiple backend server groups. For Service Name, select the target service. For Port, select the target port number. Then, configure a custom weight value.

Note: If you select Forward To, you do not need to configure Path Mapping in the rule.

Return Fixed Response

Set a fixed response to be returned to the client by ALB. You can set the response status code, body content, and content type. Configure Response Status Code, Response Body Type (Optional), and Response Body (Optional) as needed.

Response Body Type:

text/plain: Plain text content type.

text/css: CSS content.

text/html: HTML content.

application/javascript: JavaScript content.

application/json: JSON content type.

Custom forwarding rules support various forwarding conditions and actions. You can configure forwarding conditions based on the domain name, path, and HTTP header, and forwarding actions such as forwarding to a service or returning a fixed response. For more information, see Customize forwarding rules for ALB Ingress.

Disable custom forwarding rules; they are not required for this example.

Annotations

You can specify a custom annotation name and value, or select or search for an annotation to configure. For more information about Ingress annotations, see Annotations.

No configuration needed; annotations are not required for this example.

Labels

Labels are used to add corresponding tags to an Ingress to indicate its characteristics.

No configuration needed; labels are not required for this example.

After the configuration is complete, click OK at the bottom left of the Create Ingress dialog box.

Verify that the Ingress is created.

In the left navigation pane, choose Network > Ingresses. Verify that the Ingress named cafe-ingress is deployed.

In the Endpoints column of cafe-ingress, view the endpoint information.

kubectl

Create a file named `cafe-ingress.yaml` with the following content to create the Ingress.
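A sketch of cafe-ingress.yaml that reproduces the routing rules from the console example follows: requests to demo.domain.ingress.top/tea go to tea-svc, and requests to /coffee go to coffee-svc, both on Service port 80. It assumes that the IngressClass alb from Step 3 exists and that the Services from Step 1 are in the same namespace as the Ingress, because an Ingress can reference only Services in its own namespace.

```yaml
# cafe-ingress.yaml (sketch): path-based routing under one host.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: cafe-ingress
spec:
  ingressClassName: alb              # IngressClass created in Step 3
  rules:
  - host: demo.domain.ingress.top    # matched against the request Host header
    http:
      paths:
      - path: /tea
        pathType: ImplementationSpecific
        backend:
          service:
            name: tea-svc
            port:
              number: 80             # Service port, not the container port
      - path: /coffee
        pathType: ImplementationSpecific
        backend:
          service:
            name: coffee-svc
            port:
              number: 80
```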

The following table describes the adjustable parameters.

| Parameter | Required | Description |
| --- | --- | --- |
| metadata.name | Yes | The name of the Ingress. Note: The name of the Ingress must be unique within its namespace to avoid naming conflicts. |
| spec.ingressClassName | Yes | The name of the associated IngressClass. |
| spec.rules.host | No | The domain name from the `Host` field of the HTTP request header. Set this to your custom domain name. When you access a custom domain name in a browser, such as http://demo.domain.ingress.top, the browser automatically adds a `Host: demo.domain.ingress.top` header to the HTTP request, which allows the server to identify the target host. In Kubernetes, the `host` field of an Ingress rule is matched against the `Host` header of the request; when a match is found, the rule routes the request to the corresponding backend Service. If this field is not configured, the Ingress rule matches all requests that reach the Ingress Controller. Note: If you configure a custom domain name here, make sure that an ICP filing is obtained for the domain name. Otherwise, the domain name may fail to resolve. For more information, see ICP filing process. |
| spec.rules.http.paths.path | Yes | The URL path used for forwarding. |
| spec.rules.http.paths.pathType | Yes | The URL matching rule. For more information, see Forward requests based on URL paths. |
| spec.rules.http.paths.backend.service.name | Yes | The name of the Service that you created in Step 1. |
| spec.rules.http.paths.backend.service.port.number | Yes | The port number of the Service that you created in Step 1 (80 in this example). This port determines where requests are routed on the backend Service, so make sure it is set correctly. |

Run the following command to configure the domain name and paths that expose the coffee and tea services.

kubectl apply -f cafe-ingress.yaml

Expected output:

ingress.networking.k8s.io/cafe-ingress created

(Optional) Run the following command to retrieve the DNS name of the ALB instance.

kubectl get ingress

Expected output:

NAME           CLASS   HOSTS                     ADDRESS                                               PORTS   AGE
cafe-ingress   alb     demo.domain.ingress.top   alb-m551oo2zn63yov****.cn-hangzhou.alb.aliyuncs.com   80      50s
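The Phased Release option described in the console procedure is stored as annotations on an Ingress. As a hedged sketch, assuming that ALB Ingress follows the common canary pattern of a second Ingress for the same host and path that is marked with alb.ingress.kubernetes.io/canary: "true", a weight-based canary for /coffee could look like the following. The Ingress name cafe-ingress-canary and the Service coffee-canary-svc are hypothetical, and the canary annotation itself is an assumption; of these annotations, only canary-weight (an integer from 0 to 100) is named in this topic.

```yaml
# Hypothetical canary Ingress: about 20% of /coffee requests on the same host
# go to coffee-canary-svc; cafe-ingress keeps serving the remaining traffic.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: cafe-ingress-canary                       # hypothetical name
  annotations:
    alb.ingress.kubernetes.io/canary: "true"      # assumption: marks the canary Ingress
    alb.ingress.kubernetes.io/canary-weight: "20" # percentage, integer 0 to 100
spec:
  ingressClassName: alb
  rules:
  - host: demo.domain.ingress.top                 # same host as cafe-ingress
    http:
      paths:
      - path: /coffee                             # same path as cafe-ingress
        pathType: ImplementationSpecific
        backend:
          service:
            name: coffee-canary-svc               # hypothetical canary Service
            port:
              number: 80
```

Annotation values are quoted because Kubernetes annotations must be strings.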

(Optional) Step 5: Configure domain name resolution

If you set the spec.rules.host field to a custom domain name when creating the Ingress, add a CNAME record to resolve the domain name to the ALB DNS name. Then access the service through your custom domain name.

Log on to the Container Service for Kubernetes (ACK) console.

Click the name of the cluster to open the cluster management page.

In the navigation pane on the left, choose Network > Ingresses.

In the Endpoints column of cafe-ingress, copy the corresponding DNS name.

Perform the following steps to add a CNAME record.

Log on to the Alibaba Cloud DNS console.

On the Domain Names page, click Add Domain Name.

In the Add Domain Name dialog box, enter the host domain name, and then click OK.

Important: The host domain name must have passed TXT record verification.

In the Actions column of the target domain name, click Configure.

On the Configure page, click Add Record.

In the Add Record panel, configure the following information to complete the CNAME configuration, then click OK.

| Configuration | Description |
| --- | --- |
| Type | From the drop-down list, select CNAME. |
| Host | The prefix of the domain name, such as www. |
| Resolution Request Source | Select Default. |
| Value | Enter the CNAME address of the domain name, which is the DNS name that you copied in the previous step. |
| TTL | Time to Live (TTL) is the period for which DNS servers cache the record. This topic uses the default value. |

Step 6: Test traffic forwarding

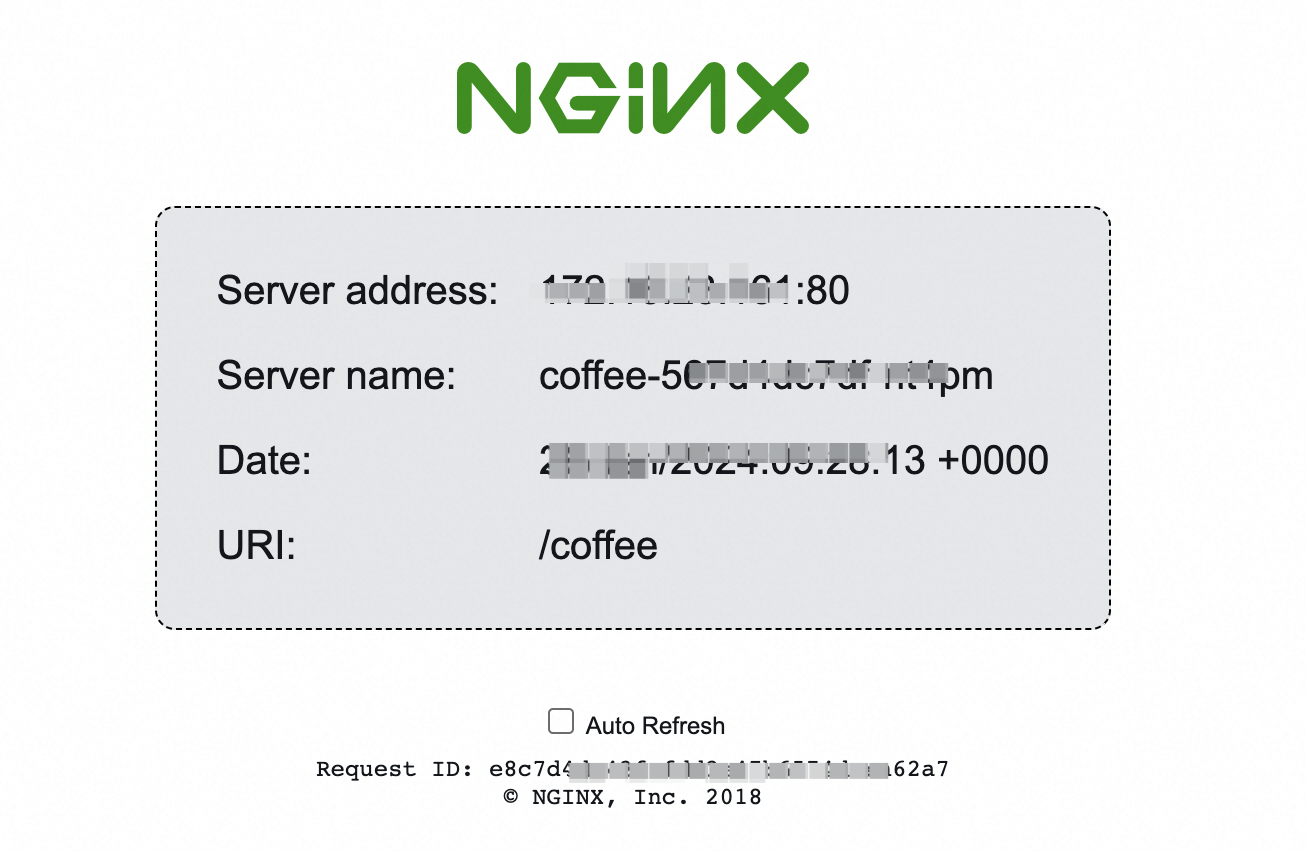

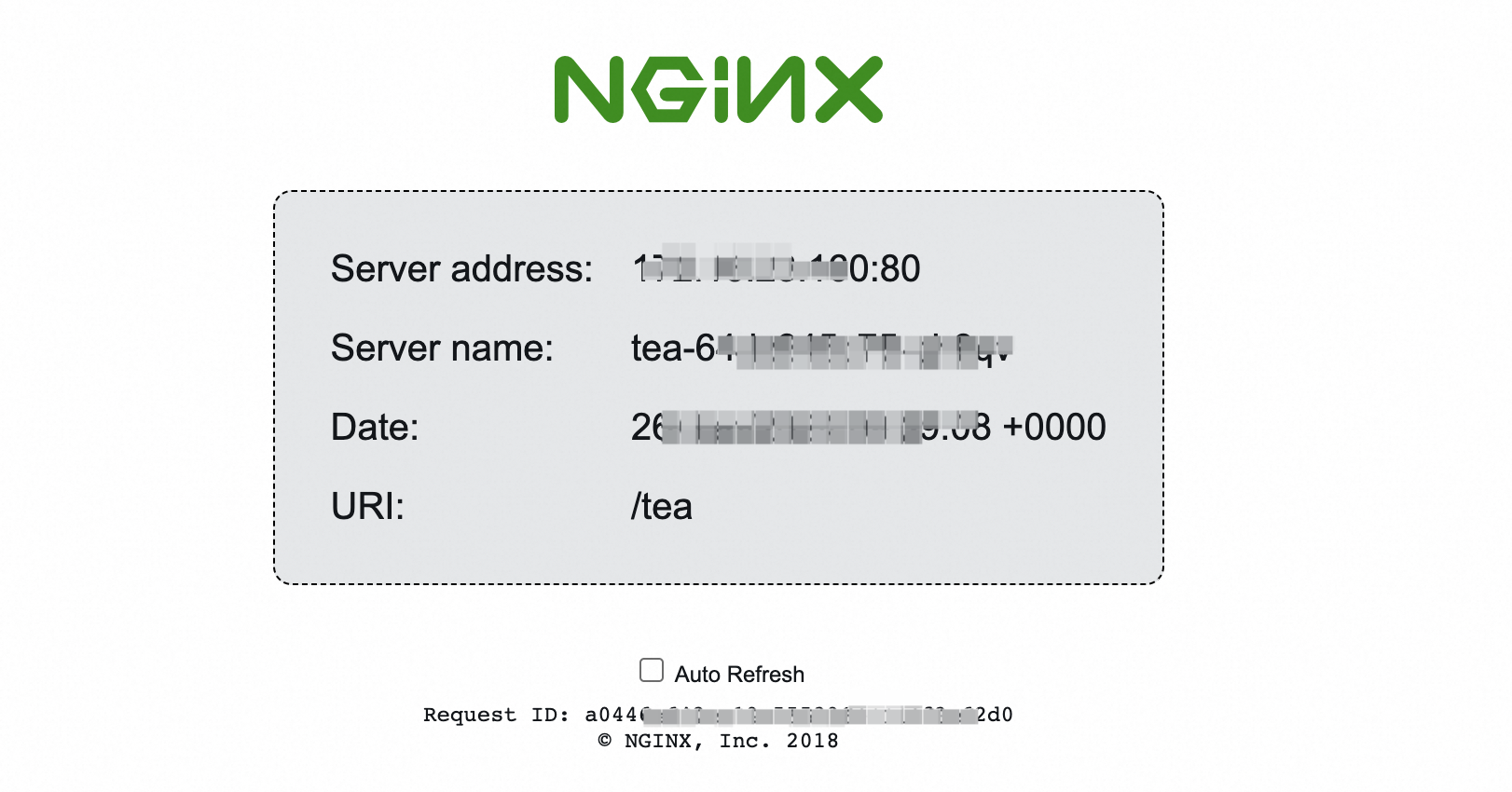

In a browser, enter the test domain name and URL path to test whether traffic is forwarded correctly.

If you configured a custom domain name, the test domain name is your custom domain name.

If you did not configure a custom domain name, the test domain name is the endpoint DNS name of cafe-ingress.

This example uses demo.domain.ingress.top as the test domain name.

In a browser, enter demo.domain.ingress.top/coffee. The response from the backend Service coffee-svc is returned.

In a browser, enter demo.domain.ingress.top/tea. The response from the backend Service tea-svc is returned.

References

For details about forwarding requests from different domain names or URL paths to different backend server groups, configuring health checks, redirecting HTTP access to HTTPS, phased releases, and configuring custom listener ports, see Advanced configurations for ALB Ingress.

To customize forwarding rules for ALB Ingress, such as configuring forwarding conditions and actions, see Customize forwarding rules for ALB Ingress.

To configure an HTTPS listener to forward requests that use the HTTPS protocol, see Configure an HTTPS certificate for encrypted communication.

If you encounter issues when you use ALB Ingress, see Troubleshoot ALB Ingress issues and ALB Ingress FAQ.