The Container Storage Interface (CSI) components handle dynamic volume lifecycle operations in ACK clusters. This guide covers component roles, upgrade procedures, and troubleshooting.

CSI component overview

By default, ACK installs two CSI components when you create a cluster:

| Component | Role | Deployment type |
|---|---|---|
| csi-plugin | Mounts, unmounts, and formats volumes | DaemonSet |
| csi-provisioner | Dynamically creates and scales out volumes, and creates snapshots. Supports Elastic Block Storage (EBS), NAS, and OSS volumes by default. | Deployment |

New clusters install the managed version of csi-provisioner by default. Alibaba Cloud handles operations and maintenance (O&M) for managed components, so the related pods are not visible in the cluster.
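Because the managed csi-provisioner does not appear in the cluster, it can help to check which CSI workloads are actually visible. The following is a minimal sketch, not an official tool: it assumes you have saved the output of `kubectl get daemonset,deployment -n kube-system -o json` and parsed it as JSON. The `kube-system` namespace, the function name `visible_csi_components`, and the sample data are assumptions made for illustration.

```python
import json

# Expected workload kind for each CSI component, taken from the table above.
CSI_COMPONENTS = {"csi-plugin": "DaemonSet", "csi-provisioner": "Deployment"}


def visible_csi_components(workload_list: dict) -> dict:
    """Return {component name: True/False} for workloads found in the list.

    `workload_list` is the parsed JSON of a Kubernetes List object
    (hypothetical input; see the lead-in for the assumed kubectl command).
    """
    found = {name: False for name in CSI_COMPONENTS}
    for item in workload_list.get("items", []):
        name = item.get("metadata", {}).get("name")
        # Count a component only if both its name and its kind match.
        if name in CSI_COMPONENTS and CSI_COMPONENTS[name] == item.get("kind"):
            found[name] = True
    return found


# Illustrative sample: only the csi-plugin DaemonSet is visible, which is
# what you might see when csi-provisioner runs as a managed component.
sample = json.loads("""
{
  "kind": "List",
  "items": [
    {"kind": "DaemonSet",
     "metadata": {"name": "csi-plugin", "namespace": "kube-system"}}
  ]
}
""")

result = visible_csi_components(sample)
for component, present in result.items():
    print(f"{component}: {'visible' if present else 'not visible in cluster'}")
```

A csi-provisioner entry reported as not visible is consistent with the managed version, but this sketch cannot distinguish a managed component from one that is simply not installed; confirm the state on the Add-ons page of the console.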

Upgrade csi-plugin and csi-provisioner

Check for available updates and upgrade the CSI components from the ACK console.

If the csi-compatible-controller component is in use for FlexVolume-to-CSI migration and the migration is not complete, automatic upgrades are blocked. Complete the migration first, or manually upgrade the CSI components during migration. For details, see Upgrade components.

1. Log on to the ACK console. In the left navigation pane, click Clusters.

2. On the Clusters page, find the target cluster and click its name. In the left navigation pane, click Add-ons.

3. Click the Storage tab. In the csi-plugin and csi-provisioner cards, check for available upgrades and apply them.

Note: If the upgrade fails in the console, see Component upgrade failures for troubleshooting steps.
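After an upgrade, you may want to confirm that the non-managed workloads have finished rolling out. The sketch below is a simplified heuristic, not a replacement for `kubectl rollout status`: it reads the standard Kubernetes status fields of a DaemonSet or Deployment object that you have already fetched as JSON (for example with `kubectl get daemonset csi-plugin -n kube-system -o json`; the namespace is an assumption). The function name `rollout_complete` and the sample objects are hypothetical.

```python
def rollout_complete(workload: dict) -> bool:
    """Return True if every desired pod is updated and available.

    Simplified check: it ignores observedGeneration and rollout
    conditions, so treat a True result as a hint, not a guarantee.
    """
    kind = workload.get("kind")
    status = workload.get("status", {})
    if kind == "DaemonSet":
        desired = status.get("desiredNumberScheduled", 0)
        updated = status.get("updatedNumberScheduled", 0)
        available = status.get("numberAvailable", 0)
    elif kind == "Deployment":
        # spec.replicas defaults to 1 when it is omitted.
        desired = workload.get("spec", {}).get("replicas", 1)
        updated = status.get("updatedReplicas", 0)
        available = status.get("availableReplicas", 0)
    else:
        raise ValueError(f"unsupported workload kind: {kind!r}")
    return updated == desired and available == desired


# Illustrative objects only; real values come from your cluster.
plugin_mid_upgrade = {
    "kind": "DaemonSet",
    "status": {"desiredNumberScheduled": 3,
               "updatedNumberScheduled": 2,
               "numberAvailable": 3},
}
provisioner_done = {
    "kind": "Deployment",
    "spec": {"replicas": 2},
    "status": {"updatedReplicas": 2, "availableReplicas": 2},
}

print("csi-plugin rolled out:", rollout_complete(plugin_mid_upgrade))
print("csi-provisioner rolled out:", rollout_complete(provisioner_done))
```

This check applies only to components whose pods run in the cluster. A managed csi-provisioner has no visible Deployment to inspect, so rely on the console for its upgrade status.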

Troubleshooting

Component issues

csi-plugin fails to start with exec format error

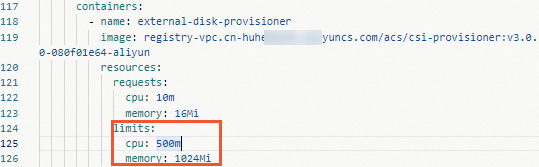

Out-of-memory (OOM) errors from csi-provisioner

High network traffic on the csi-plugin pod

csi-provisioner logs show failed to renew lease error

Component upgrade failures

csi-plugin pre-check fails

csi-plugin pre-check passes but upgrade fails

Console shows csi-plugin but not csi-provisioner

csi-provisioner pre-check fails

csi-provisioner pre-check passes but upgrade fails

csi-provisioner upgrade fails due to node count requirements

csi-provisioner upgrade fails due to StorageClass property changes

References

For more information about CSI, see alibaba-cloud-csi-driver.

For more information about component installation and uninstallation, see Components.
