By Lie Lu

The content of this article includes but is not limited to TCP four-way handshake (close at the same time), seq/ack rules of TCP packets, TCP state machine, kernel TCP code, and TCP send window.

Linux kernel version 5.10.112

In brief, during the four-way handshake, the connection closure experienced a timeout due to the disorder of fin and ack packets.

Details of the process:

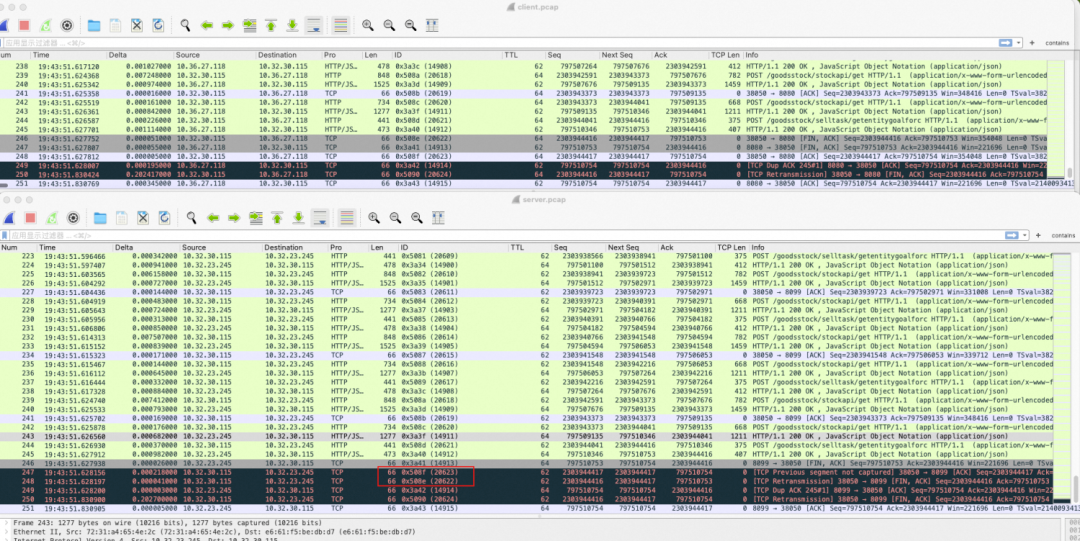

The upper part of the figure is the client, and the lower part is the server.

Focus on the four packets whose IDs are 14913, 14914, 20622, and 20623. For convenience of analysis, seq and ack are numbered with the last four numbers:

The problem occurred on the server (in the red box in the figure). After the server sent 14913:

(ack-20623 and fin-20622 are emphasized again here, which will be mentioned frequently later).

First of all, this phenomenon was unreasonable based on our intuition. TCP should have appropriate mechanisms to ensure the recovery from disorder. Both 20622 and 20623 had arrived at the server here. Although there was a disorder, it should not prevent the server from receiving both. This is the main concern.

After conducting preliminary analysis, we speculated that the most likely cause was that the server kernel ignored the 20622 packet (reason unknown). Since it was a kernel behavior, our initial attempt was to reproduce the issue in a local environment. However, it did not succeed as expected.

To simulate the preceding disorder scenario, we used two ECS instances to forge TCP packets on the client and communicate with the normal socket at the server.

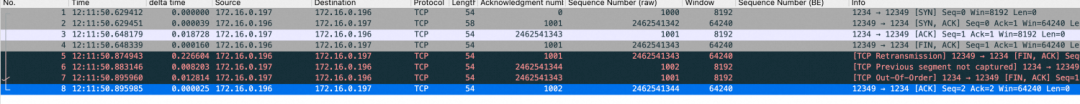

The packet capture result at the server is as follows:

Pay attention to No.5, No.6, No.7, and No.8 packets:

After that, in order to keep the kernel version consistent, the same program was transferred to the local virtual machine to run, and the same result was obtained. In other words, the reproduction failed.

Tool: python + scapy

Here, scapy was used to forge the client and send out-of-order ack and fin to observe the ack packet returned by the server. Because the client did not really follow the TCP protocol, timeout retransmission cannot be observed no matter whether the reproduction was successful or not.

(1) Normal socket listening at the server:

import socket

server_ip = "0.0.0.0"

server_port = 12346

server_socket = socket.socket(socket.AF_INET, socket.SOCK_STREAM)

server_socket.bind((server_ip, server_port))

server_socket.listen(5)

connection, client_address = server_socket.accept()

connection.close() #Send fin

server_socket.close()

(2) The client simulates disorder:

from scapy.all import *

import time

import sys

target_ip = "omitted"

target_port = 12346

src_port = 1234

# Forge data packets to establish a TCP connection

ip = IP(dst=target_ip)

syn = TCP(sport=src_port, dport=target_port, flags="S", seq=1000)

syn_ack = sr1(ip / syn)

if syn_ack and TCP in syn_ack and syn_ack[TCP].flags == "SA":
    print("Received SYN-ACK")
    # Complete the three-way handshake
    ack = TCP(sport=src_port, dport=target_port,
              flags="A", seq=syn_ack.ack, ack=syn_ack.seq+1)
    send(ip / ack)
    print("Sent ACK")
else:
    print("Failed to establish TCP connection")

def handle_packet(packet):
    if TCP in packet and packet[TCP].flags & 0x01:
        # fin received from the server: send ack first, then fin (out of order)
        print("Received FIN packet")
        ack = TCP(sport=src_port, dport=target_port,
                  flags="A", seq=packet.ack+1, ack=packet.seq+1)
        send(ip / ack)
        time.sleep(0.1)
        fin = TCP(sport=src_port, dport=target_port,
                  flags="FA", seq=packet.ack, ack=packet.seq)
        send(ip / fin)
        sys.exit(0)

sniff(prn=handle_packet)

The issue occurs at the server, where the disorder disrupts the normal closure of the connection. As a result, the connection is closed only after the client retransmits the fin packet due to a timeout.

The extended connection closure time at the server (an additional 200 ms) significantly affects latency-sensitive scenarios.

After approximately 6 weekends of intermittent code-testing loops, we have finally identified the problem! The following section provides a brief overview of the troubleshooting process, including some unsuccessful attempts.

Let's go back to the problem above. Its cause was still unclear, and the local reproduction behaved exactly as in the ideal case.

To simplify:

It can be determined that the problem is likely to occur in the server's processing of ack-20623 and fin-20622.

(ack-20623 and fin-20622 will be used below to refer to out-of-order ack and fin packets)

The key question is: How does the server handle the out-of-order ack-20623 and fin-20622 packets after sending the fin packet (entering the FIN_WAIT_1 state)? This involves the state transition of TCP. Therefore, the initial step is to determine the state transition process and subsequently analyze the specific code snippet based on the state transition.

Since the problem occurred during the handshake process, it is natural to observe state transitions to determine packet reception and processing situations.

Based on the reproduction process, we utilized ss and ebpf to monitor the state changes of TCP. We confirmed that after the server received ack-20623, it transitioned from the FIN_WAIT_1 state to the FIN_WAIT_2 state, indicating that ack-20623 was processed correctly. Therefore, the issue likely arises in the processing of fin-20622, which aligns with our initial assumption.
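The state monitoring described above can be approximated without ss by reading /proc/net/tcp, which exposes the same kernel state. The following is only a minimal sketch, not the tooling we actually used: the helper names states_for_port and poll_once are hypothetical, and it only covers IPv4 sockets.

```python
# State codes used in the "st" column of /proc/net/tcp (hexadecimal).
TCP_STATES = {
    0x01: "ESTABLISHED", 0x02: "SYN_SENT", 0x03: "SYN_RECV",
    0x04: "FIN_WAIT1", 0x05: "FIN_WAIT2", 0x06: "TIME_WAIT",
    0x07: "CLOSE", 0x08: "CLOSE_WAIT", 0x09: "LAST_ACK",
    0x0A: "LISTEN", 0x0B: "CLOSING",
}


def states_for_port(proc_net_tcp_text, local_port):
    """Return the state names of all sockets bound to local_port."""
    states = []
    for line in proc_net_tcp_text.splitlines()[1:]:  # skip the header line
        fields = line.split()
        if len(fields) < 4:
            continue
        local_address, state_hex = fields[1], fields[3]
        # local_address looks like "0100007F:303A" (hex IP : hex port)
        port = int(local_address.rsplit(":", 1)[1], 16)
        if port == local_port:
            states.append(TCP_STATES.get(int(state_hex, 16), "UNKNOWN"))
    return states


def poll_once(local_port, path="/proc/net/tcp"):
    """Read the live table once (Linux only) and report states for a port."""
    with open(path) as f:
        return states_for_port(f.read(), local_port)
```

Calling poll_once(12346) in a tight loop while the reproduction script runs gives a rough view of the FIN_WAIT1 and FIN_WAIT2 transitions, although short-lived states can be missed between polls.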

There is another peculiar point: according to the correct handshake process, the server should enter the TIMEWAIT state after it receives fin in the FIN_WAIT_2 state. We observed this state transition in ss, but did not capture it when using ebpf monitoring. We did not pay attention to this at the time and learned the reason later: in the ebpf implementation, only state transitions caused by tcp_set_state() were recorded. Although the server had entered the TIMEWAIT state, it had not gone through tcp_set_state(), so the transition could not be seen in ebpf. For how the TIMEWAIT state is entered, please refer to the Extra section at the end.

Attached: ebpf monitoring results

(When the FIN_WAIT1 state was transitioned to the FIN_WAIT2 state, the snd_una was updated to make sure the ack-20623 was handled correctly)

<idle>-0 [000] d.s. 42261.233642: PASSIVE_ESTABLISHED: start monitor tcp state change

<idle>-0 [000] d.s. 42261.233651: port:12346,snd_nxt:154527568,snd_una:154527568

<idle>-0 [000] d.s. 42261.233652: rcv_nxt:1001,recved:0,acked:0

<...>-9451 [007] d... 42261.233808: changing from ESTABLISHED to FIN_WAIT1

<...>-9451 [007] d... 42261.233815: port:12346,snd_nxt:154527568,snd_una:154527568

<...>-9451 [007] d... 42261.233816: rcv_nxt:1001,recved:0,acked:0

<idle>-0 [000] dNs. 42261.464578: changing from FIN_WAIT1 to FIN_WAIT2

<idle>-0 [000] dNs. 42261.464588: port:12346,snd_nxt:154527569,snd_una:154527569

<idle>-0 [000] dNs. 42261.464589: rcv_nxt:1001,recved:0,acked:1

At this point, we had to take a look at the kernel source code. Based on the above analysis, the problem was likely to occur in the tcp_rcv_state_process() function. However, after extracting the fragment that handles TCP_FIN_WAIT2, we found nothing suspicious in it.

(tcp_rcv_state_process is the function that handles state transitions when receiving packets, located in net/ipv4/tcp_input.c)

case TCP_FIN_WAIT1:

case TCP_FIN_WAIT2:

/* RFC 793 says to queue data in these states,

* RFC 1122 says we MUST send a reset.

* BSD 4.4 also does reset.

*/

if (sk->sk_shutdown & RCV_SHUTDOWN) {

if (TCP_SKB_CB(skb)->end_seq != TCP_SKB_CB(skb)->seq &&

after(TCP_SKB_CB(skb)->end_seq - th->fin, tp->rcv_nxt)) { //After analysis, the condition is not met.

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPABORTONDATA);

tcp_reset(sk);

return 1;

}

}

fallthrough;

case TCP_ESTABLISHED:

tcp_data_queue(sk, skb); //Enter this function, and the disorder will be corrected. This function is also responsible for processing fin.

queued = 1;

break;

If execution reaches this point, we can be almost certain that fin will be processed normally, so we used this position as the end point of our check. In other words, the out-of-order fin-20622 had not arrived here. Starting from this position and reading the code that runs before it, we found a very suspicious spot, also in tcp_rcv_state_process().

//Check whether the ack value is acceptable

acceptable = tcp_ack(sk, skb, FLAG_SLOWPATH |

FLAG_UPDATE_TS_RECENT |

FLAG_NO_CHALLENGE_ACK) > 0;

if (!acceptable) { //If it is not acceptable

if (sk->sk_state == TCP_SYN_RECV) //This branch will not be entered during handshake

return 1; /* send one RST */

tcp_send_challenge_ack(sk, skb); //Return an ack and discard it

goto discard;

}

If the ack check for fin-20622 fails here, an ack (that is, packet 14914, the challenge ack in this code) is sent and the packet is then discarded without entering the fin processing path. This is consistent with the problem scenario. We continued to analyze the tcp_ack() function and found a point where the ack may be judged not acceptable:

/*This segment determines the relationship between the received ack value and the local send window

snd_una means sending un-acknowledge here, that is, the position that has been sent but not acknowledged

*/

if (before(ack, prior_snd_una)) { //If the received ack value is already acknowledged by the previous packet

/* RFC 5961 5.2 [Blind Data Injection Attack].[Mitigation] */

···

goto old_ack;

}

···

old_ack:

/* If data was SACKed, tag it and see if we should send more data.

* If data was DSACKed, see if we can undo a cwnd reduction.

*/

···

return 0;

To sum up: there is a possible processing path for fin-20622 that is consistent with the behavior in the problem scenario. From the server perspective:

(1) When ack-20623 was processed, snd_una was updated to the ack value of the packet, that is, 754.

(2) When fin-20622 arrived afterwards, its ack value was older than the send window at that time, so it was determined to be old_ack and tcp_ack() returned 0, which means not acceptable.

(3) Because the ack was not acceptable, the server returned a challenge ack in tcp_rcv_state_process and did not enter the process of fin packet processing.

This was equivalent to the server not receiving the fin signal, which was consistent with the problem scenario.
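The suspected path can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the kernel logic itself: before() and after() mirror the kernel's wrapping 32-bit sequence comparison, ack_check() keeps only the two window checks relevant here, and the older ack value 753 used in the usage note is a hypothetical number chosen for illustration (only 754 comes from the capture).

```python
MASK32 = 0xFFFFFFFF


def before(seq1, seq2):
    """True if seq1 is earlier than seq2 in wrapping 32-bit sequence space."""
    return ((seq1 - seq2) & MASK32) >= 0x80000000


def after(seq1, seq2):
    """True if seq1 is later than seq2 in wrapping 32-bit sequence space."""
    return before(seq2, seq1)


def ack_check(ack, snd_una, snd_nxt):
    """Simplified stand-in for the window checks at the top of tcp_ack()."""
    if before(ack, snd_una):
        return 0    # old_ack: already acknowledged by an earlier packet
    if after(ack, snd_nxt):
        return -1   # acknowledges data that was never sent
    return 1        # inside the send window


def handle_segment(ack, snd_una, snd_nxt):
    """Mirror the 'acceptable' test in tcp_rcv_state_process()."""
    acceptable = ack_check(ack, snd_una, snd_nxt) > 0
    return "process" if acceptable else "challenge_ack_and_discard"
```

With snd_una already advanced to 754 by the out-of-order ack, handle_segment(753, 754, 754) yields "challenge_ack_and_discard", whereas the same fin arriving first, handle_segment(753, 753, 754), would be processed.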

After finding this suspicious path, we must find a way to verify it. Because the specific code snippets were located and the actual code was quite complex, it was difficult to determine the true running path through code analysis alone.

Therefore, we took a heavy-handed approach: we modified the kernel directly to print the TCP state information at the points mentioned above, mainly the state transitions and the send window.

The specific process will not be repeated, and we discovered something new:

(Note: We still used the normal reproduction script)

(1) After ack-20623 was received, snd_una was indeed updated, which conformed to the above assumption and provided the condition for fin packet discarding.

(2) The fin-20622 packet did not enter the tcp_rcv_state_process() function at all, but was directly processed by the outer layer, the tcp_v4_rcv() function, according to the TIMEWAIT process. The connection was finally closed.

Obviously, the second point is likely to be the key to the failure to reproduce. It raised two questions:

(1) If fin-20622 could enter the tcp_rcv_state_process() function, it should be able to reproduce the problem. However, the code path may be different due to some configuration differences between online scenarios and reproduction scenarios.

(2) After receiving the ack, the server should be in the FIN_WAIT_2 state. The same is true for tool monitoring results, but why is TIMEWAIT here?

With these questions, let's go back to the code and continue our analysis. Between the ack check and the fin processing, we found the most suspicious location:

case TCP_FIN_WAIT1: {

int tmo;

···

if (tp->snd_una != tp->write_seq) // An exception. There is still data to be sent

break; // Suspicious

tcp_set_state(sk, TCP_FIN_WAIT2); // Move to FIN_WAIT2 and close send direction

sk->sk_shutdown |= SEND_SHUTDOWN;

sk_dst_confirm(sk);

if (!sock_flag(sk, SOCK_DEAD)) { // Delay closure

/* Wake up lingering close() */

sk->sk_state_change(sk);

break; //Suspicious

}

···

// The timewait-related logic may be entered

tmo = tcp_fin_time(sk); // Calculate fin timeout

if (tmo > sock_net(sk)->ipv4.sysctl_tcp_tw_timeout) {

// If the timeout period is long, start the keepalive timer

inet_csk_reset_keepalive_timer(sk,

tmo - sock_net(sk)->ipv4.sysctl_tcp_tw_timeout);

} else if (th->fin || sock_owned_by_user(sk)) {

/* Bad case. We could lose such FIN otherwise.

* It is not a big problem, but it looks confusing

* and not so rare event. We still can lose it now,

* if it spins in bh_lock_sock(), but it is really

* marginal case.

*/

inet_csk_reset_keepalive_timer(sk, tmo);

} else { // Otherwise, directly enter timewait. According to the test, the ack packet enters this branch when reproduction fails

tcp_time_wait(sk, TCP_FIN_WAIT2, tmo);

goto discard;

}

break;

}

This fragment corresponds to the processing of ack-20623, and we did find a connection with TIMEWAIT here, so we suspected the first two breaks. If one of them were triggered early, would the socket skip TIMEWAIT and let the problem be reproduced?

We tested this assumption directly. By modifying the code, we found that triggering either of the two breaks reproduced the problem scenario, with the connection not being closed normally. Comparing the two break conditions, SOCK_DEAD became the most likely suspect.
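The branches of the TCP_FIN_WAIT1 case shown above can be summarized as a small decision function. This is a sketch under stated assumptions: the function name and the returned labels are hypothetical, the parts elided with ··· in the excerpt are not modeled, and the timeout values in the usage note are illustrative rather than measured.

```python
def fin_wait1_after_ack(snd_una, write_seq, sock_dead, tmo, tw_timeout,
                        has_fin=False, owned_by_user=False):
    """Outcome of processing an acceptable ack while in FIN_WAIT1."""
    if snd_una != write_seq:
        # First suspicious break: our fin has not been acknowledged yet.
        return "stay_fin_wait1"
    # From here on the socket has moved to FIN_WAIT2.
    if not sock_dead:
        # Second suspicious break: the socket is still owned by the
        # application, so it stays a full socket in FIN_WAIT2.
        return "fin_wait2_socket_kept"
    if tmo > tw_timeout:
        return "fin_wait2_keepalive_timer"
    if has_fin or owned_by_user:
        return "fin_wait2_keepalive_timer"
    # Orphaned socket with a short timeout: replaced by a timewait sock.
    return "time_wait"
```

For example, with both timeouts set to 60, an orphaned socket (sock_dead=True) ends in "time_wait", while a socket that is still open (sock_dead=False) ends in "fin_wait2_socket_kept" and will later see the out-of-order fin in tcp_rcv_state_process().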

From the literal meaning, this flag should be related to the shutdown process of TCP. We looked up in the kernel code and found two related functions:

/*

* Shutdown the sending side of a connection. Much like close except

* that we don't receive shut down or sock_set_flag(sk, SOCK_DEAD).

*/

void tcp_shutdown(struct sock *sk, int how)

{

/* We need to grab some memory, and put together a FIN,

* and then put it into the queue to be sent.

* Tim MacKenzie(tym@dibbler.cs.monash.edu.au) 4 Dec '92.

*/

if (!(how & SEND_SHUTDOWN))

return;

/* If we've already sent a FIN, or it's a closed state, skip this. */

if ((1 << sk->sk_state) &

(TCPF_ESTABLISHED | TCPF_SYN_SENT |

TCPF_SYN_RECV | TCPF_CLOSE_WAIT)) {

/* Clear out any half completed packets. FIN if needed. */

if (tcp_close_state(sk))

tcp_send_fin(sk);

}

}

EXPORT_SYMBOL(tcp_shutdown);

As can be seen from the comment, this function does part of what close does, but it does not call sock_set_flag(sk, SOCK_DEAD). Let's take another look at tcp_close():

void tcp_close(struct sock *sk, long timeout)

{

struct sk_buff *skb;

int data_was_unread = 0;

int state;

···

if (unlikely(tcp_sk(sk)->repair)) {

sk->sk_prot->disconnect(sk, 0);

} else if (data_was_unread) {

/* Unread data was tossed, zap the connection. */

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPABORTONCLOSE);

tcp_set_state(sk, TCP_CLOSE);

tcp_send_active_reset(sk, sk->sk_allocation);

} else if (sock_flag(sk, SOCK_LINGER) && !sk->sk_lingertime) {

/* Check zero linger _after_ checking for unread data. */

sk->sk_prot->disconnect(sk, 0);

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPABORTONDATA);

} else if (tcp_close_state(sk)) {

/* We FIN if the application ate all the data before

* zapping the connection.

*/

tcp_send_fin(sk); // Send the fin packet

}

sk_stream_wait_close(sk, timeout);

adjudge_to_death:

state = sk->sk_state;

sock_hold(sk);

sock_orphan(sk); // The SOCK_DEAD flag is set here

···

}

EXPORT_SYMBOL(tcp_close);

Both tcp_shutdown and tcp_close are standard interfaces of the TCP protocol and can be used to close the connection:

struct proto tcp_prot = {

.name = "TCP",

.owner = THIS_MODULE,

.close = tcp_close, //close is here

.pre_connect = tcp_v4_pre_connect,

.connect = tcp_v4_connect,

.disconnect = tcp_disconnect,

.accept = inet_csk_accept,

.ioctl = tcp_ioctl,

.init = tcp_v4_init_sock,

.destroy = tcp_v4_destroy_sock,

.shutdown = tcp_shutdown, //shutdown is here

.setsockopt = tcp_setsockopt,

.getsockopt = tcp_getsockopt,

.keepalive = tcp_set_keepalive,

.recvmsg = tcp_recvmsg,

.sendmsg = tcp_sendmsg,

···

};

EXPORT_SYMBOL(tcp_prot);

In summary, an important difference between shutdown and close is that shutdown does not set SOCK_DEAD.

We replaced the close() of the reproduction script with the shutdown() to retest, and finally successfully reproduced the result of fin being discarded!
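A server variant along these lines might look as follows. It is a minimal sketch, not the exact script we ran: the function name serve_once_with_shutdown, the choice of SHUT_WR, and the hold_seconds delay before the final close() are assumptions made for illustration.

```python
import socket
import time


def serve_once_with_shutdown(server_socket, hold_seconds=1.0):
    """Accept one connection and send fin with shutdown() instead of close()."""
    connection, _client_address = server_socket.accept()
    try:
        # Sends fin but keeps the file descriptor open, so the kernel
        # socket is not orphaned and SOCK_DEAD is not set.
        connection.shutdown(socket.SHUT_WR)
        # Keep the socket alive while the client's ack and fin arrive.
        time.sleep(hold_seconds)
    finally:
        connection.close()
```

To use it in place of the original server script, create the listening socket as before (bind to port 12346, listen), pass it to serve_once_with_shutdown(), and close the listening socket afterwards.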

(And by printing the log, it was determined that the reason for discarding was the old_ack mentioned earlier, which finally verified our hypothesis.)

Next, we only needed to go back to the online scenario and check whether shutdown() was called to close the connection. As confirmed by the online personnel, the server did use shutdown() to close the connection (through nginx lingering_close).

At this point, the truth is finally out!

Finally, let's answer the initial two questions as a summary:

Is this an acceptable behavior of the kernel?

(1) The kernel records the acknowledged position of the send window in snd_una, and by snd_una, determines whether the ack packet needs to be processed.

(2) When the out-of-order ack arrives first, snd_una is updated. During the ack check process, the fin that arrives later is considered, in comparison with snd_una, a packet that has already been acknowledged and does not need to be processed again. In consequence, it is directly discarded, and challenge_ack is returned. This leads to the problem scenario.

Why did the local reproduction fail?

In the local reproduction, the close() interface is used when the TCP connection is closed. In contrast, shutdown() is used in the online environment.

In fact, there is still a question left: when the connection is closed with close(), why is the state transition triggered by the fin packet, from FIN_WAIT_2 to TIMEWAIT, not observed (tcp_rcv_state_process() is not entered)?

The explanation starts from the moment the ack is received in the FIN_WAIT_1 state. As mentioned in the code analysis above, if neither of the two suspicious breaks is triggered, the kernel enters tcp_time_wait while processing the ack:

case TCP_FIN_WAIT1: {

int tmo;

···

else {

tcp_time_wait(sk, TCP_FIN_WAIT2, tmo);

goto discard;

}

break;

}

The main logic of tcp_time_wait() is as follows:

/*

* Move a socket to time-wait or dead fin-wait-2 state.

*/

void tcp_time_wait(struct sock *sk, int state, int timeo)

{

const struct inet_connection_sock *icsk = inet_csk(sk);

const struct tcp_sock *tp = tcp_sk(sk);

struct inet_timewait_sock *tw;

struct inet_timewait_death_row *tcp_death_row = &sock_net(sk)->ipv4.tcp_death_row;

//Create a tw in which the TCP status is set to TCP_TIME_WAIT

tw = inet_twsk_alloc(sk, tcp_death_row, state);

if (tw) { // If the creation is successful, initialization is performed

struct tcp_timewait_sock *tcptw = tcp_twsk((struct sock *)tw);

const int rto = (icsk->icsk_rto << 2) - (icsk->icsk_rto >> 1); //Calculate the timeout period

struct inet_sock *inet = inet_sk(sk);

tw->tw_transparent = inet->transparent;

tw->tw_mark = sk->sk_mark;

tw->tw_priority = sk->sk_priority;

tw->tw_rcv_wscale = tp->rx_opt.rcv_wscale;

tcptw->tw_rcv_nxt = tp->rcv_nxt;

tcptw->tw_snd_nxt = tp->snd_nxt;

tcptw->tw_rcv_wnd = tcp_receive_window(tp);

tcptw->tw_ts_recent = tp->rx_opt.ts_recent;

tcptw->tw_ts_recent_stamp = tp->rx_opt.ts_recent_stamp;

tcptw->tw_ts_offset = tp->tsoffset;

tcptw->tw_last_oow_ack_time = 0;

tcptw->tw_tx_delay = tp->tcp_tx_delay;

/* Get the TIME_WAIT timeout firing. */

// Specify the timeout period

if (timeo < rto)

timeo = rto;

if (state == TCP_TIME_WAIT)

timeo = sock_net(sk)->ipv4.sysctl_tcp_tw_timeout;

/* tw_timer is pinned, so we need to make sure BH are disabled

* in following section, otherwise timer handler could run before

* we complete the initialization.

*/

//Update the structure of timewait sock

local_bh_disable();

inet_twsk_schedule(tw, timeo);

/* Linkage updates.

* Note that access to tw after this point is illegal.

*/

inet_twsk_hashdance(tw, sk, &tcp_hashinfo); // Join the global hash table(tcp_hashinfo)

local_bh_enable();

} else {

/* Sorry, if we're out of memory, just CLOSE this

* socket up. We've got bigger problems than

* non-graceful socket closings.

*/

NET_INC_STATS(sock_net(sk), LINUX_MIB_TCPTIMEWAITOVERFLOW);

}

tcp_update_metrics(sk); // Update TCP statistical metrics, which does not affect this behavior

tcp_done(sk); // Destroy the SK

}

EXPORT_SYMBOL(tcp_time_wait);

It can be seen that in this process, the original sk is destroyed, and the corresponding inet_timewait_sock is created to start the timing. In other words, when a server that called close() receives the ack, it enters the FIN_WAIT_2 state but immediately switches to the TIMEWAIT state without going through the standard tcp_set_state() function. Therefore, ebpf cannot monitor it.

When the fin packet is received later, it will not enter tcp_rcv_state_process() at all, but will be processed by the outer tcp_v4_rcv() for the timewait process. Specifically, tcp_v4_rcv() will query the corresponding kernel sk according to the received skb. The timewait_sock created above will be found, whose status is TIMEWAIT, so the processing of timewait will be performed directly. The core code is as follows:

int tcp_v4_rcv(struct sk_buff *skb)

{

struct net *net = dev_net(skb->dev);

struct sk_buff *skb_to_free;

int sdif = inet_sdif(skb);

int dif = inet_iif(skb);

const struct iphdr *iph;

const struct tcphdr *th;

bool refcounted;

struct sock *sk;

int ret;

···

th = (const struct tcphdr *)skb->data;

···

lookup:

sk = __inet_lookup_skb(&tcp_hashinfo, skb, __tcp_hdrlen(th), th->source,

th->dest, sdif, &refcounted); //Query sk from the global hash table tcp_hashinfo

···

process:

if (sk->sk_state == TCP_TIME_WAIT)

goto do_time_wait;

···

do_time_wait: // The normal timewait process

···

goto discard_it;

}

To sum up, when the server calls close() to close the connection, it transitions to the FIN_WAIT_2 state after receiving the ack, and then immediately transitions to the TIMEWAIT state without waiting for the client's fin packet.
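The effect on both the packet path and the ebpf monitoring can be illustrated with a toy model. This is a sketch under stated assumptions, not kernel code: ConnectionTable and its methods are hypothetical, the dictionary stands in for tcp_hashinfo, and the teardown of the original sk is modeled only as replacing the table entry.

```python
class ConnectionTable:
    """Toy stand-in for the socket lookup done in tcp_v4_rcv()."""

    def __init__(self):
        self.entries = {}       # 4-tuple -> socket-like dict
        self.transitions = []   # what a tcp_set_state() hook would record

    def add(self, key, state="ESTABLISHED"):
        self.entries[key] = {"kind": "full", "state": state}

    def set_state(self, key, new_state):
        # The only path that a tcp_set_state()-based monitor can see.
        sock = self.entries[key]
        self.transitions.append((sock["state"], new_state))
        sock["state"] = new_state

    def time_wait(self, key):
        # Swap the full socket for a lightweight timewait entry.
        # set_state() is deliberately not called here.
        self.entries[key] = {"kind": "timewait", "state": "TIME_WAIT"}

    def receive(self, key):
        # Decide which path an incoming packet takes after the lookup.
        sock = self.entries.get(key)
        if sock is None:
            return "no_socket"
        if sock["state"] == "TIME_WAIT":
            return "do_time_wait"
        return "tcp_rcv_state_process"
```

After set_state(key, "FIN_WAIT1"), set_state(key, "FIN_WAIT2"), and time_wait(key), a later fin looked up with receive(key) takes the "do_time_wait" path, and the recorded transitions never mention TIME_WAIT, which matches what we saw with close().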

A simple qualitative understanding is that calling close() on the socket means fully closing the receiving and sending, so waiting in the FIN_WAIT_2 state for the other side's fin has little significance (one of the main purposes of waiting for the other side's fin is to determine if they have finished sending). Therefore, after confirming that the fin sent by the other side has been received (by receiving the client's ack for fin), the server can enter the TIMEWAIT state.

Disclaimer: The views expressed herein are for reference only and don't necessarily represent the official views of Alibaba Cloud.
