Relational databases remain the persistence layer for the majority of transactional applications: order systems, financial ledgers, identity services, and content management platforms. The data model is well understood, the query language is mature, and the consistency guarantees are clearly defined. The operational burden, however, is substantial. Provisioning hardware, patching engine versions, configuring synchronous replication, automating backups, scheduling failover drills, hardening network access, and maintaining performance baselines collectively absorb a significant share of database engineering effort that is necessary but rarely differentiated between organisations.

The structural problem is that this operational work scales poorly. Each new application typically introduces another database instance, another patching schedule, another set of replication topologies, and another backup routine. ApsaraDB RDS addresses this by exposing relational database engines (MySQL, PostgreSQL, SQL Server, and MariaDB) through a managed interface that consolidates instance lifecycle, replication, backup, security, and observability into a single configurable service. This article documents the architectural layers an engineering team should understand when designing production deployments on ApsaraDB RDS.

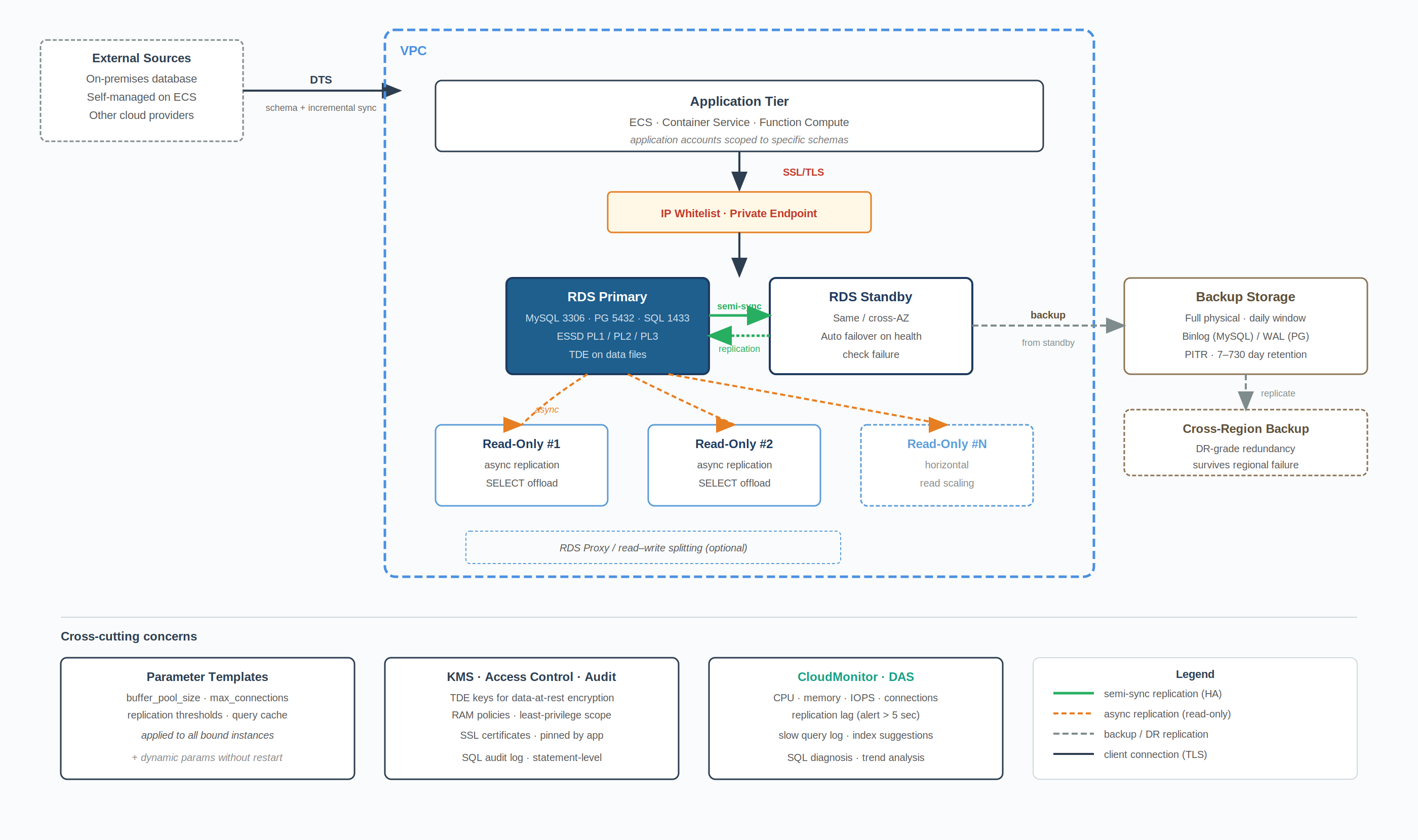

*Figure 1. ApsaraDB RDS reference architecture: HA topology, network isolation, backup, and observability.*

An RDS instance is the unit of deployment. Each instance encapsulates a database engine binary, a compute resource, attached storage, and a network endpoint. The instance class is selected from defined CPU and memory tiers; storage is provisioned independently using ESSD volumes, with multiple performance levels available (PL1, PL2, and PL3) distinguished by their IOPS and throughput ceilings. PL1 suits general OLTP workloads; PL2 and PL3 are reserved for higher concurrency or large working-set patterns where storage latency materially affects query response time.

The High-Availability instance topology deploys a primary node and a standby node within an availability zone, or across zones for higher fault tolerance. Replication between primary and standby uses semi-synchronous mode by default for MySQL, ensuring transactions are acknowledged on the standby's relay log before commit confirmation returns to the application. Failover from primary to standby is automatic on health check failure, with promotion typically completing within tens of seconds. Cross-zone deployment increases this slightly due to inter-zone network latency but provides resilience against zone-level failures that single-zone HA cannot survive.
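Because promotion typically takes tens of seconds, application code usually needs to tolerate a short window of failed connections. The following is a minimal sketch of that idea, not a driver feature: `connect` is a hypothetical caller-supplied function (any driver's connect call could be wrapped), and the 60-second budget and delay values are illustrative assumptions rather than RDS-documented figures.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def connect_with_backoff(
    connect: Callable[[], T],
    max_wait_seconds: float = 60.0,  # assumed budget that covers a failover window
    initial_delay: float = 0.5,      # assumed first pause between attempts
    max_delay: float = 8.0,          # assumed cap on the pause between attempts
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `connect` with exponential backoff until it succeeds
    or the total time spent waiting reaches `max_wait_seconds`."""
    waited = 0.0
    delay = initial_delay
    while True:
        try:
            return connect()
        except OSError:  # ConnectionError and its subclasses derive from OSError
            if waited >= max_wait_seconds:
                raise  # budget exhausted: surface the last error to the caller
            pause = min(delay, max_wait_seconds - waited)
            sleep(pause)
            waited += pause
            delay = min(delay * 2, max_delay)
```

The `sleep` parameter is injectable so the retry schedule can be exercised in tests without real waiting.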

Read-only instances can be attached to the primary for offloading read traffic. Each read-only instance maintains its own asynchronous replication stream from the primary and is independently scalable. For MySQL workloads with significant read concurrency, an RDS Proxy or read/write splitting layer can route SELECT statements across read-only instances while directing writes exclusively to the primary, distributing load without application-side connection management or driver-level routing logic.
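To make the routing rule concrete, here is a small sketch of the decision a read/write splitting layer makes for each statement. It illustrates the concept only and is not how the managed proxy is implemented; the endpoint names are hypothetical, and the classification is deliberately simplistic (it looks only at the leading keyword and a trailing `FOR UPDATE`).

```python
import itertools
from typing import Optional, Sequence


class ReadWriteRouter:
    """Route plain SELECTs round-robin across read-only endpoints;
    send everything else to the primary."""

    def __init__(self, primary: str, read_replicas: Sequence[str]):
        self.primary = primary
        self._replicas: Optional[itertools.cycle] = (
            itertools.cycle(read_replicas) if read_replicas else None
        )

    def endpoint_for(self, sql: str, in_transaction: bool = False) -> str:
        parts = sql.lstrip().split(None, 1)
        keyword = parts[0].upper() if parts else ""
        # Locking reads must run on the primary even though they start with SELECT.
        locking = sql.rstrip("; \t\r\n").upper().endswith("FOR UPDATE")
        is_plain_read = keyword == "SELECT" and not locking
        # Statements inside a transaction stay on the primary so that
        # reads observe the transaction's own writes.
        if is_plain_read and not in_transaction and self._replicas is not None:
            return next(self._replicas)
        return self.primary
```

Since the read-only instances replicate asynchronously, a read routed this way may briefly miss a write that was just committed; reads that need read-your-writes behaviour are safer on the primary.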

Production RDS instances should be deployed within a VPC. The instance is assigned a private endpoint resolvable only within the VPC and any connected VPCs through Cloud Enterprise Network or VPN gateway. Public endpoints exist for development and migration scenarios but should be disabled on production instances unless an explicit access requirement exists. Even then, they should not serve as the default access path for application traffic.

Access control is enforced through IP whitelists at the instance level. Whitelist entries can be CIDR ranges or individual addresses; for VPC-internal access, the whitelist should be scoped to the application subnet rather than the entire VPC range. Default engine ports (3306 for MySQL, 5432 for PostgreSQL, and 1433 for SQL Server) can be changed during instance configuration to reduce exposure to opportunistic port scanning, though this is a defence-in-depth measure rather than a primary security control.
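A whitelist can be sanity-checked offline before it is applied. The sketch below uses Python's standard `ipaddress` module to test whether a client address is covered and to flag entries broader than a chosen prefix length. The addresses and the `/16` cut-off are illustrative assumptions, not RDS defaults.

```python
import ipaddress
from typing import Iterable, List


def is_allowed(client_ip: str, whitelist: Iterable[str]) -> bool:
    """Return True if client_ip falls inside any whitelist entry.
    Entries may be CIDR ranges or single addresses."""
    addr = ipaddress.ip_address(client_ip)
    return any(
        addr in ipaddress.ip_network(entry, strict=False) for entry in whitelist
    )


def overly_broad_entries(whitelist: Iterable[str], min_prefix_len: int = 16) -> List[str]:
    """Return entries whose prefix is shorter than min_prefix_len,
    i.e. ranges wider than the assumed acceptable scope."""
    return [
        entry
        for entry in whitelist
        if ipaddress.ip_network(entry, strict=False).prefixlen < min_prefix_len
    ]
```

For example, a whitelist of `10.0.1.0/24` admits `10.0.1.42` but not `10.0.2.1`, while an entry such as `0.0.0.0/0` would be reported by `overly_broad_entries`.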

SSL/TLS is supported on all engines and should be enabled on production instances to encrypt transport between the application and database. Server certificates can be downloaded from the RDS console and pinned in the application connection configuration. For data at rest, Transparent Data Encryption (TDE) encrypts the underlying data files using a KMS-managed key, transparent to the application layer. TDE introduces a measurable performance overhead that varies with the workload's write profile, but encryption at rest is required by several regulatory frameworks, including PCI DSS and certain financial industry standards.
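On the client side, pinning the downloaded certificate generally means building a TLS context that trusts only that CA bundle and verifies the server hostname. The sketch below uses Python's standard `ssl` module; the bundle path is a hypothetical placeholder, the TLS 1.2 floor is an assumption, and how the context is handed to a database driver depends on the driver in use.

```python
import ssl
from typing import Optional


def build_tls_context(ca_bundle_path: Optional[str] = None) -> ssl.SSLContext:
    """Build a client-side TLS context that verifies the server certificate.

    If ca_bundle_path is given, only that CA bundle is trusted (pinning);
    otherwise the system trust store is used.
    """
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_bundle_path)
    # create_default_context already enables certificate and hostname
    # verification; the version floor below is an assumed policy choice.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


# Hypothetical usage: the path below is a placeholder for the downloaded bundle.
# ctx = build_tls_context("/etc/app/rds-ca-bundle.pem")
```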

Automated backup is enabled by default. Full physical backups are taken on a configurable schedule, typically daily during a low-traffic window, and incremental backups (binary log archives for MySQL, WAL segments for PostgreSQL) are captured continuously. Backup retention is configurable, with a default of 7 days and an extended retention tier reaching 730 days for compliance-driven workloads. Backup storage is billed separately from instance storage and uses a different cost basis, so retention policy directly affects long-term operating cost and should be set deliberately rather than left at the default.

Point-in-Time Recovery restores a database to any second within the retention window by replaying incremental backups onto the most recent full backup preceding the target time. Recovery is performed into a new instance rather than overwriting the source, which preserves the original instance for forensic analysis after data corruption or accidental modification events. PITR latency depends on the volume of incremental backups to replay; for workloads with high write throughput, more frequent full backups reduce recovery time at the cost of higher backup storage consumption.
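The replay logic described above can be sketched as a small planning function: pick the latest full backup at or before the target time, then collect the log segments that overlap the interval between that backup and the target. This is a conceptual model with hypothetical types, not the service's internal implementation; it mainly shows why a longer gap since the last full backup means more segments to replay.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class LogSegment:
    """A hypothetical archived log segment (binlog or WAL) covering [start, end)."""
    start: datetime
    end: datetime


def plan_pitr(
    full_backups: Sequence[datetime],
    segments: Sequence[LogSegment],
    target: datetime,
) -> Tuple[datetime, List[LogSegment]]:
    """Return the base full backup and the ordered segments to replay onto it."""
    candidates = [t for t in full_backups if t <= target]
    if not candidates:
        raise ValueError("no full backup precedes the target time")
    base = max(candidates)
    # Keep every segment that overlaps the window (base, target).
    replay = sorted(
        (s for s in segments if s.end > base and s.start < target),
        key=lambda s: s.start,
    )
    return base, replay
```

With daily full backups at 02:00 and a target of 15:30, every segment written after 02:00 that day has to be replayed; a second full backup at 14:00 would shrink that list to the segments covering the last ninety minutes.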

Cross-region backup replication is available for disaster recovery scenarios where regional failure must be survivable. The destination region holds a copy of full and incremental backups, enabling instance reconstruction in the alternate region without dependency on the source region's availability, a prerequisite for any meaningful regional disaster recovery posture.

Engine parameters (buffer pool sizes, connection limits, query cache configuration, and replication thresholds) are managed through parameter templates. A template defines the parameter values applied to instances bound to it; modifying the template propagates changes to bound instances on next restart, and dynamic parameters can be updated without restart for engines that support them. Templates separate parameter governance from instance lifecycle, enabling consistent configuration across instance fleets without per-instance tuning drift.
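The split between dynamic and restart-bound parameters is easy to model. The sketch below compares a current and a desired template and separates the changes into those that could apply immediately and those that wait for a restart. The function and class names are hypothetical, and which parameters count as dynamic is supplied by the caller, since it depends on the engine and version.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass
class ParameterChange:
    apply_now: Dict[str, str]         # dynamic parameters, no restart needed
    apply_on_restart: Dict[str, str]  # static parameters, take effect after restart


def plan_template_change(
    current: Dict[str, str],
    desired: Dict[str, str],
    dynamic_params: Set[str],
) -> ParameterChange:
    """Diff two templates and classify each changed parameter."""
    change = ParameterChange(apply_now={}, apply_on_restart={})
    for name, value in desired.items():
        if current.get(name) == value:
            continue  # unchanged parameters need no action
        if name in dynamic_params:
            change.apply_now[name] = value
        else:
            change.apply_on_restart[name] = value
    return change
```

Reviewing the `apply_on_restart` set before binding a template is one way to know in advance whether a maintenance window is needed.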

Slow query logs are captured automatically with a configurable execution time threshold. The log is queryable through the RDS console and integrates with Database Autonomy Service (DAS), which surfaces query patterns, missing indexes, and lock contention without requiring manual log analysis. DAS provides query-level performance insights, automatic SQL diagnosis, and historical trend analysis, reducing the manual effort of performance triage on production instances and shortening the feedback loop between observation and remediation.

CloudMonitor exposes instance-level metrics (CPU utilisation, memory pressure, connection count, IOPS, and replication lag) at one-minute resolution by default. Alert rules can be configured against any metric with notification delivery via SMS, email, or webhook integration. Replication lag in particular warrants a low alert threshold on HA instances; sustained lag above 5 seconds typically indicates either standby resource saturation or write throughput exceeding replication capacity, both of which compromise failover RPO if left unaddressed.
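"Sustained" lag is worth defining precisely so that a single spike does not page anyone. The sketch below treats lag as sustained when it exceeds the threshold for several consecutive samples. The 5-second threshold comes from the guidance above; requiring three consecutive one-minute samples is an illustrative assumption, and the function is a standalone model rather than a CloudMonitor API.

```python
from typing import Iterable


def sustained_lag(
    samples: Iterable[float],
    threshold_seconds: float = 5.0,
    min_consecutive: int = 3,  # assumed: three one-minute samples in a row
) -> bool:
    """Return True if lag stays strictly above the threshold for at least
    `min_consecutive` consecutive samples."""
    run = 0
    for lag in samples:
        run = run + 1 if lag > threshold_seconds else 0
        if run >= min_consecutive:
            return True
    return False
```

A series such as `[1, 6, 7, 8]` would trigger, whereas `[6, 7, 1, 8, 9]` would not, because the run above the threshold is broken by the third sample.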

Three operational factors determine reliable production behaviour.

ApsaraDB RDS reduces relational database operations to a set of configuration decisions rather than infrastructure work. High availability, backup, replication, and observability, the four areas where self-managed deployments typically accumulate operational debt, are exposed as managed features with clear configuration interfaces. Engineering teams retain control over the decisions that affect application behaviour: engine version, instance topology, parameter values, network access, and backup retention. Decisions that affect operational reliability without affecting application behaviour (failover orchestration, backup execution, and patch application) are handled by the service.

Engineers designing on RDS should evaluate three patterns based on workload characteristics. Cluster Edition deployments suit MySQL workloads requiring stronger consistency on read-only replicas, using a shared-storage architecture rather than asynchronous replication. PolarDB or PolarDB-X is the appropriate target where sharded horizontal scale beyond a single primary instance is required, though these are distinct services with different operational semantics. For development and lower-tier environments, the Basic Edition single-node instance reduces cost relative to HA but should not be used for production workloads where the absence of a standby node materially affects recovery objectives.

Disclaimer: The views expressed herein are for reference only and don’t necessarily represent the official views of Alibaba Cloud.
