This topic describes the concept, scenarios, limits, and usage notes of Row-oriented AI, a sub-feature of PolarDB for AI.

Background information

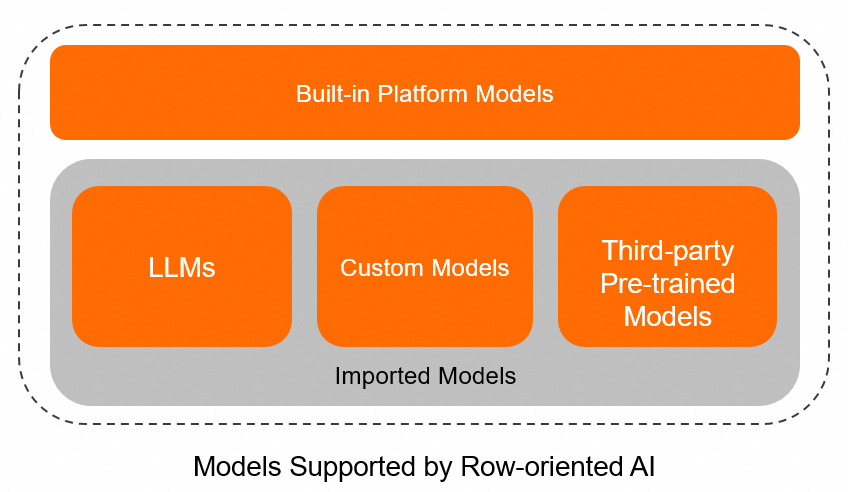

Row-oriented AI is an important sub-feature of PolarDB for AI. It uses loadable functions as hooks to perform model inference and call large language models (LLMs). You can register AI models that you have created in PolarDB and then invoke the hook functions to run inference with these models. The feature provides native SQL capabilities and offers significant advantages for built-in models.

You can use AI models in the same way that you use built-in database functions. Row-oriented AI integrates AI capabilities into PolarDB so that you can manage and use AI models efficiently with SQL statements. AI models read data directly in PolarDB without additional data migration, which ensures data consistency and improves inference performance.
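The idea of calling a model like a built-in function can be illustrated with an analogy. The sketch below is not PolarDB syntax: it uses Python's standard `sqlite3` module, whose `create_function` registers a Python callable as a scalar SQL function. The model `toy_sentiment_model`, the function name `predict_sentiment`, and the `reviews` table are hypothetical. The point is that the query invokes the model once per row, and the data never leaves the database engine.

```python
import sqlite3


def toy_sentiment_model(text: str) -> str:
    """Hypothetical stand-in for a trained model: labels text by keyword."""
    positive_words = {"good", "great", "fast"}
    words = set(text.lower().split())
    return "positive" if words & positive_words else "negative"


def main() -> list[tuple[int, str]]:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE reviews (id INTEGER PRIMARY KEY, body TEXT)")
    conn.executemany(
        "INSERT INTO reviews (id, body) VALUES (?, ?)",
        [(1, "Great product and fast delivery"), (2, "Arrived broken")],
    )
    # Register the model as a scalar SQL function: (name, number of args, callable).
    conn.create_function("predict_sentiment", 1, toy_sentiment_model)
    # The function runs once per row inside the query; no data is exported first.
    rows = conn.execute(
        "SELECT id, predict_sentiment(body) FROM reviews ORDER BY id"
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    for row in main():
        print(row)
```

For the actual registration and inference statements in PolarDB, see the usage topics listed under Usage notes.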

Row-oriented AI supports both imported models and built-in platform models. The two types are registered in different ways, but after registration both are queried with native SQL statements.

Imported models

You can import the following types of existing models and remote LLMs:

Custom models: You can register your custom models in PolarDB by executing SQL statements and then create functions for inference. The Row-oriented AI feature deploys the inference service on the AI node and then uses automatically generated user-defined functions (in .so files) to call that service. Data does not need to be exported out of the database.

Third-party pre-created models: You can use third-party pre-created models in the same way as custom models. Register the models in PolarDB and then create functions for inference.

Remote LLMs: You provide the metadata of a remote LLM, such as the inference service URL and API key. The Row-oriented AI feature automatically generates the corresponding user-defined functions (in .so files) and uses these functions as hooks to call the inference service of the model.
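Conceptually, a remote LLM hook is a function built from endpoint metadata and then called once per row. The sketch below models that flow in Python with SQLite as a stand-in for the database. Several details are assumptions and not part of the PolarDB feature: the JSON body `{"prompt": ...}`, the Bearer-token header, the URL `https://llm.example.com/v1/generate`, and the names `make_remote_llm_hook` and `ask_llm` are all hypothetical. PolarDB itself generates these functions as `.so` files. A fake `send` function replaces the network call so that the example runs offline.

```python
import json
import sqlite3
import urllib.request
from typing import Callable


def make_remote_llm_hook(
    url: str, api_key: str, send: Callable[[urllib.request.Request], str]
) -> Callable[[str], str]:
    """Build a per-row function from endpoint metadata (URL and API key)."""

    def hook(prompt: str) -> str:
        # Hypothetical request format: a JSON body with a "prompt" field.
        body = json.dumps({"prompt": prompt}).encode("utf-8")
        request = urllib.request.Request(
            url,
            data=body,
            method="POST",
            headers={
                "Authorization": f"Bearer {api_key}",
                "Content-Type": "application/json",
            },
        )
        # `send` performs the call; a real client might use urllib.request.urlopen.
        return send(request)

    return hook


def fake_send(request: urllib.request.Request) -> str:
    """Offline stand-in for the remote service: echoes the prompt back."""
    return "echo: " + json.loads(request.data)["prompt"]


def demo() -> list[tuple[str]]:
    hook = make_remote_llm_hook(
        "https://llm.example.com/v1/generate", "hypothetical-api-key", fake_send
    )
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE questions (q TEXT)")
    conn.execute("INSERT INTO questions VALUES ('What is PolarDB?')")
    # Each row's question is passed to the hook, which calls the (fake) service.
    conn.create_function("ask_llm", 1, hook)
    rows = conn.execute("SELECT ask_llm(q) FROM questions").fetchall()
    conn.close()
    return rows
```

Injecting `send` keeps the metadata handling separate from the transport, which makes the hook easy to test without network access.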

Built-in platform models

Built-in platform models are models developed by Alibaba and provided by PolarDB for AI. They include Tongyi Qianwen, a diagnostic consulting bot, a chatbot, the Cainiao decision tree model, and an anomaly detection model. To use these models for inference, you only need to deploy the models and create functions in your database. The following table describes the available built-in functions and their corresponding .so files.

| Function | Model name | File name | Data type of return values | Description |
|---|---|---|---|---|
| polarchat | builtin_polarchat | `#ailib#_builtin_polarchat.so` | STRING | Interactive Q&A based on LLMs. |
| polarzixun | builtin_polarzixun | `#ailib#_builtin_polarzixun.so` | STRING | Consulting based on retrieval-augmented LLMs. |
| qwen | builtin_qwen | `#ailib#_builtin_qwen.so` | STRING | Inference based on the Tongyi Qianwen model. |

Scenarios

Each row of data in a table corresponds to one inference result.

AI models depend on frequently updated data, and exporting the data out of the database for each inference is not feasible.

Model inference requires complex queries, such as those that include GROUP BY, subqueries, and JOIN.

LLMs need to use table data in the database for inference.
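The scenario of combining row-level inference with complex queries can be sketched with the same SQLite analogy. This is not PolarDB syntax. The threshold-based `toy_anomaly_score` is a hypothetical stand-in for an anomaly detection model, and the `devices` and `readings` tables are invented for illustration. The model is evaluated on each joined row, and the per-row results are then aggregated with GROUP BY in a single statement.

```python
import sqlite3


def toy_anomaly_score(value: float) -> int:
    """Hypothetical anomaly model: flags readings above a fixed threshold."""
    return 1 if value > 100.0 else 0


def anomalies_per_device() -> list[tuple[str, int]]:
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE devices (device_id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE readings (device_id INTEGER, value REAL);
        INSERT INTO devices VALUES (1, 'pump'), (2, 'fan');
        INSERT INTO readings VALUES
            (1, 20.0), (1, 150.0), (1, 130.0), (2, 40.0), (2, 55.0);
        """
    )
    conn.create_function("is_anomaly", 1, toy_anomaly_score)
    # Per-row inference feeds directly into JOIN and GROUP BY in one query.
    rows = conn.execute(
        """
        SELECT d.name, SUM(is_anomaly(r.value)) AS anomalies
        FROM readings AS r
        JOIN devices AS d ON d.device_id = r.device_id
        GROUP BY d.name
        ORDER BY d.name
        """
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    print(anomalies_per_device())
```

Because inference happens inside the query, filtering, joining, and aggregating the results requires no separate export and post-processing step.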

Limits

The Row-oriented AI feature is supported only for PolarDB for MySQL 8.0.2 Enterprise Edition clusters.

Only models built on the TensorFlow platform can be imported. These models must take one-dimensional arrays or sentences as inputs and return INTEGER, REAL, or STRING values.

Billing

You are charged only for AI nodes. For more information about the pricing, see Pay-as-you-go prices of compute nodes and Subscription prices of compute nodes.

Usage notes

For more information, see Usage for imported models and Usage for built-in platform models.