This guide explains how to use the open-source elasticsearch-dump tool to migrate settings, mappings, and documents between Alibaba Cloud Elasticsearch clusters, or between an on-premises environment and the cloud.

Overview

elasticsearch-dump is a command-line tool used to export data from one Elasticsearch index and import it into another, or into a local file.

Best for:

Migrating small volumes of data.

Moving specific indexes.

Backing up mappings or settings to local JSON files.

Official documentation: elasticdump.

Prerequisites

Source/Destination clusters: Alibaba Cloud Elasticsearch clusters must be created. See Create an Alibaba Cloud Elasticsearch cluster.

Auto-indexing: The destination cluster must have Auto Indexing enabled, or the target index must be created manually in advance. See Configure the YML file.

Migration node: An Elastic Compute Service (ECS) instance is required to run the tool.

If the ECS instance and Elasticsearch cluster are in the same Virtual Private Cloud (VPC), use internal endpoints for faster, free data transfer.

If they are in different regions/VPCs, use public endpoints and ensure the ECS IP is added to the cluster whitelist. See Manage IP address whitelists.
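The prerequisites above can be spot-checked from the ECS instance before you install anything. The following is a minimal sketch, not an official procedure: the function names are hypothetical, and it assumes that the Auto Indexing option corresponds to the Elasticsearch setting action.auto_create_index and that the cluster accepts HTTP basic authentication on the endpoint you pass in.

```shell
# Hypothetical helpers; host, user, and password are placeholders you supply.

# Build the cluster-settings URL for a given <host[:port]>.
settings_url() {
  printf 'http://%s/_cluster/settings?include_defaults=true&flat_settings=true' "$1"
}

# Print the action.auto_create_index setting reported by the cluster.
# Arguments: <host[:port]> <user> <password>
# Returns non-zero if the endpoint cannot be reached (for example, when the
# ECS IP address is not in the whitelist) or if the setting is not reported.
show_auto_create_index() {
  curl --silent --show-error --fail --max-time 10 \
       --user "$2:$3" "$(settings_url "$1")" \
    | grep -o '"action.auto_create_index":"[^"]*"'
}

# Example (not run here):
#   show_auto_create_index '<YourEsHost>' '<UserName>' '<YourPassword>'
```

A timeout or connection error from this check usually points at the endpoint type or the whitelist rather than at elasticsearch-dump itself.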

Install elasticsearch-dump

Connect to the ECS instance.

For more information, see Connect to a Linux instance by using a password or key.

Install Node.js.

Download the package:

wget https://nodejs.org/dist/v16.18.0/node-v16.18.0-linux-x64.tar.xz

Decompress the package:

tar -xf node-v16.18.0-linux-x64.tar.xz

Configure environment variables:

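To apply the environment variable to the current session only, run the following command. The path assumes that the package was decompressed in /root:

export PATH=$PATH:/root/node-v16.18.0-linux-x64/bin/

To make the setting permanent, open your profile, add the export line, and then reload the profile: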

vim ~/.bash_profile
export PATH=$PATH:/root/node-v16.18.0-linux-x64/bin/
source ~/.bash_profile

Install elasticsearch-dump:

npm install elasticdump -g
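After the installation steps, it can help to confirm that the required commands are on the PATH before you attempt a migration. The sketch below uses a hypothetical helper named check_tools; it relies only on the standard `command -v` lookup and makes no assumption about tool versions.

```shell
# Report whether each named command can be found on PATH.
# Returns 0 only if every command is found.
check_tools() {
  missing=0
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found: $tool"
    else
      echo "missing: $tool"
      missing=1
    fi
  done
  return "$missing"
}

# Example: check_tools node npm elasticdump
```

If elasticdump is reported as missing right after `npm install`, the most likely cause is that the Node.js bin directory was added to PATH only for an earlier session.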

Examples

If your password contains special characters (e.g., #, $, @), standard URL strings may fail. See the FAQ and troubleshooting section for the --httpAuthFile solution.
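As a rough illustration of the --httpAuthFile approach mentioned above, the sketch below writes the credentials to a small file instead of embedding them in the URL. The helper name and file path are hypothetical, and the user=/password= layout is an assumption based on the elasticdump README; check the README for your installed version before relying on it.

```shell
# Write credentials to an ini-style file. A newly created file is readable
# only by the current user because of the restrictive umask.
# Arguments: <file> <user> <password>
write_auth_file() {
  ( umask 077 && printf 'user=%s\npassword=%s\n' "$2" "$3" > "$1" )
}

# Example with placeholders (not run here). Single quotes stop the shell
# from interpreting characters such as # and $ in the password:
#   write_auth_file "$HOME/.es_source_auth.ini" '<UserName>' '<YourPassword>'
#   elasticdump --httpAuthFile="$HOME/.es_source_auth.ini" \
#     --input=http://<YourEsHost>/<YourEsIndex> \
#     --output=<YourLocalFile> --type=data
```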

Migrate data between clusters (cloud-to-cloud)

To fully migrate an index, run the commands for settings, mappings, and data, in that order.

Migrate index settings:

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=http://"<OtherName>:<OtherPassword>"@<OtherEsHost>/<OtherEsIndex> --type=settings

Migrate mappings:

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=http://"<OtherName>:<OtherPassword>"@<OtherEsHost>/<OtherEsIndex> --type=mapping

Migrate documents (data):

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=http://"<OtherName>:<OtherPassword>"@<OtherEsHost>/<OtherEsIndex> --type=data
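Because the three commands differ only in the --type value, they can be wrapped in a small loop that enforces the order. The following is a sketch under stated assumptions: migrate_index and DRY_RUN are hypothetical names, and the function defaults to printing the commands so that you can review them before anything is sent to a cluster.

```shell
# Print (DRY_RUN=1, the default) or run the three elasticdump steps in order.
# Arguments: <source-index-url> <destination-index-url>
migrate_index() {
  for step in settings mapping data; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "elasticdump --input=$1 --output=$2 --type=$step"
    else
      # Stop at the first failure so that data is never loaded
      # before its settings and mapping are in place.
      elasticdump --input="$1" --output="$2" --type="$step" || return 1
    fi
  done
}

# Example with placeholder URLs (prints the three commands only):
migrate_index 'http://<UserName>:<YourPassword>@<YourEsHost>/<YourEsIndex>' \
              'http://<OtherName>:<OtherPassword>@<OtherEsHost>/<OtherEsIndex>'
```

Set DRY_RUN=0 to execute the commands instead of printing them.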

Export to local file (backup)

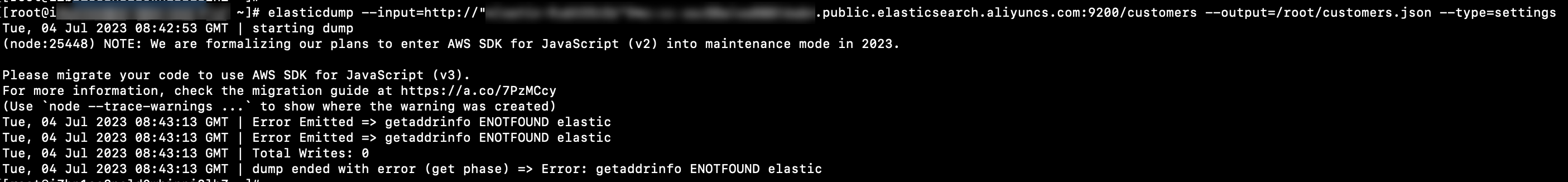

Migrate settings

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=<YourLocalFile> --type=settings

Migrate mappings

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=<YourLocalFile> --type=mapping

Migrate documents (data)

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=<YourLocalFile> --type=data

Migrate data based on a query

elasticdump --input=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --output=<YourLocalFile> --searchBody="<YourQuery>"
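To make the query-based export more concrete, here is a sketch of how a Query DSL body might be passed on the command line. The field name status and the value active are purely illustrative, and the block only prints the command so that the quoting can be reviewed first.

```shell
# Hypothetical query: match documents whose "status" field equals "active".
# Single quotes keep the JSON's double quotes intact in the shell.
QUERY='{"query":{"term":{"status":"active"}}}'

# Print the command for review; remove "echo" to run it.
echo elasticdump \
  --input='http://<UserName>:<YourPassword>@<YourEsHost>/<YourEsIndex>' \
  --output='<YourLocalFile>' \
  --type=data \
  --searchBody="$QUERY"
```

Keeping the query in a variable avoids escaping every double quote inside the --searchBody argument.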

Import from local file (restore)

Restore documents

elasticdump --input=<YourLocalFile> --output=http://"<UserName>:<YourPassword>"@<YourEsHost>/<YourEsIndex> --type=data

Parameter reference

| Parameter | Description |
| --- | --- |
| --input / --output | Source and destination. Each can be a cluster URL or a local file path. Important: When you export to a local file, elasticsearch-dump automatically generates the destination file in the specified path. Before you export data to a local machine, make sure that the name of the destination file is unique in that directory. |
| <YourEsHost> / <OtherEsHost> | The endpoint (internal or public) of your Elasticsearch cluster (e.g., |
| <UserName> / <OtherName> | Cluster username (default is |
| <YourPassword> / <OtherPassword> | Cluster password. |
| --type | The type of migration. The commands in this guide use settings, mapping, and data. |
| --searchBody | Filter data using a Query DSL. Example: |
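The note about unique destination file names can be handled with a naming helper. The sketch below is one possible approach, not part of elasticsearch-dump: both function names are hypothetical, and the timestamp format is an arbitrary choice.

```shell
# Build a timestamped file name of the form <index>_<type>_<timestamp>.json.
# Arguments: <index> <type>
backup_file_name() {
  printf '%s_%s_%s.json' "$1" "$2" "$(date +%Y%m%dT%H%M%S)"
}

# Return non-zero if the given path already exists.
# Arguments: <path>
ensure_new_file() {
  if [ -e "$1" ]; then
    echo "file already exists: $1" >&2
    return 1
  fi
}

# Example (not run here):
#   out="/data/$(backup_file_name '<YourEsIndex>' mapping)"
#   ensure_new_file "$out" && elasticdump --input=... --output="$out" --type=mapping
```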