By Dave Kyle and Weizijun

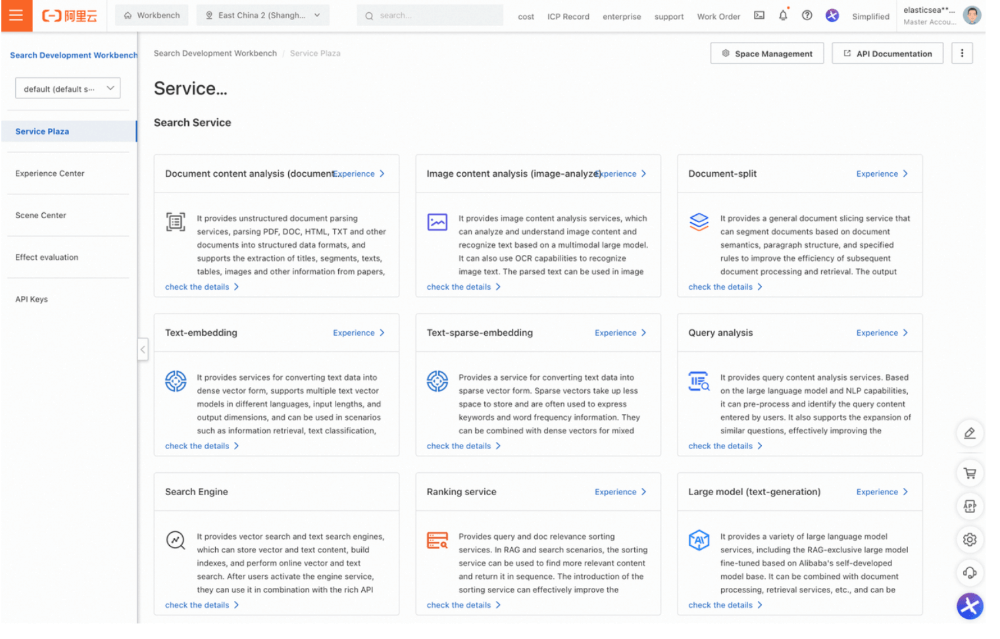

We are excited to announce our latest addition to the Elasticsearch Open Inference API: the integration of Alibaba Cloud AI Search. This work enables Elastic users to connect directly with the Alibaba Cloud AI platform. Developers building RAG applications on the Elasticsearch vector database can use semantic_text to store and query dense and sparse embeddings generated by models hosted on the Alibaba Cloud AI Search platform. In addition, Elastic users now have integrated access to reranking models for enhanced semantic reranking and to the Qwen LLM family.

In this blog, we explore how to integrate Alibaba Cloud's AI services with Elasticsearch. You'll learn how to set up and use Alibaba's completion, rerank, sparse embedding, and text embedding services within Elasticsearch. The broad set of supported models integrated into inference task types will enhance the relevance of many use cases including RAG.

We’re grateful to the Alibaba Cloud team for contributing support for these task types to the Elasticsearch Open Inference API!

Let’s walk through examples of how to configure and use these services within an Elasticsearch environment. Note that Alibaba uses the term service_id instead of model_id.

This walkthrough assumes you already have an Alibaba Cloud account with access to the Alibaba Cloud AI Search platform. Next, you’ll need to create a workspace and an API key for creating inference endpoints.

In Elasticsearch, create your endpoint by specifying the service as “alibabacloud-ai-search” and providing the service settings: your workspace, the host, the service ID, and your API key for the Alibaba Cloud AI Search platform. In our example, we're creating a text embedding endpoint using "ops-text-embedding-001" as the service ID.

    PUT _inference/text_embedding/ali_ai_embeddings
    {
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "api_key": "<api_key>",
        "service_id": "ops-text-embedding-001",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default"
      }
    }

You will receive a response from Elasticsearch with the endpoint that was created successfully:

    {
      "inference_id": "ali_ai_embeddings",
      "task_type": "text_embedding",
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "similarity": "dot_product",
        "dimensions": 1536,
        "service_id": "ops-text-embedding-001",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default",
        "rate_limit": {
          "requests_per_minute": 10000
        }
      },
      "task_settings": {}
    }

Note that there are no additional settings for model creation. Elasticsearch will automatically connect to the Alibaba Cloud AI Search platform to test your credentials and the service id, and fill in the number of dimensions and similarity measures for you.
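The four endpoint types in this post share the same request shape, so the creation request can be generated from a small helper. The following is a minimal sketch, not part of the integration itself: the function name `build_endpoint_request` is hypothetical, and it only assembles the path and JSON body without sending anything to a cluster.

```python
import json


def build_endpoint_request(task_type, inference_id, service_id, host, workspace, api_key):
    """Assemble the method, path, and body for creating an inference endpoint.

    Hypothetical helper for illustration; it performs no network calls.
    """
    path = f"_inference/{task_type}/{inference_id}"
    body = {
        "service": "alibabacloud-ai-search",
        "service_settings": {
            "api_key": api_key,
            "service_id": service_id,
            "host": host,
            "workspace": workspace,
        },
    }
    return "PUT", path, body


# Same values as the text embedding example above (placeholders kept as-is).
method, path, body = build_endpoint_request(
    task_type="text_embedding",
    inference_id="ali_ai_embeddings",
    service_id="ops-text-embedding-001",
    host="xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
    workspace="default",
    api_key="<api_key>",
)
print(method, path)
print(json.dumps(body, indent=2))
```

The body deliberately omits dimensions and similarity, since Elasticsearch fills those in when it validates the endpoint.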

Next, let’s test our endpoint to ensure everything is set up correctly. To do this, we’ll call the perform inference API:

    POST _inference/text_embedding/ali_ai_embeddings
    {
      "input": "What is Elastic?"
    }

The API call will return the generated embeddings for the provided input, which will look something like this:

    {
      "text_embedding": [
        {
          "embedding": [
            0.048400473,
            0.051464397,
            … (additional values) …
            0.033325635,
            -0.008986305
          ]
        }
      ]
    }

You are now ready to start exploring. After you have tried these examples, have a look at some new exciting innovations in Elasticsearch for semantic search use cases:

semantic_text - the new field type simplifies storage and chunking of embeddings. Just pick your model and Elastic does the rest!

Retrievers - introduced in 8.14, these allow you to set up multi-stage retrieval pipelines.
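To make the semantic_text idea concrete, here is a minimal sketch of how an index mapping and a semantic query could point at the `ali_ai_embeddings` endpoint created earlier. The field name `content` and both helper names are assumptions chosen for illustration; the code only builds the JSON bodies and does not contact a cluster.

```python
import json


def semantic_text_mapping(field, inference_id):
    """Index mapping with one semantic_text field bound to an inference endpoint."""
    return {
        "mappings": {
            "properties": {
                field: {"type": "semantic_text", "inference_id": inference_id}
            }
        }
    }


def semantic_query(field, text):
    """Search body that runs a semantic query against a semantic_text field."""
    return {"query": {"semantic": {"field": field, "query": text}}}


# "content" is a hypothetical field name.
mapping = semantic_text_mapping("content", "ali_ai_embeddings")
search = semantic_query("content", "What is Elastic?")
print(json.dumps(mapping, indent=2))
print(json.dumps(search, indent=2))
```

The mapping body would be sent when creating the index, and the search body to that index's `_search` endpoint.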

But first, let’s dive into our examples!

To start, Alibaba Cloud provides several models for chat completion, with service IDs listed in their API documentation.

First, set up the inference service for text completion:

    PUT _inference/completion/ali-chat
    {
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "api_key": "xxxxxxxxxxxxxxxxxx",
        "service_id": "ops-qwen-turbo",
        "workspace": "default"
      }
    }

Response:

    {
      "inference_id": "ali-chat",
      "task_type": "completion",
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "service_id": "ops-qwen-turbo",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default",
        "rate_limit": {
          "requests_per_minute": 1000
        }
      },
      "task_settings": {}
    }

Using the configured endpoint, send a POST request to generate a completion:

    POST _inference/completion/ali-chat
    {
      "input": ["Where is the capital of Henan?"]
    }

Returns:

    {
      "completion": [
        {
          "result": "The capital of Henan is Zhengzhou."
        }
      ]
    }

Uniquely for this Elastic Inference API integration with Alibaba, chat history can be included in the input. In this example, we’ve included the previous question and response and added a follow-up question: “What fun things are there?”

    POST _inference/completion/ali-chat
    {
      "input": [
        "Where is the capital of Henan?",
        "The capital of Henan is Zhengzhou.",
        "What fun things are there?"
      ]
    }

The response clearly takes the history into account:

    {
      "completion": [
        {
          "result": "I'm sorry, I do not have enough information to provide a specific list of fun things to do in Zhengzhou, Henan. I can only tell you that Zhengzhou is the capital of Henan province. To find out about fun activities, attractions, or events in Zhengzhou, I would suggest researching local tourism websites, asking locals, or checking out travel guides for the area."
        }
      ]
    }

In future updates, we plan to allow users to explicitly include chat history, improving ease of use.
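Until explicit chat history is supported, the input list has to be assembled by hand. The sketch below assumes, based only on the example above, that the list alternates user message and model reply and ends with the new question. The helper names `build_chat_input` and `extract_result` are hypothetical, and no request is sent.

```python
def build_chat_input(history, question):
    """Flatten (user_message, assistant_reply) pairs and append the new question.

    Assumes the alternating order seen in the example request above.
    """
    messages = []
    for user_message, assistant_reply in history:
        messages.append(user_message)
        messages.append(assistant_reply)
    messages.append(question)
    return {"input": messages}


def extract_result(response):
    """Return the generated text from a completion response."""
    return response["completion"][0]["result"]


history = [("Where is the capital of Henan?", "The capital of Henan is Zhengzhou.")]
chat_body = build_chat_input(history, "What fun things are there?")
print(chat_body)

# Response shape copied from the first completion example.
first_response = {"completion": [{"result": "The capital of Henan is Zhengzhou."}]}
print(extract_result(first_response))
```

Appending each extracted result to the history before the next call keeps the conversation state on the client side.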

Moving on to our next task type, rerank. Reranking helps re-order search results for improved relevance, using Alibaba's powerful models. If you want to read more about this concept, have a look at this blog on Elastic Search Labs.

Configure the reranking inference service:

    PUT _inference/rerank/ali-rank
    {
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "api_key": "xxxxxxxxxxxxxxxxxx",
        "service_id": "ops-bge-reranker-larger",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default"
      }
    }

Response:

    {
      "inference_id": "ali-rank",
      "task_type": "rerank",
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "service_id": "ops-bge-reranker-larger",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default",
        "rate_limit": {
          "requests_per_minute": 1000
        }
      },
      "task_settings": {}
    }

The rerank interface does not require much configuration through task_settings. Send a POST request with your query and the documents to rerank. The response returns the relevance scores, ordered with the most relevant first, along with the index of each document in the input array.
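Because each result carries only an index and a score, the caller has to map the indices back to the original documents. Here is a minimal sketch of that step; the function name `documents_by_relevance` is hypothetical, and the three short documents and their scores are made-up sample data, not output from the service.

```python
def documents_by_relevance(documents, response):
    """Pair each reranked entry with its source document, best match first."""
    # Sort defensively in case the caller passes results in arbitrary order.
    ranked = sorted(
        response["rerank"], key=lambda item: item["relevance_score"], reverse=True
    )
    return [(documents[item["index"]], item["relevance_score"]) for item in ranked]


# Made-up sample data in the same shape as the rerank request and response.
documents = [
    "Carson City is the capital city of Nevada.",
    "Capital punishment is legal in some US states.",
    "Washington, D.C. is the capital of the United States.",
]
sample_response = {
    "rerank": [
        {"index": 2, "relevance_score": 0.99},
        {"index": 0, "relevance_score": 0.01},
        {"index": 1, "relevance_score": 0.001},
    ]
}

ranked = documents_by_relevance(documents, sample_response)
for text, score in ranked:
    print(f"{score:.3f}  {text}")
```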

    POST _inference/rerank/ali-rank
    {
      "query": "What is the capital of the USA?",
      "input": [
        "Carson City is the capital city of the American state of Nevada. At the 2010 United States Census, Carson City had a population of 55,274.",
        "Capital punishment (the death penalty) has existed in the United States since before the United States was a country. As of 2017, capital punishment is legal in 30 of the 50 states.",
        "The Commonwealth of the Northern Mariana Islands is a group of islands in the Pacific Ocean that are a political division controlled by the United States. Its capital is Saipan.",
        "Washington, D.C. (also known as simply Washington or D.C., and officially as the District of Columbia) is the capital of the United States. It is a federal district.",
        "Charlotte Amalie is the capital and largest city of the United States Virgin Islands. It has about 20,000 people. The city is on the island of Saint Thomas.",
        "North Dakota is a state in the United States. 672,591 people lived in North Dakota in the year 2010. The capital and seat of government is Bismarck."
      ]
    }

Response:

    {
      "rerank": [
        {
          "index": 3,
          "relevance_score": 0.9998832
        },
        {
          "index": 4,
          "relevance_score": 0.008847355
        },
        {
          "index": 5,
          "relevance_score": 0.0026626128
        },
        {
          "index": 0,
          "relevance_score": 0.00068250194
        },
        {
          "index": 2,
          "relevance_score": 0.00019716943
        },
        {
          "index": 1,
          "relevance_score": 0.00011591934
        }
      ]
    }

Alibaba provides a model specifically for sparse embeddings. We will use ops-text-sparse-embedding-001 for our example.

    PUT _inference/sparse_embedding/ali-sparse-embedding
    {
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "api_key": "xxxxxxxxxxxxxxxxxx",
        "service_id": "ops-text-sparse-embedding-001",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default"
      }
    }

Response:

    {
      "inference_id": "ali-sparse-embedding",
      "task_type": "sparse_embedding",
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "service_id": "ops-text-sparse-embedding-001",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default",
        "rate_limit": {
          "requests_per_minute": 1000
        }
      },
      "task_settings": {}
    }

The sparse embedding task has task_settings for:

input_type - either ingest or search

return_token - if true, the response includes the token text; otherwise each token is identified by a number
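The two task settings above can be checked on the client before a request is sent. This is a minimal sketch under stated assumptions: the helper names and the default values (`ingest`, `False`) are chosen for illustration and are not documented defaults of the service. The sample response reuses the token weights shown in this post.

```python
VALID_INPUT_TYPES = ("ingest", "search")


def sparse_embedding_request(text, input_type="ingest", return_token=False):
    """Build a sparse_embedding request body with validated task_settings.

    The default argument values are assumptions made for this sketch.
    """
    if input_type not in VALID_INPUT_TYPES:
        raise ValueError(f"input_type must be one of {VALID_INPUT_TYPES}")
    return {
        "input": text,
        "task_settings": {"input_type": input_type, "return_token": bool(return_token)},
    }


def top_tokens(response, limit=5):
    """Return the highest-weighted (token, weight) pairs from a response."""
    embedding = response["sparse_embedding"][0]["embedding"]
    return sorted(embedding.items(), key=lambda pair: pair[1], reverse=True)[:limit]


sparse_body = sparse_embedding_request("Hello world", input_type="search", return_token=True)
print(sparse_body)

# Response shape with return_token set to true, as in this post.
sparse_response = {
    "sparse_embedding": [
        {"is_truncated": False, "embedding": {"hello": 0.27783203, "world": 0.28222656}}
    ]
}
print(top_tokens(sparse_response))
```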

    POST _inference/sparse_embedding/ali-sparse-embedding
    {
      "input": "Hello world",
      "task_settings": {
        "input_type": "search",
        "return_token": true
      }
    }

Response:

    {
      "sparse_embedding": [
        {
          "is_truncated": false,
          "embedding": {
            "hello": 0.27783203,
            "world": 0.28222656
          }
        }
      ]
    }

With return_token set to false:

    {
      "sparse_embedding": [
        {
          "is_truncated": false,
          "embedding": {
            "8999": 0.28222656,
            "35378": 0.27783203
          }
        }
      ]
    }

Alibaba also offers text embedding models for different tasks.

The text embedding task has one task setting:

input_type - either ingest or search
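One way to read the input_type setting is that documents are embedded with `ingest` at index time and queries with `search` at query time, and the resulting vectors are then compared using the similarity the endpoint reports (dot_product in this post). The sketch below illustrates that flow with tiny made-up 3-dimensional vectors standing in for real 1536-dimensional embeddings; all helper names are hypothetical and nothing is sent to a cluster.

```python
def embedding_request(text, input_type):
    """Build a text_embedding request body with the input_type task setting."""
    if input_type not in ("ingest", "search"):
        raise ValueError("input_type must be 'ingest' or 'search'")
    return {"input": text, "task_settings": {"input_type": input_type}}


def dot_product(a, b):
    """Dot product of two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    return sum(x * y for x, y in zip(a, b))


def rank_by_dot_product(query_vector, doc_vectors):
    """Return (doc_id, score) pairs sorted by descending dot product."""
    scores = {doc_id: dot_product(query_vector, vec) for doc_id, vec in doc_vectors.items()}
    return sorted(scores.items(), key=lambda pair: pair[1], reverse=True)


doc_body = embedding_request("Elasticsearch is a search engine.", "ingest")
query_body = embedding_request("What is Elastic?", "search")

# Made-up toy vectors; real embeddings would come from the inference endpoint.
query_vector = [1.0, 0.0, 0.5]
doc_vectors = {"a": [0.9, 0.1, 0.4], "b": [0.0, 1.0, 0.0], "c": [0.5, 0.5, 0.5]}
ranking = rank_by_dot_product(query_vector, doc_vectors)
print([doc_id for doc_id, _ in ranking])
```

In practice Elasticsearch performs this comparison itself when the vectors are stored in a dense vector or semantic_text field; the manual ranking here only shows what the similarity setting means.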

    PUT _inference/text_embedding/ali-embeddings
    {
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "api_key": "xxxxxxxxxxxxxxxxxx",
        "service_id": "ops-text-embedding-001",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default"
      }
    }

Response:

    {
      "inference_id": "ali-embeddings",
      "task_type": "text_embedding",
      "service": "alibabacloud-ai-search",
      "service_settings": {
        "service_id": "ops-text-embedding-001",
        "host": "xxxxx.platform-cn-shanghai.opensearch.aliyuncs.com",
        "workspace": "default",
        "rate_limit": {
          "requests_per_minute": 1000
        },
        "similarity": "dot_product",
        "dimensions": 1536
      },
      "task_settings": {}
    }

Send a POST request to generate a text embedding:

    POST _inference/text_embedding/ali-embeddings
    {
      "input": "Hello world"
    }

Response:

    {
      "text_embedding": [
        {
          "embedding": [
            -0.017036675,
            0.07038724,
            0.044685286,
            0.0064531807,
            0.013290042,
            0.011183944,
            -0.0020014185,
            -0.009508779,
            …

Whether you're using Elasticsearch for implementing hybrid search, semantic reranking, or enhancing RAG use cases with summarization, the connection to Alibaba Cloud's AI Services opens up a new world of possibilities for Elasticsearch developers. Thanks again, Alibaba team, for the contribution!

To dive deeper, try this Jupyter notebook with an end-to-end example of using the Inference API with Alibaba Cloud AI Search.

Read Alibaba Cloud's announcement about AI-powered search innovations with Elasticsearch.

Users can start using this integration in Elasticsearch Serverless environments today, and it will be available in an upcoming version of Elasticsearch.

Happy searching!

Ready to try this out on your own? Start a free trial.

Elasticsearch has integrations for tools from LangChain, Cohere and more. Join our advanced semantic search webinar to build your next GenAI app!
