Ray Forward 2025 was the first large-scale developer event in China after Ray joined the PyTorch Foundation. At the event, Li Wei from the Alibaba Cloud AnalyticDB team presented "AnalyticDB Ray: Data+AI Architecture," focusing on the elasticity of AnalyticDB Data+AI and its cloud-native architecture. He also introduced the enhancements that AnalyticDB Ray adds on top of Ray and its scheduling technology for multimodal data processing.

AnalyticDB for MySQL is a real-time data lakehouse. It is highly compatible with MySQL, supports millisecond-level updates and sub-second queries, and works with compute engines such as Ray and Spark. Whether the data is unstructured or semi-structured data in a data lake or structured data in a database, you can use AnalyticDB for MySQL to run high-throughput offline processing and high-performance online analysis at the same time. The result combines the scale of a data lake with the experience of a database, and helps enterprises build data analytics platforms that reduce costs and increase efficiency.

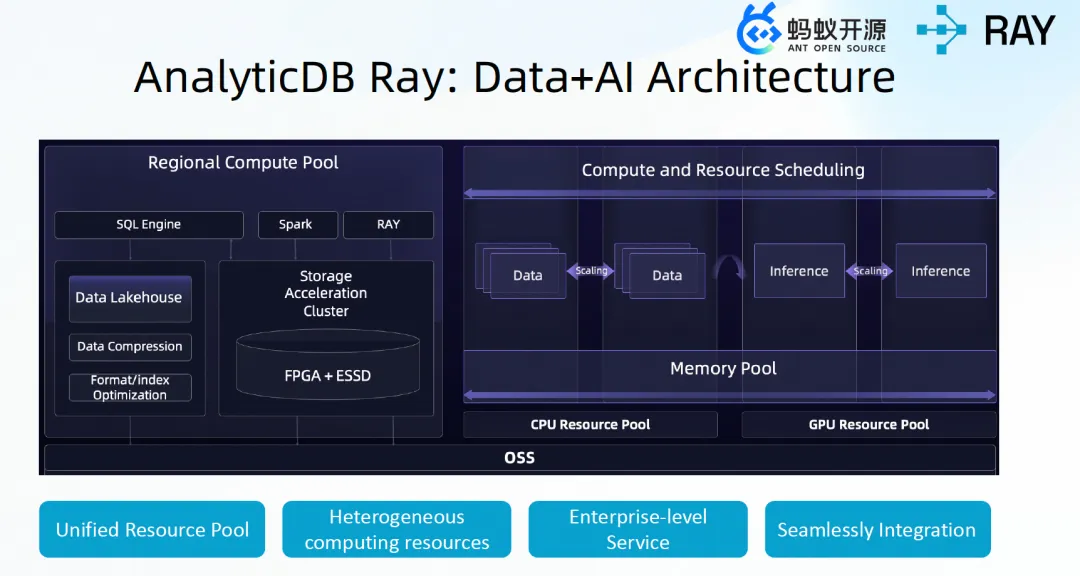

On the storage side, the foundation is built on Object Storage Service, and a storage accelerator that combines FPGA and SSD hardware with software improves storage performance. On the compute side, a unified resource pool of CPUs and GPUs supports mixed deployment of different workloads and resource elasticity. AnalyticDB Ray inherits the advantages of this cloud-native Data+AI architecture, such as automatic elasticity and high resource utilization.

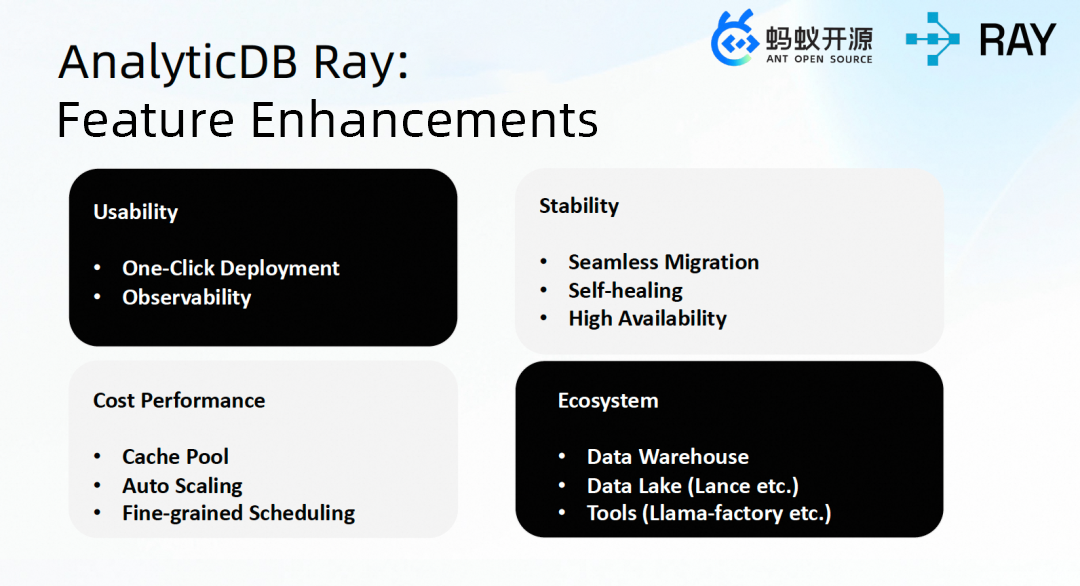

AnalyticDB Ray is a fully managed Ray service launched by AnalyticDB for MySQL. Built on open-source Ray, the service is optimized and enhanced along four core dimensions: ease of use, stability, cost-effectiveness, and ecosystem. For ease of use, it supports one-click deployment and end-to-end observability, which significantly lowers the barrier to entry. For stability, it supports seamless rolling upgrades of clusters, self-healing of abnormal nodes, and a high-availability architecture with primary and backup Head nodes. For cost control, it reduces costs through automatic scaling and fine-grained scheduling that is aware of the actual resource utilization of nodes. In addition, an elastic cache pool lets Ray nodes scale within seconds, which greatly improves elasticity efficiency. On the ecosystem side, the service is deeply integrated with the AnalyticDB for MySQL data lakehouse platform, connects to lake storage formats such as Lance, and integrates with mainstream AI tools such as LLamaFactory. This helps enterprises build an integrated Data+AI architecture.

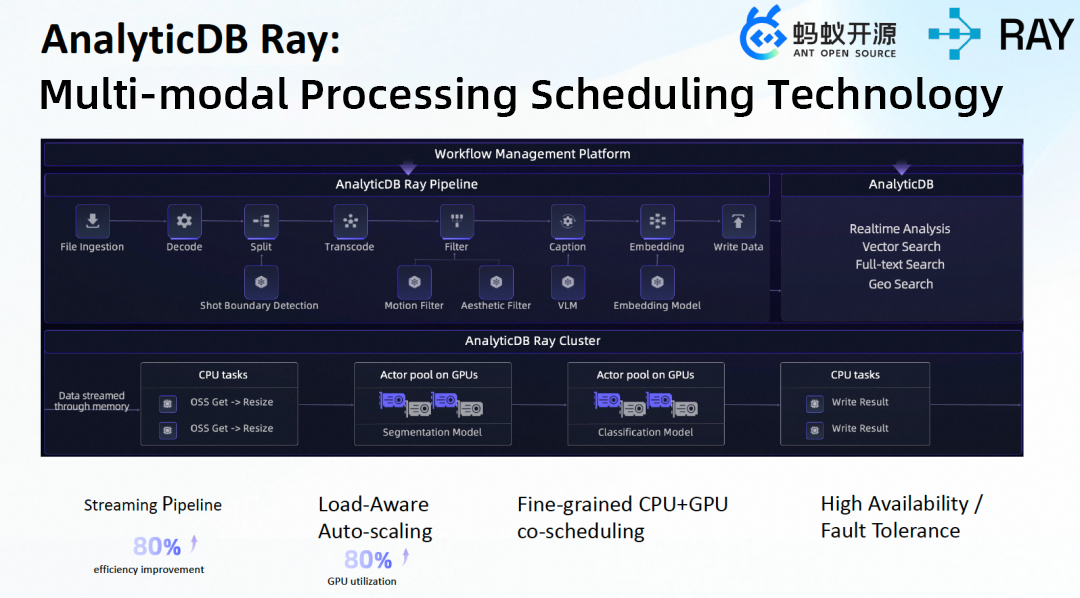

In scenarios such as autonomous driving and embodied intelligence, petabyte-scale multimodal clip data needs to be processed. The data comes from multiple sources, such as videos, point clouds, radars, GPS, and vehicle control signal lights. Processing consists of multiple stages, such as decoding, filtering, and annotation. The resource profiles of the models involved vary greatly, and processing a single video usually uses CPUs and GPUs at the same time. As a result, traditional data processing solutions suffer from complex environment configuration, low processing efficiency (batch-based offline processing, with jobs split by processing stage), inflexible resource scheduling, and complex multimodal data management.

AnalyticDB Ray uses a self-developed Pipeline stream computing engine with concurrency-aware adaptive scheduling. Its built-in high-performance video processing operators are developed on Ray native APIs. It schedules heterogeneous CPU and GPU resources at a fine granularity based on profiles, and stages exchange intermediate data through Ray Object Store memory. Overall performance improves by 1.8 times, and GPU utilization exceeds 80%.
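The difference between stage-by-stage batch processing and streaming can be illustrated with a minimal, standard-library sketch. It is not the AnalyticDB Ray Pipeline engine: the `stage` helper, the clip dictionaries, and the stage functions are hypothetical, and plain Python generators stand in for the engine. The point is only that each clip flows through decode, filter, and annotate before the next clip is decoded, so no stage has to wait for the whole previous stage to finish.

```python
from typing import Callable, Dict, Iterable, Iterator, List, Optional

Clip = Dict[str, object]
events: List[str] = []  # order in which stages touch each clip


def stage(name: str,
          fn: Callable[[Clip], Optional[Clip]],
          upstream: Iterable[Clip]) -> Iterator[Clip]:
    """Lazily apply one stage to each clip pulled from the upstream stage."""
    for clip in upstream:
        events.append(f"{name}:{clip['id']}")
        out = fn(clip)
        if out is not None:  # a stage drops a clip by returning None
            yield out


def run_streaming(clips: List[Clip]) -> List[Clip]:
    """Chain decode -> filter -> annotate without materializing any stage."""
    decoded = stage("decode", lambda c: {**c, "decoded": True}, clips)
    kept = stage("filter", lambda c: c if c["frames"] > 0 else None, decoded)
    annotated = stage("annotate", lambda c: {**c, "label": "ok"}, kept)
    return list(annotated)


# Hypothetical input: clip1 has no frames and is dropped by the filter stage.
clips = [
    {"id": "clip0", "frames": 30},
    {"id": "clip1", "frames": 0},
    {"id": "clip2", "frames": 12},
]
results = run_streaming(clips)
```

After the run, `events` shows `clip0` passing through all three stages before `clip1` is decoded, which is the interleaving that a batch job split by stage cannot provide.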

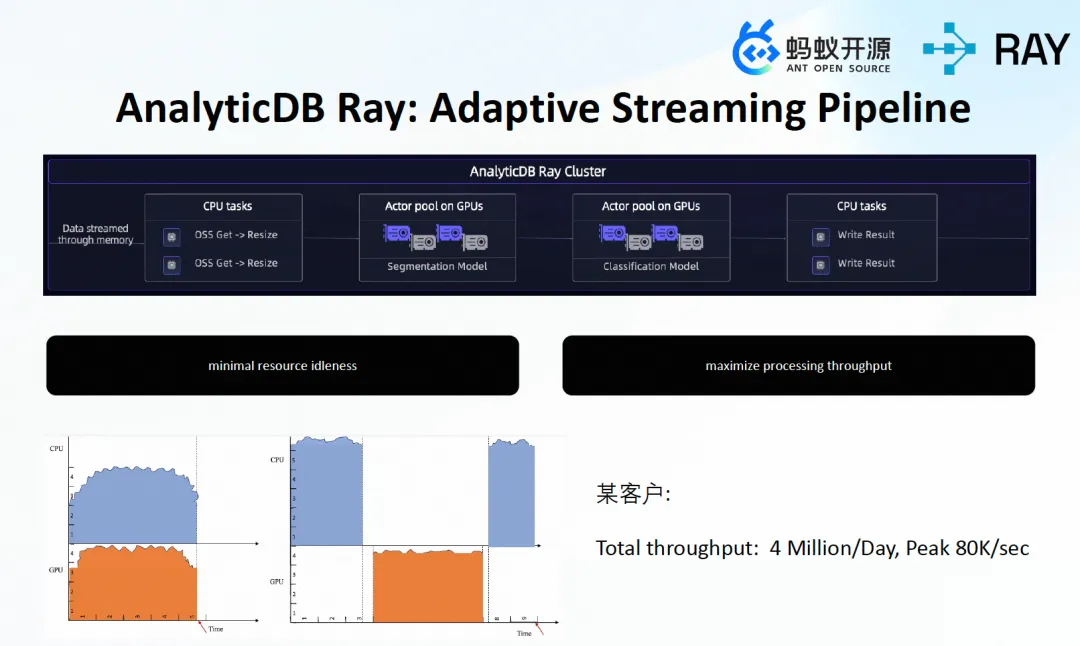

In contrast to traditional scheduling and its heterogeneous resource overhead, AnalyticDB Ray adopts adaptive stream computing, so CPU and GPU tasks in different stages can run in parallel. Ray Actor Pools separate CPU and GPU workloads so that each can be scheduled and scaled independently. Task scheduling latency is 5 ms to 20 ms, tasks do not wait at run time, resource idleness is minimal, and processing throughput is maximized. In one AnalyticDB customer scenario, nearly 4 million tasks are scheduled per day, with a peak of nearly 80,000 per second.
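The idea of separate, independently sized pools for CPU and GPU work can be sketched with the standard library. This is an analogy under stated assumptions, not Ray code: two `ThreadPoolExecutor` instances stand in for a CPU actor pool and a GPU actor pool, and `cpu_decode`, `gpu_infer`, and the pool sizes are hypothetical. Each decoded clip is handed to the GPU pool as soon as it is ready, so both pools are busy at the same time.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Dict, List


def cpu_decode(clip_id: int) -> Dict[str, object]:
    """Stand-in for a CPU-bound decode step (hypothetical)."""
    return {"id": clip_id, "frames": [clip_id * 10 + i for i in range(3)]}


def gpu_infer(decoded: Dict[str, object]) -> Dict[str, int]:
    """Stand-in for a GPU-bound inference step (hypothetical)."""
    return {"id": decoded["id"], "score": sum(decoded["frames"])}


def run(clip_ids: List[int], cpu_workers: int = 4, gpu_workers: int = 2) -> List[Dict[str, int]]:
    # Two pools with independent sizes, mirroring separate CPU and GPU pools.
    with ThreadPoolExecutor(max_workers=cpu_workers) as cpu_pool, \
         ThreadPoolExecutor(max_workers=gpu_workers) as gpu_pool:
        decode_futures = [cpu_pool.submit(cpu_decode, c) for c in clip_ids]
        # Hand each clip to the GPU pool as soon as its decode finishes,
        # instead of waiting for the whole decode stage to complete.
        infer_futures = [
            gpu_pool.submit(gpu_infer, f.result())
            for f in as_completed(decode_futures)
        ]
        results = [f.result() for f in infer_futures]
    return sorted(results, key=lambda r: r["id"])


scores = run([0, 1, 2])
```

Because the pools are sized separately, the CPU side can be scaled out for decode-heavy inputs without reserving more GPU slots, which is the property the paragraph above attributes to separating the workloads.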

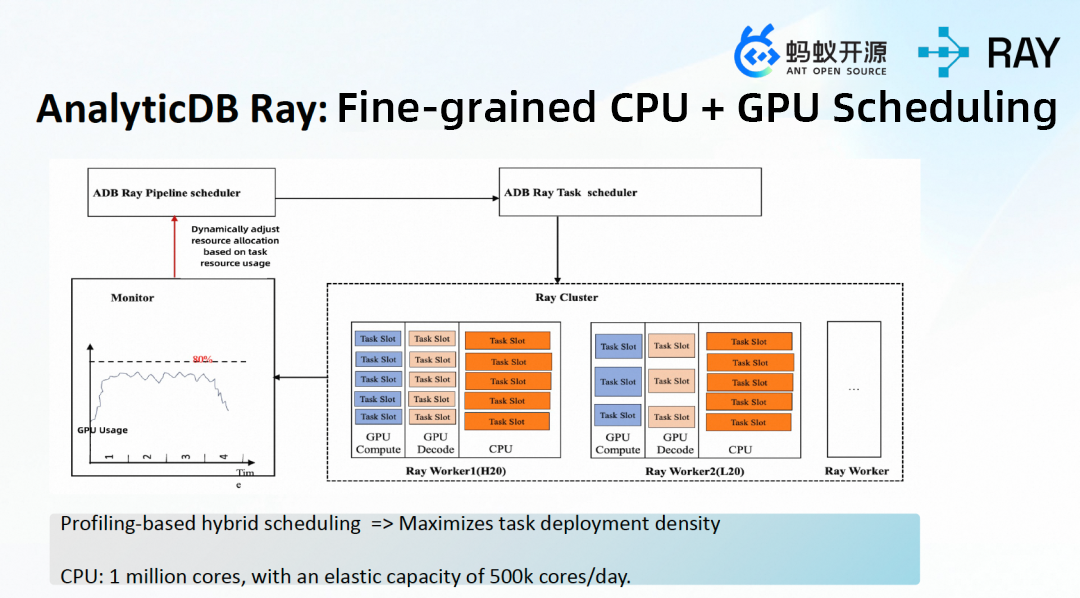

For fine-grained resource scheduling, AnalyticDB uses a self-developed heterogeneous resource pool to schedule CPUs and GPUs together based on the job profile of each stage. The built-in Monitor module tracks the throughput and resource overhead of each stage in real time, and dynamically calculates and selects the most suitable resource specifications. During streaming processing, each stage dynamically requests task slots for its computation, and the slots are scheduled to suitable Ray Workers. On a single Ray Worker node, AnalyticDB distinguishes three resource types: GPU Compute, GPU Decode, and CPU. Each job requests only the resource types and amounts it needs. This maximizes job deployment density on the node, keeps resource requests closely aligned with actual job usage, and enables dynamic elasticity.
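A minimal sketch of slot placement across the three resource types is shown below. It assumes a simple first-fit policy, which the source does not specify. The `Worker` class, the `place` function, the stage profiles, and the capacities are all hypothetical illustrations, not the AnalyticDB scheduler.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Worker:
    """One Ray Worker node with free capacity per resource type."""
    name: str
    free: Dict[str, float]

    def fits(self, request: Dict[str, float]) -> bool:
        return all(self.free.get(k, 0.0) >= v for k, v in request.items())

    def allocate(self, request: Dict[str, float]) -> None:
        for k, v in request.items():
            self.free[k] -= v


def place(request: Dict[str, float], workers: List[Worker]) -> Optional[str]:
    """First-fit placement: return the chosen worker name, or None if full."""
    for worker in workers:
        if worker.fits(request):
            worker.allocate(request)
            return worker.name
    return None


# Hypothetical per-stage profiles; each stage requests only what it uses.
PROFILES = {
    "decode": {"cpu": 1.0, "gpu_decode": 0.25},
    "infer": {"cpu": 0.5, "gpu_compute": 0.5},
}

workers = [
    Worker("w0", {"cpu": 4.0, "gpu_compute": 1.0, "gpu_decode": 1.0}),
    Worker("w1", {"cpu": 4.0, "gpu_compute": 1.0, "gpu_decode": 1.0}),
]

placements = [
    place(PROFILES["infer"], workers),   # uses half of w0's GPU compute
    place(PROFILES["infer"], workers),   # uses the other half of w0
    place(PROFILES["infer"], workers),   # w0 GPU compute is full, goes to w1
    place(PROFILES["decode"], workers),  # w0 still has CPU and GPU decode
]
```

Tracking GPU Compute and GPU Decode separately is what lets the decode slot land on `w0` even though its GPU compute is fully allocated. A single "GPU" counter would have sent it to another node.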

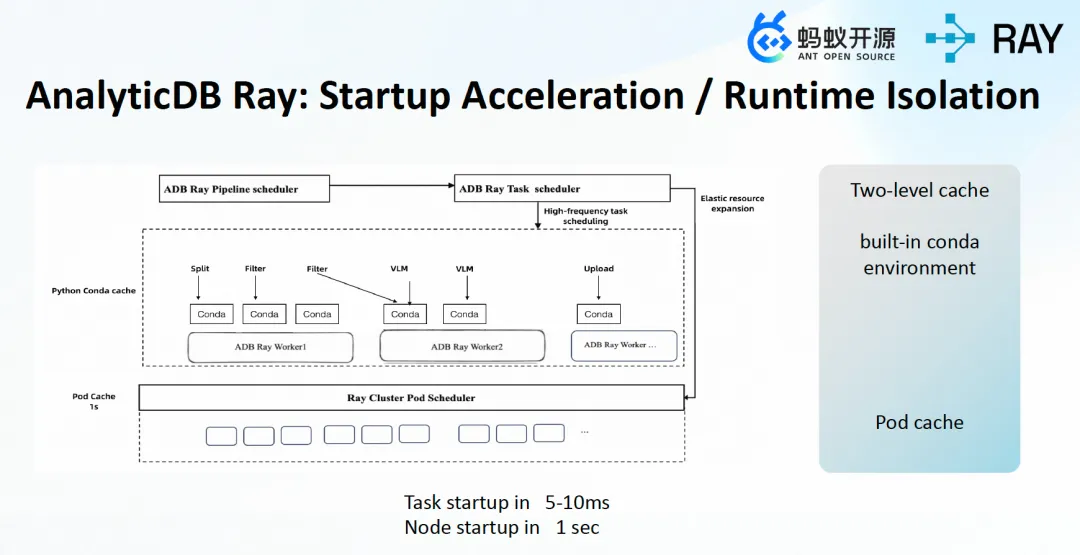

For startup performance and runtime fencing, AnalyticDB uses a two-level cache to start jobs in 5 ms to 10 ms. Ray Workers ship with built-in conda environments. During elastic startup, the system reuses the pod cache of the AnalyticDB resource pool and pre-loads network interface cards and images, which brings node scale-out down to seconds. In the Pipeline engine of AnalyticDB Ray, different operators run side by side in the same Ray Workers. Because their dependencies, such as Python packages, can conflict at run time, AnalyticDB Ray implements runtime fencing by running operators in separate conda environments that are pre-built into the images.
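One way to express per-operator environment isolation in open-source Ray is the `runtime_env` option, which accepts the name of a conda environment already installed on the node. The sketch below only builds that dictionary and does not import Ray. The operator names and environment names are hypothetical, and the source does not state that AnalyticDB Ray uses this exact mechanism.

```python
from typing import Dict

# Hypothetical mapping from operator name to a conda environment that is
# assumed to be pre-built into the worker image.
OPERATOR_ENVS: Dict[str, str] = {
    "video_decode": "decode-env",
    "object_detect": "detect-env",
}


def runtime_env_for(operator: str) -> Dict[str, str]:
    """Return a Ray-style runtime_env dict selecting the operator's conda env."""
    try:
        return {"conda": OPERATOR_ENVS[operator]}
    except KeyError:
        raise ValueError(f"no conda environment registered for {operator!r}") from None


decode_env = runtime_env_for("video_decode")
detect_env = runtime_env_for("object_detect")
```

With Ray installed, such a dictionary could be passed as `runtime_env=` in the `.options(...)` call of a remote function or actor, so that two operators with conflicting Python packages run in different environments on the same node.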

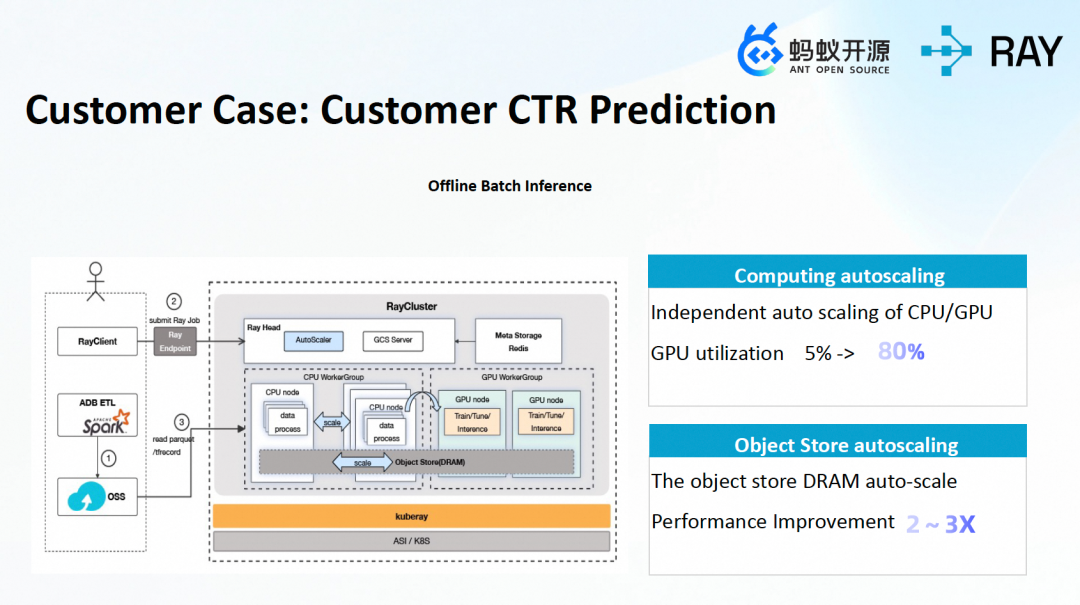

One customer in the business intelligence field uses the service for advertising recommendation, specifically Click-Through Rate (CTR) prediction and audience mining, where each product needs to be matched to its target audience. Data is generated at 12:00 for offline batch inference, and the prediction results are provided to the business side. The customer uses the Spark engine of AnalyticDB to perform extract, transform, and load (ETL) on data in AnalyticDB data lake storage, and then uses AnalyticDB Ray to run training and offline batch inference on the ETL output. By automatically scaling out the object store horizontally and tuning the TensorFlow (TF) batch size, model hierarchy, and parameters, the customer raised GPU utilization from 5% to 80% and improved overall performance by 2 to 3 times.

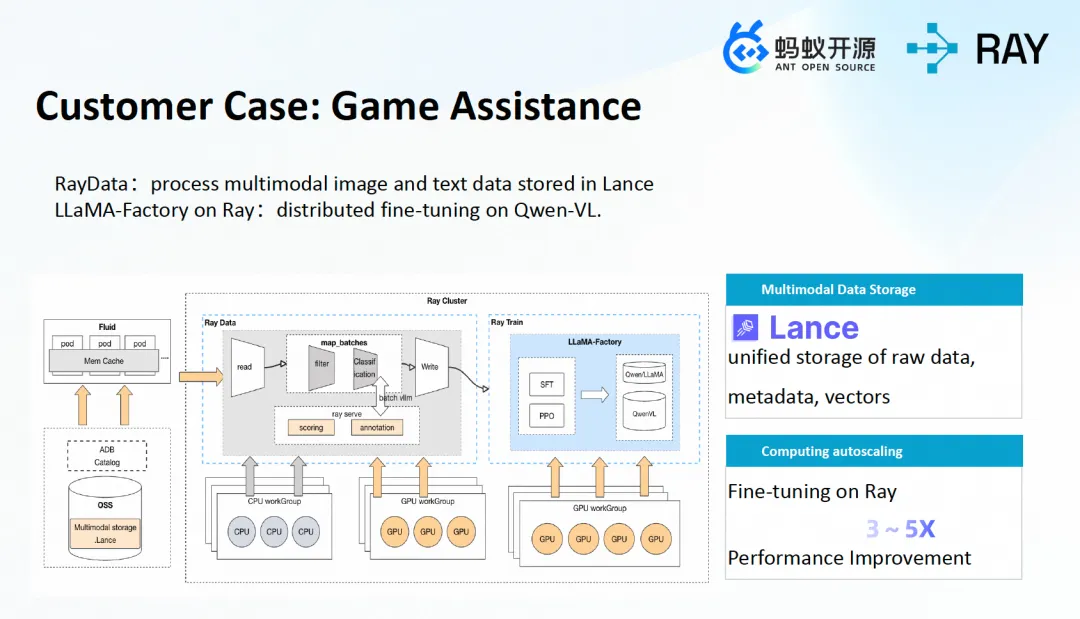

Another customer runs a game community that provides game distribution and interaction services for players and developers. On AnalyticDB Ray, the customer first uses Ray Data to read source data in different formats, loads and transforms it efficiently in a distributed manner, and stores it uniformly in the Lance format. Because Lance stores image binaries and structured data together, the customer gets better data consistency and versioning and fewer remote I/O operations. With the distributed Lance data tagging and Delta Update implemented by AnalyticDB Ray, performance improved by 193% compared to Parquet. Finally, the customer performs distributed fine-tuning of the Qwen-VL multimodal model through LLamaFactory, which is already integrated into AnalyticDB Ray.
