By Kan Junbao (Junbao), Alibaba Cloud Senior Technical Expert

This series of articles on cloud-native storage explains the concepts, features, requirements, principles, usage, and cases of cloud-native storage. It aims to explore the new opportunities and challenges of cloud-native storage technology. This article is the second in the series and explains the concepts of container storage. If you are not familiar with this concept, I suggest that you read the first article of this series, "Cloud-Native Storage: The Cornerstone of Cloud-Native Applications."

Docker storage volumes and Kubernetes storage volumes are essential for cloud-native storage.

This container service is widely used because it provides an organization format for container images during container runtime. Multiple containers can share the same image resource, or more precisely, the same image layer, on the same node by using the container image reuse technology. This means image files are not copied or loaded each time a container is started. This reduces the storage space usage of hosts and improves the container startup efficiency.

The same image resource can be shared by different running containers, and data can be shared by different images. This improves the storage efficiency of nodes. An image is divided into multiple data layers, and the data of each layer is superimposed and overwritten. This structure enables image data sharing.

Each layer of a container image is read-only so that image data can be shared by multiple containers. In practice, when you start a container by using an image, you can read and write this image in the container. How is this done?

When a container uses an image, the container adds a read/write layer at the top of all image layers. Each running container mounts a read/write layer on top of all layers of the current image. All operations on the container are completed at this layer. When the container is released, the read/write layer is also released.

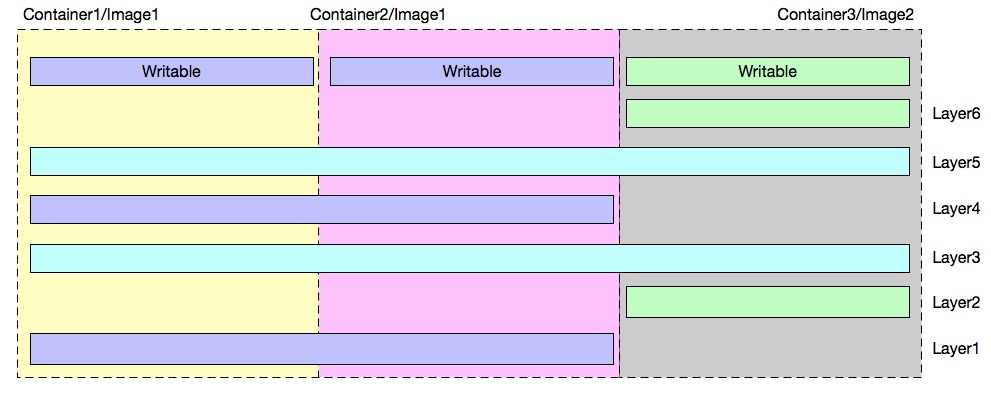

As shown in the preceding figure, three containers exist on the node. Container 1 and Container 2 run based on Image 1, and Container 3 runs based on Image 2.

The image storage layers are explained as follows:

The two images share Layer 3 and Layer 5.

Container storage is explained as follows:

Data sharing based on the layered structure of container images can significantly reduce the host storage usage by the container service.

In the container image structure with the read/write layer, data is read and written in the following way:

When data is read and several layers contain the same file, the copy at the upper layer hides the copy at the lower layer, so the container always sees the uppermost version.
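The read rule can be modeled in a few lines of Python. This is only a toy sketch, not how a storage driver is implemented: each layer is a plain dictionary that maps a path to file content, and the hypothetical `read_file` helper searches from the top layer down and returns the first match. The layer contents are made up for illustration.

```python
from typing import Optional

# Each layer maps a file path to its content. Index 0 is the lowest layer.
# Paths and contents are invented for this illustration.
image_layers = [
    {"/etc/os-release": "base", "/app/config": "default"},  # lowest layer
    {"/app/config": "tuned"},                               # upper layer
]

def read_file(layers: list[dict[str, str]], path: str) -> Optional[str]:
    """Return the content from the uppermost layer that contains `path`."""
    for layer in reversed(layers):  # search from the top layer down
        if path in layer:
            return layer[path]      # the upper copy hides any lower copy
    return None                     # the path exists in no layer

print(read_file(image_layers, "/app/config"))      # tuned
print(read_file(image_layers, "/etc/os-release"))  # base
```

The file that exists in both layers resolves to the upper copy, while a file that exists only in the lower layer is still visible.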

Data is written at the uppermost read/write layer when you modify a file in a container. The technologies involved are copy-on-write (CoW) and allocate-on-demand.

CoW indicates that data is copied only when it is written. It is applicable to scenarios where existing files are modified. CoW allows all containers to share the file system of an image and read all data from this image. When you write a file, this file is copied to the uppermost read/write layer of the image for modification. For all the containers that share the same image, each container writes the file copies instead of the original files of the image. When multiple containers write the same file, each container creates a copy of this file in its file system and modifies this copy independently.
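To make the CoW behavior concrete, here is a small Python sketch that uses `collections.ChainMap` as a stand-in for a layered file system. It is an analogy under simplifying assumptions (files are strings, layers are dictionaries, and `start_container` is a hypothetical helper), not a description of any real storage driver. `ChainMap` reads through all mappings in order and sends every write to the first mapping, which plays the role of the read/write layer.

```python
from collections import ChainMap

# Read-only image layers, listed from top to bottom (toy data).
image = [
    {"/app/config": "tuned"},
    {"/etc/os-release": "base", "/app/config": "default"},
]

def start_container(image_layers):
    """Give a container its own empty read/write layer on top of the shared image."""
    return ChainMap({}, *image_layers)

c1 = start_container(image)
c2 = start_container(image)

# Modify a file: read it through the layers, then write the changed copy.
# The write lands in the container's own read/write layer only.
c1["/app/config"] = c1["/app/config"] + "+c1"
c2["/app/config"] = c2["/app/config"] + "+c2"

print(c1["/app/config"])        # tuned+c1
print(c2["/app/config"])        # tuned+c2
print(image[0]["/app/config"])  # tuned (the shared image layer is untouched)
print(c1.maps[0])               # {'/app/config': 'tuned+c1'}
```

Both containers still share the same image dictionaries, and each one holds only the files it has changed.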

Allocate-on-demand means that storage space is allocated only when new files are written. This improves the utilization of storage resources. For example, disk space is allocated to a container only when new files are written to this container. Disk space is not pre-allocated during container startup.

Storage drivers are used to manage container data at each layer to enable image sharing among containers. Storage drivers support read and write operations on files. The storage drivers of containers store and manage data at the read/write layer. Common storage drivers include:

The following section explains how AUFS works.

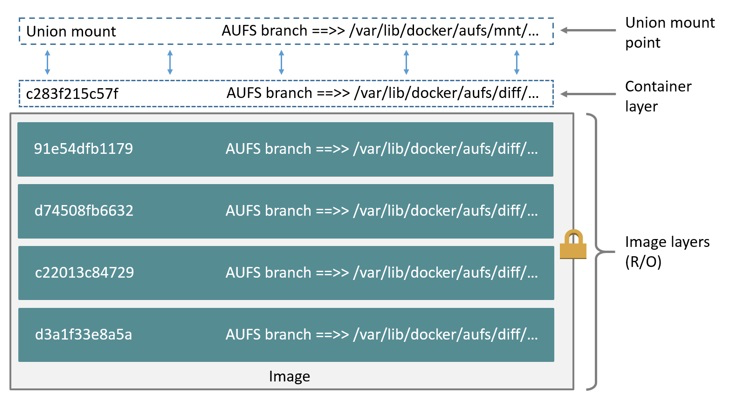

AUFS is a type of union file system (UFS) and a file-level storage driver.

AUFS is a layered file system able to transparently superimpose one or more existing file systems to form a single layer. AUFS can mount different directories to the file systems under the same virtual file system.

You can superimpose and modify files layer by layer. Only the file system at the uppermost layer is writable, whereas the file systems at lower layers are read-only.

When you modify a file, AUFS creates a copy of this file and uses CoW to transfer this copy from the read-only layer to the writable layer for modification. The modified file is stored at the writable layer.

In Docker, the uppermost writable layer is the container layer created at runtime, and all the lower layers are image layers.

Any data read and write operations on applications that run inside a container are completed at the read/write layer of the container. The image layers and the read/write layer are mapped to the underlying structure, which is responsible for intra-container storage in the container's internal file system. A container data volume allows applications inside a container to interact with external storage. This volume is similar to an external storage device, like a USB flash drive.

Containers store data temporarily. The stored data is deleted when containers are released. After you mount external storage to a container file system by using a data volume, the application can reference external data or persistently store its generated data in the data volume. Therefore, container data volumes provide a method for data persistence.

Container storage consists of multiple read-only layers (image layers), a read/write layer, and external storage (data volume).

Container data volumes can be divided into single-node data volumes and cluster data volumes. A single-node data volume is a data volume that the container service mounts to a node. Docker volumes are typical single-node data volumes. Cluster data volumes provide cluster-level data volume orchestration capabilities. Kubernetes data volumes are typical cluster data volumes.

A Docker volume is a directory that can be used by multiple containers simultaneously. It is independent of the UFS and provides the following features:

Bind: You can directly mount host directories and files to containers.

Volume: You can enable this mode when you use third-party data volumes.

Tmpfs: This is a non-persistent volume type that stores data in host memory. Tmpfs data is lost when the container stops.

-v: src:dst:opts: This is only applicable to single-node data volumes.

Example:

$ docker run -d --name devtest -v /home:/data:ro,rslave nginx

$ docker run -d --name devtest --mount type=bind,source=/home,target=/data,readonly,bind-propagation=rslave nginx
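The two commands above express the same mount in the `-v` and `--mount` syntaxes. The following Python sketch shows how the fields line up. The `v_to_mount` helper is hypothetical and deliberately simplified: it assumes a source that starts with `/` is a host path, handles only `ro` and the propagation options, and rejects everything else (including anonymous volumes and options such as `z`).

```python
def v_to_mount(spec: str) -> str:
    """Translate a `-v src:dst[:opts]` value into an equivalent `--mount` value.

    Simplified sketch: only `ro` and propagation options are handled.
    """
    parts = spec.split(":")
    if len(parts) not in (2, 3):
        raise ValueError(f"expected src:dst[:opts], got {spec!r}")
    src, dst = parts[0], parts[1]
    opts = parts[2].split(",") if len(parts) == 3 else []

    # A source that starts with "/" is treated as a host path (bind mount);
    # anything else is treated as a named volume.
    mount_type = "bind" if src.startswith("/") else "volume"
    fields = [f"type={mount_type}", f"source={src}", f"target={dst}"]

    propagation = {"shared", "slave", "private", "rshared", "rslave", "rprivate"}
    for opt in opts:
        if opt == "ro":
            fields.append("readonly")
        elif opt in propagation:
            fields.append(f"bind-propagation={opt}")
        else:
            raise ValueError(f"option {opt!r} is not handled by this sketch")
    return ",".join(fields)

print(v_to_mount("/home:/data:ro,rslave"))
# type=bind,source=/home,target=/data,readonly,bind-propagation=rslave
```

The printed value matches the `--mount` argument used in the second command.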

$ docker run -d --name devtest -v /home:/data:z nginx

-v: src:dst:opts: This is only applicable to single-node data volumes.

Example:

$ docker run -d --name devtest -v myvol:/app:ro nginx

$ docker run -d --name devtest --mount source=myvol2,target=/app,readonly nginx

This section explains how to use Docker data volumes.

Anonymous data volumes: docker run -d -v /data3 nginx

By default, the directory /var/lib/docker/volumes/{volume-id}/_data is created on the host for mapping purposes.

Named data volumes: docker run -d -v nas1:/data3 nginx

If the nas1 volume cannot be found, a volume of the default type (local) is created.

docker run -d -v /test:/data nginx

If the host does not contain the /test directory, this directory is created by default.

A volume container is a running container. Other containers can inherit the data volumes mounted to this container. All the mounts of the container are reflected in the reference containers.

docker run -d --volumes-from nginx1 -v /test1:/data1 nginx

The preceding command is used to inherit all data volumes from a configured container, including custom volumes.

You can configure mount propagation for Docker volumes by adding a propagation option, such as shared or slave, to the mount definition.

Examples:

$ docker run -d -v /home:/data:shared nginx

The directories mounted to the /home directory of the host are available in the /data directory of the container, and vice versa.

$ docker run -d -v /home:/data:slave nginx

The directories mounted to the /home directory of the host are available in the /data directory of the container, but not vice versa.

Mount visibility in Volume mode:

Mount visibility in Bind mode: This is determined by host directories.

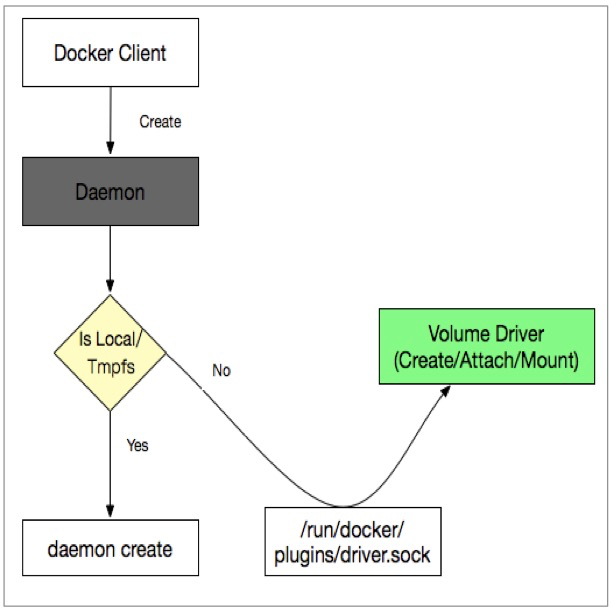

You can use Docker data volumes to mount the external storage of containers to container file systems. To allow containers to support more external storage classes, Docker supports the mounting of different types of storage services through storage plug-ins. The extension plug-ins are also known as volume drivers. You can develop a storage plug-in for each storage class.

The Docker daemon communicates with volume drivers in the following ways:

Example:

$ docker volume create --driver nas -o diskid="" -o host="10.46.225.247" -o path="/nas1" -o mode="" --name nas1

Docker volume drivers can be used to manage data volumes in single-node container environments or on the Swarm platform. Docker volume drivers are now used less often because Kubernetes has become increasingly popular. For more information about Docker volume drivers, see https://docs.docker.com/engine/extend/plugins_volume/

As mentioned above, data volumes can be used to persistently store container data. Below, we will discuss how to define storage for workloads or pods in a Kubernetes orchestration system during runtime. Kubernetes is a container orchestration system that is designed for the management and deployment of container applications throughout the cluster. Therefore, we need to define application storage in Kubernetes based on clusters. Kubernetes storage volumes define the relationship between applications and storage in a Kubernetes environment. The following sections explain related concepts.

A data volume defines the details of external storage and is embedded in a pod. In essence, a data volume records information about external storage for the Kubernetes system. When workloads need external storage, the system queries related information in the data volume and mounts external storage.

Common types of Kubernetes volumes include:

Examples of volume templates:

    volumes:
      - name: hostpath
        hostPath:
          path: /data
          type: Directory
    ---
    volumes:
      - name: disk-ssd
        persistentVolumeClaim:
          claimName: disk-ssd-web-0
      - name: default-token-krggw
        secret:
          defaultMode: 420
          secretName: default-token-krggw
    ---
    volumes:
      - name: "oss1"
        flexVolume:
          driver: "alicloud/oss"
          options:
            bucket: "docker"
            url: "oss-cn-hangzhou.aliyuncs.com"

Persistent volume claims (PVCs) are a type of abstract storage volume in Kubernetes and represent the data volume of a specific storage class. PVCs are designed to separate storage from application orchestration. A PVC object abstracts storage details and implements storage volume orchestration. This makes storage volume objects independent of application orchestration in Kubernetes and decouples applications from storage at the orchestration layer.

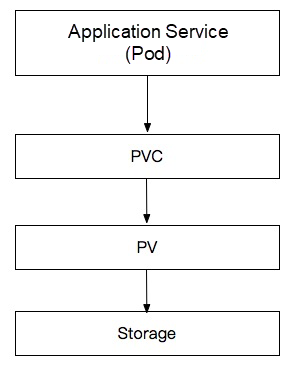

Persistent volumes (PVs) are a specific type of storage volume in Kubernetes. A PV object defines a specific storage class and a set of volume parameters. All information about the target storage service is stored in a PV object. Kubernetes references the PV-stored information for mounting.

The following figure shows the relationships among loads, PVC objects, and PV objects.

A PV object can be used to separate storage from application orchestration and mount data volumes. So what is the purpose of combining PVC and PV objects? Through the combined use of PVC and PV objects, Kubernetes implements a second level of abstraction for storage volumes. A PV object describes a specific storage class by defining storage details. Users do not want to study underlying details when they use storage services at the application layer, so defining specific storage services at the application orchestration layer is not user-friendly. To fix this problem, Kubernetes exposes only the parameters that users care about and uses PVC objects to abstract the underlying PV objects. Therefore, PVC and PV have different focuses. A PVC object focuses on users' storage needs and provides a unified way to define storage. A PV object focuses on storage details, allowing users to define specific storage classes and storage mount parameters.

Specifically, the application layer declares a storage need (PVC), and Kubernetes selects the PV object that best fits this PVC object and binds them together. PVCs are storage objects used by applications and belong to the application domain, so a PVC object resides in the same namespace as the application. PVs are storage objects that belong to the storage domain and are not tied to a namespace.

PVC and PV objects have the following attributes:

The PVC definition template is as follows:

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: disk-ssd-web-0
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 20Gi
      storageClassName: alicloud-disk-available
      volumeMode: Filesystem

The PVC-defined storage interfaces are related to the storage access mode, resource capacity, and volume mode. The main parameters are described as follows:

"accessModes" defines the mode of access to storage volumes. The options include ReadWriteOnce, ReadWriteMany, and ReadOnlyMany.

Note: The preceding access modes are only declared at the orchestration layer. Whether stored files are readable and writable is determined by specific storage plug-ins.

"storage" defines the storage capacity that the specified PVC object is expected to provide. The defined data size is only declared at the orchestration layer. The actual storage capacity is determined by the type of the underlying storage service.

"volumeMode" defines the mode of mounting storage volumes. The options include Filesystem and Block.

"Filesystem" specifies that data volumes are mounted as file systems for use by applications.

"Block" specifies that data volumes are mounted as block devices for use by applications.

The following example illustrates how to orchestrate the PV objects of data volumes in cloud disks.

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      labels:
        failure-domain.beta.kubernetes.io/region: cn-shenzhen
        failure-domain.beta.kubernetes.io/zone: cn-shenzhen-e
      name: d-wz9g2j5qbo37r2lamkg4
    spec:
      accessModes:
        - ReadWriteOnce
      capacity:
        storage: 30Gi
      flexVolume:
        driver: alicloud/disk
        fsType: ext4
        options:
          VolumeId: d-wz9g2j5qbo37r2lamkg4
      persistentVolumeReclaimPolicy: Delete
      storageClassName: alicloud-disk-available
      volumeMode: Filesystem

Only PVC-bound PV objects can be consumed by pods. The PVC-PV binding process is the process of PV consumption. Only a PV object that meets the following requirements can be bound to a PVC object:

Only a PV object that meets the preceding requirements can be bound to the PVC object.

If multiple PV objects meet requirements, the most appropriate PV object is selected for binding. Generally, the PV object with the minimum capacity is selected. If multiple PV objects have the same minimum capacity, one of them is randomly selected.

If no PV objects meet the preceding requirements, the PVC object enters the pending state until a conforming PV object appears.
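The selection rule can be sketched in Python as follows. This is an illustrative model, not the Kubernetes controller code. The `PV` and `PVC` classes and the `find_pv` function are hypothetical, and the criteria checked (same storage class name, same volume mode, PV access modes that cover the requested ones, and PV capacity at least the requested size) are assumptions of this sketch; the real controller also considers other conditions such as label selectors.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PV:
    name: str
    capacity_gi: int
    access_modes: frozenset
    storage_class: str
    volume_mode: str = "Filesystem"
    bound_to: Optional[str] = None   # name of the PVC this PV is bound to

@dataclass
class PVC:
    name: str
    request_gi: int
    access_modes: frozenset
    storage_class: str
    volume_mode: str = "Filesystem"

def find_pv(pvc: PVC, pvs: list[PV]) -> Optional[PV]:
    """Return the smallest unbound PV that satisfies the claim, or None."""
    candidates = [
        pv for pv in pvs
        if pv.bound_to is None
        and pv.storage_class == pvc.storage_class
        and pv.volume_mode == pvc.volume_mode
        and pvc.access_modes <= pv.access_modes   # requested modes are covered
        and pv.capacity_gi >= pvc.request_gi
    ]
    # The smallest sufficient volume wins. None means the claim stays pending.
    return min(candidates, key=lambda pv: pv.capacity_gi, default=None)

rwo = frozenset({"ReadWriteOnce"})
pvs = [
    PV("pv-100", 100, rwo, "alicloud-disk-available"),
    PV("pv-30", 30, rwo, "alicloud-disk-available"),
    PV("pv-10", 10, rwo, "alicloud-disk-available"),
]
claim = PVC("disk-ssd-web-0", 20, rwo, "alicloud-disk-available")
match = find_pv(claim, pvs)
print(match.name if match else "Pending")  # pv-30
```

The 10 GiB volume is too small and the 100 GiB volume is larger than necessary, so the 30 GiB volume is chosen. Where several candidates have the same minimum capacity, this sketch simply takes the first one instead of picking at random.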

As we have learned earlier, PVCs are secondary storage abstractions for application services. A PVC object provides simple storage definition interfaces. PVs are storage abstractions with complex details. PV objects are generally defined and maintained by the cluster management personnel.

Storage volumes are divided into dynamic storage volumes and static storage volumes based on the PV creation method.

Static storage volumes: A cluster administrator analyzes the storage needs of the cluster and pre-allocates storage media. The administrator also creates PV objects to be consumed by PVC objects. If PVC needs are defined in workloads, Kubernetes binds PVC and PV objects according to relevant rules. This allows applications to access storage services.

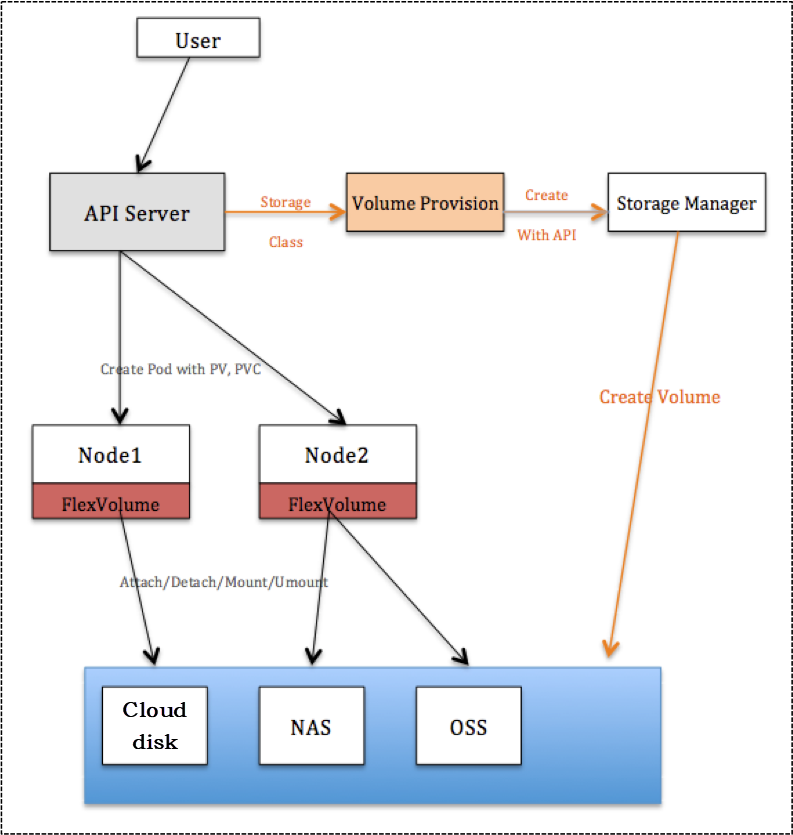

Dynamic storage volumes: A cluster administrator configures a backend storage pool and creates a storage class template. When a PVC object needs to consume a PV object, the Provisioner plug-in dynamically creates a PV object based on the PVC needs and the details of the storage class.

Dynamic and static storage volumes are compared as follows:

Dynamic storage volumes provide the following advantages:

When you declare a PVC object, you can add the StorageClassName field to this PVC object. This allows the Provisioner plug-in to create a suitable PV object based on the definition of StorageClassName when no PV object in the cluster fits the declared PVC object. This process can be viewed as the creation of a dynamic data volume by the Provisioner plug-in. The created PV object is associated with the PVC object based on StorageClassName.

A storage class can be viewed as the template used to create a PV storage volume. When a PVC object triggers the automatic PV creation process, a PV object is created by using the content of a storage class. The content includes the name of the target Provisioner plug-in, a set of parameters used for PV creation, and the reclaim mode.
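The template idea can be illustrated with a short Python sketch. It is a simplified model under stated assumptions: the `StorageClass` dataclass, the `provision_pv` function, the dictionary layout, and the `pv-for-...` naming scheme are all hypothetical and do not reproduce the API of any real Provisioner plug-in. The point is only that the PV inherits the provisioner name, parameters, and reclaim mode from the storage class, while the size and access modes come from the PVC.

```python
from dataclasses import dataclass, field

@dataclass
class StorageClass:
    name: str
    provisioner: str
    parameters: dict = field(default_factory=dict)
    reclaim_policy: str = "Delete"

def provision_pv(pvc: dict, classes: dict) -> dict:
    """Build a PV description for `pvc` from the storage class it names."""
    sc = classes[pvc["storageClassName"]]    # KeyError if the class is unknown
    return {
        "name": f"pv-for-{pvc['name']}",      # hypothetical naming scheme
        "capacity": pvc["storage"],           # taken from the claim
        "accessModes": list(pvc["accessModes"]),
        "storageClassName": sc.name,          # links the PV back to the class
        "provisioner": sc.provisioner,        # taken from the storage class
        "parameters": dict(sc.parameters),
        "reclaimPolicy": sc.reclaim_policy,
        "claimRef": pvc["name"],              # the PV is created for this claim
    }

classes = {
    "alicloud-disk-topology": StorageClass(
        name="alicloud-disk-topology",
        provisioner="diskplugin.csi.alibabacloud.com",
        parameters={"type": "cloud_ssd"},
    )
}
pvc = {
    "name": "disk-pvc",                       # hypothetical claim name
    "storage": "20Gi",
    "accessModes": ["ReadWriteOnce"],
    "storageClassName": "alicloud-disk-topology",
}
pv = provision_pv(pvc, classes)
print(pv["name"], pv["parameters"], pv["reclaimPolicy"])
# pv-for-disk-pvc {'type': 'cloud_ssd'} Delete
```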

A storage class template is defined as follows:

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: alicloud-disk-topology
    parameters:
      type: cloud_ssd
    provisioner: diskplugin.csi.alibabacloud.com
    reclaimPolicy: Delete
    allowVolumeExpansion: true
    volumeBindingMode: WaitForFirstConsumer

When you create a PVC declaration, Kubernetes finds a suitable PV object in the cluster to be bound to the created PVC object. If no suitable PV object exists, the following process is triggered:

Certain types of storage, such as Alibaba Cloud disks, impose limitations on the mount attribute. For example, data volumes can only be mounted to nodes in the same zone as these volumes. This type of storage volume produces the following problems:

The storage class template provides the volumeBindingMode field to fix the preceding problems. When this field is set to WaitForFirstConsumer, the Provisioner plug-in delays data volume creation when it receives the PVC pending state. Instead, the Provisioner plug-in creates a data volume only after the PVC object is consumed by a pod.

The detailed process is as follows:

The delayed binding feature is used to schedule application loads to ensure that sufficient resources are available for use by pods before dynamic volumes are created. This also ensures that data volumes are created in zones with available resources and improves the accuracy of storage planning.
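The difference between immediate and delayed binding can be sketched as a small decision function. This Python model is only an illustration: the `create_volume` function, its arguments, and the zone names are hypothetical, and the real logic is split between the scheduler and the Provisioner plug-in. `Immediate` and `WaitForFirstConsumer` are the two values that the volumeBindingMode field accepts.

```python
from typing import Optional

def create_volume(binding_mode: str,
                  pvc_name: str,
                  scheduled_node_zone: Optional[str] = None,
                  default_zone: str = "zone-a") -> Optional[tuple]:
    """Return (pvc_name, zone) for the created volume, or None if creation waits."""
    if binding_mode == "Immediate":
        # The volume is created right away, before any pod is scheduled,
        # so the zone is chosen without knowing where the pod will run.
        return (pvc_name, default_zone)
    if binding_mode == "WaitForFirstConsumer":
        if scheduled_node_zone is None:
            return None                         # no consuming pod yet: keep waiting
        return (pvc_name, scheduled_node_zone)  # follow the pod's node
    raise ValueError(f"unknown binding mode: {binding_mode!r}")

# Immediate: the disk may end up in a zone where the pod cannot run.
print(create_volume("Immediate", "disk-pvc"))                       # ('disk-pvc', 'zone-a')

# Delayed: nothing is created until a pod that uses the PVC is scheduled.
print(create_volume("WaitForFirstConsumer", "disk-pvc"))            # None
print(create_volume("WaitForFirstConsumer", "disk-pvc", "zone-b"))  # ('disk-pvc', 'zone-b')
```

With delayed binding, the volume is created in the zone of the node that the scheduler picked, so the zone-restricted disk can always be mounted by the pod.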

We recommend that you use the delayed binding feature when you create dynamic volumes in a multi-zone cluster. The preceding configuration process is supported by Alibaba Cloud Container Service for Kubernetes (ACK) clusters.

The following example illustrates how pods consume PVC and PV objects:

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: nas-pvc
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 50Gi
      selector:
        matchLabels:
          alicloud-pvname: nas-csi-pv
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: nas-csi-pv
      labels:
        alicloud-pvname: nas-csi-pv
    spec:
      capacity:
        storage: 50Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      flexVolume:
        driver: "alicloud/nas"
        options:
          server: "***-42ad.cn-shenzhen.extreme.nas.aliyuncs.com"
          path: "/share/nas"
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: deployment-nas
      labels:
        app: nginx
    spec:
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - name: nginx1
              image: nginx:1.8
            - name: nginx2
              image: nginx:1.7.9
              volumeMounts:
                - name: nas-pvc
                  mountPath: "/data"
          volumes:
            - name: nas-pvc
              persistentVolumeClaim:
                claimName: nas-pvc

Template explanation:

According to the PVC-PV binding logic, this PV object meets the PVC consumption requirements. Therefore, the PVC object is bound to the PV object and mounted to a pod.
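In this template, the PVC points at the PV through a label selector. The following Python sketch shows the matchLabels rule applied to the values from the template. The `selector_matches` function is a hypothetical helper written for this illustration: every key and value listed under matchLabels must be present in the labels of the PV.

```python
def selector_matches(match_labels: dict, labels: dict) -> bool:
    """True if every key/value pair in `match_labels` also appears in `labels`."""
    return all(labels.get(key) == value for key, value in match_labels.items())

# Values copied from the PVC and PV in the template.
pvc_selector = {"alicloud-pvname": "nas-csi-pv"}
pv_labels = {"alicloud-pvname": "nas-csi-pv"}

print(selector_matches(pvc_selector, pv_labels))                     # True
print(selector_matches(pvc_selector, {"alicloud-pvname": "other"}))  # False
```

The PV in the template also offers the same capacity (50Gi) and access mode (ReadWriteOnce) that the PVC requests.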

This article gives a detailed explanation of container storage, including single-node Docker data volumes and cluster-level Kubernetes data volumes. Kubernetes data volumes are designed for cluster-level storage orchestration and can be mounted to nodes. Kubernetes provides a sophisticated architecture to implement complex storage volume orchestration capabilities. The next article will explain the Kubernetes storage architecture and its implementation process.
